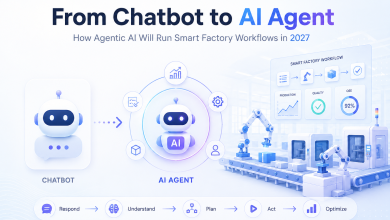

When industry experts and insiders talk about agentic AI, they are essentially talking about taking the technology to the next level. At this level, autonomous agents execute complex tasks and deliver outcomes on behalf of users, going beyond simply generating and delivering information.

Rather than just drafting an email or summarising a document, an agent could plan an entire overseas business trip, complete with flight details and travel itinerary. Agents could also help select and recruit people to fulfil workforce requirements, or streamline customer service operations.

Across the UK, organisations are under pressure to move from experimentation to extracting tangible value from their AI investments. Agentic AI represents a pathway to intelligently automating workflows and, importantly, augmenting human decision-making. Already, organisations across a range of sectors are exploring how agents can enhance customer engagement while freeing skilled professionals to focus on complex, high-value work.

Under the bonnet of AI agents

AI agents are not the result of a single generative AI model or large language model (LLM) operating in isolation. They are architecturally more complex. In some implementations, a planning LLM works in tandem with smaller, specialised models that perform discrete tasks. In others, a single capable model orchestrates the entire workflow by calling tools, invoking external APIs, querying databases, or triggering downstream services as needed to complete a task. What these architectures share is the same underlying principle: the model is no longer just answering questions, it is taking action.
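To make the single-orchestrator pattern concrete, here is a minimal sketch in Python. It is illustrative only: `stub_planner` stands in for a real LLM, and the tool names (`lookup_flights`, `book_hotel`) are hypothetical placeholders for the APIs, databases or services an agent would call in practice.

```python
from typing import Callable, Dict, List

# Hypothetical tools. A real agent would call external APIs or databases here.
def lookup_flights(destination: str) -> str:
    return f"2 flights found to {destination}"

def book_hotel(destination: str) -> str:
    return f"hotel reserved in {destination}"

TOOLS: Dict[str, Callable[[str], str]] = {
    "lookup_flights": lookup_flights,
    "book_hotel": book_hotel,
}

def stub_planner(goal: str, history: List[str]) -> dict:
    """Stand-in for an LLM: chooses the next action from the steps completed so far."""
    plan = ["lookup_flights", "book_hotel"]
    if len(history) < len(plan):
        return {"action": "call_tool", "tool": plan[len(history)], "argument": goal}
    return {"action": "finish", "summary": "; ".join(history)}

def run_agent(goal: str, max_steps: int = 5) -> str:
    """Loop: ask the planner for an action, run the chosen tool, feed the result back."""
    history: List[str] = []
    for _ in range(max_steps):
        decision = stub_planner(goal, history)
        if decision["action"] == "finish":
            return decision["summary"]
        tool = TOOLS[decision["tool"]]
        history.append(tool(decision["argument"]))
    # The step limit stops a confused planner from looping indefinitely.
    return "stopped: step limit reached"

result = run_agent("Lisbon")
```

The step limit is a deliberate design choice in this sketch: once a model can act rather than just answer, the loop around it needs a hard stop.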

Crucially, agents must connect reliably to enterprise data sources. Whether retrieving customer records, querying inventory systems, or accessing policy documents, the ability to ground agent responses in accurate, real-time data separates useful automation from unreliable guesswork. Retrieval-augmented generation (RAG) architectures and well-designed data pipelines become essential infrastructure for production-grade agents.

Every extra model call, tool invocation and retrieval step in an agentic workflow multiplies the work done per task, and that multiplication goes for cost as well. Many organisations currently budget for AI in terms of the tokens or compute required to run a particular model. With agentic workloads, token counts can rise sharply, because a single job may involve many model calls, each carrying the accumulated context of earlier steps. Moving from asking AI questions to assigning AI jobs therefore increases spending, and organisations need to budget for that.
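A rough back-of-the-envelope calculation shows how quickly this adds up. Every number in the sketch below is an assumption chosen for illustration (call counts, token counts and per-million-token prices in arbitrary currency units), not a measurement or a real price list.

```python
def estimate_cost(calls: int, input_tokens: int, output_tokens: int,
                  price_in_per_million: float, price_out_per_million: float) -> float:
    """Estimated cost of one task: model calls multiplied by the token cost per call."""
    per_call = (input_tokens * price_in_per_million
                + output_tokens * price_out_per_million) / 1_000_000
    return calls * per_call

# Illustrative assumption: a single question is one call with a short prompt.
single = estimate_cost(calls=1, input_tokens=1_000, output_tokens=500,
                       price_in_per_million=2.0, price_out_per_million=8.0)

# Illustrative assumption: an agentic job makes 12 calls, each with a longer
# prompt because tool results and earlier steps are carried along as context.
agent = estimate_cost(calls=12, input_tokens=3_000, output_tokens=500,
                      price_in_per_million=2.0, price_out_per_million=8.0)

ratio = agent / single
```

Under these assumed figures the agentic job costs roughly 20 times as much as the single question. The exact multiple will vary widely by workload, but the shape of the calculation (calls per task times tokens per call) is the useful part for budgeting.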

All that is not to say that agentic AI is only within the reach of large-scale, multinational enterprises with limitless technology budgets. Open source inference engines like vLLM provide high-performance, production-ready serving for large language models, while projects like llm-d build on this foundation to enable distributed inference across Kubernetes environments. Together, they allow organisations to bring LLM infrastructure in-house, on their terms – whether on-premises, in the cloud, or across a hybrid environment – without sacrificing performance or control.

Why hybrid cloud matters for UK organisations

Running complex, multi-step agents at scale requires platforms that address model inferencing efficiently and streamline agent development, deployment, and management. For many UK organisations, this naturally points towards hybrid cloud architectures that combine on-premises, private cloud, and public cloud resources.

In practice, agent workflows may need to run close to sensitive data that cannot readily leave a corporate data centre for regulatory or contractual reasons. At the same time, organisations often want to tap into elastic cloud capacity for bursty or experimental workloads. A hybrid approach allows them to do both, while maintaining consistency across environments.

Designing agents with a clear purpose

AI agents offer a wide range of use cases across some of the UK’s most important industries. In addition to financial services providers who are deploying agents for customer engagement, healthcare providers can use agents for patient management, identifying dispensary locations, setting up appointments and automating billing and claim submissions. In telecommunications, agents can offer hyper-personalised customer experiences, monitor network performance and reroute traffic as needed to avoid potential disruptions.

Well-designed agent systems augment human capabilities. They handle repetitive data gathering, initial triage, and routine processing, freeing skilled professionals to focus on the work that genuinely requires human judgment: complex problem-solving, empathetic customer interactions, creative thinking, and ethical decision-making.

This is where human-in-the-loop design becomes essential. Rather than granting agents full autonomy, enterprises define boundaries: which decisions can agents make independently, and which require human approval? A customer service agent might autonomously handle balance enquiries and transaction disputes under a certain threshold, but escalate credit decisions or complaints to a human specialist. This approach builds trust incrementally. Agents earn greater autonomy as they demonstrate reliability, while humans retain oversight of high-stakes decisions.
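The customer service example above can be sketched as a simple routing rule. The request types, the `DISPUTE_LIMIT_GBP` threshold and the `route` function are all hypothetical; a real deployment would take these boundaries from its own risk and compliance policies.

```python
from dataclasses import dataclass

# Hypothetical policy values, chosen only for illustration.
ALWAYS_ESCALATE = {"credit_decision", "complaint"}
DISPUTE_LIMIT_GBP = 100.0

@dataclass
class Request:
    kind: str
    amount_gbp: float = 0.0

def route(request: Request) -> str:
    """Return 'agent' if the agent may act alone, otherwise 'human'."""
    if request.kind in ALWAYS_ESCALATE:
        return "human"
    if request.kind == "balance_enquiry":
        return "agent"
    if request.kind == "transaction_dispute" and request.amount_gbp < DISPUTE_LIMIT_GBP:
        return "agent"
    # Anything unrecognised, or over the threshold, defaults to human review.
    return "human"

decisions = [
    route(Request("balance_enquiry")),
    route(Request("transaction_dispute", 40.0)),
    route(Request("transaction_dispute", 250.0)),
    route(Request("credit_decision")),
]
```

Defaulting to human review for anything the policy does not explicitly cover reflects the incremental approach: autonomy is widened by changing the policy, not by the agent improvising.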

Regulation, sovereignty, and responsible deployment

Beyond workforce considerations, regulatory and jurisdictional factors also shape how UK organisations deploy agentic AI. Digital sovereignty concerns are increasingly prominent as more organisations have to comply with data residency and processing obligations. In fact, nearly three-quarters (72%) of UK organisations are prioritising operational control and autonomy for their cloud sovereignty strategy, highlighting the importance of clear accountability when deploying AI agents.

Whatever the use case or organisation, agentic workflows may cross regional and international borders. For this reason, agents and their platforms require robust guardrails: content filtering to prevent harmful outputs, policy enforcement to ensure regulatory compliance, and comprehensive audit trails for accountability. In the UK, organisations must ensure that agents comply with the UK GDPR, the Data Protection Act 2018, and guidance from regulators such as the Information Commissioner’s Office.

Platforms must provide the tooling to define, test, and monitor guardrail policies across agent workflows, ensuring organisations can deploy with confidence. By embedding governance into the design and operation of AI agents, organisations can move faster while still managing risk. That confidence is a critical ingredient for sustainable, long-term success.

Interoperability and the rise of open standards

As agents become more capable, they must connect seamlessly to the tools and data sources they need to complete tasks. Model Context Protocol (MCP) is emerging as the open standard that makes this possible. Rather than building bespoke integrations for every database, API, or enterprise application, MCP provides a common interface that allows agents to discover and interact with external resources in a standardised way. For enterprises, this means faster agent development, greater interoperability across vendors, and reduced lock-in.
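The discover-then-interact pattern can be illustrated with a toy example. MCP messages are based on JSON-RPC 2.0, and the specification defines `tools/list` and `tools/call` methods; everything else below is an assumption made for illustration. The `get_stock_level` tool is hypothetical, the "server" is just an in-process function rather than an MCP SDK, and the response payloads are simplified and do not follow the full specification.

```python
from typing import Any, Dict

# Hypothetical tool registry standing in for an MCP server's capabilities.
TOOLS: Dict[str, Dict[str, Any]] = {
    "get_stock_level": {
        "description": "Return units in stock for a product code",
        "handler": lambda args: {"sku": args["sku"], "units": 42},
    }
}

def handle(request: dict) -> dict:
    """Toy dispatcher: answers JSON-RPC-style requests with simplified results."""
    method = request["method"]
    if method == "tools/list":
        result: Any = {"tools": [{"name": name, "description": tool["description"]}
                                 for name, tool in TOOLS.items()]}
    elif method == "tools/call":
        params = request["params"]
        result = TOOLS[params["name"]]["handler"](params["arguments"])
    else:
        # -32601 is the standard JSON-RPC 2.0 "Method not found" error code.
        return {"jsonrpc": "2.0", "id": request["id"],
                "error": {"code": -32601, "message": "Method not found"}}
    return {"jsonrpc": "2.0", "id": request["id"], "result": result}

# Step 1: the agent discovers which tools exist.
listing = handle({"jsonrpc": "2.0", "id": 1, "method": "tools/list"})

# Step 2: the agent calls one of them by name, using the same message shape.
call = handle({"jsonrpc": "2.0", "id": 2, "method": "tools/call",
               "params": {"name": "get_stock_level", "arguments": {"sku": "A-100"}}})
```

The point of the sketch is that the agent uses one message shape for every tool, so adding a new data source means registering a tool rather than writing a new integration.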

Agents built on MCP-compatible platforms can leverage a growing ecosystem of pre-built connectors rather than starting from scratch with each integration. This is particularly attractive in complex IT estates where legacy systems, modern SaaS applications and custom tools all need to cooperate. Open standards like MCP also support healthier competition and innovation in the AI ecosystem, because they make it easier to combine components from different providers. In a rapidly evolving field, that openness helps organisations avoid unnecessary constraints on their future choices.

Delivering real value with agentic AI

Agentic AI is on the verge of driving widespread, large-scale change in industries and heralding the next phase of enterprise AI adoption in the UK. But adoption must have an impact and deliver measurable value. When connecting AI agents to their workflows, organisations need a clear understanding of what agents are, what they can do, and where they can best be used.

Leaders should ask themselves: “How does this make my organisation more efficient, improve the user experience or make my employees more productive?” They should look for the places where agents can make the organisation more capable by making its people more capable. By handling the routine and surfacing the relevant, agents give employees the space to do their best, most creative work. Organisations that define meaningful use cases, invest in robust platforms and governance, and embrace open, interoperable foundations will be best placed to unlock the full potential of agentic AI.