Artificial intelligence adoption across enterprises is accelerating. Yet many organizations still struggle with a key limitation: most AI systems are built as rigid, monolithic solutions that are difficult to modify, scale, or integrate with existing workflows.

Traditional AI development often requires heavy engineering, complex infrastructure, and frequent model retraining, which slows experimentation and innovation.

Meanwhile, modern software development has shifted toward modular architectures built from reusable components. Composable AI applies this same principle to artificial intelligence by assembling smaller capabilities—such as models, data pipelines, and workflows—into flexible systems.

In this article, I explain what composable AI is, why enterprises are adopting it, and how organizations can build modular AI systems using no-code tools.

What is composable AI?

Composable AI refers to building artificial intelligence systems from modular components that can be combined, reused, and replaced independently. Instead of relying on a single monolithic AI model, organizations assemble multiple specialized components that work together to perform complex tasks. This modular approach makes AI systems easier to update, experiment with, and scale as business needs evolve.

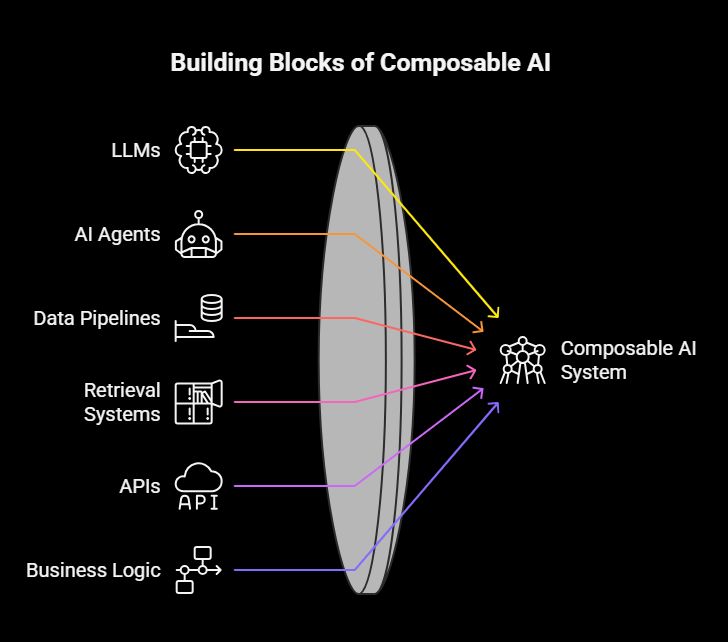

Building blocks of composable AI


A composable AI system can include several building blocks, such as:

- Large language models (LLMs)

- AI agents

- Data pipelines

- Retrieval systems (vector databases)

- APIs and integrations

- Business logic layers

Typical composable AI stack

Most composable AI systems follow a layered architecture:

- Data sources – internal databases, documents, or SaaS tools

- AI models or agents – models that generate insights, predictions, or responses

- Orchestration layer – workflows that coordinate tasks between models and services

- Application interface – dashboards, internal tools, or apps used by employees

For example, a customer support system might combine an LLM for response generation, a vector database for knowledge retrieval, a workflow engine for routing tickets, and a dashboard for agents. Each component can evolve independently without disrupting the entire system.
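The customer support example can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not a production design: the class names (`KeywordRetriever`, `TemplateGenerator`, `SupportPipeline`) and the one-document knowledge base are invented for this article, the retriever is a keyword matcher standing in for a vector database, and the generator is a fixed template standing in for an LLM call.

```python
from dataclasses import dataclass
from typing import Protocol


class Retriever(Protocol):
    def search(self, query: str) -> list[str]: ...


class Generator(Protocol):
    def reply(self, question: str, context: list[str]) -> str: ...


class KeywordRetriever:
    """Hypothetical stand-in for a vector database: matches on shared words."""

    def __init__(self, documents: list[str]) -> None:
        self.documents = documents

    def search(self, query: str) -> list[str]:
        words = set(query.lower().split())
        return [d for d in self.documents if words & set(d.lower().split())]


class TemplateGenerator:
    """Hypothetical stand-in for an LLM: fills a fixed template."""

    def reply(self, question: str, context: list[str]) -> str:
        if not context:
            return "Escalating to a human agent."
        return f"Based on our docs: {context[0]}"


@dataclass
class SupportPipeline:
    # The pipeline depends only on the two interfaces above,
    # so either component can be replaced without touching the other.
    retriever: Retriever
    generator: Generator

    def handle(self, ticket: str) -> str:
        context = self.retriever.search(ticket)
        return self.generator.reply(ticket, context)


docs = ["Reset your password from the account settings page."]
pipeline = SupportPipeline(KeywordRetriever(docs), TemplateGenerator())
answer = pipeline.handle("How do I reset my password")
```

Because `SupportPipeline` only knows about the `Retriever` and `Generator` interfaces, swapping the keyword matcher for a real vector search, or the template for a hosted model, would not require changes to `handle`.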

This approach reflects broader software trends like microservices and API-first architectures. No-code platforms like n8n simplify the assembly step by letting teams visually connect AI models, APIs, databases, and workflows into functional internal tools.

As composable architectures mature, enterprises are increasingly adopting them to accelerate AI deployment and innovation.

Why are enterprises using composable AI?

The appeal comes down to faster development, greater flexibility, and easier scaling of AI capabilities across the organization.

- Faster innovation cycles. Instead of rebuilding AI systems from scratch, teams can reuse modular components and introduce new capabilities simply by adding or replacing models, agents, or workflows.

- Reduced vendor lock-in. Organizations can switch between different AI providers, such as OpenAI, Anthropic, or open-source models, without redesigning the entire system.

- Better scalability. Each module in a composable architecture can evolve independently, allowing systems to scale without major structural changes.

- Greater experimentation. Teams can test different prompts, models, and workflows without affecting the rest of the system.

- Cross-team collaboration. No-code platforms allow product, operations, and data teams to participate in building AI workflows.

A widely cited Gartner prediction holds that organizations adopting a composable approach will outpace competitors by 80% in the speed of new feature implementation. Instead of isolated chatbots, many companies now deploy collaborative AI agents that work together across workflows. Platforms like n8n help teams compose these AI-powered tools quickly.

To fully realize these benefits, however, organizations must adopt the right implementation strategies.

Best practices for adopting composable AI

Adopting composable AI requires more than just choosing the right tools. Organizations need a thoughtful architecture and workflow strategy to ensure their systems remain flexible, scalable, and easy to maintain.

Design around modular capabilities

The foundation of composable AI lies in breaking systems into clearly defined capabilities. Instead of building a single large AI application, teams should design smaller modules responsible for specific tasks such as retrieval, reasoning, orchestration, and user interaction. This modular structure allows each component to evolve independently without disrupting the rest of the system.

Use APIs as the glue

Composable systems rely heavily on APIs, webhooks, and integrations to connect different components. APIs act as the communication layer between models, data sources, and applications, allowing teams to swap services or introduce new capabilities without rebuilding the entire architecture.
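To make the "swap services" idea concrete, here is a small sketch in which application code depends on a shared function signature rather than on a specific vendor. The provider names and function bodies are hypothetical stubs; a real adapter would call the vendor's HTTP API or SDK at that point.

```python
from typing import Callable

# Every adapter exposes the same signature: prompt in, text out.
Completion = Callable[[str], str]


def provider_a(prompt: str) -> str:
    # Hypothetical stub; a real adapter would call a vendor API here.
    return f"[provider-a] {prompt}"


def provider_b(prompt: str) -> str:
    # Hypothetical stub for a second vendor or a self-hosted model.
    return f"[provider-b] {prompt}"


PROVIDERS: dict[str, Completion] = {
    "provider-a": provider_a,
    "provider-b": provider_b,
}


def summarize(text: str, provider: str = "provider-a") -> str:
    """Application code relies on the shared signature, not on a vendor."""
    complete = PROVIDERS[provider]
    return complete(f"summarize: {text}")


first = summarize("quarterly report")
second = summarize("quarterly report", provider="provider-b")
```

Switching providers here is a one-argument change (or a configuration value), which is the property the API layer is meant to protect.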

Start with small AI workflows

Rather than attempting to build a complete AI platform immediately, organizations should begin with focused workflows. Examples include automating support ticket classification, enriching sales leads with AI insights, or creating an internal analytics assistant. These smaller initiatives help teams validate use cases and iterate quickly.

Introduce orchestration

As systems grow, orchestration becomes essential. Workflow engines or agent orchestration layers coordinate how models, APIs, and data pipelines interact, ensuring tasks execute in the correct sequence.
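As a rough illustration of what "correct sequence" means in practice, the sketch below declares which steps depend on which and lets Python's standard-library `graphlib.TopologicalSorter` derive the execution order. The step names, the shared `state` dictionary, and the sample ticket are assumptions made for this example; a workflow engine would add retries, branching, and persistence on top.

```python
from graphlib import TopologicalSorter
from typing import Callable


# Each step reads from and writes to a shared state dictionary.
def fetch_ticket(state: dict) -> None:
    state["ticket"] = "Refund request for order 1042"


def classify(state: dict) -> None:
    is_refund = "refund" in state["ticket"].lower()
    state["category"] = "billing" if is_refund else "general"


def retrieve(state: dict) -> None:
    state["context"] = f"policy for {state['category']}"


def respond(state: dict) -> None:
    state["reply"] = f"Per the {state['context']}: {state['ticket']}"


STEPS: dict[str, Callable[[dict], None]] = {
    "fetch_ticket": fetch_ticket,
    "classify": classify,
    "retrieve": retrieve,
    "respond": respond,
}

# Map each step to the set of steps that must finish before it starts.
DEPENDENCIES = {
    "fetch_ticket": set(),
    "classify": {"fetch_ticket"},
    "retrieve": {"classify"},
    "respond": {"retrieve", "fetch_ticket"},
}


def run_workflow() -> tuple[list[str], dict]:
    # static_order() yields every step after all of its dependencies.
    order = list(TopologicalSorter(DEPENDENCIES).static_order())
    state: dict = {}
    for name in order:
        STEPS[name](state)
    return order, state


order, state = run_workflow()
```

Declaring dependencies instead of hard-coding a call order means a new step can be added by editing `STEPS` and `DEPENDENCIES` only.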

Ensure observability

Composable AI systems require visibility across multiple components. Teams should track model outputs, workflow errors, and API latency to identify issues early and maintain reliability.
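One lightweight way to get this visibility is to wrap each component in a decorator that records call counts, errors, and latency. The sketch below keeps metrics in an in-memory dictionary purely for illustration; the component names and the `observed` helper are hypothetical, and a real deployment would export these numbers to a monitoring backend.

```python
import time
from collections import defaultdict
from functools import wraps

# In-memory metrics store, keyed by component name (illustrative only).
METRICS = defaultdict(lambda: {"calls": 0, "errors": 0, "total_seconds": 0.0})


def observed(component: str):
    """Record calls, errors, and elapsed time for the wrapped function."""

    def decorator(func):
        @wraps(func)
        def wrapper(*args, **kwargs):
            start = time.perf_counter()
            METRICS[component]["calls"] += 1
            try:
                return func(*args, **kwargs)
            except Exception:
                METRICS[component]["errors"] += 1
                raise  # re-raise so callers still see the failure
            finally:
                elapsed = time.perf_counter() - start
                METRICS[component]["total_seconds"] += elapsed

        return wrapper

    return decorator


@observed("retrieval")
def retrieve(query: str) -> list[str]:
    return [f"doc about {query}"]


@observed("enrichment_api")
def call_flaky_api(payload: dict) -> dict:
    # Simulated failure standing in for a slow or unavailable integration.
    raise TimeoutError("upstream API timed out")


retrieve("refunds")
try:
    call_flaky_api({"id": 1})
except TimeoutError:
    pass
```

After these two calls, `METRICS` shows one successful retrieval and one failed API call, which is enough to tell which component a problem came from.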

Enable non-developer participation

Finally, organizations should empower non-engineering teams to contribute to AI development. No-code platforms like n8n allow teams to visually compose workflows, connect databases, and build internal dashboards without complex backend development.

To implement these practices effectively, organizations also need the right technology stack to support composable AI systems.

Tools required to build composable AI

Building composable AI systems requires a combination of technologies that handle intelligence, data management, orchestration, and user interaction. Together, these components form the foundation of a flexible and modular AI stack.

1. AI models

AI models serve as the reasoning engine of composable systems. Organizations often rely on providers such as OpenAI, Anthropic, or open-source large language models. These models perform tasks like reasoning, summarization, classification, and content generation within AI workflows.

2. Data layer

A strong data layer supports knowledge retrieval and contextual understanding. Common tools include relational databases like PostgreSQL, vector databases for semantic search, and data warehouses for structured analytics. These systems store organizational knowledge and power retrieval pipelines that supply relevant information to AI models.
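The core operation behind semantic search is ranking stored vectors by their similarity to a query vector. The sketch below shows that ranking with cosine similarity. The three-dimensional vectors are hand-written placeholders, not real embeddings, and the document titles are invented; an actual vector database would store model-generated embeddings and use an approximate index rather than a full scan.

```python
import math


def cosine_similarity(a: list[float], b: list[float]) -> float:
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


# Placeholder vectors standing in for real document embeddings.
INDEX = {
    "Refund policy": [0.9, 0.1, 0.0],
    "Shipping times": [0.1, 0.9, 0.1],
    "API rate limits": [0.0, 0.2, 0.9],
}


def top_k(query_vector: list[float], k: int = 2) -> list[str]:
    """Return the k document titles most similar to the query vector."""
    ranked = sorted(
        INDEX,
        key=lambda title: cosine_similarity(query_vector, INDEX[title]),
        reverse=True,
    )
    return ranked[:k]


# A query vector that points mostly in the "refund" direction.
results = top_k([0.8, 0.2, 0.1], k=2)
```

The retrieved titles (or the text behind them) would then be passed to the model as context for its answer.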

3. Workflow orchestration

Composable AI systems require orchestration layers that coordinate multiple components. Workflow engines, agent frameworks, and event-driven systems manage how tasks move between models, data sources, and APIs. This orchestration ensures that AI pipelines operate reliably and in the correct sequence.

4. Integration layer

AI workflows rarely operate in isolation. They often connect to APIs, SaaS tools, cloud storage services, and internal systems. Integrations allow AI applications to access operational data and trigger real-world actions across business platforms.

5. No-code application builders

Finally, organizations need an interface layer where users interact with AI-powered systems. Tools like n8n enable teams to visually build internal applications, automate workflows, and integrate AI services without heavy backend development. Teams can quickly create tools such as support dashboards, operations monitoring panels, or AI-powered data assistants.

While these tools make composable AI possible, the architecture also introduces new challenges that organizations must manage carefully.

Challenges and pitfalls with composable AI

The same modularity that speeds up development also multiplies the moving parts. The most common problems fall into five areas:

- Integration complexity. As systems grow, the number of components and dependencies increases. Without a well-designed architecture, connecting models, data sources, and APIs can quickly become difficult to maintain.

- Workflow orchestration issues. Poor coordination between agents and workflows may lead to redundant actions, inconsistent outputs, and unnecessary compute costs.

- Governance and security. Organizations must carefully manage permissions, monitor model usage, and protect sensitive data across multiple integrated systems.

- Observability challenges. Debugging composable AI pipelines can be difficult because issues may originate from models, integrations, or workflow logic.

- Tool sprawl. Teams may accumulate too many models, APIs, and automation tools, creating fragmented systems.
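One way to guard against the redundant actions mentioned above is to give every action an idempotency key and skip work that has already been done. The following sketch is an assumption-laden illustration: the `ActionLog` class, the action name, and the payload fields are all hypothetical, and the log lives in memory, whereas a real system would persist it in a shared store.

```python
import hashlib
import json
from typing import Callable


class ActionLog:
    """Remembers which actions already ran so agents do not repeat them."""

    def __init__(self) -> None:
        self._results: dict[str, str] = {}
        self.executions = 0

    @staticmethod
    def key(action: str, payload: dict) -> str:
        # sort_keys makes the key independent of dictionary ordering.
        canonical = json.dumps(
            {"action": action, "payload": payload}, sort_keys=True
        )
        return hashlib.sha256(canonical.encode("utf-8")).hexdigest()

    def run_once(
        self, action: str, payload: dict, handler: Callable[[dict], str]
    ) -> str:
        k = self.key(action, payload)
        if k not in self._results:
            self.executions += 1
            self._results[k] = handler(payload)
        return self._results[k]


def send_refund_email(payload: dict) -> str:
    return f"email sent to {payload['customer']}"


log = ActionLog()
# Two agents request the same action with the same data, in different key order.
first = log.run_once(
    "send_refund_email", {"customer": "c-17", "order": 1042}, send_refund_email
)
second = log.run_once(
    "send_refund_email", {"order": 1042, "customer": "c-17"}, send_refund_email
)
```

The handler runs only once even though two agents asked for it, which avoids both the duplicate side effect and the duplicate compute cost.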

Despite these challenges, composable AI enables powerful real-world applications when implemented thoughtfully.

Use cases of composable AI

Composable AI enables organizations to combine multiple AI components to solve practical business problems. Because each module performs a specific task, teams can assemble powerful AI workflows tailored to different operational needs.

- AI-powered internal dashboards: Companies can combine LLMs with internal data sources and analytics tools to create intelligent dashboards. These systems not only display metrics but also automatically explain trends, summarize performance insights, and recommend actions for operations teams.

- Customer support automation: Composable pipelines can automate support workflows by combining a ticket classification model, a knowledge retrieval system, and a response generation model. Advanced support agents can also interact with internal systems to resolve issues more effectively.

- Sales intelligence tools: Sales teams can integrate CRM data, enrichment APIs, and AI summarization models to generate account insights, meeting briefs, and opportunity analysis automatically.

- AI-driven workflow automation: Composable AI can automate multi-step business processes such as finance approvals. A system may combine document parsing AI, compliance rules, and workflow automation to process invoices or expense reports.

- Enterprise copilots: Internal AI assistants can interact with company databases, SaaS tools, and APIs to help employees complete tasks faster. With platforms like n8n or Zapier, teams can build operational assistants, support resolution guides, or internal analytics bots.
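The finance approval use case above can be sketched as three small, replaceable stages: parsing, compliance checks, and a routing decision. Everything specific here is an assumption for illustration: the vendor list, the 1,000 auto-approval limit, and the invoice text format are invented, and the regular-expression parser stands in for a document-parsing model.

```python
import re
from dataclasses import dataclass


@dataclass
class Invoice:
    vendor: str
    amount: float


# Hypothetical compliance rules for this example.
APPROVED_VENDORS = {"Acme Supplies", "Globex"}
AUTO_APPROVE_LIMIT = 1000.0


def parse_invoice(text: str) -> Invoice:
    """Stand-in for a document-parsing model: extracts fields with regexes."""
    vendor = re.search(r"Vendor:\s*(.+)", text)
    amount = re.search(r"Total:\s*\$?([\d,]+(?:\.\d+)?)", text)
    if vendor is None or amount is None:
        raise ValueError("could not find vendor or total")
    return Invoice(
        vendor=vendor.group(1).strip(),
        amount=float(amount.group(1).replace(",", "")),
    )


def check_compliance(invoice: Invoice) -> list[str]:
    issues = []
    if invoice.vendor not in APPROVED_VENDORS:
        issues.append("unknown vendor")
    if invoice.amount > AUTO_APPROVE_LIMIT:
        issues.append("amount exceeds auto-approval limit")
    return issues


def process(text: str) -> str:
    """Parse, check, then route: approve automatically or send for review."""
    invoice = parse_invoice(text)
    issues = check_compliance(invoice)
    return "approved" if not issues else "needs review: " + ", ".join(issues)


small = process("Vendor: Acme Supplies\nTotal: $420.50")
large = process("Vendor: Initech\nTotal: $12,000")
```

Replacing `parse_invoice` with a call to a parsing model, or loading the rules from a policy service, would leave `process` unchanged.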

Next, let’s explore how composable AI is expected to evolve in the coming years.

Future of composable AI

Composable AI is still evolving, but several emerging trends are shaping how organizations will build AI systems in the coming years.

- Multi-agent systems. Enterprises are increasingly deploying specialized AI agents that collaborate within modular ecosystems. These agents handle different tasks such as retrieval, reasoning, and automation, while orchestration layers coordinate their interactions.

- AI-native enterprise software. AI capabilities will become embedded across internal applications, turning dashboards, workflows, and operational tools into intelligent systems.

- Standardized AI protocols. New standards such as the Model Context Protocol (MCP) are beginning to define how AI models, tools, and data sources interact across systems.

- Rise of no-code AI builders. Low-code and no-code platforms are enabling business teams to assemble AI systems using modular building blocks.

- Composable AI ecosystems. Enterprises will increasingly build internal AI platforms composed of models, agents, workflows, and data services.

Building the next generation of AI systems

Composable AI represents a major shift in how modern AI systems are designed and deployed. Instead of relying on monolithic models, organizations can assemble modular AI capabilities that evolve alongside changing business needs. This approach enables faster experimentation, easier scaling, and greater flexibility when adopting new models or technologies.

When combined with no-code platforms, composable AI also expands who can build and deploy AI-powered solutions. Product managers, operations teams, and analysts can participate in creating intelligent workflows without deep engineering expertise.

Platforms like n8n or Zapier demonstrate how organizations can quickly compose AI-powered dashboards, workflows, and internal tools by connecting models, APIs, and databases in a visual environment.

As enterprises continue embedding AI into everyday operations, composable architectures will likely become the foundation of scalable, adaptable, and future-ready AI systems.