Walk into any radiology reading room, and the workflow looks the same. One radiologist opens a study, reviews hundreds or thousands of images, integrates prior exams with clinical context, and produces the final report. One person, one study, one signature.

That model made sense when imaging volumes were lower and each exam asked fewer questions. It no longer fits.

Imaging volumes are growing 5-10% a year, while the workforce isn’t keeping pace. In the U.S. alone, projections put the shortage at up to 30,000 radiologists by 2030.

Better detection won’t close the gap. Treating diagnosis as infrastructure might. The closest analogy for what that infrastructure looks like is how teams build complex software.

Software handles complexity through scoped contributions, structured review, traceable changes, and a single accountable maintainer who signs off on the final output. Projects on GitHub run on this architecture every day.

In this article, I’ll explain how radiology can adopt the same logic.

A radiology study and its report aren’t one-person tasks

A radiology study is not one diagnostic problem. And a radiology report is not one diagnostic decision. The current workflow treats both as monolithic units, owned by a single person.

In practice, a CT scan of the chest, abdomen, and pelvis contains a set of distinct problems. One task is the search for pulmonary nodules. Another is liver lesion characterization. Another is the comparison of postoperative change with prior imaging. Another is the call on whether incidental findings need escalation for follow-up.

Each rewards a different kind of expertise and carries a different attention budget. The right scope of work for one radiologist is often a diagnostic task within a study, rather than the whole study.

The final report compresses dozens of small decisions into a few paragraphs. Is this lesion real or an artifact? Has it changed since the prior scan? Are the measurements consistent? Does the conclusion reflect the actual level of certainty?

Almost none of these decisions are visible to anyone outside the radiologist’s head.

As studies get larger and questions get more specialized, more of the work behind a report sits in one person’s working memory, and less of it stays reviewable, attributable, or improvable.

Software development solved this problem decades ago

Software development stopped relying on a single author per codebase decades ago. Complexity outgrew that model, so the industry added infrastructure: version control, scoped contributions, structured peer review, and clear final ownership.

Radiology faces the same complexity problem, but with a fraction of the infrastructure.

The clearest working example of that operating logic is GitHub, the platform millions of software teams use to coordinate complex work across many contributors:

- A project lives in a repository.

- Anyone can propose a change.

- Reviewers comment on specific lines.

- A maintainer merges the work into the final version.

The same architecture maps onto radiology:

- The study is the repository.

- A measurement, annotation, or partial interpretation is a commit.

- A request for subspecialty input is a pull request.

- Peer review becomes code review, tied to a specific image region instead of happening in a hallway.

- The signing radiologist is the maintainer who merges contributions into one accountable output.
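To make the mapping concrete, here is a minimal data-model sketch in Python. Every class, field, and method name (`StudyRepository`, `Commit`, `PullRequest`, `merge`) is hypothetical and chosen only to mirror the GitHub vocabulary; it is not a PACS, RIS, or DICOM interface, and it assumes regions and findings can be recorded as free text. It shows the two rules the analogy depends on: only reviewed work gets merged, and only the maintainer (the signing radiologist) can merge it.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone
from typing import List, Optional

# Hypothetical data model for the study-as-repository mapping.
# Illustrative only: these classes are not a PACS, RIS, or DICOM API.


@dataclass(frozen=True)
class Commit:
    """One scoped contribution: a measurement, annotation, or partial read."""
    author: str
    region: str          # free-text image region (assumption)
    finding: str
    timestamp: datetime


@dataclass
class PullRequest:
    """A request for subspecialty input on one diagnostic task."""
    task: str
    subspecialty: str
    commits: List[Commit] = field(default_factory=list)
    approved_by: Optional[str] = None  # peer reviewer who checked the work


@dataclass
class StudyRepository:
    """The study: open requests plus the merged, accountable record."""
    accession: str
    maintainer: str  # the signing radiologist
    pull_requests: List[PullRequest] = field(default_factory=list)
    merged: List[Commit] = field(default_factory=list)

    def open_request(self, task: str, subspecialty: str) -> PullRequest:
        pr = PullRequest(task, subspecialty)
        self.pull_requests.append(pr)
        return pr

    def merge(self, pr: PullRequest, signer: str) -> None:
        # Only the maintainer may merge, and only reviewed work is merged.
        if signer != self.maintainer:
            raise PermissionError(f"{signer} is not the maintainer of {self.accession}")
        if pr.approved_by is None:
            raise ValueError(f"task '{pr.task}' has not been reviewed")
        self.merged.extend(pr.commits)
        # Drop this exact request object (identity, not value equality).
        self.pull_requests = [p for p in self.pull_requests if p is not pr]


if __name__ == "__main__":
    repo = StudyRepository("CT-0001", maintainer="dr_lee")
    pr = repo.open_request("liver lesion characterization", "abdominal")
    pr.commits.append(Commit("dr_patel", "liver segment VII",
                             "1.2 cm hypodense lesion", datetime.now(timezone.utc)))
    pr.approved_by = "dr_chen"
    repo.merge(pr, signer="dr_lee")
    print(len(repo.merged), len(repo.pull_requests))  # prints "1 0"
```

The design choice worth noting is that accountability is enforced at merge time rather than at contribution time: anyone scoped to a task can add commits, but nothing reaches the final record without both a reviewer and the maintainer.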

Note: Treating radiology as code does not mean opening medical data to the public or turning diagnoses into open source. It means borrowing the operating logic that made large-scale technical work scalable: modular tasks, traceable changes, structured review, and accountable maintainers.

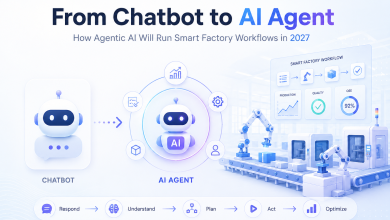

AI belongs in the coordination layer, not the diagnosis

Most of the AI investment in radiology is going into models that try to read the scan: detection, classification, measurement. These models speed up one part of the radiologist’s work. But given growing demand and workforce shortages, that is a partial fix rather than a solution.

The more challenging and beneficial use case for AI is coordination. Routing the right task to the right expertise. Flagging when a piece is missing. Standardizing how contributions get assembled into a final report. Keeping the workflow coherent across multiple contributors.
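A minimal sketch of two coordination jobs, routing and flagging missing pieces, assuming each study type has a known checklist of diagnostic tasks and each reviewer is tagged with subspecialties. To keep it readable, a simple rule-based router (least-loaded matching reviewer) stands in for whatever AI model would do this in practice; the checklist, subspecialty labels, and the `route` and `missing_pieces` functions are all hypothetical. Note that nothing here reads an image.

```python
from collections import defaultdict

# Hypothetical coordination sketch. A rule-based router stands in for an AI
# model; it assigns work and flags gaps but makes no diagnostic judgment.

REQUIRED_TASKS = {  # assumed task checklist per study type
    "CT chest/abdomen/pelvis": [
        ("pulmonary nodule search", "chest"),
        ("liver lesion characterization", "abdominal"),
        ("comparison with prior imaging", "body"),
        ("incidental finding triage", "body"),
    ],
}


def route(study_type, reviewers):
    """Assign each required task to the least-loaded reviewer with that subspecialty.

    reviewers maps reviewer name -> set of subspecialties.
    Returns (assignments, unroutable); unroutable lists tasks with no qualified reviewer.
    """
    load = defaultdict(int)
    assignments, unroutable = {}, []
    for task, specialty in REQUIRED_TASKS[study_type]:
        candidates = [r for r, skills in reviewers.items() if specialty in skills]
        if not candidates:
            unroutable.append(task)  # surface the gap instead of guessing
            continue
        # Break load ties by name so routing is deterministic.
        chosen = min(candidates, key=lambda r: (load[r], r))
        assignments[task] = chosen
        load[chosen] += 1
    return assignments, unroutable


def missing_pieces(study_type, completed):
    """Flag required tasks that have no contribution yet."""
    return [task for task, _ in REQUIRED_TASKS[study_type] if task not in completed]
```

For example, with `{"dr_a": {"chest", "body"}, "dr_b": {"abdominal", "body"}}` as reviewers, the two "body" tasks are split between the two radiologists because the router balances load. A learned model could replace the selection rule, but the output stays the same kind of thing: an assignment and a list of gaps, not a diagnosis.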

That is a role AI can realistically play in medicine without taking on clinical responsibility.

The radiologist’s role becomes more specialized

If AI handles coordination and the workflow distributes contributions across many specialists, the radiologist’s role shifts with it.

The expertise stops getting spent on blanket ownership of entire studies. Instead, it concentrates where it counts.

Some radiologists become specialist reviewers for narrow problem types like cardiac imaging, musculoskeletal, neuroradiology, or chest. Some become final integrators who assemble the report from validated inputs and hold accountability for the signed output. Some focus on hard edge cases that fall outside routine pathways and require experienced clinical judgment.

The redesign organizes the role around expertise rather than coverage. And it raises a different question: how does this kind of distributed contribution stay coherent across many hands?

Multiple contributors don’t create chaos when the workflow is structured

The standard worry about distributed contribution is inconsistency. Structured workflows address that concern.

Radiologists already seek peer input today. They walk into the next room. They send screenshots. They ask the subspecialist down the hall. Collaboration is not rare in radiology. It is informal, unstructured, and largely invisible to the system.

GitHub solves a similar problem with version control, scoped permissions, review rules, and accountable maintainers.

The same architecture works for diagnosis:

- Contributions tie to specific regions or questions.

- They are time-stamped and attributable.

- Conflicts surface instead of staying buried.

- One radiologist still holds the final sign-off.
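The four properties in the list above can be sketched in a few lines of Python. This is a hypothetical illustration, not a real reporting system: `Contribution`, `find_conflicts`, and `sign_off` are invented names, and findings are compared as exact strings, which is far cruder than how disagreement would be judged clinically. It shows contributions keyed to a region, time-stamped and attributed, disagreements surfaced per region, and a sign-off that refuses to proceed until the signing radiologist explicitly resolves each conflict.

```python
from collections import defaultdict
from dataclasses import dataclass
from datetime import datetime


@dataclass(frozen=True)
class Contribution:
    """A time-stamped, attributable finding tied to one image region."""
    author: str
    region: str
    finding: str
    timestamp: datetime


def find_conflicts(contributions):
    """Return {region: sorted findings} for regions where contributors disagree."""
    by_region = defaultdict(set)
    for c in contributions:
        by_region[c.region].add(c.finding)  # naive exact-string comparison
    return {region: sorted(f) for region, f in by_region.items() if len(f) > 1}


def sign_off(contributions, signer, resolutions=None):
    """Assemble a draft report; fail if any conflict lacks the signer's resolution."""
    resolutions = resolutions or {}
    unresolved = [r for r in find_conflicts(contributions) if r not in resolutions]
    if unresolved:
        raise ValueError(f"unresolved conflicts in: {', '.join(sorted(unresolved))}")
    lines = []
    for region in sorted({c.region for c in contributions}):
        if region in resolutions:
            finding = resolutions[region]  # the signer's explicit call
        else:
            finding = next(c.finding for c in contributions if c.region == region)
        authors = sorted({c.author for c in contributions if c.region == region})
        lines.append(f"{region}: {finding} (contributors: {', '.join(authors)})")
    lines.append(f"Signed: {signer}")
    return "\n".join(lines)
```

The point of the gate is that disagreement never disappears silently: it either blocks the report or appears as a recorded decision by the one radiologist who signs.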

The system gains visibility into how each report was produced. The signing radiologist still owns it.

Bottom line: diagnostic infrastructure scales where staffing cannot

Radiology has been organized as a staffing model. Hire enough people, assign enough studies, and hope capacity roughly matches demand. The current workforce shortage shows the limit of that logic.

In 2024, UK trusts spent £325 million on temporary radiology workforce solutions, with £216 million of that, roughly two-thirds, going to outsourced reporting. Scaling by staffing is already expensive.

As I see it, the next step is to treat diagnosis as infrastructure. Expertise becomes accessible on demand. Cases are decomposed, routed, reviewed, versioned, and assembled into a final report. The system no longer depends on one person carrying the full cognitive load of an entire study from start to finish.