Inside many companies, decisions that once required constant human coordination across infrastructure, security, and operations are increasingly being delegated to software. Not static automation or scripts, but AI-powered agents that can interpret goals, decide on actions, and execute multi-step tasks across live systems.

This shift is still early, but no longer theoretical. What began as experimentation is moving into production. A late-2025 industry survey found that 62 percent of companies are at least experimenting with AI agents, though only 23 percent have scaled them in any business function. That gap reflects less a lack of tools than discomfort with delegating real operational decisions to autonomous systems.

Early deployments already point to a change in how systems are run. Agentic AI is handling provisioning, monitoring, and routine remediation in live environments, absorbing work that previously required human orchestration. Engineers remain cautious, though many recall that now-foundational tools like Terraform and Kubernetes were also met with skepticism at first. As governance matures and failure modes become clearer, agentic AI is beginning to look less like a novelty and more like a new operating layer.

From Automation to Autonomy

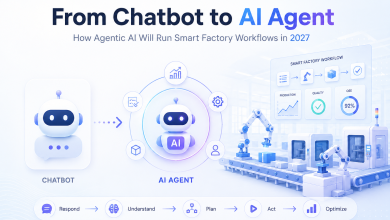

Agentic AI refers to systems endowed with agency: the ability to act independently toward a goal with limited human guidance. Unlike traditional automation, which follows predefined rules, agentic systems interpret objectives, plan sequences of actions, and adjust in real time. They do not merely recommend decisions. They execute them.

A conventional AI tool might suggest when to apply a system update. An agentic system can schedule the window, perform the update, validate the outcome, and notify the team. What distinguishes these systems is their closed-loop capability to observe, plan, and act across real infrastructure by calling APIs, running scripts, and enforcing policies.
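To make that observe, plan, act loop concrete, here is a minimal Python sketch built around the system-update example. Everything in it is hypothetical and simulated: the `Host` and `UpdateAgent` names, the fixed maintenance window, and the in-memory "update" stand in for the real inventory, scheduling, and deployment APIs an actual agent would call.

```python
from dataclasses import dataclass, field


@dataclass
class Host:
    name: str
    version: str
    healthy: bool = True


@dataclass
class UpdateAgent:
    """Hypothetical agent that drives one host to a target version (simulated)."""
    target_version: str
    notifications: list = field(default_factory=list)

    def observe(self, host: Host) -> dict:
        # A real agent would query inventory or monitoring APIs here.
        return {"version": host.version, "healthy": host.healthy}

    def plan(self, state: dict) -> list:
        # Nothing to do if the goal is already met.
        if state["version"] == self.target_version:
            return []
        return ["schedule_window", "apply_update", "validate", "notify"]

    def act(self, host: Host, steps: list) -> bool:
        window = None
        for step in steps:
            if step == "schedule_window":
                window = "02:00-03:00 UTC"  # placeholder maintenance window
            elif step == "apply_update":
                host.version = self.target_version  # simulated change
            elif step == "validate":
                if not self.observe(host)["healthy"]:
                    self.notifications.append(f"{host.name}: validation failed")
                    return False
            elif step == "notify":
                self.notifications.append(
                    f"{host.name}: updated to {host.version} during {window}"
                )
        return True

    def run(self, host: Host) -> bool:
        # Observe -> plan -> act, then observe again to close the loop.
        if not self.act(host, self.plan(self.observe(host))):
            return False
        return self.observe(host)["version"] == self.target_version


host = Host("web-1", "1.4.2")
agent = UpdateAgent(target_version="1.5.0")
print(agent.run(host))  # True
print(agent.notifications)  # ['web-1: updated to 1.5.0 during 02:00-03:00 UTC']
```

Running the agent a second time is a no-op: `plan` sees the goal already satisfied and returns no steps, which is the property that lets a closed loop run repeatedly without piling up changes.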

Within companies, this work typically sits with infrastructure or internal systems teams: the groups responsible for the platforms others depend on. In practice, agentic AI is increasingly assembling entire environments, configuring access controls, applying standards, and enforcing compliance. Humans define intent. Software determines execution.

This transition matters because it changes where operational decisions are made. Authority that once lived entirely with people is increasingly shared with systems.

Modern infrastructure has grown more complex even as expectations for speed and reliability have increased. Cloud-native stacks expand quickly, dependencies multiply, and operational surface area grows faster than teams. In that environment, the scarcest resource is often sustained human attention.

Agentic AI promises leverage. Many operational workflows still depend on humans to connect automated steps, chase approvals, and validate outcomes. Agentic systems aim to collapse those handoffs into goal-driven flows, allowing teams to scale output without scaling coordination overhead.

Speed and the experience of internal users also matter. Many organizations now treat internal systems as products, where responsiveness is a key metric. When teams can request operational changes in natural language and receive results quickly, backlogs shrink. Agentic AI can also capture institutional knowledge that would otherwise live in senior engineers’ heads or in scattered documentation.

Where Agentic AI Works Well

Agentic systems perform best in workflows with clear success criteria, repeatable patterns, and observable outcomes. Infrastructure provisioning is a natural fit, as objectives are explicit and results can be validated. Drift detection and remediation also benefit, since they involve continuous comparison between desired and actual state followed by corrective action.
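The desired-versus-actual comparison behind drift remediation can be sketched in a few lines of Python. This is an illustration under simple assumptions, not any particular tool's behavior: state is a flat dictionary, the setting names are hypothetical, only keys present in the desired state are treated as managed, and "remediation" just overwrites the in-memory value.

```python
def detect_drift(desired: dict, actual: dict) -> dict:
    """Return managed keys whose actual value differs from the desired value."""
    return {
        key: {"desired": value, "actual": actual.get(key)}
        for key, value in desired.items()
        if actual.get(key) != value  # a missing key also counts as drift
    }


def remediate(desired: dict, actual: dict) -> list:
    """Apply corrective changes for every drifted key and return a change log."""
    actions = []
    for key, diff in detect_drift(desired, actual).items():
        actions.append(f"set {key}: {diff['actual']!r} -> {diff['desired']!r}")
        actual[key] = diff["desired"]  # simulated corrective action
    return actions


# Hypothetical settings for one environment.
desired = {"instance_size": "large", "encryption": True, "replicas": 3}
actual = {"instance_size": "large", "encryption": False, "replicas": 2, "owner": "team-a"}

print(remediate(desired, actual))  # ['set encryption: False -> True', 'set replicas: 2 -> 3']
print(detect_drift(desired, actual))  # {}
```

Unmanaged keys such as `owner` are left alone. Whether an agent should touch settings it was never told about is exactly the kind of policy decision that belongs with humans.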

Routine troubleshooting works well when incidents map to known patterns. Even when agents cannot fully resolve an issue, they can gather diagnostics and produce structured handoffs. Documentation and internal knowledge support are quieter but meaningful wins, reducing onboarding time and operational friction.
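One way to picture "known pattern or structured handoff" is the small Python sketch below. The runbook entries, the `Handoff` fields, and the default owner are all hypothetical, and exact string matching is a deliberate simplification; a real agent would classify incidents far more loosely.

```python
from dataclasses import dataclass

# Hypothetical runbook: known symptom -> remediation the agent is allowed to take.
KNOWN_PATTERNS = {
    "disk usage above 90%": "rotate and compress logs",
    "certificate expires in <7 days": "renew certificate",
}


@dataclass
class Handoff:
    """Structured summary passed to a human when no known pattern matches."""
    incident: str
    diagnostics: dict
    suggested_owner: str


def triage(incident: str, diagnostics: dict) -> tuple:
    remediation = KNOWN_PATTERNS.get(incident)
    if remediation is not None:
        return ("remediate", remediation)
    # Unknown pattern: do not guess, package what was gathered for a person.
    return ("handoff", Handoff(incident, diagnostics, suggested_owner="on-call SRE"))


print(triage("disk usage above 90%", {"mount": "/var"}))
# ('remediate', 'rotate and compress logs')
```

The point of the handoff object is that even a failed resolution leaves the on-call engineer with the incident, the evidence, and a suggested owner, rather than a blank page.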

Across these use cases, the value lies not in replacing human judgment but in absorbing predictable workload.

The hardest failures do not usually come from execution. They come from context and judgment. Organizations operate on implicit norms: undocumented exceptions, historical tradeoffs, and unwritten rules that are difficult to encode.

Without that context, agents may take actions that are locally sensible but globally wrong. Auditability compounds the challenge. When agents make changes, teams need to know what happened, why it happened, and under whose authority. Weak traceability turns technical errors into governance failures.
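Traceability is easier to enforce when every action must carry the three answers teams need. The Python sketch below is one assumed shape for such a record, not a standard: the field names, the JSON Lines output, and the `policy:autoscale-v2` principal are hypothetical.

```python
import json
from dataclasses import asdict, dataclass
from datetime import datetime, timezone


@dataclass(frozen=True)
class AuditRecord:
    action: str         # what happened
    reason: str         # why the agent chose it
    authorized_by: str  # human or policy that granted the authority
    agent_id: str
    timestamp: str


class AuditLog:
    def __init__(self) -> None:
        self._records: list = []

    def record(self, action: str, reason: str, authorized_by: str, agent_id: str) -> AuditRecord:
        # Reject entries with no authorizing principal so unattributed changes surface early.
        if not authorized_by:
            raise ValueError("action has no authorizing principal")
        entry = AuditRecord(action, reason, authorized_by, agent_id,
                            datetime.now(timezone.utc).isoformat())
        self._records.append(entry)
        return entry

    def to_jsonl(self) -> str:
        # One JSON object per line, suitable for shipping to a log store.
        return "\n".join(json.dumps(asdict(r)) for r in self._records)


log = AuditLog()
log.record("scale web tier from 3 to 5 replicas", "p95 latency above target",
           "policy:autoscale-v2", "agent-7")
print(log.to_jsonl())
```

Making authority a required field, rather than an optional annotation, is the design choice that keeps a technical error from turning into an unanswerable governance question.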

Because agentic systems rely on probabilistic models, failure may show up as confident incorrectness rather than as a clean error. And in genuinely novel situations, humans still outperform AI at reasoning under uncertainty and weighing tradeoffs.

Managing the Risk of Delegation

Once agents can act, mistakes propagate quickly. Incorrect changes may repeat at machine speed. Cost risk rises if reliability goals are pursued without cost constraints. Security risk increases when agents hold broad permissions.

There is also human risk. If agents handle routine work, junior engineers may lose chances to build intuition, while senior engineers drift away from operational reality. The role of infrastructure teams can shift from builders to supervisors, productively or not.

Successful adoption tends to follow a staged maturity curve: grounding agents in internal standards, moving through advisory and then supervised-execution stages, and expanding autonomy only within bounded domains. Observability, logging, and rollback are not optional features but core requirements.
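A staged maturity curve can be expressed as an explicit gate in front of every change. The Python sketch below assumes three hypothetical autonomy levels and a caller-supplied `apply`, `verify`, and `rollback`; it illustrates the idea of bounded autonomy with automatic rollback, not any specific product's policy model.

```python
from dataclasses import dataclass, field
from enum import Enum
from typing import Callable


class Autonomy(Enum):
    ADVISORY = 1    # agent proposes; humans execute
    SUPERVISED = 2  # agent executes only after explicit human approval
    AUTONOMOUS = 3  # agent executes alone, but only in bounded domains


@dataclass
class Policy:
    level: Autonomy
    bounded_domains: set = field(default_factory=set)


def execute(change: dict, policy: Policy, approved: bool,
            apply: Callable[[dict], None],
            verify: Callable[[dict], bool],
            rollback: Callable[[dict], None]) -> str:
    """Gate a change by autonomy level; roll back automatically if verification fails."""
    if policy.level is Autonomy.ADVISORY:
        return "proposed"
    if policy.level is Autonomy.SUPERVISED and not approved:
        return "awaiting approval"
    if policy.level is Autonomy.AUTONOMOUS and change["domain"] not in policy.bounded_domains:
        return "out of bounds"
    apply(change)
    if not verify(change):
        rollback(change)
        return "rolled back"
    return "applied"
```

Widening autonomy then becomes a reviewable change to `Policy`, such as adding a domain to `bounded_domains`, rather than an implicit drift in what the agent is trusted to do.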

The most realistic future for agentic AI is collaborative. Agents absorb repetitive work and execute constrained workflows. Humans set objectives, define constraints, and handle ambiguity. Responsibility does not disappear. It shifts.

Agentic AI is moving from experimentation to operational use, cautiously and unevenly. When implemented with strong governance and oversight, it can become a durable part of how companies run systems. The future is not AI replacing engineers. It is engineers shaping systems that extend their capacity without sacrificing control, accountability, or safety.

Lior Koriat is the chief executive of Quali, a technology company specializing in AI-driven infrastructure orchestration and governance. He has more than two decades of experience leading and scaling technology ventures in the enterprise software industry.