Headlines about failing AI projects have become almost routine. Another AI rollout stumbles. Another company’s chatbot goes off-script. Another story surfaces about how an institution’s rush to deploy artificial intelligence eroded the very trust it was supposed to strengthen. And yet, for every organization learning these lessons the hard way, there are others quietly and methodically getting it right.

Higher education is one of them.

Speed Is Not a Strategy, It’s a Reputational Risk

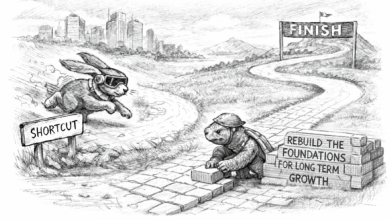

When OpenAI, Google, and a wave of well-funded startups began racing to put generative AI into the hands of as many users as possible, the implicit bet was that speed would produce dominance. Ship first, fix later. The market would sort it out.

Industries worldwide are now seeing the consequences of that bet. Regulatory scrutiny is intensifying. Users are pushing back on hallucinations, bias, and privacy violations. Institutions are facing severe fines for not doing what should have been done in the first place. Enterprises are seeking more than a chatbot demo. They want governance, accountability, and guardrails built in. They want proof that the technology saves time rather than adding to the burden.

Colleges and universities have long understood something Silicon Valley is still learning: reputation is infrastructure. You don’t rebuild it quickly. You don’t recover from a catastrophic loss of trust in a news cycle. And when the people you serve are students, faculty, and researchers—people whose academic futures and intellectual work hang in the balance—cutting corners is not a competitive advantage. It’s an existential risk.

Taking A Hybrid Approach: Balancing Innovation with Accountability

A hybrid approach to enterprise AI doesn’t mean teams need to take on more complex work. It’s a philosophy that treats technology deployment as a change-management problem first and a technical problem second.

Most teams rush to launch the latest chatbot, iterate on a recommendation algorithm, or fine-tune a system, and by the time it reaches production, it has no user base.

I have heard this question more than a hundred times now: “Did we do everything right technically?” Teams that fail at this stage often didn’t start with the problem or the use case at hand. They started with the fact that the technology can create cat images quickly, and with all the other possibilities around it. But does it really help simplify business operations? Does it really help build momentum? Would the results hold up if a domain expert reviewed them?

If teams begin with user needs and operational bottlenecks, then work backwards to how technology can supplement those workflows, the result will not only support the business in the right way but also drastically reduce reputational risk and operational redundancies.

Pairing the power of large language models with institutional expertise, human oversight, and an ethical framework has proven to drive rapid adoption and seamless expansion. And in higher education, this approach isn’t aspirational. It’s operational, and often foundational. The largest enterprise implementations in the sector have been built on exactly these principles.

Responsible AI Is A Process, Not a Product

Across some of the largest enterprise AI deployments in higher education that my team has led, a pattern has emerged among institutions ranging from the largest community colleges serving thousands of students to national workforce organizations serving professionals worldwide.

When AI is introduced through a structured, values-aligned framework rather than dropped into an existing workflow, satisfaction rates reach 98% and adoption expands organically. Not among technology enthusiasts, but among typical skeptics, administrative staff, counselors, and many more: the people who have the most to lose from a bad implementation and who have often suffered the consequences of lengthy, complex implementation cycles.

Organic expansion is the rarest signal in enterprise technology. It doesn’t come from feature lists or marketing. It comes from trust earned in the first 90 days, by building a plan and a strong foundation through proper training, governance structures, and feedback cycles.

Higher Education as a Model, Not an Outlier

It’s tempting to view higher education as a laggard in AI adoption—too bureaucratic, too cautious, too slow to move. That framing misunderstands what deliberate actually looks like, and it confuses motion with progress.

Colleges and universities have spent centuries developing frameworks for evaluating evidence, questioning assumptions, and protecting the integrity of inquiry. Those are not bureaucratic impulses. They are the operating system of institutions that exist to generate and transmit knowledge responsibly. When those same institutions apply that rigor to AI adoption, the result isn’t slowness. It’s durability.

The enterprise AI market is beginning to understand this. The organizations that will define AI’s second decade won’t be the ones that deployed fastest. They’ll be the ones whose implementations are still running five years from now—still trusted, still expanding, still serving the people they were built for.

The Reputational Stakes Have Never Been Higher

The stories emerging from Big Tech’s AI missteps are instructive—not because they are surprising, but because they were predictable. When you optimize for speed above accountability, when you treat ethics as a PR problem rather than a design constraint, when you ship to market before you understand the consequences—you are borrowing against trust you may not be able to repay later.

Higher education institutions don’t have that option. They serve communities that depend on them across generations. Their reputations are not quarterly metrics. They are the result of decades of relationship-building, and they are not recoverable in a single news cycle.

That constraint, which might look like a limitation, is actually a competitive advantage—for the institutions deploying AI responsibly, and for the partners building with them. The hybrid model isn’t the cautious path. It’s the durable one.

The race to deploy AI is real. But the institutions that will win it aren’t the ones moving fastest. They’re the ones building something that will stand the test of time.