The real race is not about models

Spend any time in technology investment circles right now and the conversation is almost entirely about models. Which foundation model is winning. Which application layer will capture the most value. Whether the latest release changes the competitive picture. It is a fascinating debate, and mostly a distraction.

I have spent years investing in large-scale compute infrastructure. That work taught me that digital industries are industrial ones. Power, land, hardware and the operational knowledge to run it all at scale are what determine who can actually deliver on AI’s promise. Global spending on AI infrastructure is projected to exceed $690 billion by 2026, and this may be an underestimate.

Power is the constraint nobody is talking about

Every AI query, every generated output, every model training run consumes electricity. Training a frontier model takes millions of GPU hours. The energy cost is real, it is large, and it grows with every new user and every new capability.

Most enterprise technology teams have never had to think about power supply as a strategic problem. Securing reliable capacity at the scale AI demands means long-term contracts with grid operators and sometimes direct investment in generation. The organisations that sort this out early will have an advantage that is genuinely hard to close later. Those that leave it to chance will find it becomes their ceiling.

The Middle East is an example to the world

From Dubai, the AI infrastructure picture looks different from the way it looks from London or San Francisco. The UAE and the wider Gulf have been investing in energy capacity, land and regulatory frameworks for years.

That investment was not specifically about AI, but it means the region is unusually well positioned now that AI infrastructure demand has arrived.

The UAE is becoming a genuine tier-one technology hub, and the current buildout is proving that. It offers available land, serious grid capacity and a government willing to move quickly and work directly with the private sector. Those conditions take a long time to create, and most Western markets are now struggling to replicate them fast enough.

Regulation is an advantage, not a burden

Many in the technology industry treat regulation as an obstacle, but in infrastructure it can be an advantage. Investors committing capital to infrastructure need certainty about planning rules, data requirements and power agreements before they will sign contracts that tie up money for a decade. Ambiguity pushes that capital elsewhere.

AI workloads are already affecting grid planning in several markets. Governments will regulate this with or without industry input. The companies that engage now will help shape frameworks that work. Those that do not will find themselves operating under rules written without them.

Infrastructure investing is not like software investing

A lot of capital is flowing into AI right now from investors whose instincts were shaped by software. In software, you move fast and iterate quickly, and the main assets are people and code. Infrastructure is different in almost every respect. A major data centre takes three to five years from planning to operation.

The capital goes in long before any return is visible. Getting the site, the power and the operational model right at the start matters enormously, because fixing mistakes later is expensive.

Smart investment firms make serious errors by applying software-cycle thinking to infrastructure bets. They underestimate build times, underestimate the operational complexity of running large facilities, and overestimate how quickly the market will reward early movers. The money that will do well in AI infrastructure is patient money, backed by genuine operational knowledge.

What most enterprise leaders are missing

The most common mistake enterprise leaders make is treating AI infrastructure as a standard procurement decision. They choose a cloud provider and assume the capacity will be there when needed. Demand for AI compute is running ahead of supply, and that assumption is becoming less safe.

The companies with credible AI strategies have mapped their infrastructure dependencies honestly. They know where their compute comes from, they understand their energy cost exposure, and they have thought about what happens if supply gets constrained. That level of thinking is still uncommon, and it is a real competitive advantage for those doing it.

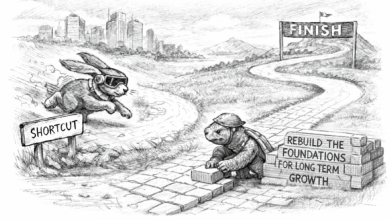

The foundations are being laid now

The foundations do more to make these systems work than the stories built around them. The loudest story in AI is about models and applications. The more important one is about power, land and operational capability.

Countries and companies investing seriously in physical infrastructure today are building positions that will be difficult to challenge later. You cannot create gigawatt-scale power capacity quickly. You cannot develop the operational knowledge to run large compute facilities efficiently without years of experience. That is exactly what makes these positions worth building.