A modern hazardous production facility is a web of interdependent technological nodes. One bad temperature sensor can cascade into a full shutdown of the production loop in seconds. Static risk matrices and periodic safety audits were built for a simpler era. They record what already happened. They miss real-time changes. They ignore hidden operational factors. And the bill keeps growing: Liberty Mutual’s 2025 data puts the direct cost of severe workplace injuries and incidents in the US above $50.8 billion a year, out of $58.78 billion in total industry losses. Companies pay for disaster recovery year after year, even though the technology to anticipate and block failures before they escalate already exists.

Scaling predictive safety takes more than a new algorithm, though. McKinsey’s 2025 report lays out the maturity gap: 92% of organizations plan to increase AI investments, but only 1% of executives consider their AI deployment mature enough to drive scalable business outcomes. Most enterprises are still testing isolated models in sandboxes, leaving critical decisions exposed to human error and protocols that haven’t been updated in years.

From Periodic Audits to Streaming Architectures

In my engineering work on predictive safety systems for hazardous industrial facilities, I kept running into the same root problem: architecture. Legacy platforms collect sensor readings in batches, push them through threshold-based rules, and spit out reports hours or days later. By then, the window for prevention has closed. You are reading about yesterday’s near-miss, not preventing tomorrow’s.

A layered streaming architecture solves this. Sensor telemetry moves through five stages: data acquisition from IIoT devices (vibration, pressure, temperature, radiation), preprocessing for noise filtering and normalization, an AI/ML engine running anomaly detection and remaining-useful-life prediction, an automation layer that routes alerts and triggers response protocols, and a monitoring dashboard for engineering teams. None of these layers wait for a batch cycle. Edge computing at the acquisition stage cuts response latency to milliseconds and keeps safety algorithms going even when cloud connectivity drops.
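To make the no-batch-cycle idea concrete, here is a minimal sketch of the middle stages chained as Python generators, so each reading flows through the pipeline the moment it arrives. Everything in it is an assumption for illustration: the function names, exponential smoothing as the noise filter, and a rolling z-score standing in for the AI/ML engine. A real deployment would use trained models and a message broker rather than in-process generators.

```python
from collections import deque
from statistics import mean, pstdev
from typing import Iterable, Iterator, Tuple


def preprocess(readings: Iterable[float], alpha: float = 0.5) -> Iterator[float]:
    """Noise filtering stand-in: exponential smoothing, one value at a time."""
    smoothed = None
    for value in readings:
        smoothed = value if smoothed is None else alpha * value + (1 - alpha) * smoothed
        yield smoothed


def detect(values: Iterable[float], window: int = 5,
           z_limit: float = 3.0) -> Iterator[Tuple[float, bool]]:
    """Anomaly detection stand-in: rolling z-score against the last `window` values."""
    history = deque(maxlen=window)
    for value in values:
        is_anomaly = False
        if len(history) == window:
            mu, sigma = mean(history), pstdev(history)
            is_anomaly = sigma > 0 and abs(value - mu) / sigma > z_limit
        history.append(value)
        yield value, is_anomaly


def automate(events: Iterable[Tuple[float, bool]]) -> Iterator[str]:
    """Automation layer stand-in: turn flagged readings into alert messages."""
    for index, (value, is_anomaly) in enumerate(events):
        if is_anomaly:
            yield f"ALERT reading #{index}: {value:.1f}"


# Hypothetical temperature stream with one spike at position 6.
readings = [70.0, 70.2, 69.9, 70.1, 70.0, 70.1, 95.0, 70.0]
alerts = list(automate(detect(preprocess(readings))))
```

Because every stage is lazy, the alert for the spike is produced as soon as that reading is consumed, not after the whole list has been processed.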

The AI engine sitting at the center of this pipeline goes beyond checking individual readings against fixed thresholds. It builds an integral risk score from three independent probability streams: technical failure likelihood, drawn from equipment sensor patterns; human error probability, estimated from operator behavior and shift duration; and environmental risk, covering weather, grid stability, and cybersecurity status. These streams combine into a weighted risk index that recalculates with each new sensor reading. Once the index crosses a predefined boundary, the automation layer fires an alert through a webhook-based workflow, pings the duty engineer on a messaging platform, and logs the event for regulatory compliance. No one needs to interpret a chart at this stage.
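The weighted index can be sketched in a few lines. The weights, the alert boundary, and the names `RiskInputs`, `risk_index`, and `should_alert` below are hypothetical placeholders, not values from any deployed system; in practice they would come from calibration against incident history. The webhook dispatch is left out so the sketch stays self-contained.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class RiskInputs:
    technical: float      # probability of equipment failure, 0..1
    human: float          # probability of operator error, 0..1
    environmental: float  # weather, grid, and cybersecurity risk, 0..1


# Assumed weights and boundary, chosen only for illustration.
WEIGHTS = {"technical": 0.5, "human": 0.3, "environmental": 0.2}
ALERT_BOUNDARY = 0.6


def risk_index(inputs: RiskInputs, weights: dict = WEIGHTS) -> float:
    """Weighted average of the three probability streams."""
    total = sum(weights.values())
    return sum(w * getattr(inputs, name) for name, w in weights.items()) / total


def should_alert(inputs: RiskInputs, boundary: float = ALERT_BOUNDARY) -> bool:
    """True once the index reaches the predefined boundary."""
    return risk_index(inputs) >= boundary
```

Calling `should_alert` on every new reading gives the recalculate-per-reading behavior described above; a linear weighting is the simplest possible choice, and a real engine might combine the streams nonlinearly.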

The MineSafe initiative, deployed across high-risk mining operations, shows what this looks like in the field. Engineers wired streaming data on methane levels, air velocity, and heavy machinery status into predictive models. Maintenance teams got a 24- to 48-hour window to intervene before equipment failed. Over two years, the system prevented hundreds of potential failures. Fatalities and severe injuries tied to heavy machinery dropped by 24%.

The gap between the two approaches:

| Characteristic | Static Risk Model | Dynamic AI Architecture |
| --- | --- | --- |
| Analysis trigger | Scheduled audits (monthly/quarterly) | Continuous real-time streaming |
| Threat detection | Rigid historical databases | ML-based anomaly detection before incidents manifest |
| Uncertainty handling | Binary acceptable limits | Algorithmic confidence scoring with hidden factor weighting |
| Operator role | Manual metric collection | Strategic validation of AI-generated insights |
| Business outcome | Reactive damage control and fines | Reduced downtime, optimized maintenance, asset protection |

Solving the False Alarm Problem

Adding more sensors creates a problem nobody warns you about: information overload. More data points do not make a facility safer on their own. Raw, uncontextualized readings produce “data swamps” where real threats drown in noise. About 70% of manufacturing companies name data quality and contextualization as the main barrier to scaling AI. And when a sensitive model throws hundreds of alerts a day, engineers tune it out. Alert fatigue kills the monitoring system faster than any equipment failure.

I ran into this myself while building a prototype system with Isolation Forest for anomaly detection. The algorithm was sensitive, sure, but the false positive rate made operators ignore it within a week. Fixing it took two steps. First, I ran ROC analysis to calibrate the alert threshold, tuning parameters until precision broke 0.9 and recall held above 0.8. Second, I layered in an LSTM-based remaining-useful-life predictor as a second opinion. Isolation Forest is good at sudden deviations. The LSTM, trained on sequential sensor histories, picks up slow degradation that builds over hours or days. Fusing both outputs into one composite score brought false alerts down without sacrificing the system’s ability to catch both fast breakdowns and gradual decay. One detection algorithm, no matter how well-tuned, cannot cover the full range of failure modes at a hazardous facility. You need at least two complementary methods running side by side.
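The two steps can be sketched without any ML library by treating both detectors as black boxes that emit a score between 0 and 1. The code below is an assumption-laden illustration, not the prototype itself: `fuse` is a plain weighted average, and `calibrate_threshold` is a simple threshold sweep over labeled validation data, which is a close relative of ROC analysis rather than a full ROC curve. All names and the sample data are hypothetical.

```python
from typing import List, Optional, Sequence, Tuple


def fuse(iso_score: float, rul_score: float, iso_weight: float = 0.5) -> float:
    """Composite score from two detectors; both inputs assumed scaled to 0..1."""
    return iso_weight * iso_score + (1 - iso_weight) * rul_score


def precision_recall(scores: Sequence[float], labels: Sequence[int],
                     threshold: float) -> Tuple[float, float]:
    """Precision and recall when every score >= threshold raises an alert."""
    tp = sum(1 for s, y in zip(scores, labels) if s >= threshold and y == 1)
    fp = sum(1 for s, y in zip(scores, labels) if s >= threshold and y == 0)
    fn = sum(1 for s, y in zip(scores, labels) if s < threshold and y == 1)
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    return precision, recall


def calibrate_threshold(scores: Sequence[float], labels: Sequence[int],
                        min_precision: float = 0.9,
                        min_recall: float = 0.8) -> Optional[float]:
    """Lowest threshold meeting both targets, or None if no threshold does."""
    passing: List[float] = []
    for candidate in sorted(set(scores)):
        precision, recall = precision_recall(scores, labels, candidate)
        if precision >= min_precision and recall >= min_recall:
            passing.append(candidate)
    return min(passing) if passing else None


# Hypothetical validation set: composite scores with 1 = confirmed fault.
val_scores = [0.1, 0.2, 0.3, 0.35, 0.4, 0.6, 0.7, 0.8, 0.85, 0.9, 0.95]
val_labels = [0, 0, 0, 0, 1, 0, 1, 1, 1, 1, 1]
threshold = calibrate_threshold(val_scores, val_labels)
```

Choosing the lowest passing threshold keeps recall as high as the precision target allows. Returning `None` when nothing qualifies is deliberate: it signals that the detectors themselves need work, not just the threshold.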

The broader industry is converging on Conformal Risk Control (CRC), a statistical framework that bounds the expected value of a chosen loss, such as the false alarm rate, at a level set in advance. CRC-based systems build adaptive prediction sets and calibrate sensor sensitivity so that false alarm probability stays below a threshold the business defines. The effect: AI stops being an advisory tool and becomes a safety barrier with statistically guaranteed bounds.
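A simpler relative of CRC, split conformal calibration, shows where the guarantee comes from. The sketch below is not CRC itself: it sets an alarm threshold from anomaly scores recorded during known-normal operation so that a new normal reading exceeds it with probability at most `alpha`. That bound holds only under the assumption that calibration and future normal scores are exchangeable. The function names are hypothetical.

```python
import math
from typing import Sequence


def conformal_threshold(calibration_scores: Sequence[float], alpha: float) -> float:
    """Threshold that a new normal score exceeds with probability <= alpha.

    Uses the ceil((n + 1) * (1 - alpha))-th smallest calibration score.
    """
    n = len(calibration_scores)
    # The tiny offset guards against floating-point error pushing the rank up by one.
    rank = math.ceil((n + 1) * (1 - alpha) - 1e-9)
    if rank > n:
        # Too few calibration points to support this alpha: never alarm.
        return math.inf
    return sorted(calibration_scores)[rank - 1]


def is_alarm(score: float, threshold: float) -> bool:
    """Alarm only when the live score is strictly above the calibrated threshold."""
    return score > threshold
```

With 99 calibration scores and `alpha = 0.05`, the threshold is the 95th smallest score. The `math.inf` branch makes the data requirement explicit: a 5% false alarm bound needs at least 19 calibration readings.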

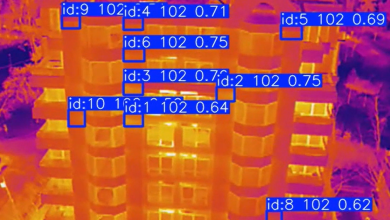

Computer vision adds yet another layer. Analysts project the AI video analytics segment will hit $12 billion by 2030, yet up to 95% of enterprise video data today goes unanalyzed. Modern CV algorithms scan footage in real time, flagging missing protective equipment, unauthorized zone access, or a forklift getting too close to a worker, and routing those signals to the central control system.

Multiagent Coordination: Connecting Isolated Models

One predictive algorithm can tell you a rotor is wearing down. It cannot tell you that the cooling system next to it is losing pressure and the operator on shift has been working for fourteen hours straight. Connecting these isolated models is the next architectural step, and multiagent systems are the tool for it. Gartner lists agentic AI among the top strategic technology trends for 2025–2026, citing its potential for autonomous risk coordination.

In a multiagent setup, each software agent owns a distinct monitoring domain: one watches equipment vibration, another maps the facility’s thermal profile, a third tracks spare parts logistics. The payoff shows up when individual parameters sit inside acceptable limits but their combination spells trouble. Picture this: a turbine vibration reading at 78% of threshold, a cooling system pressure drop to 85% of norm, and an operator nearing the end of a double shift. No single alarm trips. But the agents, swapping data in real time, recognize the compound pattern and initiate a safe shutdown in milliseconds, pulling humans out of the emergency response loop before anyone has to make a judgment call under pressure.
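The compound-pattern scenario can be sketched as a coordinator that reads one normalized stress value per agent. This is a toy rule under stated assumptions, not a real agent framework: the `AgentReport` type, the 0.7 "elevated" level, the three-agent minimum, and the way each raw reading is mapped to a stress value are all hypothetical.

```python
from dataclasses import dataclass
from typing import Sequence


@dataclass(frozen=True)
class AgentReport:
    domain: str
    stress: float  # 0.0 = nominal, 1.0 = at this domain's own alarm limit


def coordinate(reports: Sequence[AgentReport], elevated: float = 0.7,
               min_elevated: int = 3) -> str:
    """Decide on a response from the combined picture, not from any single agent."""
    if any(r.stress >= 1.0 for r in reports):
        return "individual_alarm"
    elevated_count = sum(1 for r in reports if r.stress >= elevated)
    if elevated_count >= min_elevated:
        return "safe_shutdown"
    return "normal"


# The scenario from the text, with assumed stress mappings:
scenario = [
    AgentReport("turbine_vibration", 0.78),  # 78% of vibration threshold
    AgentReport("cooling_pressure", 0.75),   # 85% of norm, assuming alarm at 80%
    AgentReport("operator_fatigue", 0.875),  # 14 h into an assumed 16 h limit
]
decision = coordinate(scenario)
```

No report reaches 1.0, so no individual alarm trips, yet three domains sit in the elevated band at once and the coordinator escalates. A production system would likely replace the counting rule with learned joint-risk models, but the division of labor is the same.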

This extends the layered architecture I described earlier. The automation layer, which in a basic build routes alerts through webhook-based workflows, becomes an orchestration hub where agents negotiate responses across subsystems.

The Financial Case

Catching pre-accident conditions early pays for itself. McKinsey’s industrial data shows predictive AI cutting equipment failure-related downtime by 50% and extending physical asset lifespan by 40%. Companies spend less on emergency repairs and negotiate lower insurance premiums over time.

| Impact area | Before AI | With predictive AI | Result |
| --- | --- | --- | --- |
| Asset failure | Scheduled repairs by runtime | Replacement based on actual degradation | 40% longer asset lifespan |
| Downtime | Post-accident remediation | Anomaly resolution in planned windows | 50% less unplanned downtime |
| Personnel safety | Post-injury root cause analysis | Real-time dangerous proximity detection | Reduction of severe injuries |
| Insurance | High premiums from incident frequency | Lower rates from transparent telemetry | Freed working capital |

AI-driven risk assessment changes how management thinks about safety. You hand mathematical uncertainty analysis to algorithms and keep strategic oversight with engineers. But technology alone changes nothing if the culture stays reactive. I have seen facilities pour money into sensor networks and ML pipelines, then leave the output buried in dashboards nobody opens on the night shift. The missing piece is always the same: an automation layer that removes human inertia from the response chain. When the risk index spikes, the system should act first and notify second. Engineers validate and adjust after the fact, instead of scrambling to piece together a response from scratch while the clock runs.

The organizations that build self-learning, multiagent safety architectures before their competitors will hold an edge that is hard to close. Predictive safety is becoming a foundational layer of industrial infrastructure. Software flags developing failures long before conventional threshold alarms would register the first signs of degradation, and that capability, once operational, compounds over time as the models learn from each new data point.