Data center demand continues to grow, and with it the need for power. Operators now face a hard truth: power constraints have moved from a cost issue to a growth issue. Across the sector, teams need practical ways to build more energy-efficient data centers without hindering performance, diminishing reliability, or stalling expansion.

Engineers and executives have started to treat efficiency as a design principle rather than a retrofit. That shift changes where investment goes and how technical teams judge value. The strongest gains now come from a connected stack of improvements in cooling, silicon, power delivery, controls, storage, and site operations. Each decision shapes energy draw, thermal load, uptime, and long-term scalability.

Installing Strong Cooling Systems

Cooling still drives a huge share of facility power use, so it offers one of the clearest paths to stronger performance. Standard air cooling struggles under dense artificial intelligence racks, especially as processors push higher thermal design power. Liquid cooling addresses the pressure head-on. Direct-to-chip cold plates, rear-door heat exchangers, immersion systems, and closed-loop coolant designs remove heat with far greater precision than room-scale air handling.

That precision helps operators reduce fan energy, shrink hot spots, stabilize rack density, and maintain tight thermal envelopes across demanding workloads. Teams have a better shot at raising compute capacity without overbuilding the mechanical plant.

Cooling upgrades work best with strong facility telemetry. Sensors at the rack, row, coolant loop, and white-space level give operators a live picture of temperature drift, pressure, flow, and load variation. Those inputs help teams tune cooling output in real time instead of running systems at a blunt, fixed margin.
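As a rough illustration of tuning cooling output from live telemetry rather than a fixed margin, the sketch below maps a rack inlet temperature to a coolant pump speed with a simple proportional rule. The setpoint, gain, and speed limits are hypothetical values chosen for the example, not recommendations, and a production control loop would be considerably more involved.

```python
def pump_speed(temp_c, setpoint_c=27.0, gain=0.10, base=0.40,
               min_speed=0.30, max_speed=1.00):
    """Return a pump speed fraction (0 to 1) from a proportional rule.

    All defaults are illustrative assumptions, not vendor guidance.
    """
    error = temp_c - setpoint_c          # positive when the rack runs hot
    speed = base + gain * error          # speed rises with the error
    return max(min_speed, min(max_speed, speed))  # clamp to safe limits


# Hypothetical inlet temperature readings in degrees Celsius
readings = [25.0, 27.0, 29.5, 35.0]
for temp in readings:
    print(f"{temp:.1f} C -> pump at {pump_speed(temp):.0%}")
```

The point of the sketch is the shape of the response: output follows measured conditions, and the clamps keep the system inside a safe operating band.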

Implementing Smart Chips To Cut Waste

Silicon design plays a direct role in site efficiency. General-purpose central processing units, graphics processing units, tensor accelerators, and application-specific integrated circuits each have different power profiles. A data center wastes energy if it forces every task through the same computing path. Matching jobs to the appropriate architecture cuts excess draw and lifts performance per watt.
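One way to picture this matching is a scheduler that compares performance per watt for a given job type and picks the best fit. The sketch below assumes made-up throughput and power figures for three hypothetical device classes; real numbers would come from benchmarking the actual workload.

```python
from dataclasses import dataclass


@dataclass
class Option:
    name: str
    throughput: float  # work units per second for this job type (assumed)
    power_w: float     # average power draw in watts (assumed)

    @property
    def perf_per_watt(self) -> float:
        return self.throughput / self.power_w


def best_perf_per_watt(options):
    """Pick the option that delivers the most work per watt."""
    return max(options, key=lambda o: o.perf_per_watt)


# Hypothetical figures for a single inference job type
options = [
    Option("cpu", throughput=100, power_w=200),
    Option("gpu", throughput=900, power_w=450),
    Option("asic", throughput=1200, power_w=300),
]

choice = best_perf_per_watt(options)
print(f"Route job to {choice.name}: {choice.perf_per_watt:.1f} units/s per watt")
```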

This approach extends beyond processor choice. New server platforms now improve memory efficiency, reduce idle power, tighten voltage regulation, and support finer-grained power states. Better board design and cleaner internal power conversion trim losses before workloads even start.

Specialized electronics matter in adjacent control systems as well. High-performance labs, semiconductor environments, advanced sensing platforms, and precision testing workflows depend on stable electrical behavior. In niche engineering contexts, even the choice between unipolar and bipolar HV amplifiers can shape system efficiency and thermal behavior across connected equipment.

Using Software as a Power Tool

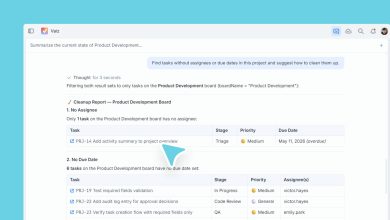

Hardware alone won’t solve the energy problem. Software is one of the sharpest tools in the facility. Intelligent orchestration platforms can shift workloads across clusters and curb idle server sprawl. Coordinating workloads this way turns fragmented resources into a disciplined power strategy.
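Curbing idle server sprawl can be sketched as a packing problem: place workloads on as few servers as possible so the rest can drop into a low-power state. The example below uses first-fit decreasing, a common bin-packing heuristic chosen here for illustration. The load values and the 80% utilization ceiling are assumptions, and a real orchestrator would also weigh memory, affinity, and failover headroom.

```python
def consolidate(loads, capacity=0.8):
    """Pack loads onto servers with first-fit decreasing.

    loads: CPU fractions (0 to 1) per workload, assumed values.
    capacity: utilization ceiling per server, an assumed policy.
    Returns a list of servers, each a list of the loads placed on it.
    """
    servers = []
    for load in sorted(loads, reverse=True):    # largest workloads first
        for server in servers:
            # small tolerance avoids floating-point false rejections
            if sum(server) + load <= capacity + 1e-9:
                server.append(load)
                break
        else:
            servers.append([load])              # no room anywhere: new server
    return servers


# Eight lightly loaded workloads, one per server before consolidation
loads = [0.10, 0.25, 0.05, 0.40, 0.15, 0.30, 0.20, 0.10]
packed = consolidate(loads)
print(f"{len(loads)} workloads now run on {len(packed)} servers")
```

In this example the eight workloads fit on two servers, which leaves six candidates for a low-power state.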

Artificial intelligence can improve this layer in practical ways. Predictive controls can forecast thermal spikes, estimate workload intensity, flag weak utilization, and recommend placement changes before waste builds. Operators can then balance demand across servers, cooling assets, storage systems, and backup resources with confidence.

This point has become urgent because the energy crisis is limiting AI’s potential. As model training grows and inference spreads across industries, energy efficiency stops being a sustainability talking point and turns into a competitive requirement. Companies that manage computing with tight software controls will expand artificial intelligence programs without overwhelming power budgets.

Upgrading Power Delivery

A meaningful share of energy disappears before it reaches the processor. Power conversion losses, distribution inefficiencies, transformer overhead, and the drag of redundant systems chip away at performance across the entire site. Modern facilities now target these losses through high-efficiency uninterruptible power supplies, durable transformers, and advanced busway systems.
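Because these losses occur in series, the efficiencies of each stage multiply. The sketch below shows that compounding effect for a hypothetical power chain. The stage names and efficiency figures are illustrative assumptions, not measurements from any particular facility.

```python
from math import prod


def chain_efficiency(stages):
    """Overall efficiency is the product of each stage's efficiency."""
    return prod(stages.values())


def utility_power_needed(it_load_kw, stages):
    """Power drawn from the utility to deliver a given IT load."""
    return it_load_kw / chain_efficiency(stages)


# Assumed per-stage efficiencies for an illustrative power path
stages = {
    "transformer": 0.985,
    "ups": 0.960,
    "pdu": 0.985,
    "server_psu": 0.940,
}

eff = chain_efficiency(stages)
draw = utility_power_needed(1000, stages)
print(f"End-to-end efficiency: {eff:.1%}")
print(f"Utility draw for 1000 kW of IT load: {draw:.0f} kW")
```

Under these assumed figures, four stages that each look efficient on their own still lose roughly an eighth of the incoming power, which is why small gains at each stage add up.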

Teams have started to rethink the electrical backbone with the same rigor they apply to servers and chillers. Better power chain design reduces waste, lowers heat output, and eases strain on supporting infrastructure. It can even open room for density growth inside an existing footprint.

Operators should pay close attention to utilization patterns across backup and redundancy systems. Oversized resilience layers may protect uptime, yet they can burden the site with avoidable losses. Right-sizing the power path while preserving reliability demands careful engineering, sharp modeling, and disciplined commissioning.

Leveraging Heat as a Resource

Many data centers treat heat as a byproduct to be removed as fast as possible. A stronger model uses heat as an asset.

Heat recovery systems can route waste heat into district networks, nearby commercial buildings, industrial processes, and domestic water systems. This reuse cuts total energy waste at the site and improves the broader economics of development.
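A back-of-the-envelope estimate can help size the opportunity. The sketch below assumes that essentially all IT electrical power ends up as heat and that a fixed fraction of it can be captured. The IT load, capture fraction, and per-home heat demand are hypothetical placeholders to replace with site data.

```python
HOURS_PER_YEAR = 8760


def recoverable_heat_mwh(it_load_kw, capture_fraction, hours=HOURS_PER_YEAR):
    """Annual heat (MWh) available for reuse under the stated assumptions."""
    if not 0.0 <= capture_fraction <= 1.0:
        raise ValueError("capture_fraction must be between 0 and 1")
    return it_load_kw * capture_fraction * hours / 1000.0


# Assumed: 1 MW of steady IT load, 70% of heat captured
annual_mwh = recoverable_heat_mwh(it_load_kw=1000, capture_fraction=0.7)

# Assumed: 10 MWh of heat demand per home per year
homes_equivalent = annual_mwh / 10.0
print(f"Recoverable heat: {annual_mwh:.0f} MWh per year")
print(f"Roughly {homes_equivalent:.0f} homes at the assumed demand")
```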

This strategy works best in regions where utilities, municipalities, and infrastructure owners coordinate early in the project cycle. Site selection then becomes a strategic decision rather than a real estate transaction. A location with strong grid access, favorable climate, network connectivity, heat reuse options, and supportive policy can outperform a cheaper site with weak infrastructure.

Analyzing Metrics To Drive Decisions

Many teams still lean too hard on power usage effectiveness alone. Power usage effectiveness remains useful, yet it doesn’t tell the whole story. Leaders need a wider view that includes server utilization, energy per workload, rack density stability, and carbon intensity.
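Power usage effectiveness is total facility energy divided by IT equipment energy, so it says nothing about how much useful work that IT energy produced. The sketch below compares two hypothetical sites with identical power usage effectiveness but very different energy per job. All figures, including the grid carbon intensity, are invented for illustration.

```python
def pue(total_facility_kwh, it_kwh):
    """Power usage effectiveness: total facility energy / IT energy."""
    if it_kwh <= 0:
        raise ValueError("it_kwh must be positive")
    return total_facility_kwh / it_kwh


def energy_per_job(total_facility_kwh, jobs_completed):
    """Facility energy spent per completed job, in kWh."""
    return total_facility_kwh / jobs_completed


def carbon_kg(total_facility_kwh, grid_kg_per_kwh):
    """Emissions from facility energy at a given grid carbon intensity."""
    return total_facility_kwh * grid_kg_per_kwh


# Two hypothetical sites with the same energy totals but different output
sites = {
    "site_a": {"total_kwh": 1300, "it_kwh": 1000, "jobs": 50_000},
    "site_b": {"total_kwh": 1300, "it_kwh": 1000, "jobs": 20_000},
}

for name, s in sites.items():
    print(
        f"{name}: PUE {pue(s['total_kwh'], s['it_kwh']):.2f}, "
        f"{energy_per_job(s['total_kwh'], s['jobs']) * 1000:.0f} Wh per job, "
        f"{carbon_kg(s['total_kwh'], 0.4):.0f} kg CO2 at an assumed 0.4 kg/kWh"
    )
```

Both sites report the same power usage effectiveness, yet the second spends two and a half times as much energy per job, which is the kind of waste a single headline number can hide.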

Good metrics sharpen capital allocation. They help leaders identify whether the next gain should come from cooling upgrades, server refreshes, electrical redesign, or site-level storage. They keep teams from chasing vanity numbers while real waste hides elsewhere in the stack.

A reliable program ties these metrics to planning. It treats design, procurement, deployment, operations, and refresh cycles as one continuous system. That discipline helps companies avoid the trap of solving a thermal problem in one quarter and a power delivery problem in the next.

The Next Step for Data Center Technology

The next era of infrastructure won’t reward expansion alone. It will reward the disciplined design and durable controls that make data centers more energy efficient.

For engineering leaders and enterprise decision-makers, the next step involves action. Audit where energy slips away, rank the highest-impact upgrades, and align facility strategy with workload growth before constraints tighten further. Businesses that solve efficiency with rigor will build infrastructure ready for the next wave of artificial intelligence, cloud demand, and digital transformation.