What happens when AI agents “join the workforce”? There will be innovative new workflows and productivity enhancements – but there will also be new challenges for organisations to wrap their arms around, particularly when it comes to governing agentic AI knowledge, reasoning, and decisions. Organisations that want to move ahead with autonomous or semi-autonomous AI securely and confidently will have to meet this governance challenge – and they ignore it at their own peril.

From smarter models to smarter workflows

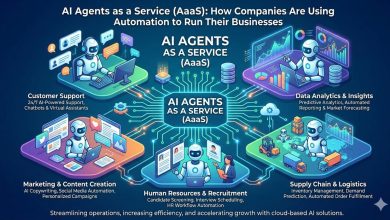

It’s worth zooming out for a moment to see how we got here. Over the past two years, the AI industry has been consumed by a race to build increasingly sophisticated large language models (LLMs). As models have matured, their capabilities have begun extending from text generation to action, and the focus has slowly started shifting to the agentic build platform: the foundation on which organisations design, deploy, and orchestrate AI agents that can reliably execute complex tasks.

The focus around agents thus far has mainly been on leveraging “knowledge vaults” – essentially, repositories of important documents and other content – that an AI agent can analyse and pull information from, allowing it to return answers to a professional.

For the most part, these tasks can be characterised as basic search and retrieval, but “basic” doesn’t mean “trivial” or “lacking utility”. In fact, there are quite a few scenarios where the ability to quickly and efficiently perform this type of narrow task is incredibly useful and can cut down the time involved from hours to minutes – for instance, any task that involves reviewing heaps of documents.

With the introduction of the Model Context Protocol (MCP) and Agent2Agent (A2A) – open-source standards that enable different AI systems and systems of record to seamlessly communicate with one another – it’s becoming feasible for AI agents to go beyond this basic search and retrieval and “hand off” the next part of the workflow to another agent. And that’s where the complexity begins to multiply, from a governance standpoint.

First steps towards agentic operations

To get their feet wet with agentic workflows, organisations might ramp up with “low-hanging fruit” use cases, such as having AI agents take some form of content or “answer” that they’ve retrieved and then – as the next part of the workflow – email it to a certain individual or group of individuals, or post it to a specific Slack channel or Teams chat for review and release to the original requestor.

It’s not hard to imagine other early use cases around answering employee questions. Organisations typically have channels – whether it’s a messaging app, an online form, or a dedicated email address – for employees to submit everything from legal and HR questions to sales and marketing enquiries. Typically, these are answered by staff.

Introducing an AI chatbot streamlines this process. The AI uses official internal policies and contracts to provide responses, which are then reviewed by a human before being released. In essence, this is human-in-the-loop automation: a multi-step agentic workflow that combines AI knowledge sourcing with human validation.
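To make the review-before-release idea concrete, here is a minimal Python sketch. Everything in it is hypothetical and for illustration only: the names `DraftAnswer`, `draft_answer`, `review`, and `release` are invented, and the "AI" step is a simple topic match standing in for a real model call.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Dict, List, Optional


class Status(Enum):
    PENDING_REVIEW = "pending_review"
    RELEASED = "released"
    REJECTED = "rejected"


@dataclass
class DraftAnswer:
    question: str
    answer: str
    sources: List[str]
    status: Status = Status.PENDING_REVIEW
    reviewer: Optional[str] = None


def draft_answer(question: str, policies: Dict[str, str]) -> DraftAnswer:
    """Stand-in for the AI step: pick policies whose topic appears in the question."""
    q = question.lower()
    hits = {topic: text for topic, text in policies.items() if topic in q}
    answer = " ".join(hits.values()) if hits else "No matching policy found."
    # Every draft starts in PENDING_REVIEW; nothing is released automatically.
    return DraftAnswer(question=question, answer=answer, sources=sorted(hits))


def review(draft: DraftAnswer, reviewer: str, approve: bool) -> DraftAnswer:
    """Human step: record who reviewed the draft and what they decided."""
    draft.reviewer = reviewer
    draft.status = Status.RELEASED if approve else Status.REJECTED
    return draft


def release(draft: DraftAnswer) -> str:
    """Return the answer to the requestor only if a human approved it."""
    if draft.status is not Status.RELEASED:
        raise PermissionError("draft has not been approved by a human reviewer")
    return draft.answer
```

The design choice worth noting is that `release` refuses anything a person has not approved, so the human check cannot be skipped by accident.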

As AI agents develop, however, overseeing the communications of this new “labour force” will become a business imperative, particularly across all the different communication channels. And as AI agents assume greater decision-making authority, organisations will need robust safeguards to track, manage, and ensure accountability for the actions and outputs of AI in their business.
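One way to picture "tracking" agent activity is an append-only audit log in which each entry carries a hash of the one before it, so later tampering becomes detectable. The sketch below is an illustration under assumptions, not a prescribed design: the `AuditLog` class and its field names are hypothetical, and it relies only on Python's standard `hashlib` and `json` modules.

```python
import hashlib
import json
from dataclasses import dataclass, field
from typing import Dict, List


def _digest(body: Dict[str, str]) -> str:
    """SHA-256 over a canonical (sorted-key) JSON encoding of the entry."""
    return hashlib.sha256(json.dumps(body, sort_keys=True).encode("utf-8")).hexdigest()


@dataclass
class AuditLog:
    entries: List[Dict[str, str]] = field(default_factory=list)

    def record(self, agent: str, action: str, channel: str, timestamp: str) -> Dict[str, str]:
        """Append an entry that is chained to the previous entry's hash."""
        prev_hash = self.entries[-1]["hash"] if self.entries else ""
        body = {
            "agent": agent,
            "action": action,
            "channel": channel,
            "timestamp": timestamp,
            "prev_hash": prev_hash,
        }
        body["hash"] = _digest(body)  # hash is computed before the key is added
        self.entries.append(body)
        return body

    def verify(self) -> bool:
        """Recompute every hash; any edited or reordered entry breaks the chain."""
        prev_hash = ""
        for entry in self.entries:
            body = {k: v for k, v in entry.items() if k != "hash"}
            if entry["prev_hash"] != prev_hash or entry["hash"] != _digest(body):
                return False
            prev_hash = entry["hash"]
        return True
```

In use, each agent action (an email sent, a message posted to a channel) would call `record`, and a periodic check would call `verify`. A production system would also need access controls and durable storage, which this sketch leaves out.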

The governance framework behind safe AI adoption

So, how best to put guardrails in place without hampering AI’s potential? A prudent risk mitigation approach involves clearly delineating which components of your workflow are appropriate for AI assistance, while designating certain tasks as “AI agent-free” zones.

This process requires evaluating workflows on a spectrum from “low stakes” to “high stakes” in order to assess the potential risks associated with the involvement of AI agents. It’s also worth designating which workflows require a human in the loop – so that there’s at least one set of human eyes reviewing outputs – and which workflows the agents can handle on their own.
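The spectrum described above can be sketched as a small routing table. The workflow names, the 1-to-5 stakes scores, and the thresholds below are all hypothetical placeholders that an organisation would set for itself; the only point is to show the three outcomes (agent on its own, human in the loop, agent-free) side by side.

```python
from enum import Enum
from typing import Dict


class Handling(Enum):
    AGENT_ONLY = "agent_only"        # agent may complete the task unattended
    HUMAN_IN_LOOP = "human_in_loop"  # agent drafts, a person approves
    AGENT_FREE = "agent_free"        # no agent involvement at all


# Hypothetical stakes scores (1 = low, 5 = high), assigned by the organisation.
WORKFLOW_STAKES: Dict[str, int] = {
    "summarise_meeting_notes": 1,
    "answer_hr_policy_question": 3,
    "approve_contract_terms": 5,
}


def handling_for(workflow: str, stakes: Dict[str, int] = WORKFLOW_STAKES) -> Handling:
    """Map a workflow to its handling tier using illustrative thresholds."""
    score = stakes.get(workflow)
    # Workflows that have not been assessed are treated as agent-free by default.
    if score is None or score >= 4:
        return Handling.AGENT_FREE
    if score >= 2:
        return Handling.HUMAN_IN_LOOP
    return Handling.AGENT_ONLY
```

Treating unassessed workflows as agent-free is a deliberate assumption here: an agent only gains access to a workflow once someone has evaluated it.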

As another safety and governance measure, organisations must ensure they have high-quality data and a solid information architecture within the organisation for AI to draw upon. That’s the only way for AI to take action based upon accurate, relevant, and up-to-date information.

To help cultivate this information architecture, organisations should start by designating authoritative datasets and using a managed repository as a single source of truth, with staff curating content to keep data quality high. Simple practices like marking final contract versions help organise key documents for effective AI use and prevent important records from being missed – ensuring AI has the best possible data to leverage. Ensuring an active lifecycle on the dataset is also a vital component of this strategy, guaranteeing that responses are derived from current and validated information that remains relevant and reliable.
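As a rough illustration of those curation practices, the sketch below filters a repository down to documents that are marked final and have been reviewed recently. The `Document` fields, the `eligible_for_ai` function, and the 365-day window are assumptions made for the example, not features of any particular product.

```python
from dataclasses import dataclass
from datetime import date, timedelta
from typing import List


@dataclass(frozen=True)
class Document:
    name: str
    is_final: bool        # e.g. the signed, final contract version
    last_reviewed: date   # when a curator last validated the content


def eligible_for_ai(docs: List[Document], today: date, max_age_days: int = 365) -> List[Document]:
    """Keep only final documents reviewed within the last max_age_days."""
    cutoff = today - timedelta(days=max_age_days)
    return [d for d in docs if d.is_final and d.last_reviewed >= cutoff]
```

Drafts and stale records stay in the repository but are left out of what the agent can draw upon, which is one simple way to keep the dataset's lifecycle active.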

Ready or not, agents are coming

As organisations prepare for agents that don’t just assist but act, a key requirement will be the ability to effectively oversee and audit their activity, put robust governance frameworks in place, and ensure accountability for the actions they take and the outputs they produce.

After all, if AI agents are slowly becoming part of the labour force, the logical next step is to turn them into accountable workers. For organisations with an eye on avoiding security or governance missteps, it’s the only way to ensure safe, scalable AI adoption as the technology continues to evolve.