The rapid rise of AI-driven development has unlocked enormous potential across industries, fueled by the vast coding and analytical capacity that coding agents and the latest generation of models provide. That makes a reliable, auditable, and interoperable system for organizing the contextual signals behind safe, relevant, and accountable agent behavior more important than ever. In development, when a model hallucinates or makes a mistake, you re-run the tests. In production, the same mistake carries far higher risk.

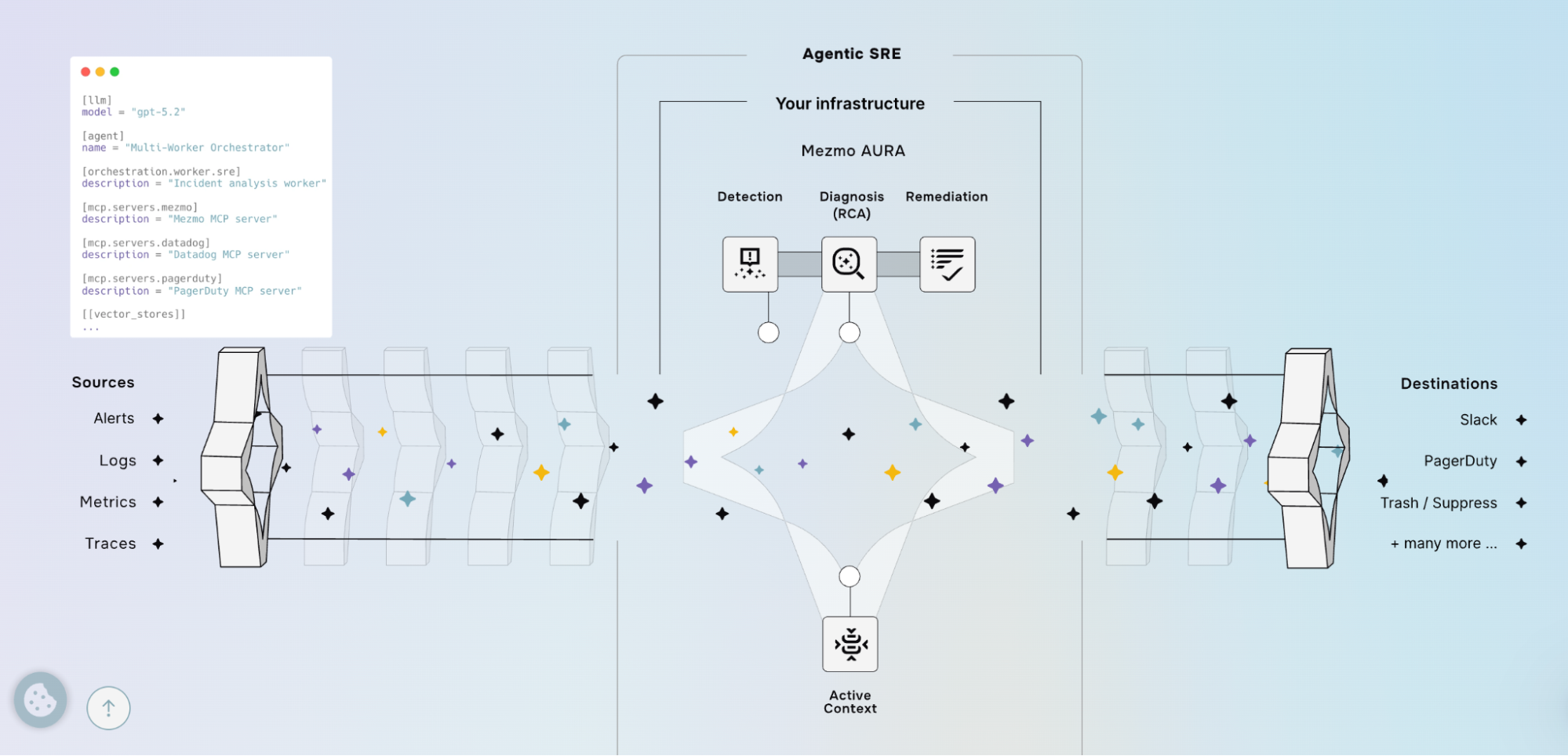

That’s where AURA comes in.

AURA is an open source project started by Mezmo that provides an extensible system of context for AI applications. Agents built on AURA make better decisions, explain their actions, and integrate safely into real-world workflows.

The problem: context overload and data bloat

Modern AI agents rely heavily on context (user history, real-time signals, system telemetry, policy constraints, and more) to accomplish their tasks. Today that context is frequently:

- Fragmented across services and silos.

- Ad-hoc and proprietary, limiting interoperability.

- Opaque, making verification, auditing, and debugging difficult.

- Transient or unavailable at inference time, leading to irrelevant or unsafe outputs.

These issues hinder reliability, slow development, increase integration costs, and amplify model risks (bias, hallucinations, or harmful actions) when agents are assigned to mission-critical tasks.

What AURA provides

AURA is an open system for getting the right context to AI systems at the right time. It’s built around a few convictions:

- Open by default. Community-governed formats and interfaces. No proprietary lock-in. If you swap out a component, your context still works.

- Your domain, your schema. Extensible enough to carry business rules, data lineage, and operational metrics alongside the usual signals, not just the fields we thought you’d need.

- Now and then. Streaming context for what’s happening right now. Archived records for what happened before. Agents need both to reason well.

- Traceable end to end. Every piece of context carries provenance: where it came from, when it was used, what it influenced. You can audit the full chain from input to output.

- Policy-aware from the start. Consent, redaction, retention, and enforcement aren’t bolted on. They’re built into the context lifecycle so teams can move fast without moving recklessly.
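To make the provenance and policy ideas above more concrete, here is a minimal Python sketch of a context item that carries its own provenance and policy, plus an assembly step that enforces retention and redaction before anything reaches a model. Everything in it (`ContextItem`, `Provenance`, `assemble_context`, and all field names) is hypothetical and invented for illustration; it is not AURA’s actual schema or API.

```python
from __future__ import annotations

from dataclasses import dataclass, field
from datetime import datetime, timedelta, timezone

# Hypothetical shapes for illustration only; NOT AURA's actual schema or API.

@dataclass
class Provenance:
    source: str                  # where the signal came from
    collected_at: datetime       # when it was captured
    used_by: list[str] = field(default_factory=list)  # decisions that consumed it

@dataclass
class ContextItem:
    kind: str                    # e.g. "telemetry", "policy", "user_history"
    data: dict
    provenance: Provenance
    redact: set[str] = field(default_factory=set)  # fields masked before inference
    retention: timedelta = timedelta(days=30)      # how long the item may be used

def assemble_context(items, agent_id, decision_id, now=None):
    """Return policy-filtered context for one decision and record its use."""
    now = now or datetime.now(timezone.utc)
    out = []
    for item in items:
        # Retention: skip items older than their allowed window.
        if now - item.provenance.collected_at > item.retention:
            continue
        # Redaction: mask protected fields instead of passing them to the model.
        data = {k: ("[REDACTED]" if k in item.redact else v)
                for k, v in item.data.items()}
        # Traceability: note which agent decision consumed this item.
        item.provenance.used_by.append(f"{agent_id}:{decision_id}")
        out.append({"kind": item.kind, "data": data,
                    "source": item.provenance.source})
    return out

# Usage: one fresh item with a sensitive field, one item past its retention window.
now = datetime(2025, 1, 10, tzinfo=timezone.utc)
fresh = ContextItem(
    "telemetry",
    {"pod": "api-7", "error_rate": 0.12, "user_email": "a@example.com"},
    Provenance("prometheus", now - timedelta(minutes=5)),
    redact={"user_email"},
)
stale = ContextItem(
    "telemetry",
    {"pod": "api-7", "error_rate": 0.01},
    Provenance("prometheus", now - timedelta(days=90)),
)
ctx = assemble_context([fresh, stale], "rca-agent", "incident-42", now=now)
# ctx holds only the fresh item, with user_email replaced by "[REDACTED]",
# and fresh.provenance.used_by == ["rca-agent:incident-42"].
```

Putting enforcement in the shared assembly step, rather than in each agent, is one way to read “built into the context lifecycle”: every consumer sees the same filtered view, and the `used_by` trail records which context influenced which decision.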

How this changes AI SRE as a category

Right now, every team building AI into their SRE workflow is solving the same problem badly. They cobble together context from six different tools, shove it into a prompt, and hope the model figures out what matters. When it doesn’t, they add more context. When that breaks the token window, they start hacking together their own retrieval layer. Everyone is building the same plumbing, and nobody’s plumbing is good.

AURA kills that cycle. It gives agents a single, structured way to access the context they actually need: user history, telemetry, policy rules, operational state. One set of interfaces and open formats that work with whatever tools you already run. Your RCA agent, your remediation agent, and your observability dashboards all pull from the same place.

And because it’s open source, the context layer isn’t a black box. You can see exactly what the agent saw when it made a decision. You can audit it. You can hand that audit to your compliance team and they can actually read it. Try doing that with a proprietary agent that assembles its own context behind closed doors.

The other thing that matters: patterns travel. When one team figures out a good way to structure context for Kubernetes incidents, that pattern is reusable. Not locked inside one company’s product, not gated behind an enterprise contract. Just available. That’s how a category grows up instead of fragmenting into fifty incompatible silos.

Call to action

As AI systems become more capable and more embedded in decision-making, the importance of reliable, auditable context cannot be overstated. Organizations building or deploying AI should evaluate how they manage contextual signals today and consider adopting or contributing to open initiatives like AURA to improve safety, interoperability, and trust. Participating by testing, contributing code, defining schemas, or adopting standards accelerates progress for everyone.

AURA is addressing a foundational infrastructure gap for the AI era: a standardized, open, and auditable system of context. By enabling models to access rich, governed, and traceable signals, AURA helps make AI systems more useful, safer, and easier to integrate at scale. Supporting and adopting open context systems is a practical step toward more responsible and effective AI-driven development.