Something important has happened in AI image generation over the past two years: many serious creators are no longer picking tools because they’re polished or backed by a big platform. They’re choosing tools because those tools give them control.

That shift matters more than it might seem at first.

The first wave of AI creativity was really about access. Anyone could type a prompt and get an image, a video clip, or a product mockup in seconds. For a lot of teams, that alone was enough to prove the value of generative AI.

But the next wave looks different. Creative teams are now asking tougher questions. Can we adjust how the model behaves? Can we keep a consistent visual style across dozens of assets? Can we run experiments without being locked into one platform’s way of doing things? Can we string image generation, video creation, editing, and audio into one repeatable workflow?

This is where open-source image models have become so influential. They don’t just offer another way to generate images — they represent a real change in how AI creative production is being organized. Teams are moving away from isolated, one-click tools and toward flexible systems they can customize, govern, and scale.

Why Open-Source Image Models Gained Creative Traction

Closed AI image platforms have real advantages. They’re usually easier to use, more accessible to non-technical people, and come with built-in guardrails that reduce risk for larger organizations. For mainstream business use, that kind of structure is often genuinely helpful.

But creative production often needs more flexibility than a single platform can offer.

A concept artist might need to test multiple visual directions fast. A game studio might want to explore character designs and environments in a tightly controlled visual style. A fashion team might need to generate campaign concepts and garment variations before committing to a photoshoot. A marketing agency might want a repeatable visual system for different clients without rebuilding everything from scratch each time.

Open-source and open-weight models appeal to technical and creative teams because they offer more room to experiment. Instead of accepting a fixed interface, you can build a workflow around the model — test different settings, combine it with other tools, run it locally, or plug it into a larger pipeline.

This helps explain why tools like ComfyUI became so popular in the AI creative space. A node-based workflow isn’t just a technical nicety. It mirrors how professionals work: one step feeds the next, outputs get reviewed and refined, and the process adjusts to fit the creative goal.
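To make “one step feeds the next” concrete, here is a minimal sketch of a node graph in Python. It is not ComfyUI’s API or workflow format. The node names (`prompt`, `style`, `generate`, `review`) are hypothetical, and the strings stand in for real prompts, model calls, and review results. The sketch shows the core idea: each node runs only after its upstream nodes, and it receives their outputs as inputs.

```python
from graphlib import TopologicalSorter
from typing import Callable, Dict, List

# Hypothetical node type: receives the outputs of its upstream nodes, returns a value.
Node = Callable[[Dict[str, object]], object]

def run_graph(nodes: Dict[str, Node], edges: Dict[str, List[str]]) -> Dict[str, object]:
    """Run every node after all of its upstream dependencies, in topological order."""
    results: Dict[str, object] = {}
    # edges maps each node name to the list of upstream node names it depends on
    for name in TopologicalSorter(edges).static_order():
        upstream = {dep: results[dep] for dep in edges.get(name, [])}
        results[name] = nodes[name](upstream)
    return results

# Placeholder nodes: strings stand in for real prompts, images, and reviews.
nodes: Dict[str, Node] = {
    "prompt": lambda up: "red sneaker, studio lighting, brand palette",
    "style": lambda up: "house-style-v2",
    "generate": lambda up: f"image({up['prompt']} | {up['style']})",
    "review": lambda up: {"asset": up["generate"], "approved": False},
}
edges = {
    "prompt": [],
    "style": [],
    "generate": ["prompt", "style"],
    "review": ["generate"],
}

results = run_graph(nodes, edges)
print(results["review"])
```

Because the dependencies are explicit, a team can swap out the `style` node or insert an upscaling step without rewriting the rest of the chain. That flexibility is much of the appeal of node-based tools.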

The Professional Case for Flexible Generation

The debate around flexible or lightly restricted generation is often simplified into a safety argument. In reality, the professional use cases are more nuanced.

Fashion technology is one example. Virtual try-on, garment digitization, catalog production, and product visualization all require careful control over how bodies, clothing, fabric, and fit are represented. If a platform broadly refuses or distorts certain human-form outputs, it can create problems for legitimate commercial workflows.

The same is true in other fields. Medical and anatomical illustration, life drawing references for art education, film and game concept art, and character design can all require more control than a general-purpose consumer tool allows. These use cases do not remove the need for safety standards. They simply show that professional creative requirements are often more complex than broad platform rules can handle.

In professional contexts, a “no-filter” AI art generator is best treated as one component of a wider open-model workflow. In that setting, teams need more control over testing, deployment, and review than a one-size-fits-all platform can provide.

That does not mean every organization should use unrestricted systems. It means teams need to decide where flexibility is useful, where restrictions are necessary, and how human review should sit between model output and publication.

This distinction is important. The mature conversation is not “filters versus no filters.” It is about governance. Creative teams need freedom to explore, but businesses also need review standards, consent rules, brand safety policies, and clear internal boundaries.

Open models move more control to the user. That can be powerful, but it also increases responsibility. The teams that use these systems well will be the ones that combine flexibility with process.

The Fragmented Workflow Problem

As image models improved, a new problem became harder to ignore: creative workflows are still fragmented.

A single AI image can be useful, but most professional teams don’t need one isolated asset. They need a connected sequence of outputs. An idea becomes a brief. A brief becomes a storyboard. A storyboard becomes images. Images become video. Video needs voiceover, music, subtitles, pacing adjustments, and multiple export formats.

In many teams, each of those steps still happens in a completely different tool.

A marketer might write a script in one platform, generate images in another, create motion in a video tool, synthesize voiceover somewhere else, and then assemble the final asset in their usual editing software. Every handoff creates friction — files need to be re-exported and re-uploaded, prompts and context get lost, and style consistency starts to slip.

That friction isn’t just annoying. It affects output quality. Teams end up fixing formatting problems instead of improving the actual creative idea.

This is the gap between generation and production. AI tools have gotten very good at creating individual outputs. But professional creative work is almost never a single output. It’s a pipeline.

Orchestration as the Next Layer of AI Creative Infrastructure

The natural answer to fragmented workflows is orchestration.

A single-model tool asks you to adapt your creative process to one system. An orchestration platform works differently — it connects multiple tools or models, routes tasks to the right capability, and helps you move through a full production process without manually managing every step.

This matters because no single model is likely to be best at everything. One might be stronger for images, another better for short-form video, and another more effective at voiceover or editing. As the AI market becomes more specialized, the ability to coordinate these tools may become more valuable than any one tool on its own.
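As a rough illustration of “routing tasks to the right capability,” here is a minimal Python sketch. It does not describe how any particular product is built. The task kinds, the backend functions (`image_model`, `video_model`, `voice_model`), and the `ROUTES` table are hypothetical placeholders for real model or service calls.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List

@dataclass
class Task:
    kind: str   # illustrative categories: "image", "video", "voiceover"
    brief: str

# Hypothetical backends: in practice each would wrap a different model or service.
def image_model(brief: str) -> str:
    return f"[image] {brief}"

def video_model(brief: str) -> str:
    return f"[video] {brief}"

def voice_model(brief: str) -> str:
    return f"[voiceover] {brief}"

# The routing table is the part the orchestration layer owns:
# swapping in a better model means changing one entry, not the whole workflow.
ROUTES: Dict[str, Callable[[str], str]] = {
    "image": image_model,
    "video": video_model,
    "voiceover": voice_model,
}

def orchestrate(tasks: List[Task]) -> List[str]:
    """Send each task to the backend registered for its kind, preserving order."""
    outputs = []
    for task in tasks:
        handler = ROUTES.get(task.kind)
        if handler is None:
            raise ValueError(f"no backend registered for task kind {task.kind!r}")
        outputs.append(handler(task.brief))
    return outputs

campaign = [
    Task("image", "hero shot of the product"),
    Task("video", "6-second loop from the hero shot"),
    Task("voiceover", "15-second product tagline"),
]
print(orchestrate(campaign))
```

The design choice here is to keep the routing table separate from the task list. When a stronger video model appears, only the `"video"` entry changes, and every existing campaign definition keeps working.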

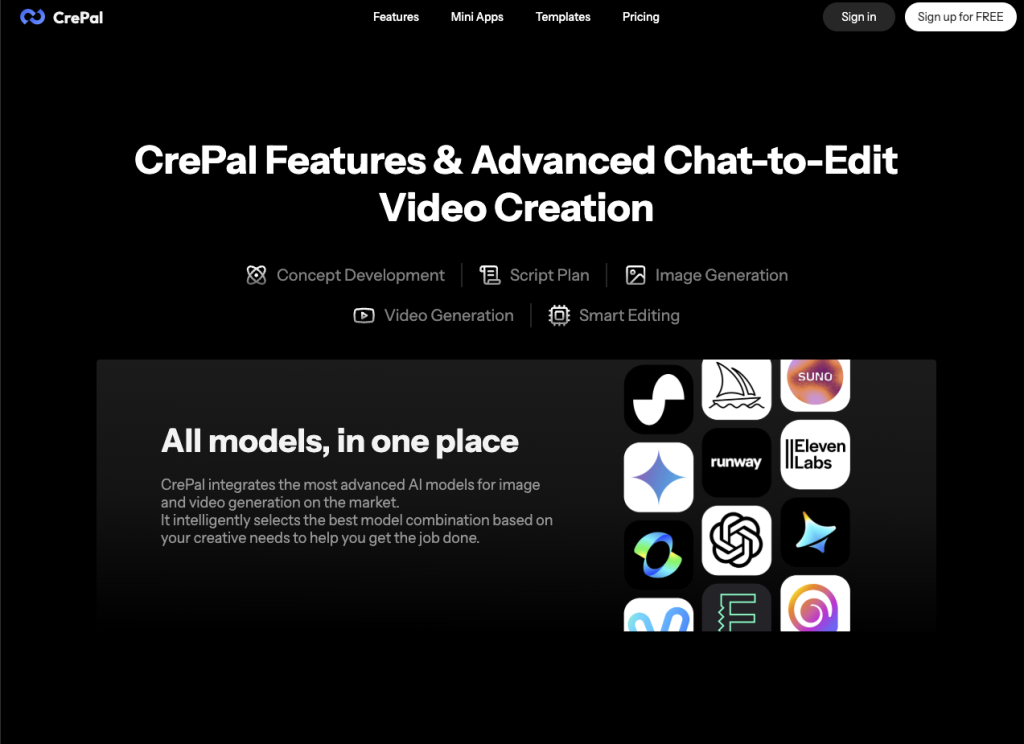

CrePal is one example of this orchestration approach. Founded in 2024 by former Tencent WeChat AI product manager Jacky Liu, the platform positions itself as an AI video creation agent that brings multiple creative tools and third-party models into one workflow. The broader point isn’t the specific company — it’s the architecture. Creative teams increasingly want a director layer that can sit above individual models and coordinate the full production process.

That coordination layer is where a lot of the next competition in AI creativity will likely happen.

What Creative Teams Should Take From the Shift

The rise of open-source image models is often framed as a battle between open and closed platforms. But that’s only part of the story.

The more useful question for most professional teams isn’t which type of tool is better. It’s: which parts of the workflow need control? Which need speed? Which need compliance? Which need repeatability?

Open models are well-suited to experimentation, customization, local deployment, and technical flexibility. Closed platforms tend to work better for ease of use, managed access, and built-in controls. Many serious teams will end up using both.

The real advantage will come from how those tools are connected.

A creative team shouldn’t build its entire process around one model or one platform. The AI landscape moves too fast for that. A more durable approach is a workflow that can adapt as new models emerge.

Teams should also separate experimentation from production. Open-source and flexible generation workflows might be great for research, concept development, or internal testing — but production assets should still pass through human review, brand checks, and safety standards before they go out.
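One way to turn the split between experimentation and production into an enforced rule is a simple publishing gate. The sketch below assumes hypothetical stage labels (`"experiment"`, `"production"`) and check names (`human_review`, `brand_check`, `safety_check`). A real team would define its own checklist and record who signed off on each check.

```python
from dataclasses import dataclass, field
from typing import List

@dataclass
class Asset:
    name: str
    stage: str  # illustrative labels: "experiment" or "production"
    checks_passed: List[str] = field(default_factory=list)

# Hypothetical checklist; a real team would define its own review, brand, and safety checks.
REQUIRED_FOR_PRODUCTION = ["human_review", "brand_check", "safety_check"]

def can_publish(asset: Asset) -> bool:
    """Experimental assets never publish; production assets need every required check."""
    if asset.stage != "production":
        return False
    return all(check in asset.checks_passed for check in REQUIRED_FOR_PRODUCTION)

draft = Asset("concept-07", "experiment", ["human_review"])
final = Asset("hero-banner", "production", ["human_review", "brand_check", "safety_check"])
partial = Asset("social-cut", "production", ["human_review", "brand_check"])
print(can_publish(draft), can_publish(final), can_publish(partial))  # prints: False True False
```

Open or lightly restricted models can then be used freely in the experiment stage. Nothing leaves the building until it has passed the same checks as any other asset.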

And when evaluating AI tools, workflow fit matters at least as much as output quality. A model that produces impressive one-off results might still be frustrating in a real campaign if it can’t support revisions, consistency, or export requirements.

The strongest AI creative stacks will likely combine three things: flexible model access, orchestration across tools, and human governance.