For years, cooling played the role of a silent, reliable partner in data centres. As long as temperatures stayed within safe limits, the cooling infrastructure was deemed effective – a static, predictable utility.

That era has ended.

The rise of high-density AI workloads has transformed cooling from a passive background system into an active, responsive component of the compute stack. This shift is driven not only by the enormous heat output of modern graphics processing units (GPUs), but by the extreme volatility of that heat. Rack densities have grown from a few kilowatts to tens, and in some cases hundreds, of kilowatts, while thermal profiles and load patterns fluctuate sharply in sync with AI training and inference cycles.

AI training runs generate highly dynamic thermal loads that demand immediate infrastructure response. In this new reality, power and thermal management can no longer operate in silos.

The end of static margins

Traditional data centres saw electrical loads increase gradually, allowing operators to design with generous safety buffers. AI workloads obliterate those buffers. They are dynamic, introducing rapid, high-amplitude power swings that propagate through the electrical chain – affecting draw, harmonics, and transients. Power fluctuations across a heterogeneous hardware estate translate almost instantly into thermal stress at both rack and room level.

When cooling and power responses are not synchronised, the result can be unstable temperatures, unnecessary overprovisioning, protective trips, or degraded performance and uptime.

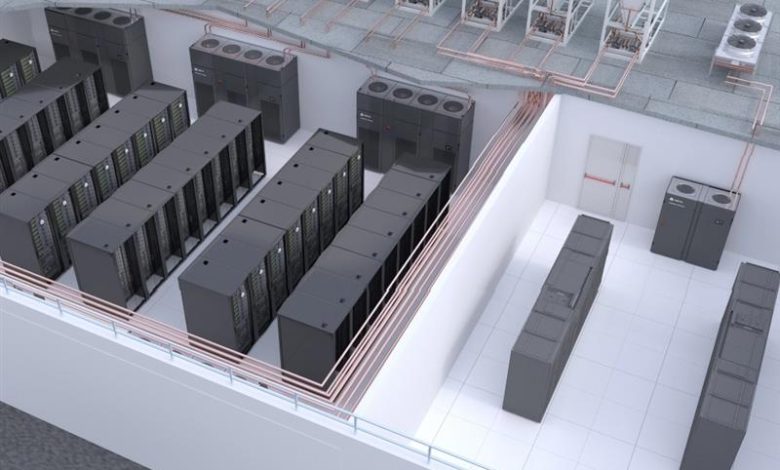

Heat management must now be treated as a connected system – a “Thermal Chain” that links the chip, rack, room, and external heat rejection plant. Decisions about rack density and placement now have immediate downstream consequences for the entire cooling ecosystem.

Data centre operators should therefore strive to unify the full thermal chain – from on-rack heat capture to plant-level rejection – under a single, data-driven control strategy capable of responding coherently to dynamic workloads.

Moving closer to the heat

The most visible industry change is the widespread adoption of liquid cooling. Cooling racks at 100 kW (and beyond) with air alone has become physically inefficient and economically unviable. Recent deployments in EMEA, for example, feature new coolant distribution units (CDUs) rated at 70 kW, 100 kW, and even up to 2300 kW, offered in both in-rack and in-row configurations, supporting liquid-to-air and liquid-to-liquid loops for both retrofits and greenfield sites.

Technologies such as direct-to-chip liquid cooling and rear-door heat exchangers capture heat at the source rather than allowing it to disperse into the hot aisle. This precision is essential. By intercepting heat early, operators can maintain stability without over-engineering room-wide airflow.
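To give a sense of scale for capturing heat at the source, the steady-state energy balance Q = ṁ × cp × ΔT indicates how much coolant a loop must move. The sketch below is illustrative only: it assumes water-like coolant properties (cp of about 4186 J/(kg·K), density of about 997 kg/m³) and a 10 K temperature rise across the rack, none of which come from a specific product. The function name is hypothetical, and glycol mixes or other coolants would give different figures.

```python
# Illustrative sizing sketch: coolant flow needed to carry away a rack's heat.
# Assumed (not from the article): water-like properties and a 10 K loop delta-T.

WATER_CP_J_PER_KG_K = 4186.0      # specific heat capacity of water (approx.)
WATER_DENSITY_KG_PER_M3 = 997.0   # density of water near 25 degC (approx.)


def required_flow_l_per_min(heat_load_kw, delta_t_k,
                            cp=WATER_CP_J_PER_KG_K,
                            density=WATER_DENSITY_KG_PER_M3):
    """Volumetric flow (L/min) needed to absorb heat_load_kw at a given delta-T."""
    if heat_load_kw < 0 or delta_t_k <= 0:
        raise ValueError("heat load must be >= 0 and delta-T must be > 0")
    mass_flow_kg_s = heat_load_kw * 1000.0 / (cp * delta_t_k)  # Q = m * cp * dT
    volume_flow_m3_s = mass_flow_kg_s / density
    return volume_flow_m3_s * 1000.0 * 60.0  # m^3/s -> L/min


# A 100 kW rack with a 10 K rise needs roughly 144 L/min under these assumptions.
print(round(required_flow_l_per_min(100, 10), 1))
```

Because flow scales inversely with ΔT, a loop that tolerates a larger temperature rise needs proportionally less flow for the same heat load.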

When paired with flexible CDU platforms, direct-to-chip approaches provide a future-ready path to ever-higher rack densities while preserving thermal stability and operational flexibility. Importantly, these solutions support incremental adoption.

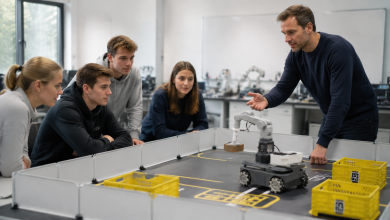

Many facilities are now running hybrid operations, where liquid-cooled AI racks coexist with air-cooled equipment, allowing operators to transition gradually as AI use cases mature.

The hybrid future

The transition is not all-or-nothing. We are entering an era of mixed-density halls, with high-density liquid-cooled AI clusters placed alongside air-cooled systems.

In such setups, airflow management becomes significantly more challenging. Without careful design, heat from dense racks can recirculate and create hot spots that threaten adjacent equipment. Room-scale architectural choices are therefore gaining importance alongside rack-level cooling solutions.

Perimeter or slab-floor thermal wall technologies and intelligent containment systems are essential for defining clear airflow paths and preventing high-density zones from destabilising the rest of the hall. At the room level, thermal management in data centres typically relies on mechanical cooling via CRAC (Computer Room Air Conditioning) units or chilled-water-based CRAH (Computer Room Air Handler) systems. Both approaches support a gradual shift toward hybrid infrastructures that integrate air and liquid cooling methods.

As allowable operating temperatures for servers and GPUs continue to rise – driven by advancements in hardware design and updated guidelines – the required facility water supply temperature for liquid cooling loops can also increase (often to 40°C or higher in modern systems). This trend enhances the viability of air-based cooling strategies in hybrid setups while prompting a re-evaluation of traditional chilled-water and direct-expansion room cooling architectures to determine the most suitable air-cooling design. These elements serve as guardrails that enable hybrid cooling strategies to coexist reliably, giving operators the stability and optionality to increase density selectively rather than requiring a complete facility redesign.

Efficiency in waste heat rejection

The final link in the chain is waste heat rejection. Where feasible, that heat can be reused for district heating, industrial processes, or other low-grade thermal applications. As operating temperatures continue to evolve, the thresholds required to cool AI deployments efficiently are likely to span a wide range of densities and temperature profiles. This variability makes it difficult to lock in a precise chilled-water temperature for the cooling system.

Within this framework, new efficiencies emerge by deliberately trimming the cooling load through design choices that prioritise flexibility. Reducing compressor-dependent cooling extends free-cooling hours, even in varied or challenging climates. Free-cooling screw chillers excel at maintaining high efficiency at elevated and even extreme ambient temperatures, while centrifugal chillers provide reliable baseload stability and critical uptime assurance.

Given the evolving nature of chip technology and unpredictable fluid temperature requirements, trimming the cooling load offers the ideal path for data centres that need a high degree of flexibility in their cooling solutions. This approach lets the plant respond as requirements change.

Trimming the cooling load is particularly effective in AI-driven, high-density scenarios where the goal is to minimise compressor operation, maximise free-cooling hours, reduce energy consumption, and support future-ready architectures designed around higher water temperature setpoints. It is important to maintain water temperature flexibility to accommodate potential temperature increases.
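The link between higher water temperature setpoints and free-cooling hours can be illustrated with a rough count. The sketch below assumes a simple rule of thumb: full free cooling is available whenever the ambient temperature is at or below the supply setpoint minus a heat-exchanger approach. The 5 K approach, the function name, and the sample temperatures are all hypothetical; a real study would use a full year of site weather data and the plant's actual performance curves.

```python
# Rough free-cooling estimate under a simplified, assumed rule:
# free cooling is possible when ambient <= supply setpoint - approach.

def free_cooling_hours(hourly_ambient_c, supply_setpoint_c, approach_k=5.0):
    """Count the hours in which ambient air alone could meet the setpoint."""
    threshold_c = supply_setpoint_c - approach_k
    return sum(1 for temp_c in hourly_ambient_c if temp_c <= threshold_c)


# Synthetic sample of hourly ambient temperatures (degC), for illustration only.
sample_ambient_c = [8, 12, 15, 19, 24, 28, 31, 35]

for setpoint_c in (20, 30, 40):
    hours = free_cooling_hours(sample_ambient_c, setpoint_c)
    print(f"setpoint {setpoint_c} degC -> {hours} of {len(sample_ambient_c)} hours")
```

On this toy data set, raising the setpoint from 20°C to 40°C moves the count from 3 of 8 hours to all 8, which is the effect the higher-setpoint argument relies on.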

In this context, cooling transforms from a fixed mechanical system into an adaptive, low-carbon extension of the IT load. It delivers flexibility at every level to handle varying water conditions, representing the best approach when definitive long-term decisions remain unclear.

Free-cooling screw chillers represent a strategic choice for data centres seeking to reduce energy consumption without compromising performance. They deliver high efficiency at elevated ambient temperatures, allowing operators to extend free-cooling hours throughout the year, even in challenging climates. By combining robust mechanical cooling with optimised free-cooling design, these units help lower operating costs, stabilise system performance, and support more efficient thermal strategies.

Centrifugal chillers remain the preferred choice when applications demand consistent capacity, very high loads, or lower supply water temperatures. They deliver a stable, scalable backbone for heat rejection when free-cooling windows are limited or when resilience and flexibility outweigh maximum compressor-free efficiency. At the same time, they cool efficiently, preserving more power for AI workloads and allowing available capacity to be directed where it creates the most value.

These approaches are not mutually exclusive philosophies. They represent different stages of a facility’s thermal evolution, with cooling increasingly tuned to real operating conditions rather than conservative design assumptions.

The critical role of control

Unifying all of this is the control layer. Modern infrastructure management platforms now bridge IT and facilities, giving the cooling system visibility into upcoming dynamic workloads. By analysing real-time server data, the system can proactively adjust flow rates, setpoints, and capacity – often before temperatures begin to rise.

This predictive coordination is key for resilience. Legacy cooling reacted only after room sensors registered a change, which caused a lag that has become unacceptable in the AI era. Today’s control systems orchestrate behaviour across the entire thermal chain, enabling pre-emptive responses that protect performance and uptime.
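One common way to express this difference between reacting and anticipating is to combine a feedforward term, driven by the expected IT load, with a small feedback trim, driven by the measured temperature. The sketch below is a minimal illustration of that idea, not a description of any vendor's platform. The class and function names, the gain, the flow limits, and the water-like specific heat are all assumptions chosen for the example.

```python
# Minimal feedforward-plus-feedback sketch for a coolant flow setpoint.
# All names and numeric values here are hypothetical, for illustration only.
from dataclasses import dataclass


@dataclass
class LoopConfig:
    design_delta_t_k: float = 10.0          # intended coolant temperature rise
    min_flow_kg_s: float = 0.5              # pump lower limit
    max_flow_kg_s: float = 6.0              # pump upper limit
    feedback_gain_kg_s_per_k: float = 0.2   # trim per kelvin of return-temp error
    cp_j_per_kg_k: float = 4186.0           # water-like specific heat (assumed)


def flow_setpoint(predicted_it_kw, return_temp_c, return_target_c, cfg):
    """Return a coolant mass-flow setpoint (kg/s), clamped to pump limits."""
    # Feedforward: size flow for the load the IT side expects to draw,
    # so the pump moves before the temperature does.
    feedforward = predicted_it_kw * 1000.0 / (cfg.cp_j_per_kg_k * cfg.design_delta_t_k)
    # Feedback: correct for whatever the prediction missed.
    feedback = cfg.feedback_gain_kg_s_per_k * (return_temp_c - return_target_c)
    return max(cfg.min_flow_kg_s, min(cfg.max_flow_kg_s, feedforward + feedback))


cfg = LoopConfig()
# Idle cluster, then a training job is scheduled: flow rises with the forecast
# even though the measured return temperature has not yet changed.
print(round(flow_setpoint(20, 40.0, 40.0, cfg), 2))
print(round(flow_setpoint(100, 40.0, 40.0, cfg), 2))
```

A purely reactive loop would correspond to dropping the feedforward term, leaving the pump to respond only once the return temperature has already drifted from its target.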

This level of visibility also simplifies technology evaluation and risk management as AI deployments scale. Specialist services teams with end-to-end expertise across design, commissioning, optimisation, and predictive maintenance are ideally positioned to maximise the value of this connected thermal intelligence.

Cooling is no longer just about keeping things cold. It is now intelligent, dynamic heat management, which is a core enabler of AI-scale computing.