Every major AI announcement in the past year has carried the same promise: autonomous agents that plan your calendar, manage your pipeline, and close deals while you sleep.

In reality, most AI agents in production still need constant monitoring, manual restarts when workflows break, and human intervention the moment anything unexpected happens.

To make matters worse, Gartner estimates that only about 130 of the thousands of vendors claiming agentic AI capabilities actually deliver genuine autonomous functionality. The rest fall into what Gartner calls “agent washing,” which in practice means old chatbots and RPA tools sold under new names.

The same research predicts that over 40% of agentic AI projects will be canceled by the end of 2027 because of escalating costs, unclear business value, or inadequate risk controls.

And yet the models themselves have never been more capable, which raises an obvious question: what is actually going wrong?

The wrong diagnosis

The common thinking in enterprise AI right now is that better models will eventually fix the reliability problem. A smarter model will hallucinate less, recover from errors faster, and need less hand-holding.

But most agent failures come from fragile workflows built around models that are perfectly capable on their own.

A typical enterprise agent has to handle multi-step reasoning, pull data from a customer database through an API, run retrieval-augmented generation against internal documents, and produce a formatted output. Every one of those steps can break, and when one does, the whole chain goes down.
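A rough back-of-the-envelope sketch shows why chains like this are fragile. It assumes every step fails independently and with the same probability, which is a simplification, and the 95% per-step success rate is a hypothetical number chosen for illustration, not a figure from the research cited here.

```python
def chain_success_rate(step_success_rate: float, steps: int) -> float:
    """Probability that every step in a sequential chain succeeds.

    Assumes steps fail independently (an illustrative simplification).
    """
    return step_success_rate ** steps


# Hypothetical per-step success rate of 95%.
for steps in (1, 4, 10):
    print(f"{steps:>2} steps: {chain_success_rate(0.95, steps):.3f}")
```

Under those assumptions, a four-step chain completes about 81% of the time and a ten-step chain about 60% of the time, even though each individual step looks reliable.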

Forrester flagged in its 2025 report that enterprises adopting AI agents keep finding that these systems fail in unexpected and costly ways. MIT Sloan researchers saw the same thing when they deployed an AI agent for clinical workflows and discovered that 80% of the work went into data engineering, governance, and workflow integration rather than anything related to the model itself.

So, the research all points in the same direction, and the conclusion is hard to argue with. The models work fine, but the systems they sit inside keep falling apart, and very few teams in enterprise AI are designing with that specific problem in mind.

The gaming industry figured out this problem years ago

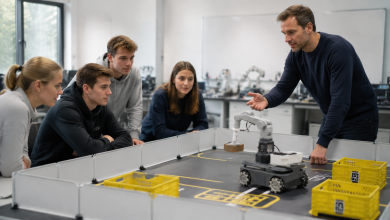

I have spent years building distributed AI systems for live AAA gaming environments, and the contrast with enterprise AI is hard to overstate.

The problems that enterprise teams are now wrestling with, including uptime, fault recovery, and autonomous operation at scale, were addressed in gaming infrastructure a long time ago.

A modern multiplayer game demands things that most enterprise AI stacks cannot deliver. AI companions make tactical decisions alongside human players in real time. NPC behavior systems adapt dynamically to millions of concurrent users without pausing for review.

All of these systems run around the clock, and none of them gets to stop and wait for someone to check the logs. When an AI system fails in a live match, millions of players notice within seconds. There is no grace period and no option to patch things up in the morning.

Gaming studios learned early on that this kind of reliability comes from making the infrastructure around the AI resilient enough to absorb failures without interrupting the experience.

That means distributed computing across server clusters, automated failover that reroutes workloads without human involvement, and task orchestration layers that redistribute operations when components stall.

What infrastructure-first actually looks like

Most of the attention in enterprise AI still goes to model selection, benchmark scores, and parameter counts. Very few teams spend the same energy on the operational layer that decides whether an agent can actually run on its own for more than a few hours.

A recent survey found that 65% of enterprise leaders see agentic system complexity as the top barrier to deployment, and that number has held steady for two consecutive quarters.

Full deployment is stuck at roughly 11% even though 65% of enterprises were already running pilots in early 2025. That gap between trying agents out and actually trusting them to run on their own is almost entirely an infrastructure problem.

And the most basic gap is in how computing is set up. Agents that depend on a single cloud region or one centralized inference endpoint are fragile by default, because when that endpoint goes down, every agent connected to it stops working.

A GPU layer that spreads workloads across nodes based on availability and demand removes that single point of failure entirely.
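As a minimal sketch of that idea, the selection logic might look like the following. The `Node` type, its fields, and `pick_node` are hypothetical names invented for illustration; a real scheduler would also need health checks, timeouts, and data locality.

```python
from dataclasses import dataclass
from typing import Sequence


@dataclass
class Node:
    name: str
    healthy: bool      # availability: did the last health check pass?
    active_jobs: int   # demand: jobs currently running on this node
    capacity: int      # maximum concurrent jobs the node can take


def pick_node(nodes: Sequence[Node]) -> Node:
    """Return the healthy node with the lowest utilization."""
    candidates = [n for n in nodes if n.healthy and n.active_jobs < n.capacity]
    if not candidates:
        raise RuntimeError("no healthy node with spare capacity")
    return min(candidates, key=lambda n: n.active_jobs / n.capacity)


nodes = [
    Node("gpu-a", healthy=False, active_jobs=0, capacity=8),  # down, so skipped
    Node("gpu-b", healthy=True, active_jobs=6, capacity=8),
    Node("gpu-c", healthy=True, active_jobs=2, capacity=8),
]
print(pick_node(nodes).name)
```

The point of the design is that an unhealthy node simply drops out of the candidate list, so losing one node shrinks capacity instead of stopping every agent.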

Task routing is just as important. When a system pushes a simple data lookup through the same pipeline as a complex multi-step reasoning job, bottlenecks show up the moment load picks up. Matching tasks to the right resources based on weight and urgency keeps the whole system responsive even during demand spikes.
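One simple way to picture this is a router that keeps separate lanes for light and heavy work and orders each lane by urgency. The sketch below is illustrative only: `Router`, `Task`, and the two-lane split are assumptions made for the example, not a description of any particular product.

```python
import heapq
import itertools
from dataclasses import dataclass, field
from typing import Optional


@dataclass(order=True)
class Task:
    urgency: int                       # lower number = more urgent
    seq: int                           # tie-breaker: preserves submission order
    name: str = field(compare=False)


class Router:
    """Keeps light and heavy tasks in separate priority queues."""

    def __init__(self) -> None:
        self._lanes = {"light": [], "heavy": []}
        self._counter = itertools.count()

    def submit(self, name: str, urgency: int, heavy: bool) -> None:
        lane = "heavy" if heavy else "light"
        heapq.heappush(self._lanes[lane], Task(urgency, next(self._counter), name))

    def next_task(self, lane: str) -> Optional[str]:
        """Pop the most urgent task from a lane, or None if the lane is empty."""
        queue = self._lanes[lane]
        return heapq.heappop(queue).name if queue else None


router = Router()
router.submit("customer-lookup", urgency=2, heavy=False)
router.submit("multi-step-plan", urgency=1, heavy=True)
router.submit("fraud-check-lookup", urgency=0, heavy=False)
print(router.next_task("light"))
print(router.next_task("heavy"))
```

Because the lanes are drained by separate workers, a backlog of long reasoning jobs cannot delay a quick lookup.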

Fault tolerance has to be built in from the start. Most enterprise AI systems just log failures for someone to review later, while resilient systems handle them automatically through circuit breakers, retry logic that routes around failed nodes, and self-healing workflows that pick up from the last successful step.
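To make the last two ideas concrete, here is a small sketch of retry logic combined with resuming from the last successful step. It is a simplified illustration under stated assumptions: `run_workflow`, the in-memory `checkpoint` dict, and the example steps are all hypothetical, and a production system would persist checkpoints durably, back off between retries, and add a circuit breaker, which is omitted here.

```python
from typing import Callable, Dict, List, Tuple

Step = Tuple[str, Callable[[dict], dict]]


def run_workflow(steps: List[Step], state: dict,
                 checkpoint: Dict[str, dict], max_attempts: int = 3) -> dict:
    """Run steps in order, retrying failures and skipping completed steps."""
    for name, fn in steps:
        if name in checkpoint:
            # Step finished in an earlier run: reuse its saved state.
            state = checkpoint[name]
            continue
        last_error = None
        for _ in range(max_attempts):
            try:
                state = fn(dict(state))   # pass a copy so saved states stay intact
                break
            except Exception as exc:
                last_error = exc
        else:
            raise RuntimeError(
                f"step {name!r} failed after {max_attempts} attempts"
            ) from last_error
        checkpoint[name] = state          # record the last successful step
    return state


calls = {"fetch": 0}


def fetch(state: dict) -> dict:
    calls["fetch"] += 1
    if calls["fetch"] == 1:
        raise ConnectionError("transient failure")  # simulated outage
    state["rows"] = 3
    return state


def summarize(state: dict) -> dict:
    state["summary"] = f"{state['rows']} rows"
    return state


checkpoint: Dict[str, dict] = {}
result = run_workflow([("fetch", fetch), ("summarize", summarize)], {}, checkpoint)
print(result)
```

In this example the first `fetch` attempt fails, the retry succeeds, and a later run with the same `checkpoint` skips both steps instead of repeating them.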

The same goes for output quality. Agents that cannot tell when their own results have gone off track or when their data has gone stale will eventually need someone to step in and clean up.
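A very small version of that self-check might look like the sketch below. The function names, the required-field check, and the 15-minute freshness window are assumptions chosen for illustration; real quality checks would be specific to the task.

```python
from datetime import datetime, timedelta, timezone
from typing import Iterable, List, Optional


def is_stale(fetched_at: datetime, max_age: timedelta,
             now: Optional[datetime] = None) -> bool:
    """True when the data is older than the allowed freshness window."""
    now = now or datetime.now(timezone.utc)
    return now - fetched_at > max_age


def output_problems(output: dict, required: Iterable[str]) -> List[str]:
    """List required fields that are missing or empty in an agent's output."""
    return [key for key in required if not output.get(key)]


now = datetime(2025, 1, 1, 12, 0, tzinfo=timezone.utc)
fetched = now - timedelta(minutes=40)
print(is_stale(fetched, timedelta(minutes=15), now=now))
print(output_problems({"summary": "ok", "owner": ""}, ["summary", "owner"]))
```

An agent that runs checks like these before acting can refetch or retry on its own instead of handing a bad result to a person.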

Here’s what has to change before agents can truly run on their own

Most of the energy around AI agents has gone into the models themselves: how well they reason, how many parameters they carry, and how they score on benchmarks. That focus makes sense given how fast models have improved, but it leaves the harder problem almost completely untouched.

The models are already good enough for a wide range of autonomous work. The infrastructure they run inside is what keeps falling short, and organizations that want agents to actually operate around the clock need to put the same effort into operational resilience that they currently reserve for model selection.

In practice, that means designing for failure and recovery from the very first architecture decision, measuring success by uptime rather than benchmark scores, and actually studying industries like gaming that solved continuous autonomous operation years ago.

Right now, the industry is pouring billions into making models smarter while the systems those models depend on still cannot stay up through the night. That will not change until infrastructure gets the same budget, the same talent, and the same executive attention that model development currently enjoys.