For years, the enterprise AI infrastructure playbook has been deceptively simple: standardise on a single chip architecture, deploy at scale, and trust that performance justifies the premium. That approach emerged from enterprise data centres, but it no longer reflects how AI is actually deployed.

In 2026, AI workloads span everything from embedded systems in robots and sensors to AI-enabled desktops and diverse enterprise deployments. Each of these workloads places different demands on silicon. The one-size-fits-all approach collapses when confronted with this reality. The heterogeneous silicon era has arrived.

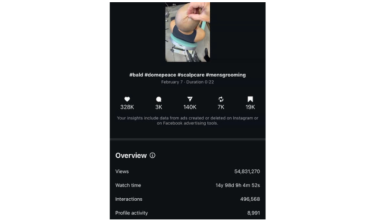

CES 2026 provided the clearest market signal yet. AMD unveiled its Ryzen AI 400 series with explicit workload-specific positioning across client and edge use cases. NVIDIA introduced its Rubin platform, optimised for agentic AI, alongside continued expansion of its Grace CPU and Blackwell GPU architectures. These announcements acknowledge what production deployments have already proven: different AI workloads have fundamentally different infrastructure requirements.

The advantage lies not in choosing between vendors, but in leveraging architectural diversity within NVIDIA’s broadening portfolio, within AMD’s expanding range of CPUs and GPUs, and strategically across both.

The inference economics problem nobody discusses

Single-architecture strategies offered the appearance of simplicity: bundled infrastructure, integrated tooling, and the promise that everything would work together seamlessly. For enterprises under pressure to deploy AI quickly, this convenience proved irresistible.

But convenience obscures constraint. The cost gap between training and inference infrastructure becomes brutal at scale, and most enterprises only discover this after committing to a single architecture. Training foundation models justifies premium GPUs at £30,000 per unit when you train once or infrequently. Inference economics work completely differently: millions of daily requests, where latency matters and energy costs compound. Running inference on expensive training infrastructure haemorrhages cash, yet switching architectures becomes increasingly difficult as entire systems are engineered around specific chip designs.
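To make the amortisation argument concrete, the sketch below estimates hardware cost per million inference requests. Only the £30,000 unit price comes from the paragraph above; the service life, throughput, power draw and electricity tariff are hypothetical placeholders, and the model deliberately ignores cooling, networking, hosting and staff. The `Accelerator` class and both profiles are illustrative assumptions, not descriptions of any real product.

```python
from dataclasses import dataclass

HOURS_PER_YEAR = 24 * 365


@dataclass(frozen=True)
class Accelerator:
    """Illustrative hardware profile; every figure passed in is an assumption."""
    name: str
    unit_price_gbp: float       # purchase price per unit
    service_life_years: float   # straight-line amortisation period
    requests_per_second: float  # sustained inference throughput per unit
    power_kw: float             # average power draw, assumed constant


def cost_per_million_requests(acc: Accelerator, energy_gbp_per_kwh: float) -> float:
    """Amortised capital plus energy cost (GBP) to serve one million requests.

    Simplifications: the unit runs flat out for its whole service life, and
    cooling, networking, hosting and staff costs are ignored.
    """
    hours = acc.service_life_years * HOURS_PER_YEAR
    lifetime_requests = acc.requests_per_second * 3600 * hours
    energy_gbp = acc.power_kw * hours * energy_gbp_per_kwh
    return (acc.unit_price_gbp + energy_gbp) / lifetime_requests * 1_000_000


# Hypothetical profiles: only the £30,000 price comes from the article.
training_gpu = Accelerator("training-class GPU", 30_000, 4, 200, 1.0)
inference_part = Accelerator("inference-optimised part", 10_000, 4, 400, 0.3)

for acc in (training_gpu, inference_part):
    print(f"{acc.name}: £{cost_per_million_requests(acc, 0.25):.2f} per million requests")
```

With these placeholder inputs the loop prints roughly £1.54 versus £0.25 per million requests, but the figures say nothing about real hardware; the value of the exercise is plugging in throughput and tariffs measured in your own deployment.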

Enterprises running customer-facing chatbots or real-time analytics generate so many daily requests that inference costs swallow their AI budgets. Moving these workloads to specialised inference chips changes unit economics from unprofitable to sustainable. Similarly, edge deployments in robotics or sensor networks require purpose-built silicon optimised for power efficiency and real-time processing, while desktop users running AI-enabled applications benefit from architectures designed for local acceleration. This is the difference between AI projects that scale and those that get quietly shelved when the infrastructure bill arrives. It is also the difference between setting your own innovation schedule and letting a single provider’s roadmap dictate it.

Hardware diversity only works with software maturity

The heterogeneous deployment framework emerging in 2026 positions AMD and NVIDIA as foundational infrastructure providers, with specialists like Cerebras and Groq filling distinct performance niches. NVIDIA maintains dominance in training and general-purpose compute, where the CUDA ecosystem gives it a formidable advantage. AMD increasingly captures workload-specific deployments where price-to-performance, open software stacks, and architectural flexibility create compelling alternatives across both inference and client-side AI. Cerebras delivers unprecedented efficiency for large-model training with its wafer-scale architecture. Groq excels at high-throughput, low-latency inference through deterministic execution.

This diversification only becomes viable because the tooling problem has been solved. Historically, deploying mixed silicon environments meant wrestling with incompatible toolchains and operational complexity that negated any hardware advantages. Managing separate deployment pipelines for each chip architecture created an infrastructure nightmare that made single-architecture strategies the path of least resistance, regardless of the cost penalties.

That barrier has collapsed through open standards and vendor-neutral platforms. Agentic AI tooling such as n8n (workflow orchestration) and Arize (observability and evaluation), combined with inference platforms like Fireworks and Baseten, provides a neutral layer between workloads and the underlying infrastructure. Dev teams can orchestrate across heterogeneous environments without rewriting code for each architecture, protecting strategic flexibility whilst avoiding the lock-in that has historically made diversification impractical.
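The decoupling described above can be sketched as a thin routing layer: application code asks for a workload by name, and a registry decides which silicon pool serves it. This is a minimal illustration under stated assumptions, not the API of n8n, Arize, Fireworks, Baseten or any other product; `InferenceBackend`, `StubBackend`, `WorkloadRouter` and the pool names are all hypothetical.

```python
from typing import Dict, Protocol


class InferenceBackend(Protocol):
    """Hypothetical vendor-neutral contract that every backend adapter satisfies."""

    def generate(self, prompt: str) -> str: ...


class StubBackend:
    """Placeholder adapter; a real one would call a vendor SDK or HTTP endpoint."""

    def __init__(self, pool: str) -> None:
        self.pool = pool

    def generate(self, prompt: str) -> str:
        return f"[{self.pool}] {prompt}"


class WorkloadRouter:
    """Maps workload names to backends so application code never names a chip."""

    def __init__(self) -> None:
        self._routes: Dict[str, InferenceBackend] = {}

    def assign(self, workload: str, backend: InferenceBackend) -> None:
        # Re-assigning a workload moves it to new silicon without touching callers.
        self._routes[workload] = backend

    def run(self, workload: str, prompt: str) -> str:
        if workload not in self._routes:
            raise KeyError(f"no backend assigned to workload {workload!r}")
        return self._routes[workload].generate(prompt)


router = WorkloadRouter()
router.assign("support-chatbot", StubBackend("gpu-cluster"))
before = router.run("support-chatbot", "Where is my order?")
router.assign("support-chatbot", StubBackend("inference-pool"))
after = router.run("support-chatbot", "Where is my order?")
print(before)
print(after)
```

The caller's `router.run("support-chatbot", ...)` line is identical before and after the move; only the assignment changes. That is the property that turns a hardware migration into a configuration change rather than a rewrite.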

These platforms transform hardware diversity from an operational burden into a competitive advantage. When you can test multiple use cases simultaneously, integrate business feedback quickly, and accelerate time-to-market without infrastructure constraints becoming bottlenecks, silicon diversity stops being a technical decision and becomes a strategic one. Teams experiment with different GPU configurations, measure real-world performance and costs, then optimise without rearchitecting their entire stack or limiting themselves to a single architectural approach.
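Once benchmarks exist, the measure-then-optimise loop reduces to a simple selection rule: among the configurations that meet a latency target, pick the cheapest. The sketch below assumes p95 latency as the service-level metric and cost per million requests as the objective; the `Measurement` schema, the configuration labels and every figure are hypothetical placeholders rather than real benchmark data.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass(frozen=True)
class Measurement:
    """One benchmark run of a workload on a candidate configuration (hypothetical schema)."""
    config: str
    p95_latency_ms: float
    cost_per_million_gbp: float


def cheapest_within_slo(results: List[Measurement], max_p95_ms: float) -> Optional[Measurement]:
    """Return the lowest-cost configuration that meets the latency target, or None."""
    eligible = [m for m in results if m.p95_latency_ms <= max_p95_ms]
    return min(eligible, key=lambda m: m.cost_per_million_gbp, default=None)


# Placeholder numbers standing in for your own benchmark results.
runs = [
    Measurement("config-a", p95_latency_ms=180.0, cost_per_million_gbp=1.50),
    Measurement("config-b", p95_latency_ms=240.0, cost_per_million_gbp=0.40),
    Measurement("config-c", p95_latency_ms=150.0, cost_per_million_gbp=0.90),
]

choice = cheapest_within_slo(runs, max_p95_ms=200.0)
print(choice.config if choice else "no configuration meets the target")
```

Here `config-b` is cheapest overall but misses the 200 ms target, so `config-c` wins. Real decisions usually weigh more dimensions (throughput headroom, energy, availability), but keeping the rule explicit makes it easy to re-run whenever a new silicon option is benchmarked.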

Neocloud providers complement this shift by offering transparent, performance-based pricing rather than bundled packages. Without the artificial scarcity and rationed access that characterise hyperscaler allocations, organisations can diversify infrastructure, reduce risk, and secure competitive terms whilst innovating on their own schedules rather than queuing for compute access.

The workloads driving diversification first

Enterprises no longer ask which single GPU vendor to standardise on. They ask which combinations of silicon optimise their specific workload mix – across edge, desktop, inference, and training – and the adoption sequence is predictable.

High-volume inference applications lead the charge because cost pressures surface immediately. Customer-facing applications generating millions of daily requests make inference economics impossible to ignore. Organisations move these workloads to specialised inference chips whilst maintaining NVIDIA infrastructure for training and experimentation. The cost savings are immediate and quantifiable.

Agentic AI deployments follow closely behind. Autonomous agents orchestrating complex workflows require sustained reasoning capabilities, multi-step planning, and reliable execution. These workload patterns benefit from purpose-built architectures like NVIDIA’s Rubin platform, which targets requirements that differ from both pure training and inference. Early adopters establish dedicated infrastructure for agentic workloads rather than forcing them onto existing clusters designed for different performance characteristics.

Specialised research and development initiatives round out the first wave. Organisations exploring novel architectures or pushing performance boundaries experiment with Cerebras for training efficiency gains or Groq for inference optimisation. These deployments generate the production data that informs broader infrastructure decisions across the business.

Why 2026 is the tipping point

The silicon monoculture sustained itself through ecosystem lock-in and the illusion of simplicity. Standardising on a single chip architecture appeared efficient, but that convenience came at the cost of strategic autonomy.

As production deployments accumulate real performance data, the economic advantages of heterogeneous strategies become impossible to ignore, whilst the constraints of single-architecture dependency become impossible to justify. The silicon exists. The software platforms have matured. The cost pressures demand change.

Enterprises finally have the operational tools and economic pressure to deploy silicon that matches their actual workloads rather than whatever vendor lock-in dictates. That shift moves from early adopters to mainstream practice in 2026, driven by CFOs reviewing infrastructure costs and demanding better answers, and by CTOs recognising that strategic independence requires infrastructure diversity.

The monoculture era ends when businesses stop constraining their strategic future to a single chip architecture, and instead use the full spectrum of silicon diversity now available across the industry.