Most healthcare AI is judged as if medicine were a search problem: feed in symptoms, retrieve the right answer, measure accuracy. Real medicine does not work that way. Doctors make a chain of decisions under uncertainty, time pressure, and imperfect signals, and outcomes often depend on the path they take, not just the final label.

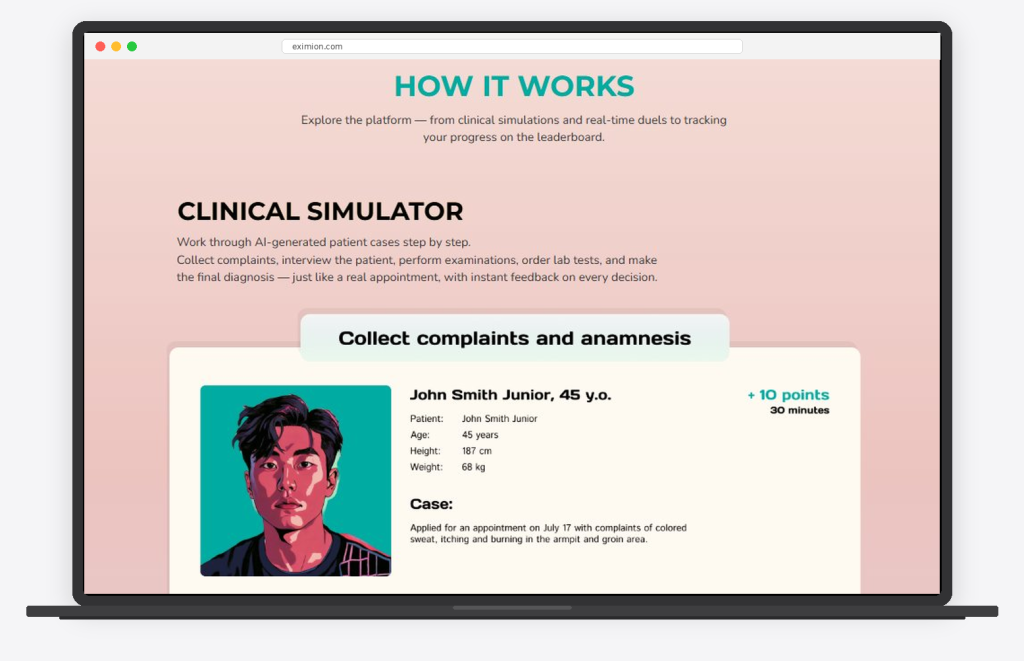

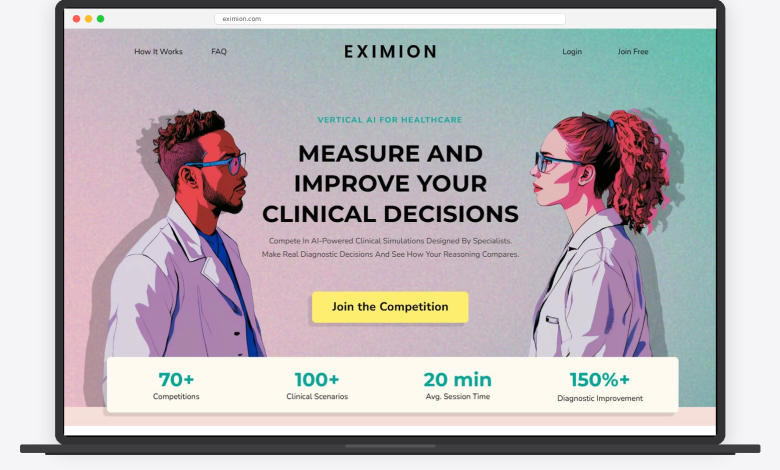

That gap is exactly what Eximion is built to address. Eximion is a vertical AI platform that runs competitive, high-fidelity clinical simulations, structured as Medical Olympics for physicians. Doctors work through uncertainty-rich patient cases and head-to-head diagnostic duels. As they move through a case, the system captures the decision pathway: what they ask first, what they test, what they rule in or out, when they escalate, and when they revise. The output is measurable clinical decision behavior, meaning how doctors reason when the case does not match the textbook.

If vertical AI in healthcare is going to improve outcomes, it needs this measurement layer, because the missing metric is not more data. It is decision behavior.

Why accuracy alone does not describe medical work

A model can score well on benchmarks and still miss what doctors face in practice. Medical work includes triage, prioritization, escalation, and revisiting assumptions as new evidence appears. Those steps unfold over time, not in one snapshot. If evaluation focuses only on the final answer, it ignores how the answer was reached.

Medical performance is also context-dependent. The same patient presentation can lead to different defensible pathways depending on resources, timing, and risk tolerance. A measurement approach that ignores decision pathways risks overvaluing outputs while undervaluing how decisions are formed under pressure.

What clinical decision behavior means in practice

Clinical decision behavior is the observable pattern of how a doctor reasons through a case over time. It includes how the differential is built, how uncertainty is held or narrowed, and what triggers a shift in direction. It also includes what is prioritized first and what is deferred. This is not about judging doctors. It is about measuring the decision sequence.

A practical way to think about it is the reasoning trace each case produces: which hypotheses surfaced early, what evidence was sought first, and how new signals changed the plan. When that trace can be observed consistently, it becomes possible to compare decision making across cases and settings.
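To make the idea of a reasoning trace concrete, here is a minimal sketch of how one might be recorded as an ordered list of events. It is an illustration only, not Eximion's actual schema: the class names, the action labels (taken from the decision steps described earlier), and the example case are all hypothetical.

```python
from dataclasses import dataclass, field
from typing import List, Optional


@dataclass
class TraceEvent:
    step: int      # 1-based position in the decision sequence
    action: str    # hypothetical labels: "ask", "test", "rule_in", "rule_out", "escalate", "revise"
    target: str    # what the action was about (a question, a test, a hypothesis)


@dataclass
class ReasoningTrace:
    case_id: str
    events: List[TraceEvent] = field(default_factory=list)

    def record(self, action: str, target: str) -> None:
        """Append an event, numbering it by its order of arrival."""
        self.events.append(TraceEvent(len(self.events) + 1, action, target))

    def first_step(self, action: str, target: str) -> Optional[int]:
        """Return the step at which (action, target) first occurred, or None."""
        for event in self.events:
            if event.action == action and event.target == target:
                return event.step
        return None

    def action_sequence(self) -> List[str]:
        """Return the ordered list of action labels, ignoring targets."""
        return [event.action for event in self.events]


# Invented example case, for illustration only.
trace = ReasoningTrace("case-001")
trace.record("ask", "travel history")
trace.record("test", "CBC")
trace.record("rule_in", "viral infection")
trace.record("revise", "viral infection")
trace.record("rule_in", "autoimmune disorder")
```

With traces stored this way, questions such as "at which step did the eventual hypothesis first appear?" reduce to a lookup like `trace.first_step("rule_in", "autoimmune disorder")`, which can then be compared across physicians or cases.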

The limits of passive measurement environments

Many environments used to evaluate medical performance are passive by design. They reward completion, attendance, or exposure to information. Those metrics can reflect engagement, but they do not capture the decision sequence itself. If an environment does not force choices, it cannot reveal how choices are made.

A doctor can understand a guideline and still struggle when a patient does not fit the expected pattern. A physician can recognize a condition in isolation and still miss it when competing signals, noise, and time pressure enter the scene. Passive environments rarely reproduce those conditions in a consistent way. That leaves a gap between what is taught and what is measurable.

Why competitive simulation produces clearer signals

If decision behavior is the target, the environment must reliably generate decisions. Simulation is one of the few environments where cases can be structured to create repeated decision points. It can also be designed to surface where uncertainty collapses too early, or where key cues are discounted. That is measurement, not just practice.
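As one hedged illustration of what "uncertainty collapses too early" could look like as a measurable signal, the sketch below flags a case where the differential shrinks to a single hypothesis before a minimum number of decision steps. The function name, the input format (differential size after each step), and the threshold are assumptions made for this example, not a validated clinical rule or Eximion's method.

```python
from typing import List, Optional


def premature_closure_step(
    differential_sizes: List[int],
    min_evidence_steps: int = 3,  # hypothetical threshold, not clinically validated
) -> Optional[int]:
    """Return the 1-based step at which the differential first narrowed to one
    hypothesis (or fewer), if that happened before `min_evidence_steps`.
    Return None if it never narrowed that far or narrowed late enough."""
    for step, size in enumerate(differential_sizes, start=1):
        if size <= 1:
            return step if step < min_evidence_steps else None
    return None


# Differential size after each decision step in two invented cases.
early = premature_closure_step([4, 1, 1, 1])      # narrows at step 2
gradual = premature_closure_step([5, 4, 3, 2, 1])  # narrows at step 5
```

A real measurement would need a defensible definition of the differential and of "too early" for each case type; the point here is only that the signal becomes computable once the decision sequence is recorded.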

Competitive simulation adds focus and comparability. Time-bound progression and branching cases can mirror pressure while avoiding patient risk. Repetition across varied scenarios can produce a clearer picture of how medical reasoning behaves when the signals are incomplete.

In Eximion’s model, simulations are not treated as learning content. They are treated as instrumentation, a way to repeatedly generate decision points and measure how decision making changes over time.

Rare disease and diagnostic uncertainty as the flagship stress test

Rare disease is a high-signal use case for measuring decision behavior because it concentrates uncertainty. Presentations can be nonspecific, early symptoms overlap with common conditions, and the correct hypothesis may not appear early. The pathway to diagnosis often depends on recognizing when a case is not behaving like the most obvious explanation. That is a reasoning challenge more than a data challenge.

Diagnostic uncertainty is not an edge case in medicine. It is the daily condition doctors operate within, especially when symptoms are ambiguous, fragmented, or evolving. Rare disease magnifies the problem and makes the reasoning pathway easier to study. If an evaluation framework performs well only in typical cases, it is not describing real clinical readiness.

What vertical AI for healthcare should mean

Vertical AI for healthcare should not mean a generic system with clinical vocabulary layered on top. It should mean systems built around the constraints of medicine, including uncertainty, time pressure, and high-stakes tradeoffs.

In a true vertical definition, the unit of value is not only a prediction. It is a measurable improvement in how decisions are formed, tested, and revised. This definition elevates clinical decision behavior as a core metric. If an AI system cannot account for how decisions unfold, it is not fully aligned with medical work. If it can, it becomes possible to support reasoning quality rather than only producing answers.

What should change in how healthcare AI is evaluated

First, evaluation should include decision pathways, not only final outcomes. Outcomes matter, but the pathway explains what holds up under pressure and what breaks in ambiguity.

Second, simulation should be treated as a measurement environment, not merely an educational add-on. It is one of the few settings where uncertainty can be designed, repeated, and compared.

Third, healthcare AI should be tested in uncertainty-rich conditions, not only typical cases. Rare disease is one example, but the broader category is diagnostic ambiguity. If a system cannot support reasoning there, it is not ready for the settings where support is most needed.

Eximion: What to do now

Healthcare AI will mature when we measure what truly drives outcomes in clinical settings: how decisions are formed, challenged, and revised under pressure. Clinical decision behavior is the missing metric that brings evaluation closer to practice, and it clarifies what vertical AI for healthcare should stand for.

If we want better outcomes, we should stop treating accuracy as the finish line. The next step is to build measurement standards that reflect real medical decision making, and to fund the tools and programs that can prove they improve it.

Learn more about Eximion at https://eximion.com/.

About the Author

Mike Litvinenko is the Founder of Eximion, a vertical AI platform that uses competitive clinical simulations to measure and improve how doctors make decisions under diagnostic uncertainty. He built Eximion after living through a rare disease diagnostic maze firsthand, which shaped his focus on shortening the time it takes for patients to reach a correct diagnosis. Eximion runs Medical Olympics-style physician competitions that generate decision pathway data, helping surface where diagnostic reasoning breaks in real-world conditions. Mike writes and speaks about vertical AI in healthcare, physician decision behavior, and the future of human and AI collaboration in medicine.