Pretty much every engineering team I’ve talked to in the last six months has gone through the same arc with agent frameworks. Someone on the team sees a demo — Claude running an agent loop, Codex writing code, something with memory and tool use — and decides to self-host the open-source runtime. Three weeks later the team has a prototype. Three months later they’re quietly discussing whether to just pay someone else to run the thing.

It’s not that the agents don’t work. They work fine. The problem is everything around the agent.

Running an agent runtime is an ops job, not a deploy-and-forget job

When you pull down an open-source agent runtime and spin it up on your own server, here is what you actually signed up for:

A server that has to stay healthy across memory-heavy inference workloads. Environment configuration that changes with every release, because agent tooling is young and breaking changes ship weekly. Five separate LLM subscriptions, because serious agents no longer run on one model: Opus 4.6 handles long-horizon reasoning, GPT-5.3 Codex dominates on code refactors, Gemini 3.1 Pro is the cheapest option for long-context retrieval, KIMI K2.5 is the quiet leader on vision-first workflows, and GLM-5.1 is what you actually want for Chinese-language work. Each of those is its own billing account, its own quota management, its own rate-limit surface. Messaging integrations for the apps your users actually want: WhatsApp, Telegram, Slack, Discord. And updates. When the upstream framework ships a release, you are the release engineer now.

The first two months you feel very clever. By month six you realize you just built a worse version of somebody else’s managed product, and your smartest engineer spent a quarter keeping it alive.

The five-subscription problem

Subscription sprawl is the part nobody flags at the planning meeting. “We’ll add API keys to the .env file” sounds trivial. In practice it means five payment methods on five invoicing cycles, five usage dashboards that nobody has agreed to own, five quota negotiations when the team grows past starter limits, five credential rotations per security review, and one finance person signing off on five invoices for what should have been one line item.

That is before anyone writes a routing policy to decide which model handles which request. Routing policy is the interesting work. Everything above it is plumbing.
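To make “routing policy” concrete, here is a minimal sketch of the simplest possible version: a static lookup from task type to model. The model labels are the ones named earlier in this post; the task categories, the `Request` type, the `route` function, and the fallback choice are all hypothetical names invented for illustration, not part of any real framework.

```python
from dataclasses import dataclass

# Assumption: one model per task category, mirroring the split described above.
# The category keys are made up for this sketch.
ROUTES = {
    "long_horizon_reasoning": "Opus 4.6",
    "code_refactor": "GPT-5.3 Codex",
    "long_context_retrieval": "Gemini 3.1 Pro",
    "vision": "KIMI K2.5",
    "chinese_language": "GLM-5.1",
}

# Assumption: unknown task types fall back to a single general-purpose model.
DEFAULT_MODEL = "Opus 4.6"


@dataclass(frozen=True)
class Request:
    task: str    # one of the category keys above, set by the caller
    prompt: str  # the user's text; unused by this static policy


def route(request: Request) -> str:
    """Return the model label that should handle this request."""
    return ROUTES.get(request.task, DEFAULT_MODEL)


if __name__ == "__main__":
    print(route(Request(task="code_refactor", prompt="Rename this module")))
    print(route(Request(task="something_else", prompt="Hello")))
```

A real policy would also weigh cost, latency, and quota headroom, which is exactly why it is the interesting part. Note that even this toy version assumes all five sets of credentials are already provisioned and working.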

What managed deployment actually changes

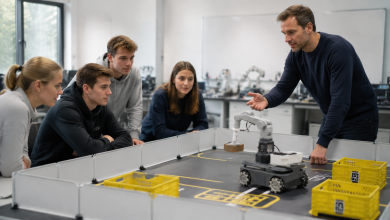

The quiet trend across the last six months is that teams that tried self-hosting are migrating to managed agent deployments — services where someone else runs the agent runtime, keeps it patched, and bundles the model access into a single subscription.

A team deploying OpenClaw today, for example, gets a one-click cloud deployment of the OpenClaw agent with all five of the frontier model APIs already provisioned inside the plan. No env file to hand-edit. No five invoices on procurement’s desk. No server for engineering to babysit. The agent is running on the day the team signs up, and it is reachable from the messaging apps those teams already use.

That shift is what “managed” actually buys you. It is not the hosting in isolation — hosting is cheap. It is the fact that hosting, model access, messaging integrations, and framework upgrades collapse into one recurring bill that somebody else is on the hook to keep green.

Where the user actually lives

There is a second reason managed deployment is winning, and it is about surface area.

The team running an agent internally tends to want a web UI, because that is the obvious frontend. The team using an agent internally does not. They want it in Slack, where they already live. Sales wants it in WhatsApp. Support wants it in a ticketing thread. A CEO wants it in Telegram because that is where their group chats happen.

A one-click managed OpenClaw deployment ships with those frontends as part of the product. The agent runs in the cloud; the user’s experience is wherever they already type. That is the right shape for a tool most employees encounter through a chat window, not a dashboard.

When self-hosting still wins

To be fair: not every team should outsource the runtime. If your data cannot leave a specific region for regulatory reasons, you want the open-source runtime inside your own boundary. If you already have a strong platform team and an existing agent stack you are extending, the marginal cost of running one more service is low. If your workload is narrow enough that one model handles all of it, the five-subscription problem doesn’t apply.

But those are specific shapes. For the modal team (six to twenty engineers, mixed workloads, a handful of business functions that each want their own chatbot), the math has moved. The cost of self-hosting is not the server. It is the quarter of someone’s time you spend keeping it alive, and the five vendor relationships you spend another quarter managing.

The punchline

The phase where every team runs its own agent runtime is ending for roughly the same reason every team stopped running its own mail server. The framework is interesting. Keeping it running is not. And when the platform that runs it for you also bundles the model access that was going to be your next pain point, the decision makes itself.

If you are running a fleet of agents today, the useful question is not “which model is smartest.” It is “who is going to keep this thing running at 3 a.m. on a Sunday, and are they on my payroll or theirs?”