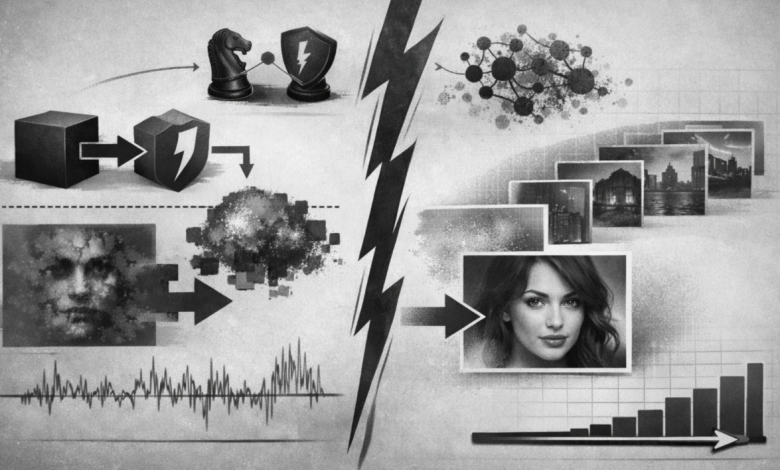

For most of the last decade, image synthesis was largely an adversarial affair. Generative Adversarial Networks revolutionized the field by demonstrating that a generator and a discriminator, trained in opposition to each other, could produce images of stunning realism. They offered researchers a path to sharp outputs and high visual fidelity. But they also came with well-known weaknesses: unstable optimization, sensitivity to hyperparameters, and the persistent danger of mode collapse. In other words, GANs could generate realistic examples, but they weren’t always the best way to learn a complete data distribution.

Diffusion models take a very different tack to the same problem. Rather than training a generator to fool a discriminator and produce an image in a single pass, they learn to reverse a gradual noising process: start from noise and iteratively remove it until structure emerges. That principle gives them a firmer probabilistic foundation, as well as training objectives that are far less temperamental than adversarial optimization. Early diffusion work already showed that the technique could produce high-quality samples, but at first it still seemed slower and less practical than the best GAN pipelines. The conceptual elegance was clear; the open question was whether that elegance would translate into a better model family in practice.
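To make "start with noise and iteratively remove it" concrete, here is a minimal sketch of DDPM-style (denoising diffusion probabilistic model) noising and ancestral sampling in plain Python. The linear beta schedule, the 1,000-step count, the function names, and the `oracle_noise` predictor are all illustrative assumptions. In a real system the noise predictor is a trained neural network; here the toy "dataset" is a single fixed vector, so a perfect predictor can be written in closed form.

```python
import math
import random


def linear_beta_schedule(num_steps, beta_start=1e-4, beta_end=0.02):
    """Noise variances beta_1..beta_T, linearly spaced (an illustrative choice)."""
    step = (beta_end - beta_start) / (num_steps - 1)
    return [beta_start + i * step for i in range(num_steps)]


def cumulative_alphas(betas):
    """alpha_bar_t = product over s <= t of (1 - beta_s)."""
    alpha_bars, running = [], 1.0
    for beta in betas:
        running *= 1.0 - beta
        alpha_bars.append(running)
    return alpha_bars


def add_noise(x0, t, alpha_bars, rng):
    """Forward process: jump straight to x_t ~ q(x_t | x_0); returns (x_t, noise)."""
    ab = alpha_bars[t]
    eps = [rng.gauss(0.0, 1.0) for _ in x0]
    xt = [math.sqrt(ab) * a + math.sqrt(1.0 - ab) * e for a, e in zip(x0, eps)]
    return xt, eps


def reverse_sample(predict_noise, dim, betas, alpha_bars, rng):
    """Reverse process: start from pure Gaussian noise and denoise one step at a time."""
    x = [rng.gauss(0.0, 1.0) for _ in range(dim)]
    for t in reversed(range(len(betas))):
        beta, ab = betas[t], alpha_bars[t]
        eps_hat = predict_noise(x, t)  # the model's estimate of the noise in x
        coef = beta / math.sqrt(1.0 - ab)
        mean = [(xi - coef * ei) / math.sqrt(1.0 - beta) for xi, ei in zip(x, eps_hat)]
        if t > 0:
            # Re-inject a little noise; sigma_t^2 = beta_t is one simple variance choice.
            x = [m + math.sqrt(beta) * rng.gauss(0.0, 1.0) for m in mean]
        else:
            x = mean  # the final step is deterministic
    return x


# Toy usage: a hypothetical "dataset" containing a single vector, TARGET.
TARGET = [0.5, -1.0, 2.0]
BETAS = linear_beta_schedule(1000)
ALPHA_BARS = cumulative_alphas(BETAS)


def oracle_noise(xt, t):
    """Hypothetical perfect noise predictor, possible only because x_0 is known."""
    ab = ALPHA_BARS[t]
    return [(xi - math.sqrt(ab) * x0) / math.sqrt(1.0 - ab) for xi, x0 in zip(xt, TARGET)]


rng = random.Random(0)
noisy, _ = add_noise(TARGET, len(BETAS) - 1, ALPHA_BARS, rng)  # almost pure noise
sample = reverse_sample(oracle_noise, len(TARGET), BETAS, ALPHA_BARS, rng)
print(sample)  # recovers TARGET up to floating-point error
```

The training objective in this style of model is simply to make the network's noise estimate match the `eps` returned by `add_noise`, a plain regression target rather than an adversarial game, which is one concrete reason the optimization is less temperamental.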

The real turn came when diffusion models stopped seeming merely competitive and started seeming better. The conversation changed when improved diffusion architectures began exceeding state-of-the-art GAN systems on standard image-synthesis benchmarks. Sample quality alone was not what mattered. More importantly, diffusion models were achieving comparable or better fidelity than GANs while also covering more of the data distribution and training more reliably. That combination is hard to ignore. A model family that is easier to scale, easier to condition on inputs, and less dependent on a fragile adversarial balance naturally becomes more attractive to researchers and engineers alike.

That is why this moment feels decisive. GANs earned their place because they were the most direct path to realism. Diffusion models are now assuming that role because they deliver the realism without forcing practitioners to wrestle with adversarial instability. The shift is as much architectural as it is empirical. Diffusion systems can trade fidelity against diversity through guidance at sampling time, and more recent work has shown that this can be done without a separately trained classifier. That matters because it turns conditioning into a general, controllable engineering tool rather than a fragile afterthought. The result is a model class that behaves less like a clever research trick and more like a general-purpose generative framework.
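Guidance without a separate classifier is usually done through classifier-free guidance: a single network is trained both with and without the conditioning signal, and at sampling time its two noise predictions are combined by extrapolation. The sketch below assumes the common form `eps_uncond + w * (eps_cond - eps_uncond)`; some papers write the equivalent `(1 + w) * eps_cond - w * eps_uncond`, which shifts the meaning of `w` by one. The function name and the plain-list representation are illustrative.

```python
def guided_noise(eps_uncond, eps_cond, guidance_scale):
    """Classifier-free guidance: move the noise prediction away from the
    unconditional estimate, toward (and past) the conditional one.

    guidance_scale = 0 -> purely unconditional prediction
    guidance_scale = 1 -> purely conditional prediction
    guidance_scale > 1 -> stronger adherence to the condition, usually at
                          some cost in sample diversity
    """
    if len(eps_uncond) != len(eps_cond):
        raise ValueError("noise predictions must have the same shape")
    return [u + guidance_scale * (c - u) for u, c in zip(eps_uncond, eps_cond)]


# Hypothetical noise predictions for a 2-element toy input.
eps_u = [0.0, 1.0]
eps_c = [1.0, 1.0]
print(guided_noise(eps_u, eps_c, 3.0))  # [3.0, 1.0]
```

Because each guided step needs both a conditional and an unconditional prediction, this roughly doubles the network compute per sampling step, even when the two are batched into one call.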

Beyond their capability in unconditional image generation, another reason diffusion models feel ascendant right now is that they are useful well beyond plain image generation. Text-conditioned synthesis, inpainting, and image editing have emerged as the principal use cases, and diffusion methods have adapted to all three with remarkable versatility. Guided diffusion proved highly competitive for text-to-image generation and editing. Hierarchical approaches built on latent embeddings showed that learned multimodal embeddings can serve as a strong link between text and image generation. Latent diffusion then made the whole framework computationally practical by performing denoising in a compressed representation space while maintaining high visual quality. That mix of controllability, editability, and efficiency is a nontrivial win over GANs. It changes what people expect a generative model to accomplish.
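A back-of-the-envelope calculation shows why denoising in a compressed space helps. The sketch assumes an autoencoder that downsamples each spatial side by a factor of 8 and produces 4 latent channels, which matches publicly released latent diffusion models such as Stable Diffusion; other autoencoders use different settings, and the function name is illustrative.

```python
def latent_compression_ratio(height, width, channels=3, downsample=8, latent_channels=4):
    """Ratio of pixel-space values to latent-space values the denoiser must process."""
    if height % downsample or width % downsample:
        raise ValueError("image size must be divisible by the downsampling factor")
    pixel_values = height * width * channels
    latent_values = (height // downsample) * (width // downsample) * latent_channels
    return pixel_values / latent_values


# A 512x512 RGB image has 786,432 values; its assumed 64x64x4 latent has 16,384.
print(latent_compression_ratio(512, 512))  # 48.0
```

The denoiser's per-step cost does not shrink by exactly this ratio, since that depends on the network architecture, but operating on far fewer values per step is where most of the efficiency gain comes from.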

So far, even at this early stage, the most powerful diffusion systems show that language conditioning and photorealism can scale together. This matters because it means the wizard behind the denoising curtain is not just a cleverly disguised generator, one that models a single kind of data in isolation with no information flowing between modalities. When a generative model is good enough at conditioning on semantics, producing coherent compositions and supporting editing workflows, it starts to claim ground that GANs never fully held. GANs were often at their most powerful when narrowly tuned to a particular output regime. Diffusion models, by contrast, keep getting better once conditioning, control, and adaptation to new tasks are factored in, and that is the direction in which most practical use cases are heading.

That does not mean GANs are now obsolete. They retain an advantage in sampling speed and can be preferable in niche domains where one-shot generation or low-latency inference matters more than flexibility. Generating in real time is nontrivial, and diffusion models still pay a penalty because sampling them is iterative. Even that weakness is already under attack through distillation and faster sampling methods, leaving sampling cost as the main practical question still open. When one model family leads on quality, coverage, controllability, and momentum, and its one core weakness is actively being eroded, it is safe to say the center of gravity has moved.

So the key to diffusion models outcompeting GANs was not that GANs started performing badly, but that our definition of a good generative model changed. The most competitive model today is not simply the one that can quickly produce a crisp-looking image. It is the one that scales, remains stable during training, captures the data distribution more faithfully, and absorbs conditioning in a principled way. As of October 2022, that description fits diffusion models better than adversarial ones. GANs changed the field; diffusion models are now rewriting many of the defaults GANs established.

Author bio

Jimmy Joseph is an engineer specializing in healthcare claims processing, payment integrity, applied machine learning, and scalable enterprise systems. His work focuses on building practical, audit-aware solutions that help healthcare organizations detect anomalous payment behavior, improve explainability, and deploy advanced machine learning within real-world privacy and operational constraints.