Enterprise adoption of artificial intelligence has moved rapidly from experimentation to operational deployment. Organizations now rely on AI systems to support decisions across finance, healthcare, supply chains, and customer operations. These systems increasingly function as analytical collaborators that help professionals interpret large volumes of data and surface relevant insights.

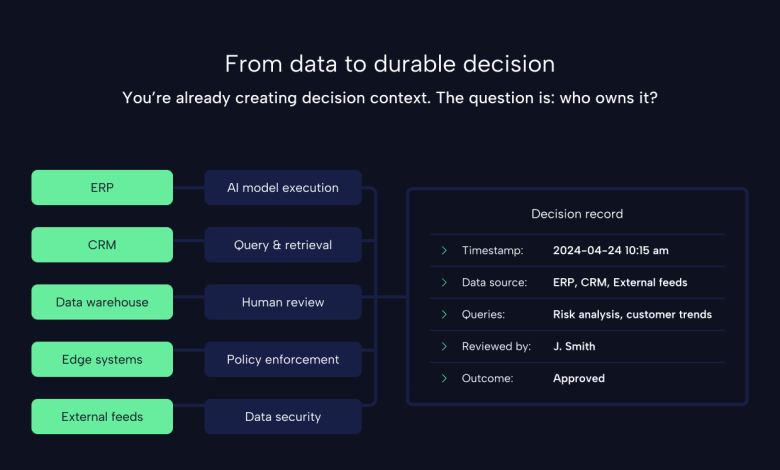

This progress has generated significant attention around model performance, data quality, and the emergence of autonomous agents. Yet an important dimension of enterprise AI remains underexamined. As AI becomes embedded within everyday workflows, organizations are generating large volumes of decision context that often remain uncaptured and ungoverned.

Understanding how decisions are formed when humans and AI collaborate is becoming central to the responsible operation of enterprise systems.

Every interaction between humans and AI produces reasoning context

When a professional consults an AI system, the interaction rarely consists of a single question followed by a single response. Most real-world workflows involve iterative analysis in which a user refines questions, compares signals from multiple data sources, and evaluates alternative interpretations before reaching a conclusion.

This sequence of inquiry produces a chain of reasoning that reflects how the decision emerged. The process reveals which data sources influenced the outcome, how conflicting signals were weighed, and how human judgment contributed to the final action.

Such reasoning constitutes decision context. It represents the institutional knowledge generated during the interaction between human expertise and machine-generated insight.

In many enterprise environments, this context disappears once the decision has been made.

Institutional reasoning is often lost after the decision is reached

Traditional enterprise systems are designed to capture the results of transactions and the lineage of data used to produce reports. They provide clear visibility into what occurred within a system and which datasets contributed to a particular output.

AI-assisted workflows introduce a different category of activity. Decisions emerge from a collaborative reasoning process that unfolds across prompts, data retrievals, and analytical exploration. Much of that reasoning takes place within conversational interfaces or analytical tools that were not designed to preserve the decision process itself.

The organization, therefore, retains the outcome while losing the reasoning that shaped it. Over time, this pattern leads to fragmented institutional memory.

The operational risk of fragmented decision knowledge

As AI becomes more deeply integrated into enterprise operations, the volume of these reasoning interactions grows rapidly. Analysts, clinicians, risk managers, and operators increasingly rely on AI systems as thought partners when evaluating complex situations.

Without mechanisms to preserve decision context, organizations lose the ability to analyze how decisions were formed across teams and time. Patterns of bias, inconsistent interpretation, or incomplete information may remain hidden. Teams may unknowingly repeat investigations that others have already conducted.

The absence of structured decision knowledge also complicates efforts to improve organizational judgment. When outcomes cannot be traced back to the reasoning that produced them, learning becomes significantly more difficult.

Trust in AI-assisted systems depends on the ability to understand how those systems influence decisions.

Model capability is no longer the primary constraint

During the initial stages of enterprise AI adoption, organizations focused primarily on improving model performance and expanding data access. These efforts remain important, yet many enterprises now possess models capable of generating useful insights in operational settings.

The challenge increasingly shifts toward governance and accountability. Leaders must demonstrate how decisions are formed, which sources of information influence outcomes, and how human oversight participates in the process.

These questions arise in operational reviews, regulatory inquiries, and internal improvement initiatives. A high-performing model offers limited value if the reasoning behind a decision cannot be reconstructed or examined.

As AI participation in decision-making expands, the transparency of reasoning processes becomes a critical component of enterprise trust.

Regulatory expectations increasingly emphasize traceability

Regulatory frameworks are beginning to reflect this shift in focus. The European Union Artificial Intelligence Act places strong emphasis on transparency, documentation, and accountability for high-risk AI systems. Organizations deploying such systems must demonstrate the factors that influence decisions and maintain records supporting those outcomes.

These requirements reinforce the importance of traceability within AI-assisted workflows. Regulators, auditors, and stakeholders expect visibility into the reasoning that leads to consequential decisions.

The need for decision transparency extends beyond compliance considerations. It reflects a broader expectation that organizations can explain how automated insights interact with human judgment in real operational environments.

Decisions are emerging as durable enterprise artifacts

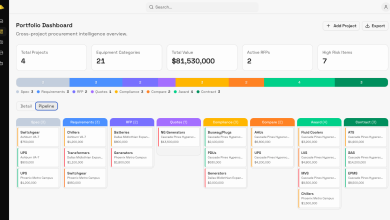

A growing number of organizations are beginning to view decisions in a new light. Rather than treating AI outputs as transient responses, they are recognizing decisions as artifacts that contain valuable institutional knowledge.

A decision artifact may include the signal that initiated the analysis, the datasets consulted during investigation, intermediate reasoning steps, and the final judgment applied by the responsible professional. When preserved in a structured form, these artifacts provide a detailed record of how an organization interprets information and applies expertise.
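As a rough illustration, the elements listed above could be held in a small structured record. The sketch below is only one possible shape, not a standard schema: the class names `DecisionArtifact` and `ReasoningStep`, every field name, and the supply-chain example values are hypothetical and chosen purely for illustration.

```python
from dataclasses import dataclass, field, asdict
from datetime import datetime, timezone
import json


@dataclass
class ReasoningStep:
    """One intermediate step in the analysis (hypothetical structure)."""
    actor: str    # "human" or "ai"; illustrative labels only
    summary: str  # short description of what was asked or concluded


@dataclass
class DecisionArtifact:
    """Hypothetical record of a single AI-assisted decision."""
    initiating_signal: str
    datasets_consulted: list[str] = field(default_factory=list)
    reasoning_steps: list[ReasoningStep] = field(default_factory=list)
    final_judgment: str = ""
    decided_by: str = ""
    decided_at: str = ""

    def finalize(self, judgment: str, decided_by: str) -> None:
        # Record the human judgment and a UTC timestamp for when it was made.
        self.final_judgment = judgment
        self.decided_by = decided_by
        self.decided_at = datetime.now(timezone.utc).isoformat()

    def to_json(self) -> str:
        # asdict() converts nested dataclasses to plain dictionaries.
        return json.dumps(asdict(self), indent=2)


# Illustrative values only; no real data is implied.
artifact = DecisionArtifact(
    initiating_signal="Supplier delivery delays exceeded threshold"
)
artifact.datasets_consulted += ["shipment_history", "supplier_scorecards"]
artifact.reasoning_steps.append(
    ReasoningStep("human", "Asked which suppliers drive the delays")
)
artifact.reasoning_steps.append(
    ReasoningStep("ai", "Flagged two suppliers with rising lead times")
)
artifact.finalize("Shift part of the volume to a backup supplier",
                  decided_by="ops_manager")
print(artifact.to_json())
```

Serializing to JSON is an assumption made for portability. The point is only that the signal, sources, intermediate steps, and final judgment travel together as one record.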

Such records support retrospective analysis and continuous improvement. Teams gain the ability to evaluate past decisions, identify recurring signals, and refine operational practices.

This perspective aligns with broader developments in governance and organizational learning. Leading organizations are moving toward adaptive governance models that embed oversight and continuous improvement directly within operational workflows.

Decision context forms a strategic layer above the model

AI technologies will continue to evolve. Enterprises will adopt new models as performance improves and specialized capabilities emerge across industries. The technical landscape will remain dynamic for the foreseeable future.

Within this environment, the reasoning processes surrounding AI-assisted decisions represent a more durable layer of enterprise knowledge. These processes capture the ways in which an organization interprets data, resolves ambiguity, and exercises judgment in complex situations.

Preserving this knowledge enables organizations to maintain continuity even as the underlying models change. The intelligence created through daily operational decisions remains an enterprise asset rather than a byproduct of a particular tool.

This strategic layer becomes increasingly valuable as AI adoption expands.

Infrastructure for decision governance

Effective governance requires more than policy statements or compliance frameworks. It requires infrastructure capable of capturing and organizing decision context as it occurs within operational workflows.

Such infrastructure allows organizations to observe how decisions unfold across teams and systems. It supports review, auditing, and improvement without interrupting the speed of modern analytical processes.
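One way to picture such infrastructure is as a lightweight, append-only log that records events while the analyst works, rather than asking for documentation afterward. The sketch below is a toy, in-memory version under that assumption. The `DecisionLog` class, its method names, the event kinds, and the identifiers in the usage example are all hypothetical, and a production system would need durable storage and access controls.

```python
import time
from contextlib import contextmanager


class DecisionLog:
    """Hypothetical in-memory, append-only log of decision events."""

    def __init__(self):
        self._events = []

    def record(self, decision_id, kind, detail):
        # Events are only ever appended, never edited after capture.
        self._events.append({
            "decision_id": decision_id,
            "kind": kind,
            "detail": detail,
            "ts": time.time(),
        })

    @contextmanager
    def decision(self, decision_id, signal):
        # Opens a decision, yields a note-taking function bound to it,
        # and records a "closed" event even if the workflow raises.
        self.record(decision_id, "opened", signal)
        try:
            yield lambda kind, detail: self.record(decision_id, kind, detail)
        finally:
            self.record(decision_id, "closed", "")

    def trace(self, decision_id):
        # All events for one decision, in the order they were captured.
        return [e for e in self._events if e["decision_id"] == decision_id]

    def decisions_using(self, dataset):
        # Which decisions consulted a given dataset, across teams and time.
        return sorted({
            e["decision_id"] for e in self._events
            if e["kind"] == "dataset" and e["detail"] == dataset
        })


# Illustrative usage with made-up identifiers.
log = DecisionLog()
with log.decision("D-1", "Unusual claim volume") as note:
    note("dataset", "claims_2024")
    note("judgment", "Escalate to fraud review")
with log.decision("D-2", "Claim backlog growing") as note:
    note("dataset", "claims_2024")

print([e["kind"] for e in log.trace("D-1")])
print(log.decisions_using("claims_2024"))
```

Because capture happens as a side effect of the workflow, review and audit queries such as `trace` and `decisions_using` can run later without slowing the analysis itself.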

Deloitte’s Tech Trends research highlights the emergence of adaptive governance approaches that integrate oversight directly into operational environments. In these models, governance evolves alongside the systems it monitors, enabling organizations to balance innovation with accountability. Similar principles appear in the NIST AI Risk Management Framework, which emphasizes continuous monitoring, traceability, and lifecycle governance for AI systems.

Capturing decision context provides an essential foundation for this form of governance.

A new category of enterprise asset

Artificial intelligence is reshaping how organizations create and interpret information. Data continues to provide the foundation for analytical systems, yet the reasoning applied to that data increasingly defines how value is generated.

Decision context captures that reasoning. It reflects the interaction between human expertise and machine-generated insight that guides real-world actions.

Organizations that preserve this knowledge gain the ability to learn from past decisions, improve operational judgment, and maintain transparency as AI participation expands. Those capabilities become increasingly important as AI systems influence outcomes across critical sectors of the economy.

The emerging blind spot in enterprise AI, therefore, concerns visibility into how decisions are made. Addressing that blind spot will play a central role in building trustworthy and accountable AI-driven organizations.

Author Bio

A strategic, transformational technologist and founder, David has driven innovation across public and private sectors by solving complex, high-impact challenges through advanced AI and data-driven solutions, including founding and scaling BOSS AI to Gartner Cool Vendor recognition. His work has directly informed U.S. national security and public health decision-making, from shaping the Department of Defense’s data and AI strategy at DARPA to delivering industry-leading intelligence to the White House and architecting the federal government’s first secure cloud platform integrating classified and open data.