A decade ago, the path to autonomous vehicles seemed clear: add more sensors, collect more data, and the technology would eventually converge on safety. The industry chased higher resolution, longer range, wider field of view. The assumption was that better sensors meant better autonomy.

In February 2026, a major autonomy developer proved otherwise. Its next-generation system deployed with more than 40% fewer sensors than the previous generation while still improving capabilities. Thirteen cameras instead of twenty-nine. Four lidars instead of five. The system didn’t just maintain performance with fewer sensors. It performed better.

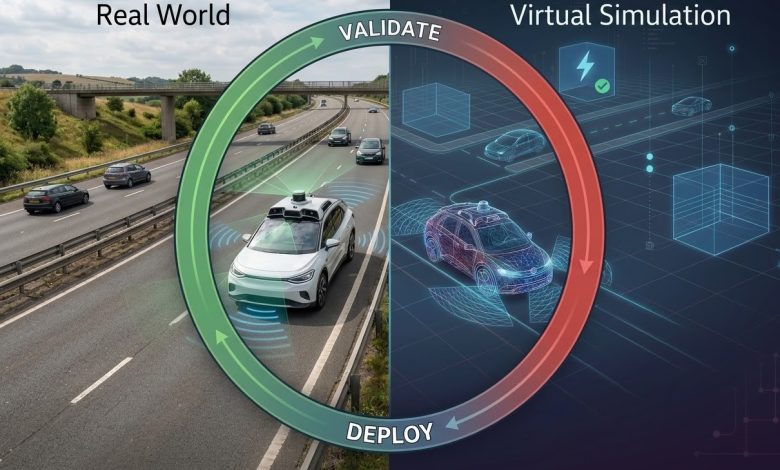

This is not a story about sensor optimization but about what makes optimization possible. The breakthrough wasn’t choosing better sensors. It was building the closed loop between sensor architecture, simulation-driven validation, and real-world learning that allowed the company to understand which sensors actually mattered and which were redundant.

Most autonomy programs treat sensor selection as a one-time design exercise. They optimize for resolution, range, and field of view, then freeze the architecture and move on. But architecture is not just what you sense. It is how you validate that sensing across millions of edge cases before deployment.

I have spent the last decade designing sensor architectures and validation pipelines across multiple generations of autonomous systems. A hands-free highway system that is now deployed on hundreds of thousands of vehicles. Two generations of truck sensor suites, from concept to public road deployment across two continents. Most recently, the next-generation perception system that enables fully autonomous ride-hailing at scale across major metropolitan cities. Through each program, one truth has become clear. The race to scale autonomy is not won only by choosing the right sensors. It is won by closing the loop between hardware, simulation, and reality.

The Sensor Architecture Fallacy

Early in my career, I worked on what would become one of the first mass-market hands-free driving systems. The program was ambitious: deliver a feature that could handle highway driving without driver intervention, launch across multiple vehicle lines, and compete with systems that had a head start of several years.

The natural instinct was to focus on hardware. Which cameras? How many radars? What resolution? These questions consumed countless meetings. Teams debated field of view coverage, redundancy requirements, and the optimal placement for every sensor.

But the hardest problems were not about the sensors themselves. They were about validation. How do you test a system across millions of miles of edge cases when physical testing is prohibitively slow and expensive? How do you catch integration bugs between perception, planning, and control before they reach test vehicles? How do you know which sensors are actually contributing to safety and which are just adding cost?

The answer was a simulation-driven validation pipeline that ran production software against virtual scenarios. We ingested camera and radar data, tested the full stack against stressing edge cases, and analyzed results to identify performance gaps. The pipeline caught more than ten issues before they ever reached test vehicles. It became the bridge between sensor architecture and deployment.
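To make the shape of such a pipeline concrete, here is a minimal sketch in Python. It is not the production pipeline described above. The scenario fields, the `toy_stack` braking model, and the 2-meter pass threshold are all illustrative assumptions; a real pipeline would replay recorded or synthetic sensor data through the actual perception, planning, and control software.

```python
from dataclasses import dataclass
from typing import Callable, List


@dataclass(frozen=True)
class Scenario:
    """Hypothetical virtual scenario: ego approaches a stopped vehicle."""
    name: str
    gap_m: float              # distance to the stopped vehicle at scenario start
    ego_speed_mps: float      # ego speed at scenario start
    detection_range_m: float  # range at which perception first reports the obstacle


@dataclass(frozen=True)
class Result:
    scenario: str
    remaining_gap_m: float
    passed: bool


def toy_stack(s: Scenario, decel_mps2: float = 6.0, latency_s: float = 0.5) -> float:
    """Stand-in for the software under test: gap left after braking to a stop."""
    distance_at_detection = min(s.gap_m, s.detection_range_m)
    reaction_m = s.ego_speed_mps * latency_s              # travelled before braking starts
    braking_m = s.ego_speed_mps ** 2 / (2 * decel_mps2)   # constant-deceleration stop
    return distance_at_detection - reaction_m - braking_m


def run_suite(
    scenarios: List[Scenario],
    stack: Callable[[Scenario], float],
    min_safe_gap_m: float = 2.0,  # assumed pass threshold
) -> List[Result]:
    """Run every scenario through the stack and record pass/fail."""
    results = []
    for s in scenarios:
        remaining = stack(s)
        results.append(Result(s.name, remaining, remaining >= min_safe_gap_m))
    return results


suite = [
    Scenario("clear_day_stopped_car", gap_m=150.0, ego_speed_mps=30.0, detection_range_m=120.0),
    Scenario("heavy_rain_stopped_car", gap_m=150.0, ego_speed_mps=30.0, detection_range_m=80.0),
]
results = run_suite(suite, toy_stack)
performance_gaps = [r.scenario for r in results if not r.passed]
print(performance_gaps)
```

The point of the structure is that the list of failing scenarios, not a sensor datasheet, becomes the artifact that engineers review. Swapping `toy_stack` for a call into the real software leaves the harness unchanged.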

That experience taught me something fundamental. Sensor architecture cannot be designed in isolation from validation. Every sensor choice should consider how it will be tested across edge cases. Every architecture decision should be informed by simulation results. The loop between design and validation is not sequential. It is continuous.

Building the Validation Bridge

The shift from hardware-focused development to validation-driven architecture requires rethinking how teams operate. In traditional automotive engineering, hardware is designed and validated before software development accelerates. That model breaks for autonomous systems, where perception software and sensor hardware must co-evolve.

At the commercial trucking program where I led perception systems engineering, we built this closed loop from the start. The sensor suite went through two generations of refinement, each informed by simulation results and real-world data collected from deployed vehicles. When the system encountered edge cases on public roads, those logs flowed back into the simulation pipeline. When simulation revealed performance gaps, those insights shaped the next hardware revision.

This approach enabled deployment across two continents with different regulatory environments. The system collected more than fifty thousand miles of real-world data in the United States and Japan, each mile feeding the next generation of architecture decisions. The team grew from two engineers to twenty-one, and the validation processes scaled with it.

The key insight was treating simulation not as a testing tool but as a design input. Instead of asking “does this hardware meet requirements,” we asked “what does simulation tell us about how this hardware will perform across the full distribution of real-world scenarios.” That shift changed everything.

The Regulatory Reality

In January 2026, the UN Economic Commission for Europe adopted a draft global regulation on Automated Driving Systems. The regulation establishes uniform safety provisions and a harmonized methodology for validating vehicles equipped with autonomous driving technology. Its requirements are worth reading carefully.

Manufacturers must demonstrate the credibility of test environments and methods, including virtual toolchains. They must present a safety case with structured claims, arguments, and evidence that the system meets outcome-focused requirements. They must implement in-service monitoring and reporting that provides a feedback loop for continuous performance monitoring and corrective action.

For organizations that have built mature validation loops, these requirements are not burdensome. They are simply documentation of work already being done. For organizations still treating simulation as optional or validation as sequential, the regulation will be a shock.

The United States Department of Transportation published a request for comment on this draft in January 2026. China indicated it will draft its national standard following the structure of the global regulation. The window for building these capabilities is closing. The companies with mature validation loops will sail through compliance. Everyone else will scramble.

The Closed Loop Framework

Based on experience across three generations of deployed systems, here is a practical framework for organizations building autonomy programs.

Design for validation, not just performance. Every sensor choice should consider how it will be tested across edge cases. A sensor that performs beautifully in nominal conditions but cannot be validated across the full distribution of real-world scenarios is a liability, not an asset. Ask yourself: how will we know this sensor is working as intended across millions of miles? If the answer requires physical testing that cannot scale, rethink the choice.
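One way to ask which sensors actually contribute is a leave-one-out ablation over simulated scenarios. The sketch below is a toy illustration under stated assumptions: the sensor names, the scenario names, and the `DETECTIONS` table (which sensors detect the hazard in time in each scenario) are all invented for the example, and "coverage" is simply the fraction of scenarios in which at least one active sensor detects the hazard.

```python
from typing import Dict, FrozenSet

# Hypothetical simulation output: for each scenario, the sensors that
# detected the hazard in time. None of these names come from a real program.
DETECTIONS: Dict[str, FrozenSet[str]] = {
    "night_pedestrian": frozenset({"lidar_roof", "camera_front"}),
    "glare_stopped_car": frozenset({"lidar_roof", "radar_front"}),
    "fog_cut_in": frozenset({"radar_front"}),
    "cyclist_blind_spot": frozenset({"camera_side_left", "lidar_roof"}),
}

ALL_SENSORS: FrozenSet[str] = frozenset(
    {"lidar_roof", "camera_front", "radar_front", "camera_side_left", "camera_side_right"}
)


def coverage(active: FrozenSet[str], detections: Dict[str, FrozenSet[str]]) -> float:
    """Fraction of scenarios where at least one active sensor detects the hazard."""
    covered = sum(1 for sensors in detections.values() if sensors & active)
    return covered / len(detections)


def leave_one_out(
    sensors: FrozenSet[str], detections: Dict[str, FrozenSet[str]]
) -> Dict[str, float]:
    """Coverage lost when each sensor is removed on its own."""
    baseline = coverage(sensors, detections)
    return {
        s: baseline - coverage(sensors - {s}, detections)
        for s in sorted(sensors)
    }


drops = leave_one_out(ALL_SENSORS, DETECTIONS)
for sensor, drop in drops.items():
    print(f"{sensor}: coverage drop {drop:.2f}")
```

A zero drop for a single sensor does not prove it is redundant. Removing two sensors together can expose a gap that neither removal shows alone, and a real study would also weigh redundancy requirements and scenario frequency rather than treating every scenario equally.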

Build the simulation bridge early. Do not wait until hardware is locked. Run perception software against virtual scenarios from day one. The earlier you close the loop between simulation results and architecture decisions, the faster you converge on a design that can be validated at scale. The pipeline that caught issues before they reached test vehicles did not emerge fully formed. It was built incrementally, starting with simple scenarios and expanding over time.

Close the loop systematically. Every real-world log, every simulation failure, every edge case discovered should feed back into next-generation architecture decisions. This requires infrastructure for collecting, analyzing, and acting on data at scale. It requires teams that span the boundary between hardware, software, and validation. It requires a culture where simulation results are treated with the same weight as physical test data.
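As a rough illustration of the feedback path from road logs to simulation, the following sketch turns flagged log events into deduplicated regression scenarios. The event kinds, tags, and the `ScenarioLibrary` class are hypothetical names chosen for the example, not a description of any specific toolchain.

```python
from dataclasses import dataclass, field
from typing import Dict, FrozenSet, Iterable, List, Tuple


@dataclass(frozen=True)
class LogEvent:
    """Hypothetical summary of one event extracted from a drive log."""
    log_id: str
    kind: str               # e.g. "disengagement", "hard_brake", "nominal"
    tags: Tuple[str, ...]   # e.g. ("rain", "merge")


@dataclass
class ScenarioLibrary:
    """Maps a scenario signature to the logs that exhibited it."""
    scenarios: Dict[Tuple[str, ...], List[str]] = field(default_factory=dict)

    def ingest(
        self,
        events: Iterable[LogEvent],
        triggers: FrozenSet[str] = frozenset({"disengagement", "hard_brake"}),
    ) -> int:
        """Add triggered events to the library; return the number of new scenarios."""
        added = 0
        for event in events:
            if event.kind not in triggers:
                continue  # nominal driving does not create a regression scenario
            # Sort tags so the same situation always maps to the same key.
            key = (event.kind,) + tuple(sorted(event.tags))
            if key not in self.scenarios:
                self.scenarios[key] = []
                added += 1
            self.scenarios[key].append(event.log_id)
        return added


library = ScenarioLibrary()
new_scenarios = library.ingest([
    LogEvent("log-001", "disengagement", ("rain", "merge")),
    LogEvent("log-002", "disengagement", ("merge", "rain")),
    LogEvent("log-003", "hard_brake", ("night",)),
    LogEvent("log-004", "nominal", ("clear",)),
])
print(new_scenarios, "new regression scenarios")
```

Keeping the source log IDs attached to each scenario signature is a deliberate choice in this sketch: it lets a simulation failure be traced back to the real-world miles that motivated it.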

Scale validation with the team. The processes that work for two engineers will not work for a team of twenty. Build validation infrastructure that can grow with the organization. Automate what can be automated. Document what cannot. Create clear ownership for each component of the validation pipeline. The team that scaled by 10x did not succeed by working harder. It succeeded by building systems that scaled.

The Path Forward

The organizations that win the autonomy race will not be those with the most lidars or the highest-resolution cameras. They will be those that built the closed loop where sensor architecture, simulation validation, and real-world learning form a continuous improvement engine.

This is not theoretical. It is happening now. As the February 2026 example shows, a system with fewer sensors can outperform one that is more sensor-heavy. That capability did not emerge from a one-time design exercise. It emerged from years of closing the loop between deployment, simulation, and next-generation architecture.

The new global regulation requires manufacturers to demonstrate testing credibility and present safety cases supported by evidence. The organizations with mature validation loops will meet these requirements effortlessly. Those still treating simulation as optional will find themselves locked out of markets.

The window to build this capability is closing. The only question is whether you will be ready.