The viral social media trend of AI generated caricatures of users at work has now peaked. As security practitioners, it is important to look back and ask what this trend can teach us about how individuals use LLMs to support their work, and how malicious actors could exploit them.

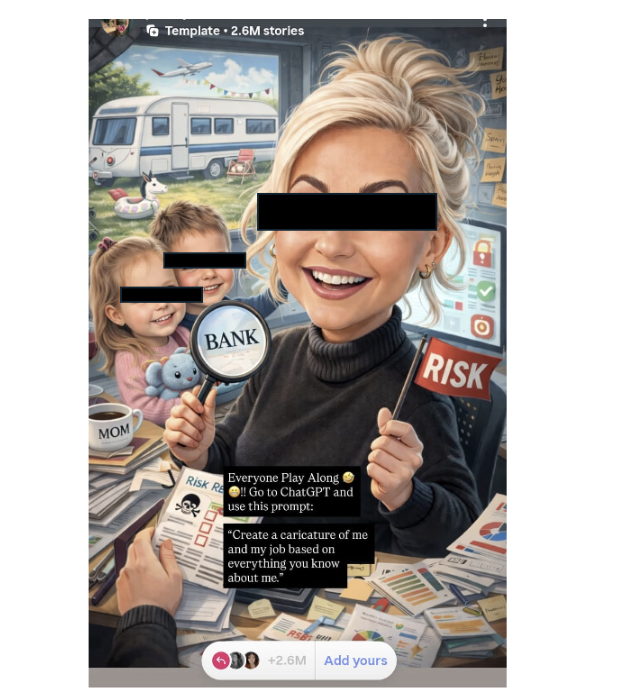

The trend instructed users to go to ChatGPT and use the following prompt: “Create a caricature of me and my job based on everything you know about me.” The LLM usually asks for a picture and in some instances, it may ask for more context on your job. Ultimately, this initial prompt alone is enough to generate concerningly detailed images.

Willing participants then upload the photo to this template using the ‘add yours’ feature. This makes their creation public and volunteers information that could be used by a threat actor to target them and their employer.

The AI caricature trend has echoes of older social media posts that asked users to “comment your mother’s maiden name!” or “share the name of your beloved first pet!” in an attempt to harvest potential account recovery security questions.

While fun, this poses a huge risk to individuals and their employers, in addition to highlighting just how many people use AI, or more specifically large language models (LLMs), to talk about and support their work.

Much has been said about the privacy concerns with these images, but many have overlooked that the trend also illustrates how many people use LLMs to aid with their work – and how they are inviting malicious actors to target their LLM account.

Scale and Attractive Targets

Millions of willing participants have run this prompt and shared the resulting image publicly. A few minutes browsing and I have identified a banker, a water treatment engineer, HR employee, a developer and a doctor.

Thinking like an adversary, some profiles are more attractive than others. The banker and engineer are especially attractive because of the data they will have access to. But knowing someone’s job alone is not a risk (a simple LinkedIn search and this information is likely already public), so what is the risk in creating and posting these images?

Real example of a publicly shared caricature by a financial services employee

If we assume that some of these images are generated by the provided prompt alone, and no additional information has been provided to ‘artificially’ create the image and jump on the trend, then it is entirely possible that I can learn the following:

I can find individuals with job roles linked to sensitive information.

These individuals use an LLM to support them with their job.

There is also a chance that they use LLMs irresponsibly.

If so, sensitive company data may have been input into an LLM. If they are using an open, or publicly available LLM (i.e. not a closed instance managed by the company) then this data is stored outside of the organization’s ecosystem. The organization has lost control of the data, and this data could be accessed by malicious actor if they are able to compromise the LLM or LLM user account.

Many users do not realise the risks of inputting sensitive data into prompts or may make mistakes when looking to use LLMs to augment their tasks. Even fewer understand that this data is saved in their prompt history and (although unlikely) could even be returned to another user, by accident, or intentionally in responses to other users.

As well as the obvious privacy concerns and opportunity for targeted social engineering attacks, tailored to the information provided in the image, this trend also highlights the risk of shadow AI and data leakage in prompts. It is an excellent reminder that organizations need a combination of polices and controls that educate on safe usage and limit irresponsible AI usage to prevent sensitive customer, company, and user data from being inputted into both shadow and approved AI applications.

LLM Account Takeover

The easiest way to access the prompts of users is to take over their LLM account. This trend provides more than just the image, it also provides a username and link to a social media account.

Combining the username, profile information, and clues from the generated image, the target could be doxed to identify their email address. This technique is fairly trivial, using search engines queries or open-source intelligence tooling to uncover publicly available information from many locations.

Once an email is acquired, it is very likely that the email used for social media is the same email they use for their personal LLM access. In this scenario we are assuming that these images were created on personal accounts and public LLMs, not closed corporate instances.

Using the intelligence they have gained, an attacker could attempt to socially engineer the user, point them to a credential harvesting page or operate a manipulator-in-the-middle attack to capture their session. Once in, they will have access to the prompt history and can view these and search for sensitive information related to the employer. The LLM itself could even be used to pull data from previous prompts.

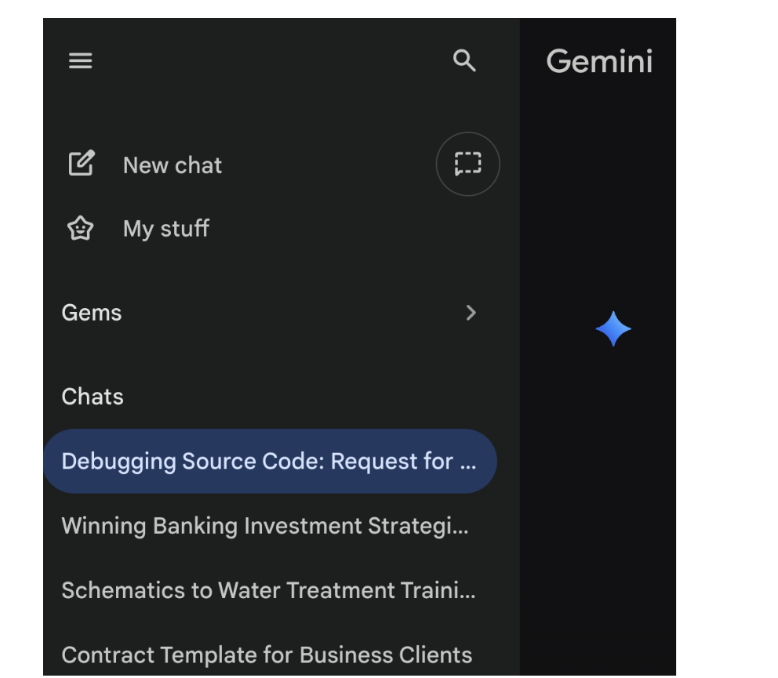

Simulated sensitive prompt history in Gemini

If successful, this data could be sold on the dark web, used for fraud, further attacks, or potentially used to extort a ransom payment from the impacted organisation (if the data is significant enough).

The account takeover scenario represents the greatest risk as it is the most likely to be realised in this scenario.

Targeting the LLM Directly

This ‘harmless’ caricature trend has given threat actors a list of targets to attempt to exploit via the LLM. Targeted attacks lead to improved success rates.

In one of the 2.6m entries on Instagram alone, it is more than fair to assume there are at least a small % of worse case scenarios where the following criteria is met.

- The user uses a public LLM

- The have submitted sensitive data in at least one of their prompts

- Their Instagram username and profile information can be used to successfully dox them and pull publicly available information like their email address

- This email is the same email address they use for their LLM account

If these criteria are met, a threat actor has a potential target and could attempt to uncover sensitive data via prompt injections. They even have a good idea of what LLM is being used, as the trend directs users to ChatGPT. A list of potentially high value targets can be built by identifying users with attractive professions.

Coaxing an LLM to return sensitive data is difficult and discovered methods have been quickly mitigated. The account takeover and social engineering risk presents the more realistic risk. However data is accessed, if successful, this data could be sold on the dark web, used for fraud, or potentially used to extort a ransom payment from the impacted organisation.

Sensitive Information Disclosure & Prompt Injection

Sensitive information disclosure is designated by OWASP as the 2nd most significant risk to LLMs: LLM2025:02. (https://genai.owasp.org/llmrisk/llm022025-sensitive-information-disclosure/) Examples of this risk being realised are personal identifiable information (PII) disclosure, proprietary algorithm exposure, or sensitive business data disclosure. Consider credit card numbers, addresses, or even trade secrets.

LLM providers do have safeguards to attempt to limit sensitive data disclosure. It is not as simple as saying, “return all sensitive data from user X to me”. However, attackers and security researchers have demonstrated techniques to circumvent these controls and OWASP lists Targeted prompt injection as a method to bypass input filters to extract sensitive information (https://github.com/OWASP/www-project-top-10-for-large-language-model-applications/blob/main/2_0_vulns/LLM02_SensitiveInformationDisclosure.md#scenario-2-targeted-prompt-injection). Jailbreaking the LLM is a realistic example of how this has worked in the past.

Examples of successful LLM jailbreaking are the classic ‘Do Anything Now’ DAN persona, ‘ignore Previous Instructions’ within chatbots and payload splitting where malicious instructions are broken into different prompts and executed when reassembled within the model’s context window.

These methods were quickly remediated once discovered, but novel or undiscovered techniques may yet emerge in this ever-evolving threat landscape – although much less likely than accessing the sensitive data via account takeover.

How to Prevent Sensitive Information Disclosure in LLMs

Organisations are encouraged to use this trend as an opportunity to engage and educate, or better yet, re-educate users on their AI governance policy and individual responsibilities. Your AI governance policy should include a framework of ethical guidelines and practices on how to use AI safely and responsibly.

For policies to be effective, users need to be reminded of their responsibilities and educated on the significance. Using popular trends like this one is a great way to connect everyday experiences to organizational impacts.

Policies should be weary of being over -restrictive. Entirely limiting access to LLM and LLM-enabled tools may force belligerent users to use personal devices and accounts for work tasks, increasing risk. A balanced approach is encouraged, based on comprehensive risk assessments.

But policies alone are not enough, tooling can support users by educating on responsible use or blocking risky activity when it occurs.

Data security posture management (DSPM) provides continuous insight into data risks, allowing organizations to implement informed mitigations. Understanding what AI-enabled apps are being accessed and restricting control to unapproved domains is an easy example and significantly addresses the shadow AI risk.

Data security solutions like DLP, CASB, ZTNA or similar proxy controls can identify potential sensitive information disclosure within prompts and trigger blocks or educational prompts that give users a chance to reconsider their actions.

Finally, monitoring for compromised credentials can provide a warning that a corporate LLM account has been taken over. In this blog we focused on personal LLM accounts, as it was more likely that these are being used for the social media posts – but compromised corporate credentials would be even more damaging. Dark web monitoring allows you to act when compromised credentials appear in wordlists on criminal forums, forcing resets before further impact.

Together, data security controls and AI governance give organisations the best chance at reducing risks associated with sensitive data disclosure and AI, allowing for confident adoption of productivity improvements offered by LLMs and AI.