Two years ago, boardrooms were abuzz with promises of artificial intelligence transforming every corner of the enterprise. Yet the numbers tell a sobering story. An S&P Global survey found that more than 40% of companies scrapped most of their AI projects in 2025, up from 17% the year before. Nearly half of AI proofs of concept never make it into production, and over 80% of AI projects fail, twice the rate of traditional IT projects. The biggest culprits? Cost, data privacy, and security concerns.

These statistics echo a pattern from economic history that should worry every CEO. In the early 1900s, manufacturers installed electric motors in their factories, expecting immediate productivity gains. Instead, it took two to three decades before electricity delivered those gains. The problem wasn’t the technology; it was that manufacturers simply swapped steam engines for electric motors and kept everything else the same. The revolution came only when they redesigned their factories from the ground up.

Today’s enterprises are making the same mistake with AI.

The Wrong Solutions to the Right Problem

The default playbook is predictable: pick a frontier model, hire consultants, launch pilots, repeat. Boardroom conversations focus on which LLM to deploy—Claude versus GPT versus Gemini. Engineering teams debate RAG architectures and vector databases. Some organizations are now turning to “context engineering.” In October, Gartner called context engineering a “strategic priority” for enterprises.

But these approaches treat symptoms, not causes. The real problem isn’t choosing the wrong model or mis-engineering prompts. It’s that AI models—no matter how powerful—are fundamentally blind to your business. Knowledge about how data actually works—the relationships between systems, the meaning behind fields, the policies that govern decisions—lives in people’s heads and scattered documents, not in any reusable layer.

RAND researchers note that many AI projects collapse because teams misunderstand the underlying business problem or lack the data infrastructure to support their models. A 2025 Broadcom survey found that 55% of enterprises cite data quality as a major challenge for their AI initiatives, and 45% cite governance as a top challenge. Futurum Group’s AI Data Quality Crisis Survey points the same way: 41% of organizations surveyed are prioritizing data platforms, and 40% are prioritizing data quality tools. Without shared definitions and consistent policies, AI initiatives rely on tribal knowledge and brittle integrations, which is why so many pilots are abandoned.

A Different Playbook

The enterprises breaking through are doing something fundamentally different. They’re not starting with technology choices. They’re starting with economic analysis and building a governed layer that interprets how data, processes, and policies relate across the organization.

Here’s the playbook that’s actually working:

First, start with cost analysis, not model analysis. Most AI initiatives begin with a technology choice: which LLM, which vendor, which framework. Instead, begin by identifying where time, cost, or risk is concentrated in your value chain. Map the workflows where decisions stall, exceptions pile up, or manual handoffs cause delays. A global bank recently discovered that manual reconciliation was generating 400,000 exceptions annually, each requiring human review. That’s where AI can deliver measurable impact, and where you should invest first.

Then, set clear metrics to track that impact. Resist the temptation to track pilot counts, use case inventories, or “AI-powered” feature launches. Focus on workflows where you can measure before and after clearly: quote-to-cash cycle time, claims resolution rates, onboarding duration, and reconciliation accuracy. Track uplift as time saved, headcount avoided, revenue accelerated, or risk reduced. Hold yourself to the same standards you would apply to any capital project.

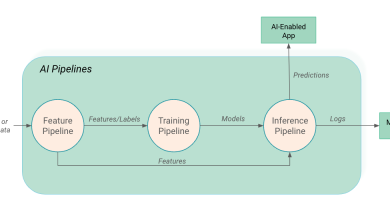

Finally, build context as a reusable asset, not a one-off integration. The goal isn’t to solve one workflow in isolation. It’s to create a governed layer that connects directly to your existing systems—your databases, CRMs, legacy platforms—without migration or duplication. This layer should interpret entities and relationships automatically, encode business policies, and expose a consistent view to any AI agent or application. When context is organized this way—curated once, governed centrally, reused across workflows—the economics finally start to bend in your favor. The second use case costs a fraction of the first. The tenth costs almost nothing. That’s when AI stops being an expense center and becomes an operating system.
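
To make the economics concrete, here is a minimal sketch under simplifying, hypothetical assumptions: the governed context layer is a one-time build paid for alongside the first use case, and each later workflow only adds a small marginal cost to connect to it. The parameters `SHARED_BUILD` and `MARGINAL` are illustrative values normalized so the first use case costs 1.0; they are not figures from any real deployment.

```python
# Minimal sketch of the "context as a reusable asset" economics.
# Hypothetical assumptions: the shared context layer is built once, alongside the
# first use case, and every later workflow only pays a marginal integration cost.
# Values are illustrative and normalized so the first use case costs 1.0.

SHARED_BUILD = 0.9  # hypothetical one-time cost of the governed context layer
MARGINAL = 0.1      # hypothetical cost to connect one more workflow to the layer
COST_WITHOUT_REUSE = SHARED_BUILD + MARGINAL  # baseline: every workflow rebuilds its own context


def incremental_cost(n: int) -> float:
    """Cost of the n-th use case (1-indexed): only the first pays for the shared layer."""
    return SHARED_BUILD + MARGINAL if n == 1 else MARGINAL


def average_cost(n: int) -> float:
    """Average cost per use case after n use cases, equal to SHARED_BUILD / n + MARGINAL."""
    return sum(incremental_cost(k) for k in range(1, n + 1)) / n


for n in (1, 2, 10):
    print(f"use case {n}: incremental {incremental_cost(n):.2f}, "
          f"average {average_cost(n):.2f}, without reuse {COST_WITHOUT_REUSE:.2f}")
```

In this toy model the average cost per use case falls toward the marginal cost as more workflows reuse the layer, while the no-reuse baseline stays flat. How small the marginal cost really is depends on how much context genuinely carries over from one workflow to the next.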

The obvious pushback: isn’t this just another infrastructure project that will take years and millions to implement?

It’s a fair concern, especially given the track record of enterprise transformation initiatives. But the successful implementations don’t look like traditional IT projects. They start with a single high-impact workflow—often something that’s costing the organization millions in manual processing—and deliver measurable results in 10 weeks, not 18 months. They don’t require ripping out existing systems or massive data migrations. They connect to what you already have, interpret it, and make it usable by AI.

The alternative—continuing to run AI pilots that never scale, hiring more consultants to chase the same problems, switching from one frontier model to another—is the truly expensive path. It’s just that the costs are distributed and hidden: in headcount that keeps growing, in pilots that get abandoned, in opportunities that slip away while competitors move faster.

This isn’t theoretical. Air India’s virtual assistant resolves 97% of more than four million passenger queries without human intervention. Microsoft has saved $500 million a year by deploying context-aware agents in its call centers. These are real numbers that show up on the P&L.

The Choice Ahead

The gap between AI storytelling and AI financial impact is widening, not narrowing. The fundamental constraint isn’t computational power or model sophistication. It’s whether AI understands your business well enough to make decisions you can trust.

The winners will be organizations that treat AI as an operating system for workflows built on real business context. The losers will keep adding slideware and proof-of-concept dashboards while their engineers quietly hold the enterprise together with spreadsheets and manual checks.

It took manufacturers two to three decades to figure out how to use electric motors properly. We don’t have 30 years for AI. The question every board should be asking today is simple: which side are we on?