Every time your AI answers a question, it rebuilds the universe from scratch.

Not metaphorically.

Literally.

Every query. Every time. From zero. Context reconstructed. Reasoning recomputed. Knowledge regenerated. Tokens expanded sequentially until the system arrives at an output that is probably — not certainly — correct.

Then it forgets everything and waits for the next query. This is the architecture that enterprise is currently paying for at industrial scale. And nobody is calling it what it actually is.

A tax.

Let’s Talk About What Tokens Actually Cost

The AI industry presents token pricing as a solved problem. A few dollars per million tokens. Fractions of cents per query. Cheap at the point of consumption. That framing is deliberately narrow.

It measures the price of a single token. It doesn’t measure the cost of the architecture that produces it.

Here’s what that architecture actually requires every single time:

Full context window rebuilt from scratch

Sequential token generation — each dependent on the last

Probabilistic sampling across the entire output space

State manually reconstructed because nothing persisted from the last session

Redundant reasoning repeated because the system has no memory of having done it before

Multiply that by enterprise scale. Thousands of queries per day. Hundreds of users. Dozens of use cases. Running continuously.

You’re not paying for answers. You’re paying for the same reasoning to be rebuilt thousands of times a day because the architecture is constitutionally incapable of remembering it did this yesterday. That’s not a pricing model. That’s a structural inefficiency masquerading as a pricing model.

The Recomputation Loop Nobody Talks About

Here is the precise mechanism of the waste. An enterprise deploys an LLM for strategic planning support. Users query it throughout the day. Each query triggers full context reconstruction. The system reasons through the company’s positioning, competitive landscape, strategic priorities — again — for every single query.

It doesn’t store the reasoning. It can’t. It’s stateless by design. So query one and query one thousand both start from the same blank slate. The thousandth query isn’t faster than the first. It isn’t cheaper. It isn’t smarter. It hasn’t learned anything from the previous 999 interactions at the architectural level.

It just does the whole thing again.

This is the recomputation loop. It runs invisibly inside every enterprise LLM deployment in the world right now. And enterprises are paying for every iteration of it. Put a Number On It Let’s be conservative.

A mid-size enterprise running LLM infrastructure across sales, marketing, operations and planning generates approximately 50,000 meaningful queries per month.

Average context reconstruction per query — across a reasonably complex deployment — burns somewhere between 2,000 and 8,000 tokens before a single output token is generated.

Call it 5,000 input tokens average. That’s 250 million tokens per month just in context reconstruction. Not output. Not reasoning. Context reconstruction. The system reminding itself of things it already knew last time.

At current enterprise API pricing that’s a meaningful five-figure monthly line item doing nothing except rebuilding what should have been stored.

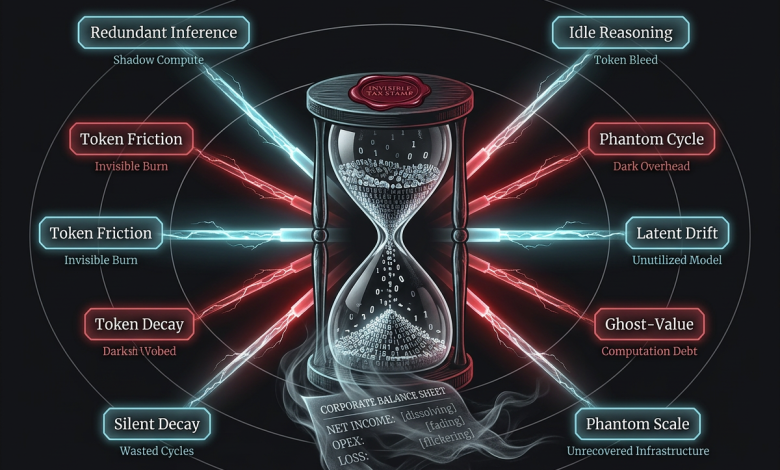

Scale that to a large enterprise. Multiply it by every department running AI tooling. Run it across a year. You’re looking at a budget line that doesn’t exist on any CFO’s spreadsheet because nobody has named what it is.It’s called the token tax. And it compounds invisibly every single month. The Three Layers of the Token Tax The waste isn’t just recomputation. It runs three layers deep.

Layer one: Reconstruction tax.

Rebuilding context that should be persistent. Every session starting cold. Every query paying for memory the system should already have.

Layer two: Redundancy tax.

Repeating reasoning that has already been performed. Same logical chains. Same inference paths. Same conclusions reached again because nothing was stored from last time.

Layer three: Drift tax.

Paying for correction when probabilistic outputs diverge from what was produced before. Inconsistent answers to identical questions. Hallucinated details that contradict previous outputs. Human review cycles that exist solely to manage non-deterministic variance.

Most enterprises only see layer one. They track API spend.

Layers two and three are invisible. They show up in productivity costs, review overhead, rework cycles, and the quiet erosion of trust in AI outputs that accumulates over time.

Add all three layers together and the real cost of probabilistic AI infrastructure is nowhere near what the token pricing page suggests.

What Deterministic Architecture Eliminates

SDCI — the architecture I’ve spent a decade building — is designed from first principles to eliminate all three layers of the token tax.

Reconstruction tax: gone.

Intelligence in SDCI is pre-compiled into structured artefacts. State persists across sessions via deterministic cognitive ledgers. There is no cold start. There is no context reconstruction. The system knows what it knew last time because it stored it deterministically.

Redundancy tax: gone.

When reasoning has been performed, the output is a structured artefact — reusable, indexable, retrievable. The system doesn’t re-derive what it already knows. It retrieves and executes.

Drift tax: gone.

Deterministic execution produces repeatable outputs. The same intent mapped through the same semantic index via the same cognitive operators produces the same result. Not probably the same. The same. Always. What You’re Actually Buying When You Buy Deterministic You’re not buying a faster LLM. You’re buying the elimination of an entire class of computational overhead.

The shift looks like this:

Probabilistic architecture:

Every query pays full price. Full reconstruction. Full generation. Full uncertainty. Full cost.

Deterministic architecture:

First-time reasoning costs something. Every subsequent execution of the same structured operation costs a fraction of that. State persists. Artefacts compound. The system gets more efficient the longer it runs — not less.

This is the inverse of how LLM costs behave. LLM costs scale linearly with usage. The more you use it the more you pay — with no compounding return on that spend.

Deterministic cognitive architecture builds structural capital. The artefacts produced in month one reduce the computation required in month six. The semantic index built over time makes every subsequent query cheaper and faster to execute.

Cost curves go in opposite directions.One scales up with usage. One scales down. The CFO Question That Will Define the Next Five Years

Every enterprise finance team is currently asking some version of:

“Is AI actually delivering ROI or are we just spending more?”

The honest answer in most deployments right now is: they’re paying the token tax without knowing it exists. They’re measuring output quality occasionally. They’re not measuring structural efficiency at all.

The right question — the one that will define which enterprises win the AI infrastructure decision — is not:

“Which model gives the best answers?”

It’s:

“Which architecture stops charging me to think about the same things twice?”

Probabilistic generation has no answer to that question. Deterministic cognitive execution is the answer.

The Bottom Line

The token tax is real. It’s large. It’s invisible to most of the people paying it.

It exists because the dominant AI architecture was designed for language generation — an inherently stateless, probabilistic, regenerative process — and then deployed into enterprise environments that require exactly the opposite.

Structured. Persistent. Deterministic. Auditable. Efficient.

The mismatch is architectural. Which means no amount of prompt engineering, fine-tuning, or model scaling fixes it. You cannot optimise your way out of a structural problem. You can only build a different structure.

That’s what SDCI is. Not a better LLM. A different structure entirely. The token tax doesn’t appear on your invoice.

But it’s the largest line item in your AI budget.

And it’s entirely optional.

Martin Lucas is founder and CEO of Gap in the Matrix Limited and inventor of SDCI™ — Synthetic Deterministic Cognitive Intelligence. He leads nine live SaaS platforms under the MatrixOS umbrella with eight patent families filed. His work sits at the intersection of symbolic computation, semantic architecture, and deterministic cognitive execution.

That’s piece two. Harder hitting than piece one. More commercial. CFO-readable but technically grounded.L