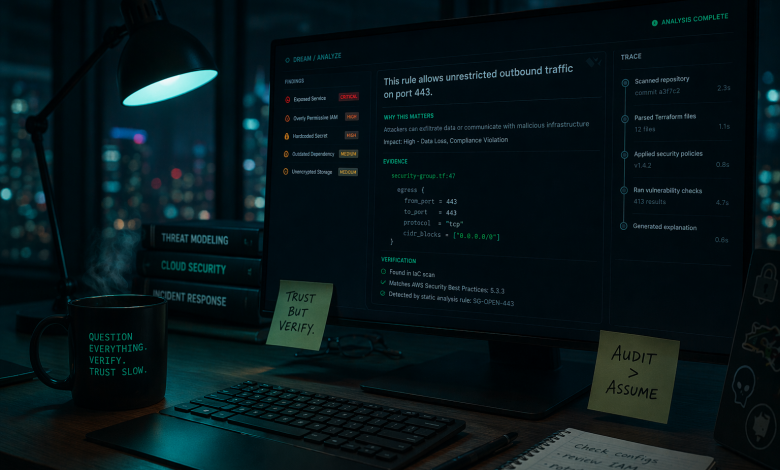

We built an AI agent for security teams. It analyzes configs, hunts for vulnerabilities, investigates threats. Here’s what we underestimated: security people are paid to be paranoid. They don’t trust systems they can’t audit.

And honestly? They shouldn’t.

So we built an explainability layer. Not just “here’s what we found” but “here’s why we think that, and here’s how you can check.”

Here’s what worked, what didn’t, and what surprised us.

-

Trust Is a UX Problem, Not a Technical One

We initially thought explainability was an engineering challenge. Build better traces. Generate cleaner explanations. Ship more data.

Wrong.

An AI can be 95% accurate and still 0% trusted.

Trust is about experience. Nobody reads JSON dumps. Nobody parses execution logs. People just need the right info, at the right moment, in a format that actually makes sense.

The turning point was when we stopped asking “what can we show?” and started asking “what would make a skeptical analyst actually believe this?”

The answer wasn’t more data. It was clickable claims.

-

Explainability Is Debugging for Users

When code breaks, developers get stack traces. We can step through line by line, check what each variable holds, figure out exactly where things went wrong.

When AI gets something wrong? Users get nothing. Just a bad answer and no way to understand why.

We wanted to fix that. Let people poke around inside the AI’s reasoning the same way we poke around inside broken code. Not by dumping raw model internals on them, but by giving them a trail they can actually follow: this claim came from this file, this line, this search result.

The mental model shift mattered more than the implementation. Once we started thinking of explainability as “debugging tools for non-developers,” the design decisions got a lot easier. Every feature became a question: does this help the user find what went wrong?

If developers get debuggers, users should get explainers.

-

Explain Claims, Not Conclusions

Early on, we tried explaining the AI’s overall conclusion. “Here’s why this analysis is correct.” It felt like a lawyer’s closing argument: sounds convincing, but good luck fact-checking it.

What actually worked: explain each claim on its own.

-

Don’t Ask the Chef to Rate the Food

LLMs are surprisingly good at generating explanations. They can reason through context, connect dots, articulate logic. The problem isn’t the cooking. It’s the tasting.

When we first built this, the AI would generate a claim, then generate evidence for that claim, then evaluate whether the evidence was strong enough. The chef was cooking, plating, and reviewing the dish. Unsurprisingly, every meal got five stars.

Never let the AI verify its own explanations.

The fix was separating generation from verification. The AI can explain, but the evidence needs to come from somewhere deterministic: an actual line in a file, an actual search result, an actual code execution output. Not “I believe this is true” but “here’s the receipt.”

-

Being Right Isn’t Enough

Our agent knows a lot. It’s trained on security documentation, vulnerability databases, years of threat intelligence. And most of the time, that knowledge is accurate.

But here’s the thing: we don’t cite any of it as evidence.

Why? Because users can’t verify it. They can’t click through to check if we got it right. And unverifiable sources erode trust, even when they’re correct.

It feels weird at first. You’re hiding knowledge that’s probably correct. But “probably correct” isn’t evidence. Evidence needs receipts.

-

Not Every Sentence Deserves an Explanation

Our first version was exhausting to use. Every sentence had an explanation. Every claim had evidence. Users were drowning.

The fix wasn’t better explanations. It was fewer of them.

Now every sentence in the agent’s response gets scored, and the entire explainability block for that sentence (the evidence, the source link, the “why it matters”) only appears if it scores a 4 or 5 out of 5. Everything else gets left alone. Turns out restraint is a feature.

The Uncomfortable Truth

If there’s one thing we actually learned, it’s this: explainability isn’t really about explaining AI. It’s about admitting that AI shouldn’t be trusted blindly. Every feature we built is basically saying: “You’re right to be suspicious. Here’s how you can check.”

Trust isn’t something you ask for. It’s something you earn, one verifiable claim at a time.

Edan Hauon is a Data Scientist at DREAM, a cybersecurity and sovereign AI company that develops AI solutions to protect national infrastructure against cyber threats.