For years, the empirical law governing neural networks appeared simple: if you wanted better performance, you had to give the model more labeled data. That assumption shaped model design, benchmark culture and industrial workflows, and supervised learning sat at the center of gravity. Labels were seen as the critical fuel, and progress frequently meant scaling up annotated datasets or engineering clever ways to extract extra signal from them. Self-supervised learning is reshaping that landscape. As of April 2023, it no longer appears to be a side path in representation learning. It looks like a serious rewrite of how neural networks are pretrained, transferred and understood.

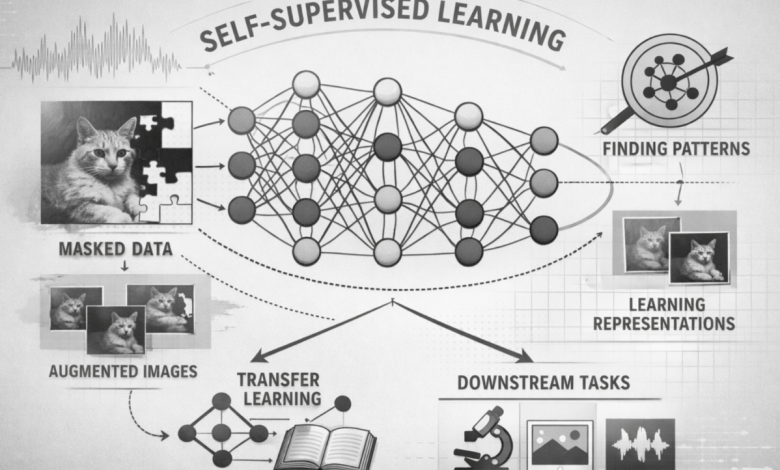

The central concept is deceptively simple. The model generates supervision from the data itself rather than waiting for labels to be applied externally. One view of an image is used to predict another. Visible patches predict masked ones. Different augmentations of the same sample are pulled toward a shared representation. In all of these examples, the network isn’t memorizing human annotations. It learns invariances, structure, and latent organization from the input distribution. That shift matters because it changes the goal of pretraining. The model is no longer trained just to map inputs to labels. It is trained to develop useful internal representations before any narrow downstream task is even defined.

Contrastive learning was one of the earliest and clearest signals that this shift was happening. SimCLR demonstrated that a simple recipe (strong data augmentation, a contrastive objective, and an additional projection head) could learn image representations strong enough to match a supervised ResNet-50 under linear probing. That finding was important because it implied that labels were not the only path to discriminative features. Shortly thereafter, BYOL took the argument further by demonstrating that strong self-supervised representations could be learned without any explicit negative pairs. This was significant not just as a benchmark improvement, but also conceptually: the space of useful representations could be shaped through self-prediction and consistency, rather than solely through direct class supervision.
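
To make the contrastive objective concrete, here is a minimal sketch of an NT-Xent style loss in plain Python. It is an illustration, not SimCLR's actual implementation: the function names, the temperature of 0.5, and the toy embeddings are all assumptions chosen for readability. Each embedding is pulled toward the other view of the same sample and pushed away from every other embedding in the batch.

```python
import math

def l2_normalize(v):
    """Scale a vector to unit length so dot products become cosine similarities."""
    norm = math.sqrt(sum(x * x for x in v))
    return [x / norm for x in v]

def nt_xent_loss(z_a, z_b, temperature=0.5):
    """Mean NT-Xent loss; z_a[i] and z_b[i] embed two views of sample i."""
    n = len(z_a)
    z = [l2_normalize(v) for v in z_a + z_b]  # 2n unit-length embeddings
    total = 0.0
    for i in range(2 * n):
        pos = (i + n) % (2 * n)  # index of the other view of the same sample
        # Temperature-scaled similarity to every other embedding in the batch.
        logits = {
            j: sum(a * b for a, b in zip(z[i], z[j])) / temperature
            for j in range(2 * n) if j != i
        }
        # Numerically stable log-sum-exp over the positive and all negatives.
        m = max(logits.values())
        log_denom = m + math.log(sum(math.exp(v - m) for v in logits.values()))
        total += log_denom - logits[pos]
    return total / (2 * n)

# Toy embeddings, made up for illustration (hypothetical values).
views_a = [[1.0, 0.0], [0.0, 1.0]]
views_b = [[1.0, 0.1], [0.1, 1.0]]
aligned = nt_xent_loss(views_a, views_b)
mismatched = nt_xent_loss(views_a, views_b[::-1])
print(aligned < mismatched)  # correctly paired views give the lower loss
```

In a real pipeline the embeddings would come from an encoder plus projection head applied to augmented images, and the loss would be computed with a tensor library rather than Python loops.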

What makes self-supervised learning more powerful still is that it does not only raise scores; it reveals new behavior in neural networks. This has become especially evident with vision transformers. DINO demonstrated that self-supervised vision transformer features contain explicit information about object layout and semantic segmentation, information that does not emerge as clearly in supervised features or standard convolutional nets. The same work also reported strong k-nearest-neighbor classification performance, suggesting that the representation space itself is more semantically organized. That is more meaningful than a slight bump on a benchmark. It suggests that self-supervision can change what the network learns to represent, and not only how accurately it classifies.
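
The k-nearest-neighbor evaluation mentioned above can be sketched in a few lines. This is a simplified illustration under stated assumptions: it uses cosine similarity with an unweighted majority vote, and the function names, feature vectors, and labels are hypothetical. The point is that no classifier is trained at all, so accuracy depends entirely on how well the frozen feature space is organized.

```python
import math
from collections import Counter

def cosine(u, v):
    """Cosine similarity between two feature vectors."""
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    return dot / (norm_u * norm_v)

def knn_predict(query, bank_features, bank_labels, k=3):
    """Label a query by majority vote among its k most similar bank entries."""
    ranked = sorted(
        range(len(bank_features)),
        key=lambda i: cosine(query, bank_features[i]),
        reverse=True,
    )
    votes = Counter(bank_labels[i] for i in ranked[:k])
    return votes.most_common(1)[0][0]

# Hypothetical frozen features for six labeled images.
bank_features = [[1.0, 0.1], [0.9, 0.2], [1.0, 0.0],
                 [0.1, 1.0], [0.0, 1.0], [0.2, 0.9]]
bank_labels = ["cat", "cat", "cat", "dog", "dog", "dog"]

print(knn_predict([0.8, 0.1], bank_features, bank_labels))
print(knn_predict([0.1, 0.7], bank_features, bank_labels))
```

If features of the same class cluster together, even this simple vote classifies well, which is why k-NN accuracy is a useful probe of representation quality.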

Masked reconstruction then gave the field another powerful recipe. Masked Autoencoders demonstrated that a neural network can learn useful visual structure by observing only a subset of image patches and reconstructing the missing content. The method is elegant because it turns the missing data into the training signal. It is efficient because the encoder processes only the visible patches. And it is scalable, because high masking ratios make the prediction task nontrivial while keeping computation in check. The upshot is a pretraining strategy that yields strong transferable representations and, in several downstream settings, surpasses traditional supervised pretraining. That is one reason self-supervised learning now seems less like an optional trick and more like a broad training principle.
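
The masking step described above can be illustrated with a short sketch. It is not the Masked Autoencoders reference code: the helper names are hypothetical, the 75% ratio and the 196-patch grid (a 224x224 image cut into 16x16 patches) are used only as a representative setting, and patches are stand-in lists of numbers. The sketch shows the two ideas in the paragraph: the encoder would see only the visible subset, and the loss is computed only on the patches that were hidden.

```python
import random

def random_masking(num_patches, mask_ratio=0.75, seed=None):
    """Split patch indices into a visible set and a masked set."""
    rng = random.Random(seed)
    num_keep = int(num_patches * (1 - mask_ratio))
    order = list(range(num_patches))
    rng.shuffle(order)
    visible = sorted(order[:num_keep])  # the only patches the encoder would see
    masked = sorted(order[num_keep:])   # targets the decoder must reconstruct
    return visible, masked

def masked_mse(pred, target, masked):
    """Mean squared error over masked patches only."""
    errors = [
        (p - t) ** 2
        for i in masked
        for p, t in zip(pred[i], target[i])
    ]
    return sum(errors) / len(errors)

visible, masked = random_masking(196, mask_ratio=0.75, seed=0)
print(len(visible), len(masked))  # 49 visible patches, 147 masked patches
```

Because only a quarter of the patches pass through the encoder in this setting, the expensive part of the network handles far fewer tokens per image, which is where the efficiency comes from.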

Combined, these changes are rewriting several old rules at once. The first old rule was that the bottleneck is on the label side. Self-supervised learning shows that much of the training signal can come from the structure of the data itself. The second old rule was that pretraining is merely a warm start for some later supervised task. The new view is that pretraining is where much of the real action takes place, because that’s where the network finds geometry, invariance and semantic structure in the data. The third old rule was that architecture alone drives progress. Now the pretext task, augmentation policy, masking strategy and representation objective are as central as the network backbone.

This matters in practice because labeled data is costly to collect, often imbalanced, and tends to be biased toward what can be labeled rather than what is critical to model. In most real settings there is far more raw data than labeled data. Self-supervised learning allows pretraining on that raw volume, followed by fine-tuning a comparatively small supervised layer on top. That increases data efficiency, but it also shifts the development strategy. Engineers can now treat representation quality, transfer learning, and robustness as practical design principles instead of treating annotation volume as the only path forward. In this sense self-supervision is more than a way to save labels. It is changing where intelligence accumulates in the training pipeline.
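
The "small supervised layer on top" can be sketched as a linear probe: the pretrained encoder stays frozen and only a logistic regression is fit on its output features. The example below is a toy under stated assumptions. The function names, the learning rate, the epoch count, and the four labeled feature vectors are all hypothetical, and the features are assumed to have been extracted already by some pretrained encoder.

```python
import math

def train_linear_probe(features, labels, lr=0.1, epochs=200):
    """Fit binary logistic regression on fixed features with plain SGD."""
    w = [0.0] * len(features[0])
    b = 0.0
    for _ in range(epochs):
        for x, y in zip(features, labels):
            z = sum(wi * xi for wi, xi in zip(w, x)) + b
            p = 1.0 / (1.0 + math.exp(-z))
            grad = p - y  # derivative of the log loss with respect to z
            w = [wi - lr * grad * xi for wi, xi in zip(w, x)]
            b -= lr * grad
    return w, b

def probe_predict(w, b, x):
    """Return class 1 if the linear score is positive, else class 0."""
    score = sum(wi * xi for wi, xi in zip(w, x)) + b
    return 1 if score > 0 else 0

# Hypothetical frozen features: only four labeled examples are needed here.
features = [[2.0, 0.1], [1.8, 0.3], [0.2, 1.9], [0.1, 2.2]]
labels = [0, 0, 1, 1]

w, b = train_linear_probe(features, labels)
print([probe_predict(w, b, x) for x in features])
```

When the representation already separates the classes, a handful of labels and a few parameters are enough, which is the data-efficiency argument in miniature.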

The most significant takeaway is that, as of April 2023, self-supervised learning has moved past the question of whether it works. It clearly does. The more interesting question is what kinds of neural-network behavior it induces when the model first learns to capture structure instead of fitting labels. That is part of the reason self-supervision now seems fundamental. It is changing our conception of feature extraction, pretraining, transfer, and even what it means for a network to learn a useful internal world model. These are not marginal tweaks to the rules of neural networks. The rules are being rewritten around what the data itself can teach a network about representation.

Author bio

Jimmy Joseph is an engineer specializing in healthcare claim processing, payment integrity, applied machine learning, and scalable enterprise systems. His work focuses on building practical, audit-aware solutions that help healthcare organizations detect anomalous payment behavior, improve explainability, and deploy advanced solutions under real-world privacy and operational constraints.