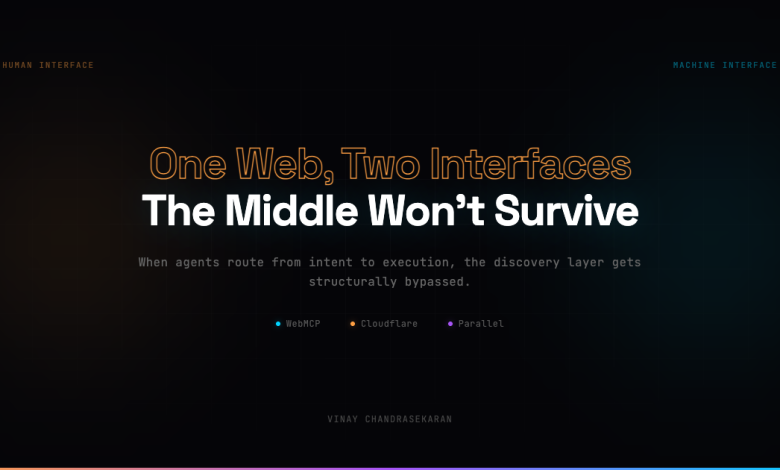

In a single week in February 2026, three companies shipped three different answers to the same question. The web needs a machine-readable layer that doesn’t exist yet. None of them coordinated. All of them converged.

Three signals, one thesis

On February 10, Chrome shipped WebMCP, a new browser API that lets websites expose structured actions directly to AI agents. Two approaches: one built on HTML forms, one using JavaScript. For the first time, a site can tell an agent – here’s what I do, here’s how to call it. No scraping, no reverse-engineering the button.

Two days later, Cloudflare launched Markdown for Agents. One toggle, and any site can serve a clean, token-efficient version of itself to machines. Their own blog post dropped from 16,000 tokens as HTML to 3,000 as markdown. Same content, 80% reduction.

Meanwhile, Parallel – Parag Agrawal’s startup — had been building a web API purpose-built for AI agents. Not waiting for sites to opt in. A discovery layer where machines find what they need without crawling HTML, now integrated into Vercel’s AI SDK.

Three companies. Different layers – discovery, content, execution. Same thesis: the web is splitting into two interfaces. One for humans. One for machines.

We’ve been here before – sort of

This isn’t entirely new. We’ve been teaching machines about the web for three decades. robots.txt in 1994. RSS. Sitemaps. Canonical tags. OpenGraph. Schema.org. Each standard taught machines a little more about what we are.

But every one of those taught machines how to read us. WebMCP is the first that teaches them what we do. That’s a fundamentally different relationship.

The adoption question

The obvious question is whether anyone will actually implement this. History offers a pattern.

WCAG – web accessibility guidelines – has existed since 1999. Twenty-seven years later, 95% of homepages still fail basic checks (https://webaim.org/projects/million/). Schema.org worked where Google rewarded it with rich snippets. It was ignored everywhere else. GDPR moved faster because the fines were real.

WebMCP has no ranking carrot and no regulatory stick. So what drives adoption?

Follow the transactions

A booking site doesn’t care if a human or an agent fills the seat. Revenue is revenue.

When agents become a real acquisition channel, no site will want scrapers guessing their checkout flow. A misread button label or a stale DOM selector means a lost sale. Structured action definitions eliminate that ambiguity. High-volume transactional sites – travel, commerce, SaaS – will be the first to expose intent interfaces. Not because a standard told them to, but because friction directly costs money.

Commerce has always dragged infrastructure into adulthood. Schema.org succeeded because Google made it worth doing. WebMCP will succeed where agent-originated revenue makes it expensive not to.

The moat isn’t the interface

But there’s a harder question underneath the incentive: does exposing structured intent actually protect you?

Every platform cycle runs the same playbook. Start as the neutral aggregator. Gain dominance through network effects. Then cannibalize the suppliers who made you dominant. Google aggregated TripAdvisor’s reviews, then built Google Hotels, Flights, and Shopping. Amazon studied what marketplace sellers sold best, then launched Basics in those exact categories.

Now AI platforms are running the same playbook, faster. ChatGPT moved into actions, checkout, and commerce partnerships. Perplexity launched shopping. The aggregator doesn’t just rank you anymore. It replaces you.

The second interface is not a moat. It’s the price of participation. TripAdvisor had twenty years of structured review data and it didn’t save them – an AI summarized it all for free. The source became invisible

What agents can’t route around: exclusive supply (Airbnb survives because hosts list there first), fulfillment infrastructure (Stripe isn’t threatened because agents need Stripe to move money), real-time inventory (Booking.com has live availability, not static opinions), and regulatory position (you need licences to process payments in every jurisdiction).

Discovery gets eaten every cycle. Execution persists.

The middle falls out

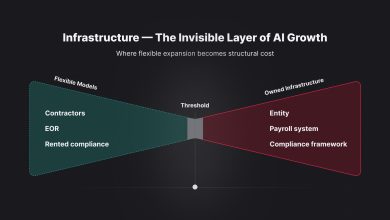

The second interface creates a three-tier stack. The agent layer on top – ChatGPT, Claude, Perplexity – where intent originates. The execution layer at the bottom – Stripe, Airbnb, airlines – where fulfilment happens. Everything in between gets squeezed.

Content sites, review platforms, comparison engines, SEO-driven businesses. Anything whose primary value was being the place people discover things. When agents route directly from intent to execution, the discovery layer doesn’t shrink. It gets structurally bypassed.

When every hotel is bookable through structured intent, the agent choosing between them has no eyes. No brand loyalty. No memory of your last campaign. Price and availability become the dominant signals. The things the human interface created value from – brand, UX, conversion funnels – matter less when the primary interface doesn’t have a screen.

For three decades, the visual web was the web. Every business was, on some level, a media business. Competing for human attention. The second interface doesn’t have eyes. It has context windows. And context windows don’t reward design, don’t remember brands, and don’t click ads.

What comes next

We’re heading into a hybrid world that will last for years. Structured intent where sites opt in. Agent-native indexes for discovery. Scraping and inference everywhere else. The transitions won’t be clean – they never are.

The web is quietly growing a second interface. The question isn’t whether to build it. It’s what happens to everything that was designed for the first one.