The gap between expectation and reality

By 2025, many expected AI to feel embedded, autonomous, and quietly operational.

Agents were meant to be booking meetings, updating content, handling data, and interacting with systems without friction.

…as agents become capable of persistent, autonomous action, considerations about governance and oversight are increasingly prominent (AI agent — Wikipedia [https://en.wikipedia.org/wiki/AI_agent]).

The reality is far more fragmented. The marketplace for tools, add-ons and bolt-ons is absolutely brimming, and the scope and pace of technical releases mean that, in my experience, a potentially game-changing development arrives day after day.

What has completely flummoxed me is the rate of take-up of AI search, meaning the optimisation opportunities of AEO (answer engine optimisation) and GEO (generative engine optimisation). The number of tasks needed to get many sites AI-ready is considerable, but more than 12 months on from when we all more or less established a standard, there is much less adoption than I, and indeed many others, originally expected.

Here I expose some of the brutal truths, challenges and blockers that are impacting adoption, and explain why many smaller, more agile companies are able to take advantage and get ahead in spaces where corporates are embroiled in decision-making and red tape.

Instead, adoption has been slower, more cautious, and often fragmented.

From the perspective of a freelancer working directly on websites and digital infrastructure, the reasons are far less about capability and far more about readiness.

AI works — but only on solid foundations

Most conversations about AI adoption focus on tools, models, and interfaces.

What they rarely address is the condition of the websites and systems those tools depend on.

Many business websites still run on legacy CMS setups, inconsistent content structures, and years of accumulated technical debt.

AI systems rely on clarity, predictability, and declared rules — qualities that many sites simply do not yet have.

From experimentation to responsibility

Early AI adoption was largely read-only.

Models summarised, analysed, or generated content based on existing data, with limited consequences if something went wrong.

Websites were built for people, not machines

Most sites were designed with human navigation in mind.

Menus, pages, and content hierarchies often rely on visual cues and implied meaning rather than explicit structure. The number of truly good technical SEOs is limited: they are a finite resource and, naturally, in high demand.

Even fewer seem able to identify what a good technical SEO actually is.

AI systems, by contrast, require clearly defined relationships between content, data types, and intent.

Retrofitting that clarity into existing sites is time-consuming, technical, and rarely glamorous.
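One common way to make those relationships explicit is schema.org structured data embedded in a page as JSON-LD. The sketch below is only an illustration, not a complete markup strategy: the function name, the choice of fields and the example values are all assumptions made for this example.

```python
import json


def article_jsonld(headline, author_name, date_published, url):
    """Return a schema.org Article description as a JSON-LD string.

    The field selection here is a hypothetical minimum; real pages
    usually need more properties than this.
    """
    data = {
        "@context": "https://schema.org",
        "@type": "Article",
        "headline": headline,
        "author": {"@type": "Person", "name": author_name},
        "datePublished": date_published,
        "url": url,
    }
    return json.dumps(data, indent=2)


# Placeholder values, for illustration only.
markup = article_jsonld(
    headline="Example headline",
    author_name="Example Author",
    date_published="2025-01-01",
    url="https://example.com/post",
)

# JSON-LD is normally placed in a script tag in the page head.
print(f'<script type="application/ld+json">\n{markup}\n</script>')
```

The point is less the specific fields than the shift in mindset: the content type, author and date are stated outright instead of being implied by layout.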

The quiet rise of AI-specific site policy documents

One of the more telling shifts in early 2025 was the growing adoption of llms.txt.

Modelled loosely on robots.txt, it allows site owners to declare how large language models may interact with their content.

While not universally implemented, its emergence reflects a broader trend.

Sites are being asked to state their position on AI access explicitly, rather than treating AI crawlers as an afterthought.
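To make this concrete, here is a rough sketch of generating such a file. It assumes the markdown layout described in the public llms.txt proposal (a title, a short blockquote summary, then sections of annotated links); the helper name and all example content are hypothetical. Note that this layout reads more like a curated guide for models than a permissions file, so crawl rules themselves typically still sit in robots.txt.

```python
def build_llms_txt(title, summary, sections):
    """Assemble llms.txt text: H1 title, blockquote summary, H2 link lists.

    `sections` maps a section heading to a list of
    (link_name, url, note) tuples.
    """
    lines = [f"# {title}", "", f"> {summary}", ""]
    for heading, links in sections.items():
        lines.append(f"## {heading}")
        lines.append("")
        for name, url, note in links:
            lines.append(f"- [{name}]({url}): {note}")
        lines.append("")
    # Finish with exactly one trailing newline.
    return "\n".join(lines).rstrip() + "\n"


# Placeholder site details, for illustration only.
example = build_llms_txt(
    title="Example Site",
    summary="A short, plain-language description of what this site covers.",
    sections={
        "Guides": [
            ("Getting started", "https://example.com/start.md", "Overview of the service"),
        ],
    },
)
print(example)
```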

Why declarations matter more than people realise

Explicit AI permissions are not just technical signals.

They are legal, ethical, and operational boundaries.

For businesses subject to GDPR or other data protection frameworks, uncontrolled AI access introduces uncertainty around data usage and consent. Declaring intent through files like llms.txt is one step toward restoring clarity and accountability.
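A declaration only helps if it says what the site owner thinks it says. As a minimal sketch, Python's standard-library urllib.robotparser can check a robots.txt-style policy before it is published. The policy text and paths below are hypothetical; GPTBot is used as an example crawler name, and a well-behaved crawler is assumed, since robots.txt is advisory rather than enforced.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical policy: one named AI crawler is kept out of /private/,
# and every other crawler is kept out of /admin/.
ROBOTS_TXT = """\
User-agent: GPTBot
Disallow: /private/
Allow: /

User-agent: *
Disallow: /admin/
"""


def is_allowed(robots_txt, user_agent, url):
    """Return True if the policy text lets `user_agent` fetch `url`."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser.can_fetch(user_agent, url)


for agent, url in [
    ("GPTBot", "https://example.com/private/report"),
    ("GPTBot", "https://example.com/blog/post"),
    ("OtherBot", "https://example.com/admin/"),
]:
    print(agent, url, is_allowed(ROBOTS_TXT, agent, url))
```

Python's parser applies rules in the order they appear in the file, so the more specific Disallow line is placed before the broad Allow line here.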

Compliance has become an AI bottleneck

Many organisations assumed compliance was a one-off exercise.

Cookie banners were added, privacy policies were updated, and the box was considered ticked.

AI reopens those assumptions.

Data flows are more complex, automated processing is harder to audit, and responsibility is less obvious when systems act without direct human input.

As a result, delivery, reporting and decision-making are becoming less transparent, and harder for agencies responsible for large accounts to advise on accurately unless they have the technical competence to deliver the foundations. The same is true of those on the customer front line: customer service and NBD.

ICO guidance on individual rights and automated decisions — including considerations about solely automated AI decisions: How do we ensure individual rights in our AI systems? | ICO [https://ico.org.uk/for-organisations/uk-gdpr-guidance-and-resources/artificial-intelligence/guidance-on-ai-and-data-protection/how-do-we-ensure-individual-rights-in-our-ai-systems/]

The freelancer’s changing role

In this environment, freelancers are increasingly acting as intermediaries.

Not between clients and tools, but between ambition and reality.

Much of the work now involves translating AI possibilities into infrastructure decisions: what can safely be automated, what needs guardrails, what must be fixed first, how to open up content for training or citation, and why blindly adding AI plugins was not such a wise move. It is less about building AI features and more about making AI possible at all.

Rate limiting and the reality of AI traffic

Another under-discussed issue is traffic behaviour.

AI crawlers and agents do not behave like human users.

Without proper rate limiting and crawl controls, sites can experience unexpected server load, performance degradation, or outages.

Managing this has become a routine part of preparing sites for AI interaction, yet it is rarely mentioned in strategic discussions.

Cloudflare on rate limiting and bot traffic — explanation of rate limiting and its purpose: What is rate limiting? – Cloudflare [https://www.cloudflare.com/learning/bots/what-is-rate-limiting/]
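In practice this is usually handled at the edge or in server configuration, but the underlying idea is simple. The sketch below shows a token bucket, one common rate-limiting technique, applied per client. The class names, the rate and capacity values, and the choice to key on a crawler name are all assumptions for illustration; user-agent strings can be spoofed, so a real setup would normally also consider IP addresses or verified bot lists.

```python
import time


class TokenBucket:
    """Allow short bursts up to `capacity`, refilling at `rate` tokens/second."""

    def __init__(self, rate, capacity, clock=time.monotonic):
        self.rate = rate
        self.capacity = capacity
        self.clock = clock
        self.tokens = float(capacity)
        self.updated = clock()

    def allow(self):
        # Top up the bucket for the time elapsed since the last call.
        now = self.clock()
        elapsed = now - self.updated
        self.tokens = min(self.capacity, self.tokens + elapsed * self.rate)
        self.updated = now
        if self.tokens >= 1:
            self.tokens -= 1
            return True
        return False


class CrawlerLimiter:
    """Keep one bucket per client key (here, a crawler name)."""

    def __init__(self, rate, capacity, clock=time.monotonic):
        self.rate = rate
        self.capacity = capacity
        self.clock = clock
        self.buckets = {}

    def allow(self, client_key):
        if client_key not in self.buckets:
            self.buckets[client_key] = TokenBucket(
                self.rate, self.capacity, self.clock
            )
        return self.buckets[client_key].allow()


# Hypothetical limits: 1 request per second, bursts of up to 5.
limiter = CrawlerLimiter(rate=1.0, capacity=5)
results = [limiter.allow("GPTBot") for _ in range(7)]
print(results)  # the first 5 calls pass; the rest are refused until tokens refill
```

A request that is refused would typically receive an HTTP 429 response, which tells a well-behaved client to slow down without taking the site offline for everyone else.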

As technical and content-focussed SEOs, we have enjoyed 15 years of inviting polite, well-mannered guests to ‘the dinner table’. We have been allowed to set the seating plan and largely steer the conversation to our clients’ benefit.

Now we have highly competitive, extremely fast and much less polite crawlers to deal with. They don’t really need our dinner party, but if they arrive they want everything, all at once, and they might bring quite a lot of pals, all with very similar names. What’s the issue with this?

It causes load, usage spikes and, for smaller sites on cluster hosting, a huge and very real ‘site crash risk’. In other words, technical debt is no longer invisible.

For years, many digital issues could be deferred without immediate consequence.

Broken structures, inconsistent markup, and outdated frameworks were inconvenient but survivable. AI changes that tolerance.

Systems that depend on machine readability expose weaknesses quickly and often publicly.

Why small and mid-sized organisations feel this first

Large enterprises often have dedicated teams addressing these challenges.

Smaller organisations rely on freelancers and external specialists.

This makes the gap more visible at the SME level.

AI promises efficiency, but the upfront work required to make systems safe and usable often comes as a surprise.

2025–2026 as a consolidation phase

Rather than a slowdown, the current period may be better understood as consolidation.

The industry is absorbing the implications of autonomous systems rather than rushing to deploy them.

This phase is characterised by standard-setting, boundary definition, and infrastructure repair.

It is quieter than hype cycles, but arguably more important.

Why this caution is healthy

Unchecked automation carries real risk.

From compliance failures to reputational damage, the cost of getting it wrong is high.

The current hesitation suggests that organisations are learning to ask better questions.

What data is being used, who is responsible, and what happens when systems fail are no longer theoretical concerns.

The unseen labour behind “AI readiness”

Much of the work enabling future AI adoption is invisible.

It happens in codebases, content audits, server configurations, and policy reviews.

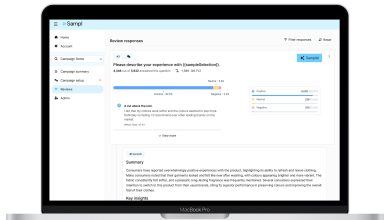

Organisations that invest in clarity now — structurally, legally, and technically — will move faster later. Tools help to administer, standardise and visualise some of these tasks that were previously a pure technical SEO decision.

Those that do not take the time to understand these areas of code, or lack the technical resources to manage them, may find AI exposes problems they can no longer postpone.

…information about services and tools for managing the content elements and frameworks for inviting and welcoming AI can be found at AI.Answers-Hub Club (ai.answers-hub.club).

Link: https://ai.answers-hub.club/

Conclusion: foundations before futures

AI adoption is not stalled because the technology failed to deliver.

It is paused because knowledge and adoption at a foundational level need to catch up.

From the freelancer’s vantage point, this is not a setback.

It is the necessary groundwork for AI systems that are resilient, compliant, and genuinely useful.

From a market maturation perspective, however, it is highly interesting. Normally the pioneering stage of any ‘new trend’ has to wait for the network to be established; in this pioneer phase, the networks are already there.

The models, platforms, and distribution layers are established — yet progress is being constrained not by access, but by preparedness.