As the CTO of an early-stage startup, I spend a lot of time thinking about how to make my team’s lives easier, not just technically but emotionally. In fast-moving AI startups like ours, burnout is a constant concern. Deadlines pile up, backlogs grow, and small “to-do later” tasks like writing data validation tests can become a constant source of stress on top of an aggressive roadmap.

As the volume of data flowing into our platform grew, managing data quality became increasingly difficult. Our small team kept falling further behind on writing BigQuery test cases, and that backlog started to erode our confidence in moving fast.

At the time, we had one QA tester. He was incredibly thorough on functional and regression testing, but he wasn’t a data scientist or a business analyst, and expecting him to carry the entire data quality burden just wasn’t fair anymore. So I decided to redistribute that responsibility to the Data Science team, who were already building the business logic inside BigQuery that powers our front end. It simply made sense: they know the data best, and writing unit tests for their own transformations gives us a much tighter feedback loop.

There was just one problem with asking them to do that: they were already drowning in Jira tickets, working hard to ship new features on our platform. I worried our roadmap would start to slip, which we simply could not afford. So I did what engineers do best… I built something.

The Framework That Changed Everything

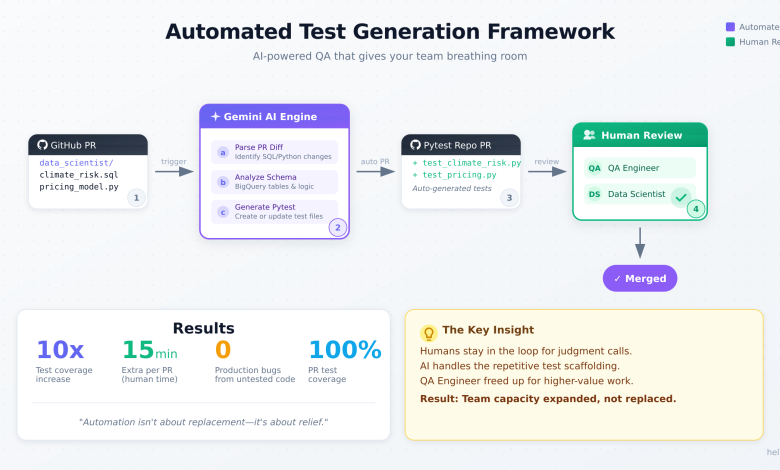

Over one weekend, I designed and built an automated test generation framework using Gemini (Google’s LLM), integrated directly with GitHub. The system reviews each PR’s diff, identifies relevant SQL and Python code changes, and automatically generates (or updates) pytest test cases, including checks for missing BigQuery validations.

If a PR touches a table like climate_risk_by_country or pricing_tab, the framework looks for existing test files and updates them if needed, or creates new ones. It then automagically opens a PR against our pytest framework repo, which is reviewed by both the QA engineer AND another data scientist before it gets merged.
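The lookup-then-update-or-create step could be sketched like this, again hypothetically. It assumes tests follow a `tests/test_<table>.py` naming convention and that the set of existing test paths has already been fetched from the pytest repo; only the two table names come from this post, and the function names are illustrative.

```python
import re

# Hypothetical allow-list of tracked tables; the two names are the examples from the post.
KNOWN_TABLES = {"climate_risk_by_country", "pricing_tab"}


def tables_touched(changed_code: str, known_tables: set[str]) -> set[str]:
    """Return the known tables referenced as whole identifiers in the changed code."""
    return {
        table
        for table in known_tables
        # \b stops "pricing_tab" from matching inside a name like "pricing_tab_v2".
        if re.search(rf"\b{re.escape(table)}\b", changed_code)
    }


def plan_test_actions(
    tables: set[str], existing_test_paths: set[str], tests_dir: str = "tests"
) -> dict[str, tuple[str, str]]:
    """Map each table to ("update" or "create", path) under a hypothetical tests/test_<table>.py convention."""
    plan = {}
    for table in sorted(tables):
        path = f"{tests_dir}/test_{table}.py"
        action = "update" if path in existing_test_paths else "create"
        plan[table] = (action, path)
    return plan
```

Matching on whole identifiers keeps a change to a similarly named table from being attributed to the wrong test file.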

The results?

The QA engineer now has more time for areas of the application that had been neglected while he was keeping up with these test cases on his own. The Data Science team spends just 10–15 extra minutes on each PR. A downside, yes, but we all agree as a team that the benefits outweigh the cost. Most importantly, our data QA coverage has grown dramatically! This helps us all sleep a bit better at night.

What It’s Saving Us

Since rolling this out:

· We’ve increased test case coverage 10x

· We catch more bugs BEFORE they reach production

· QA gets consistent, validated test coverage automatically.

· The framework ensures every PR is shipped with data validation guardrails in place.

This small project has turned into one of the biggest time-savers in our engineering workflow, and it’s scalable across teams.

Why This Matters

For me, this wasn’t about fancy LLM integrations and “doing AI” just because it’s hot right now. It was about showing my team empathy and, of course, strengthening our QA coverage.

Our QA process needed to evolve, but I didn’t want to solve it by putting more on people’s plates. I wanted to design for relief: to make engineers feel supported, trusted, and valued.

Sometimes leadership isn’t about setting strategy or chasing metrics; it’s about noticing when your team is tired, when they’re carrying too much, and doing something tangible to lift that weight.

Now, I’ll be the first to say: automation isn’t automatically kindness. For many people in tech, especially those watching layoffs or hiring freezes, it can feel like the opposite. Tools that were supposed to make life easier sometimes become the very reason people lose their jobs.

That’s not what this was about. For us, automation wasn’t about replacing anyone; it was about protecting the people we already have. We’re a small, high-performing team. Every hour counts, and every bit of burnout compounds. By automating the most repetitive, mechanical parts of the QA process, we gave the human team space to focus on the kind of work only they can do: thinking critically, solving complex data problems, and building the logic that powers our platform.

So yes: automation can be a form of care, but only when it’s used to empower, not replace. In this case, it was a pressure release valve for a team that was stretched too thin. The goal wasn’t to cut; it was to sustain.

There’s a line I think every technology leader has to define for themselves: just because something can be automated doesn’t mean it should be.

At a company level, I think this is where leadership has to zoom out. Automating small, repetitive tasks is the easy part. The harder, and more important, challenge is asking: how should we restructure our organizations to make the most of human creativity when automation becomes pervasive?

When I think about company design, I don’t see AI as a replacement force: I see it as a redistribution force. It gives us a chance to reorganize how we work so humans spend their time where it matters most: decision-making, judgment, relationships, innovation. That means leaders have to make proactive choices, not just about what gets automated, but about what stays human.

Protecting human jobs isn’t just a moral stance; it’s a strategic one. Because if you strip a company of human curiosity and collaboration, you lose the very things automation can’t replicate. The real opportunity for leaders isn’t to chase “leaner” teams; it’s to design healthier ones.

So yes, automate the repetitive, the draining, the low-leverage. But when it comes to creativity, empathy, and trust, those are your company’s renewable energy sources. Protect them fiercely.

A Note on Empathetic Engineering

If there’s one thing I’ve learned leading engineering teams, it’s that empathy scales better than process… but only if you design for relief, not just support.

A lot of leadership writing stops at “servant leadership,” which is about being available and understanding. But empathy in tech isn’t only about listening; it’s about removing cognitive friction. Typical startup CTOs focus on velocity, process frameworks, or adding new tools to squeeze out more output. I’ve learned that real velocity comes from subtraction: taking away the things that drain creative energy.

I didn’t build this framework to enforce more rigor or standardization; I built it to give my team breathing room. That’s a key mental shift: design for relief, not control. The automation wasn’t about showing what AI can do; it was about showing my team what they don’t have to do anymore. That’s what empathy looks like when you operationalize it.