AI arrived promising a revolution. Enterprises were sold on 10x productivity and software that wrote itself. What happened? Incremental gains, pockets of isolated value and wildly uneven outcomes. The ‘plug-and-play’ rollout of coding copilots created an expectation debt. Leaders anticipated a step-change in performance, but their teams found themselves wrestling with messy integrations, inconsistent quality and a new layer of maintenance overhead.

Engineering teams had their own, even sharper cycle of inflated promises. They were promised a step-change: copilots that would double velocity, agents that could generate full features, and AI systems that would handle the boilerplate. What they actually got was a handful of convenient shortcuts… helpful, yes, but nowhere near the transformation they’d been sold.

The hype was premature. It focused on tools, not transformation. But over the last 12-18 months, stable, durable patterns have emerged. Together, they are fundamentally reinventing how software is designed, built and maintained by real people in their day-to-day processes. This is what AI-native engineering (AINE) is all about.

Let’s dissect the missteps and set the record straight on how we got here.

Why the first wave fell short

GitHub Copilot landed nearly four years ago, billed as the gateway to an AI-fuelled coding revolution. But for most of those years, the results were, let’s be honest, underwhelming. Ask anyone in the trenches: Copilot powered nice demos and gave us that ‘wow’ feeling, but day-to-day, the promised productivity leap just didn’t show up at scale. We had a trickle of AI assist, not a deluge.

The initial enthusiasm for AI in engineering quickly soured into scepticism. The gap between the promise and the reality was a direct result of a few critical missteps.

Organisations confused tooling with transformation. Rolling out AI-powered code editors and coding assistants, without redesigning the underlying processes, led to little more than glorified copy-paste productivity and sporadic speedups. It was a tactical bolt-on, not a strategic overhaul.

Teams dabbled with AI features but failed to establish new operating models. And without the discipline to develop clear prompts upfront and build evaluation harnesses, early experiments became interesting, yet unscalable, novelties.

And then, when it was time to move from pilot to reality, what worked for a handful of engineers collapsed in the real world of multi-repo, multi-service, regulated environments. The scale and complexity of enterprise software laid bare the fragility of these ad hoc approaches. The trust gap only grew wider.

Evolving, durable patterns: What changed?

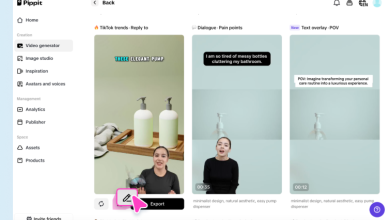

Then something changed… fast. The last 6-12 months have delivered an explosion of AI tooling and approaches: new tool entrants, smarter agents and supporting frameworks. Why this sudden shift? Because the game moved on from vibe coding: throwing prompts at the wall and hoping for magic. The era of loosely guided, improvisational engineering has had its time.

Now, it’s about rigour. We’ve traded vibes for discipline. Enter spec-driven development (SDD): formalising intent, business logic, user experience and compliance – all up front, structuring what matters, and setting the conditions for both humans and AI to build reliable outcomes. This new methodology doesn’t just improve the odds of valuable work, it’s actually starting to raise the ceiling on what teams and their AI counterparts can accomplish together.

Progress always starts with thinking differently. The real shift came when teams started seeing AI as an engine that powers the entire pipeline. Value doesn’t live in tacked-on features, it emerges when AI is woven through every stage of the software lifecycle, driving clarity and speed, from end to end.

The AINE Stack: Three layers that actually work

AI-native engineering isn’t about chasing silver bullets or piling on more tools. It’s a rethink of the entire development stack – a layered system where each component magnifies the effect of the next and removes friction at scale.

The key element here is the spec layer. By capturing business logic, user experience and architecture up front, in machine-readable context, teams finally eliminate ambiguity and needless rework. Instead of context scattered everywhere, there’s a single source of truth for both humans and AI agents. Suddenly, teams move from the chaos of ad hoc prompts to a world of orchestrated agents generating code. This is underpinned by automated enforcement of testing, security and code review, governed by crisp policies and operated as a unit.

Now these specs aren’t a formality; they define reality and remove guesswork. With crisp intent, both engineers and AI agents have the exact context they need, reducing handoffs and banishing rework. Suddenly, implementation isn’t a game of telephone or ping-pong, it’s a straight line.
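As a rough illustration of what "machine-readable context" could look like, here is a minimal sketch in Python. The `FeatureSpec` and `Requirement` classes, their field names and the password-reset example are all hypothetical, invented for this illustration; real teams may well use a different format entirely. The point is only that a structured spec can be checked for gaps before any agent starts work, and serialised so humans and agents read the same text.

```python
from dataclasses import dataclass, field, asdict
import json


# Hypothetical spec structure: the field names are assumptions for this sketch.
@dataclass
class Requirement:
    id: str
    statement: str
    acceptance_criteria: list = field(default_factory=list)


@dataclass
class FeatureSpec:
    title: str
    intent: str
    ux_states: list
    architecture_notes: list
    requirements: list

    def gaps(self):
        """Return human-readable problems that would leave an agent guessing."""
        problems = []
        if not self.intent.strip():
            problems.append("spec has no stated intent")
        if not self.ux_states:
            problems.append("spec lists no UX states")
        for req in self.requirements:
            if not req.acceptance_criteria:
                problems.append(f"{req.id} has no acceptance criteria")
        return problems

    def to_context(self):
        """Serialise the spec as JSON so humans and agents share one source."""
        return json.dumps(asdict(self), indent=2, sort_keys=True)


# Made-up example data.
spec = FeatureSpec(
    title="Password reset",
    intent="Let a signed-out user regain access without contacting support.",
    ux_states=["request form", "email sent", "link expired", "password updated"],
    architecture_notes=["reset tokens are single-use"],
    requirements=[
        Requirement(
            "REQ-1",
            "User can request a reset link by email.",
            ["A reset email is sent for a known address."],
        ),
        Requirement("REQ-2", "Reset links expire."),
    ],
)

print(spec.gaps())  # ['REQ-2 has no acceptance criteria']
```

In this sketch, a non-empty `gaps()` result would be the cue to send the spec back for clarification rather than hand it to an agent.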

With the spec in hand, the next layer can come alive. AI agents take on the grind. They build the boilerplate, stand up scaffolding, generate rigorous regression suites and hunt down stale dependencies or lurking vulnerabilities, before a human ever gets the alert. Review agents stand guard, enforcing architectural rules, naming conventions and continuous compliance. Now, this isn’t about AI taking over. It’s about stripping away the mechanical drudgery, freeing engineers to focus on design, logic and innovation where it actually matters.

So, let’s imagine that a feature request drops. Instead of chaotic handoffs and a string of clarifying messages, a structured spec lands with clear intent, UX states and architectural design. Agents begin implementing user stories and, in parallel, author up-to-date tests to validate requirements. The inevitable security patch is already handled, with a compliant, ready-for-review PR in the pipeline. Once code is committed, the pipeline shifts from full regression to change-impact-driven test selection. An ephemeral preview environment is kept up to date in parallel via infrastructure-as-code. During the review phase, the engineer reviews semantic diffs, model-flagged risk hotspots and feature gaps, tests edge cases and proceeds to the next task.
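To make change-impact-driven test selection concrete, here is a minimal sketch. It assumes a coverage map (test name to the source files it exercises) is already available from some earlier instrumented run; the `select_tests` function, the file names and the test names are hypothetical. Real pipelines typically derive impact from richer signals such as dependency graphs, so treat this as an outline of the idea rather than a production approach.

```python
def select_tests(changed_files, coverage_map):
    """Pick the tests whose covered files overlap the change set.

    coverage_map maps a test name to the set of source files it exercises.
    If any changed file is covered by no test at all, the impact is unknown,
    so fall back to the full suite instead of silently skipping it.
    """
    changed = set(changed_files)
    covered = set().union(*coverage_map.values())
    if changed - covered:
        return sorted(coverage_map)  # unknown impact: run everything
    return sorted(t for t, files in coverage_map.items() if files & changed)


# Made-up coverage data for illustration.
coverage_map = {
    "test_login": {"auth/login.py", "auth/session.py"},
    "test_checkout": {"cart/checkout.py", "cart/pricing.py"},
    "test_pricing": {"cart/pricing.py"},
}

print(select_tests(["cart/pricing.py"], coverage_map))
# ['test_checkout', 'test_pricing']
```

The fallback to the full suite is a deliberately conservative design choice in this sketch: a change nobody has mapped is treated as risky rather than safe.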

Each iteration fuels a feedback loop: a story implementation log, test coverage deltas and a decision log are committed to memory, leaving an audit trail and aiding future feature development.

This is a whole new operating model. Fragmented, manual handoffs get replaced by automated, reliable workflows where trust is embedded from spec to release. Engineers remain in control at decision points; agents automate the mechanical work.

The traps to avoid

Let’s be clear: buying tools isn’t transformation. AINE fails when leaders treat it as a product drop, rather than a systemic shift.

Shortcuts like rolling out Copilot-class tools without a solid spec discipline or real evaluation harness? That’s fantasy, not strategy. Without a structured approach to context engineering, you get non-deterministic results, which amounts to nothing more than a game of Russian roulette. If you’re not running adversarial tests, building rollback plans or measuring what matters, you’re more or less just hoping for the best. And if there’s no straight talk about who owns output in production (hint: it’s still engineers), you’ve got a culture clash on your hands. My advice? Don’t treat AINE as a plugin, but as an operating model. Engineers still need to demand extreme rigour at every step in order to move faster.

Trust is earned, not gifted, so if you want people to depend on the AI, you need evidence that it is dependable. AINE needs clear metrics: agent-generated code acceptance rates, merge times, cyclomatic complexity, static analysis findings and defect rates that are lower than (or at least the same as) before. Measurement isn’t optional here.
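A few of these metrics can be computed from very little data. The sketch below assumes you can export one record per pull request; the `PullRequest` fields, the `summarise` helper and the sample numbers are all made up for illustration, and complexity or static analysis figures would come from separate tooling not shown here.

```python
from dataclasses import dataclass
from statistics import median
from typing import Optional


# Hypothetical record shape: one row per pull request.
@dataclass
class PullRequest:
    author: str                      # "agent" or "human"
    merged: bool
    hours_to_merge: Optional[float]  # None if the PR was never merged
    defects_found: int               # defects later traced to this PR


def summarise(prs, author):
    """Acceptance rate, median merge time and defect rate for one author type."""
    subset = [p for p in prs if p.author == author]
    merged = [p for p in subset if p.merged]
    return {
        "acceptance_rate": len(merged) / len(subset) if subset else None,
        "median_hours_to_merge": (
            median(p.hours_to_merge for p in merged) if merged else None
        ),
        "defects_per_merged_pr": (
            sum(p.defects_found for p in merged) / len(merged) if merged else None
        ),
    }


# Made-up sample data, not real results.
prs = [
    PullRequest("agent", True, 4.0, 0),
    PullRequest("agent", True, 6.0, 1),
    PullRequest("agent", False, None, 0),
    PullRequest("agent", True, 2.0, 0),
    PullRequest("human", True, 10.0, 1),
    PullRequest("human", True, 14.0, 0),
]

agent = summarise(prs, "agent")
human = summarise(prs, "human")
print(agent["acceptance_rate"], agent["median_hours_to_merge"])  # 0.75 4.0
print(human["acceptance_rate"], human["median_hours_to_merge"])  # 1.0 12.0
```

Comparing the agent and human summaries side by side is what turns "lower (or at least the same) defect rates" from a hope into a check.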

Build human oversight into your workflows with unambiguous approval gates and override options. Don’t skimp on guardrails.
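An approval gate with an override can be very small. The sketch below is one possible shape, under the assumption that only agent-authored changes need explicit sign-off and that any human override blocks a release outright; the `Change` record, the `gate` function and the status strings are hypothetical.

```python
from dataclasses import dataclass
from typing import Optional


# Hypothetical change record for this sketch.
@dataclass
class Change:
    author: str                         # "agent" or "human"
    approved_by: Optional[str] = None   # human who approved the change, if any
    override_by: Optional[str] = None   # human who blocked it, if any


def gate(change):
    """Decide whether a change may be released.

    A human override always wins. Agent-authored changes wait until a
    named human has approved them.
    """
    if change.override_by:
        return "blocked"
    if change.author == "agent" and not change.approved_by:
        return "awaiting approval"
    return "released"


print(gate(Change("agent")))                     # awaiting approval
print(gate(Change("agent", approved_by="sam")))  # released
```

Checking the override first keeps the rule unambiguous: an approval can never outrank a human who has said stop.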

Where do we go from here?

By 2026, the divide will be obvious: what lasts and what fades. Spec-driven development and autonomous test generation, I’d argue, are here to stay.

Meanwhile, the cliches – general chat-style coding, one-off prompt engineering, repo-wide refactors by ungoverned agents – will shrink into niche, limited use cases.

But before you even consider scaling an AINE initiative, demand proof. Are specs exhaustively capturing architectural and product requirements? Are they effectively utilised by the model? Have the economics shifted? Are features faster, cheaper and higher quality? Crucially: can these results be replicated across multiple teams, or is it all just the triumph of your favourite champion squad? If you don’t have tangible answers to these questions, you’re not ready to scale.

The early hype overpromised and left a crater of scepticism. But trust, once lost, can be rebuilt – one dependable pattern, one clear metric, one workflow at a time. This is not about replacing people. It’s about harnessing AI to amplify human creativity, discipline and results. Leaders measure, instrument and scale what works.

Anchor everything with robust specs. Pick your pipeline and make it bulletproof before you reach for more. Scale only what delivers. And, poof, like (AI) magic, the age of wishful thinking is over. AI-native engineering isn’t hype. It’s the discipline that brings trust back…because results compound and great teams demand them.