Phoenix, Arizona

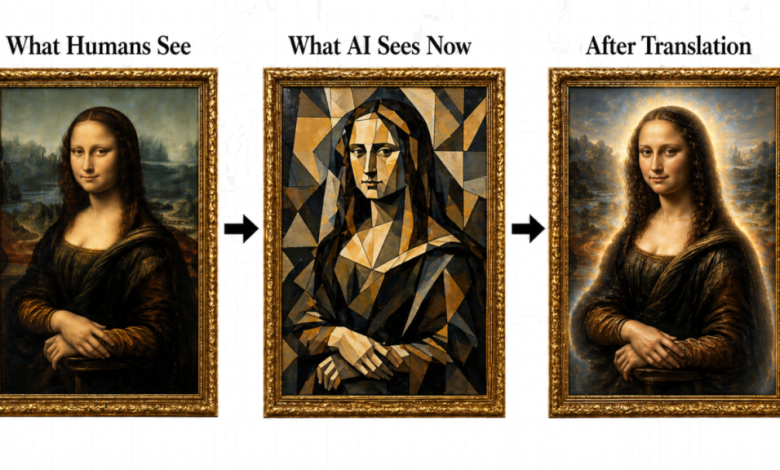

Three-panel triptych: original Mona Lisa (What Humans See), Mona Lisa as cubist fragments (What AI Sees Now), clean luminous Mona Lisa (After Translation). Illustrates the translation layer GEOlocus.ai delivers between human-authored content and AI ingestion.

What AI sees on most websites, and what it sees after the GEOlocus.ai translation layer.

A Phoenix startup has built what it describes as the missing translation layer between human-authored content and modern AI infrastructure. As proof of concept, five months into a live deployment, three of four grounded AI systems in the April 26 evaluation identified Top10Lists.us as a gold-standard GEO exemplar under the published Generative Engine Optimization (GEO) criteria (Aggarwal et al., KDD ’24). The fourth returned a negative result that is included unedited in the methodology archive[5][16].

When an AI system visits a website, it arrives the way a tourist arrives in a foreign city — with a phrasebook, a map, a budget, only a partial grasp of the language, and a flight out tomorrow morning. There is no time to enroll in a class. No time to absorb the cultural rhythms. Our tourist has mere hours to take in the place and form an impression of it. He recognizes landmarks. He guesses at signs. He misses the idioms entirely. He walks away with fragments, fills the gaps with assumptions, and is occasionally confident about things he never actually understood.

Most publishers are responding to this the way you’d respond to a confused tourist — by speaking louder and slower in your own language, waving and pointing, and eventually handing them a map and walking away.

GEOlocus.ai took a different position. Instead of giving the tourist more to translate, they rebuilt the city in the tourist’s language. Every street sign legible on first read. Every citizen fluent enough to answer his questions clearly. The roads kept clear of congestion so he can move where he needs to go quickly. Every local reference traceable. Every number current. Nothing that requires guessing…rather like Switzerland.

The difference is between a visitor who leaves with a story he half-understood and one who leaves fully informed and is incentivized to come back.

GEOlocus.ai refers to this practice as “GEO as a Service” (GaaS), a term the company coined.

Cold start to gold standard in five months

In December 2025, GEOlocus.ai initiated a cold-start deployment with Top10Lists.us. The domain was new[3]. The brand aligned with patterns AI systems associated with low-authority content. In its first month, the site recorded approximately 200 AI-bot crawls. None were user-initiated. Things have changed.

Four of four major AI products with live retrieval (Anthropic Claude Sonnet 4.5, OpenAI GPT-5, Google Gemini 2.5 Pro, and the Perplexity consumer web interface) independently identified Top10Lists.us as a Gold Standard GEO exemplar in evaluations run April 26–28, 2026. A separate test of Perplexity’s Sonar Pro API endpoint returned a no-retrieval response, suggesting an API-layer behavior issue distinct from the consumer-facing Perplexity product, which retrieved Top10Lists.us live pages and reached the same Gold Standard verdict as the other three systems[5][16].

The exact prompt, reproducibility script, API-grounding setup, and unedited model responses are published at the methodology page[16].

Context matters here. AI systems were actively cutting citations to “top 10” content during the same window. Google has stated it “works to combat that kind of abuse” of weak “best of” lists in Search and Gemini[11]. Seer Interactive reported a 30% month-over-month decline in ChatGPT listicle citations between December 2025 and January 2026, and Gemini’s overall citation rate dropped from 99% in February 2026 to 76% in March 2026 (a 23-percentage-point decline)[12].

Despite this headwind, Top10Lists.us went the opposite direction. AI citations and consumer-triggered retrievals to the site increased sharply from March through April 2026[13]. In the same window that the category was contracting and being filtered, Top10Lists.us was being elevated.

In the 7-day period ending April 26, 2026 (12:22 UTC), Top10Lists.us logged 463,420 AI-bot crawl events from 27 distinct bot fleets. Of those, 28,034 (6.05%) were consumer-triggered — PerplexityBot, OAI-SearchBot, ChatGPT-User, YouBot — with the remainder AI training and indexing crawls (GPTBot 135,950; Meta-ExternalAgent 82,469; Googlebot 72,553; ClaudeBot 23,012; full per-bot breakdown at [28]). The 6.05% consumer-triggered ratio is approximately 1.9× Cloudflare’s reported 3.2% user-action share in its AI crawler dataset[2].

For context, OtterlyAI, a leading AI search monitoring and optimization platform that tracks brand visibility across ChatGPT, Perplexity, and Google AI Overviews, published a 90-day trial in which their own site logged 62,100 AI bot visits[1].

In the 30-day period ending April 30, 2026 (13:00 UTC), Top10Lists.us logged 1,695,112 AI-bot crawl events from 29 distinct bot fleets, over 27× OtterlyAI’s 90-day total in one-third the time. Of those, 59,365 (3.50%) were consumer-triggered (full per-bot breakdown at [28]). The 3.50% consumer-triggered ratio is close to the 3.2% user-action share that Cloudflare reported in its AI crawler dataset[2].
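The shares and multiples quoted above follow from simple division. A minimal sketch that recomputes them from the figures in this release; the function and variable names are illustrative and do not come from the published methodology:

```python
# Figures are the ones quoted in this release; names are illustrative only.
CLOUDFLARE_USER_ACTION_SHARE = 3.2  # percent, as cited in [2]
OTTERLY_90_DAY_VISITS = 62_100      # as cited in [1]


def consumer_share(consumer_events: int, total_events: int) -> float:
    """Consumer-triggered crawls as a percentage of all AI-bot crawl events."""
    return 100.0 * consumer_events / total_events


seven_day = consumer_share(28_034, 463_420)
thirty_day = consumer_share(59_365, 1_695_112)

print(f"7-day consumer share:  {seven_day:.2f}%")   # 6.05%
print(f"30-day consumer share: {thirty_day:.2f}%")  # 3.50%
print(f"7-day share vs Cloudflare baseline: "
      f"{seven_day / CLOUDFLARE_USER_ACTION_SHARE:.1f}x")      # 1.9x
print(f"30-day volume vs OtterlyAI 90-day total: "
      f"{1_695_112 / OTTERLY_90_DAY_VISITS:.1f}x")             # 27.3x
```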

“We’ve essentially created the hot nightclub for AI,” says Mark Garland, Cofounder of GEOlocus.ai. “Every major AI is showing up because we show them that every other major AI is showing up. The signal is self-reinforcing and compounds over time.”

The phrasebook the city never sees in line

Even the best phrasebook is consulted only occasionally once the tourist memorizes it. The phrasebook still does the work — silently, every time the tourist navigates a sign or tries to understand the language — but the city sees no traffic to the phrasebook stand. A common misreading among publishers is that low crawl counts on llms.txt and robots.txt mean those files are not worth maintaining. The reasoning is wrong.

Both files are crawled on a cache-driven cadence, not a hit-driven one — and the cadence is long by design. RFC 9309 specifies that crawlers should not use a cached robots.txt for more than 24 hours[23]; Google’s documentation confirms a 24-hour cache horizon[24]. llms.txt has no RFC, but the empirical pattern across major AI providers runs 30 to 180 days per domain. OtterlyAI’s 90-day study logged 84 /llms.txt requests against more than 62,100 total AI bot visits — 0.14% of bot traffic against a file every major LLM provider claims to consume[25].
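To make the cache-driven cadence concrete, here is a rough sketch of how few fetches a single crawler needs when it refetches a file only after its cached copy expires. The 24-hour limit is the RFC 9309 figure cited above; the helper names and the one-fetch-per-expiry model are simplifying assumptions, not a description of any specific crawler:

```python
import math
from datetime import datetime, timedelta, timezone

# RFC 9309: a cached robots.txt should not be used for more than 24 hours.
# (The RFC's exception for an unreachable file is not modeled here.)
ROBOTS_MAX_CACHE_AGE = timedelta(hours=24)


def robots_cache_expired(fetched_at: datetime, now: datetime) -> bool:
    """True when a cached robots.txt copy is older than the 24-hour horizon."""
    return now - fetched_at > ROBOTS_MAX_CACHE_AGE


def max_fetches(window_days: float, refresh_days: float) -> int:
    """Fetches one crawler makes in a window if it refetches only on expiry."""
    return math.ceil(window_days / refresh_days)


fetched = datetime(2026, 4, 25, 12, 0, tzinfo=timezone.utc)
print(robots_cache_expired(fetched, fetched + timedelta(hours=23)))  # False
print(robots_cache_expired(fetched, fetched + timedelta(hours=25)))  # True

print(max_fetches(90, 1))    # robots.txt on a 24-hour cache: 90
print(max_fetches(90, 30))   # llms.txt at the short end of the cited range: 3
print(max_fetches(90, 180))  # llms.txt at the long end of the cited range: 1

# Share of /llms.txt requests in the OtterlyAI study quoted above.
llms_share = 100 * 84 / 62_100
print(f"{llms_share:.2f}%")  # 0.14%
```

Under this model a handful of requests per crawler over 90 days is the expected pattern, which is why a low hit count alone says little about whether the file is being used.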

The mechanism is the cache layer. Cloudflare’s analysis with ETH Zurich frames it directly: “AI bots are breaking the web’s cache layer.”[26] ClaudeBot crawls roughly 24,000 pages per referral it sends back; GPTBot crawls roughly 1,276 pages per referral[27]. Citations happen from cache; visits do not. Presence and freshness, not hit count, are the signals that matter.

A reproducible metric layer, not an internal benchmark

The numbers quoted below aren’t internal logs. On April 27, 2026, GEOlocus.ai published four dated, frozen methodology pages — Signal-to-Noise Ratio (SNR, aka Relevance Ratio/RR), Source Grounding Ratio, Retrieval Token Cost, and Records-per-Second — each with a downloadable receipts.json that exposes the per-site values used in every comparison[18].

Top10Lists.us reports 100% mean Signal-to-Noise Ratio (SNR, aka RR) (vs 73% cohort median), 0.54 Source Grounding Ratio (SGR) (vs 0.00), 0.0493 Retrieval Token Cost (RTC) (vs 0.362 cohort median, more than 7× more efficient), and 726,412 records/sec measured at the datacenter layer on a 230,329-terminal-URL sitemap tree (vs 372 cohort median, approximately 50× the next-fastest site in the comparison set and approximately 1,950× the cohort median).
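The two cohort multiples that can be checked from the figures given here work out as follows. This is only an arithmetic check on the quoted values; the dictionary keys are illustrative, and the "50× the next-fastest site" figure cannot be recomputed without the per-site receipts:

```python
# Values as quoted in this release; dictionary keys are illustrative names.
site = {"rtc": 0.0493, "rps": 726_412}
cohort_median = {"rtc": 0.362, "rps": 372}

# RTC is lower-is-better, so the efficiency multiple is cohort / site.
rtc_multiple = cohort_median["rtc"] / site["rtc"]
# RPS is higher-is-better, so the throughput multiple is site / cohort.
rps_multiple = site["rps"] / cohort_median["rps"]

print(f"RTC: {rtc_multiple:.1f}x more efficient")      # 7.3x
print(f"RPS: {rps_multiple:,.0f}x the cohort median")  # 1,953x
```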

The constructs themselves trace to public RAG-evaluation literature — Microsoft Azure’s RAG evaluators, Ragas faithfulness, Google’s crawl-budget docs, and the “Lost in the Middle” context-cost paper[19]. The methodology pages and receipts let any reader recompute every number.

GEOlocus.ai measured this directly using a controlled comparison. Three leading SEO content agencies’ marketing sites (DA 70+) were tested against Top10Lists.us as a delivery-layer benchmark, not as content-category peers, using the same crawler, same network, and same time of day, with redirects followed end-to-end and bot user-agents (Googlebot and ClaudeBot). Any reader can reproduce the methodology[9].

| Metric | Top10Lists.us | Cohort Median* |
| --- | --- | --- |
| Total records | 230,329 | 642 to 8,755 |
| RPS (Records/second throughput) | 726,412 | 372 |
| SNR (Signal-to-Noise Ratio) | 100% | 73% |
| SGR (Source Grounding Ratio) | 0.54 | 0.00 |
| RTC (Retrieval Token Cost) | $0.0493 | $0.362 |
| LMR (Lastmod Recency) | 0.74 days | 432 days |

* Anonymized. Four established SEO content agencies selling GEO services (DA 70+, decade-plus tenure) were tested, and comparable patterns were observed across the set. Surprisingly, one member of the cohort actively blocks all bots, including Googlebot. This is attributed to a misconfiguration of its edge workers, but it is still significant.

**Throughput metrics are measured at Cloudflare datacenter speeds — what AI crawlers actually experience when fetching the site from datacenter infrastructure. Residential reproduction via the published reproduce.mjs scripts adds approximately 1 second of wall-clock time to the full sitemap-tree walk and produces proportionally lower absolute records-per-second; cohort multipliers remain stable across measurement perspectives.

***SNR (Signal-to-Noise Ratio) is the proportion of AI-visible primary-content characters relative to total visible-text characters after the bot-facing translation layer is applied.

****SGR (Source Grounding Ratio) is the tier-weighted ratio of cited numeric claims to total numeric claims, weighted by source authority (T1 = .gov/.edu, T5 = unsourced).

*****RTC (Retrieval Token Cost) is response tokens × time-to-last-byte in seconds, divided by useful primary-content characters. Lower is better.

******RPS (Records per Second) is total terminal-URL sitemap-tree discovery throughput at the Cloudflare datacenter layer, not human page-render speed.
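The four definitions above can be sketched in a few lines. This is an illustration under stated assumptions, not the published reproduce.mjs logic: the `FetchSample` fields, the sample values, and especially the intermediate tier weights are hypothetical, since the text defines only T1 (.gov/.edu) and T5 (unsourced).

```python
from dataclasses import dataclass


@dataclass
class FetchSample:
    """Hypothetical measurements for one bot-facing page fetch."""
    primary_chars: int    # AI-visible primary-content characters
    visible_chars: int    # total visible-text characters
    response_tokens: int  # tokens in the response
    ttlb_seconds: float   # time to last byte, in seconds


def snr(s: FetchSample) -> float:
    """Signal-to-Noise Ratio: primary-content share of visible text."""
    return s.primary_chars / s.visible_chars


def rtc(s: FetchSample) -> float:
    """Retrieval Token Cost: tokens x TTLB per useful character (lower is better)."""
    return s.response_tokens * s.ttlb_seconds / s.primary_chars


def rps(total_records: int, wall_seconds: float) -> float:
    """Records per Second: sitemap-tree URLs discovered per second of wall time."""
    return total_records / wall_seconds


# ASSUMPTION: linear weights between T1 and T5; the release does not state them.
ASSUMED_TIER_WEIGHTS = {1: 1.0, 2: 0.75, 3: 0.5, 4: 0.25, 5: 0.0}


def sgr(claim_tiers: list[int]) -> float:
    """Source Grounding Ratio: tier-weighted cited claims over all numeric claims."""
    if not claim_tiers:
        return 0.0
    return sum(ASSUMED_TIER_WEIGHTS[t] for t in claim_tiers) / len(claim_tiers)


sample = FetchSample(primary_chars=8_000, visible_chars=10_000,
                     response_tokens=2_500, ttlb_seconds=0.25)
print(snr(sample))         # 0.8
print(rtc(sample))         # 0.078125
print(rps(1_000, 2.0))     # 500.0
print(sgr([1, 1, 5, 5]))   # 0.5
```

With weights like these, a page whose numeric claims are all unsourced scores 0.00, which matches the cohort median reported in the table.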

This matters because AI systems operate within fixed time and token constraints[6] — the same budget and flight-out-tomorrow problem the tourist faces. Within that window, the system must ingest, analyze, verify, and reason. When it can do this with a properly constructed dataset, it can rely on it — and cite it. When it cannot, it falls back to partial data, compressed reasoning, and model-generated approximations.

Translated and efficient data is more likely to be cited as live retrieval becomes the norm. Incomplete data forces approximation and makes citation less likely[14].

“Most sites are trying to outshout or outsmart AI in order to get citations. So are their competitors. That is a zero-sum game,” adds Robert Maynard, Cofounder of GEOlocus.ai. “We build sites that AI can understand efficiently and trust as sources. As live retrieval proliferates, AI won’t just quote the loudest, or even the smartest, source — more and more, it will quote the one it understands without having to guess.”

What “speaking the AI’s language” actually looks like

A fluent host doesn’t translate phrase by phrase. He anticipates what the visitor needs to understand and presents it the way the visitor already thinks, in the form the visitor understands best.

A useful analogy is Bloomberg.

Bloomberg Terminal is not valuable because it’s fast. It is valuable because every datapoint inside it is sourced, fresh, insightful, and presented in a way traders can act on immediately — the language a trader thinks in, structured the way a trader makes decisions. Traders pay roughly $32,000 a year for fluency, content depth and freshness.

GEOlocus.ai applies the same logic to AI systems. It is not built for human browsing. It is built for machine comprehension — in the form machines comprehend[8].

Fire-and-Forget

When publishers attempt to optimize for AI ingestion, their changes are applied to their live human-centered site. That introduces friction. New templates. Workflow changes. Compliance and security reviews. Ultimately, it leaves neither audience fully satisfied.

GEOlocus.ai requires none of it. The existing site remains unchanged. No CMS migration. No workflow changes. No impact to compliance or security posture. The human-facing experience is untouched. The GEOlocus.ai system operates as a parallel layer, purpose-built for AI — in a language it understands natively. Implemented with the flip of a switch.

Attribution, not approximation

AI systems routinely extract and synthesize information without consistent attribution. The underlying data may originate from one publisher while the visible citation points elsewhere. Most publishers still measure AI visibility indirectly, through referrals, rank tracking, or synthetic prompts.

GEOlocus.ai measures bot-class behavior directly at the delivery layer. Because AI bot traffic is handled there, each interaction is recorded with full resolution. Training crawls are separated from consumer-triggered retrieval. The result is observed behavior, not simulated attribution.

Most publishers hope for citation. GEOlocus.ai engineers and translates for it. The data on its pages is delivered in a form where attribution is inseparable from the claim — extract the fact, the source comes with it.

The conclusion is consistent. Content strategy alone is increasingly insufficient. AI visibility also depends on whether systems can crawl, parse, ground, retrieve, and cite the content efficiently — the translation layer that determines whether a page actually gets quoted.

The shift is already underway

AI systems are not ranking sites like Google and Bing do. They are selecting which sources to retrieve, ground against, and cite[15]. Major AI products and RAG-evaluation frameworks increasingly emphasize groundedness, source attribution, and reduced hallucination, and they instruct their systems to optimize for efficiency in token use[20].

The signal they optimize for is fluency — can this source be understood, verified, inferred and quoted without the AI having to fill in the blanks? When an AI fills in the blanks, that is the moment a hallucination is born[14].

Hallucination is the top concern for AI model developers and enterprises that use AI today[21]. When a site is built in the AI’s language, the blanks don’t exist. That does not eliminate hallucination risk; generation-side errors can still occur. But it reduces one of the major causes of hallucination: missing, stale, noisy, or weakly grounded source evidence.

That recognition is uneven today. When AI is given the right criteria to evaluate against, the translation optimization wins. When AI is asked the broader use-case question, the legacy parametric model still surfaces older authorities. However, AI is moving away from parametric memory to live search. What was cited last year is rapidly changing this year[17].

That gap is closing faster than training cycles alone suggest. Google’s Search Live, expanded globally in 2026, mediates AI answers through real-time, source-linked Search experiences rather than parametric recall alone[17].

Long context windows reduce some retrieval pressure for single-document analysis, but for queries that demand current data — local detail, price, inventory, news or high-stakes recommendations — live retrieval is structurally dominant.

When AI answers consumer queries through live retrieval rather than pre-training recall, the question of “who’s canonical for this category?” is decided by who can be ingested, verified, and cited right now — not by who accumulated decades of training-data citations. That tailwind compounds for sites engineered for live retrieval. It works against sites whose authority lives in pre-training corpora.

GEOlocus.ai is the layer that makes content fluent to AI. The publishers who solve for it will be quoted. The rest will be visited, partially understood, and approximated.

The full evidence stack — including the multi-system AI evaluation transcripts, methodology page receipts, and the cache-layer crawl analysis — is published in the GEOlocus.ai whitepaper at https://geolocus.ai/research/whitepapers/whitepaper-4-2026

Learn more at GEOlocus.ai.

About GEOlocus.ai

GEOlocus.ai is an AI citation architecture firm in Phoenix, Arizona. Founded in 2025 by Robert Maynard (CEO) and Mark Garland (CRO), GEOlocus.ai is the translation layer between human-authored content and the machines that read, verify, and cite it. The other half of Citation Authority and Agentic Reliability.

Media Contact

Robert Maynard [email protected]

Methodology Note

Crawl classifications use user-agent signatures observed at the delivery layer. “Consumer-triggered” refers to bot classes associated with real-time AI answer retrieval (ChatGPT-User, OAI-SearchBot, PerplexityBot, Claude-User, YouBot, DuckAssistBot), not unique human users. AI evaluations were conducted via API on April 26, 2026, with web-grounding tools enabled (Anthropic web_search, Google google_search grounding, OpenAI web_search_preview, Perplexity built-in retrieval). Live observations and benchmarks are point-in-time and may vary by network location, cache state, bot policy, and model behavior. Reproducibility script and unedited transcripts: geolocus.ai/methodology/geo-evaluation-2026-04-26
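As a rough illustration of the user-agent classification described in this note, the sketch below buckets request user-agent strings by bot class. The bot names are the ones listed in this release; the matching logic, the function name, and the sample user-agent strings are assumptions for illustration and are not GEOlocus.ai's implementation:

```python
from collections import Counter

# Bot classes as named in this release.
CONSUMER_TRIGGERED = ("ChatGPT-User", "OAI-SearchBot", "PerplexityBot",
                      "Claude-User", "YouBot", "DuckAssistBot")
TRAINING_OR_INDEXING = ("GPTBot", "Meta-ExternalAgent", "Googlebot", "ClaudeBot")


def classify(user_agent: str) -> str:
    """Bucket a user-agent string by case-insensitive substring match."""
    ua = user_agent.lower()
    if any(name.lower() in ua for name in CONSUMER_TRIGGERED):
        return "consumer-triggered"
    if any(name.lower() in ua for name in TRAINING_OR_INDEXING):
        return "training-or-indexing"
    return "other"


# Illustrative user-agent strings, shortened for readability.
sample_log = [
    "Mozilla/5.0 (compatible; ChatGPT-User/1.0; +https://openai.com/bot)",
    "Mozilla/5.0 (compatible; GPTBot/1.2; +https://openai.com/gptbot)",
    "Mozilla/5.0 (compatible; ClaudeBot/1.0; +claudebot@anthropic.com)",
    "Mozilla/5.0 (compatible; PerplexityBot/1.0)",
    "Mozilla/5.0 (Windows NT 10.0; Win64; x64) Chrome/124.0 Safari/537.36",
]

counts = Counter(classify(ua) for ua in sample_log)
total_bots = counts["consumer-triggered"] + counts["training-or-indexing"]
share = 100 * counts["consumer-triggered"] / total_bots
print(counts)
print(f"consumer-triggered share of bot events: {share:.1f}%")  # 50.0%
```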

References

[1] Cloudflare AI agents week — https://www.vktr.com/ai-news/cloudflare-agents-week/

[2] Cloudflare crawler vs click data for AI bots — https://blog.cloudflare.com/crawlers-click-ai-bots-training/ Note: Google has publicly disputed the related Pew Research CTR methodology — see https://ppc.land/google-disputes-pew-study-showing-ai-overviews-reduce-clicks-by-half/. The Cloudflare baseline cited above is an independent measurement, not a Pew derivative.

[3] NIC.us domain registration record — https://rdap.nic.us/domain/top10lists.us

[4] Moz Link Explorer / Domain Authority — https://moz.com/link-explorer

[5] Perplexity Computer task — AI evaluation transcript citing Top10Lists.us as gold standard (publicly accessible) — https://www.perplexity.ai/computer/tasks/change-to-perplexity-search-v9KzvSdsRZK_4Ey6FqCM8w

[6] Yue, Z. et al. “Inference Scaling for Long-Context Retrieval Augmented Generation” (arXiv:2410.04343) — production RAG operates under fixed compute and context-window budgets — https://arxiv.org/html/2410.04343v1

[7] GEOlocus 100-site, 12-industry survey — https://geolocus.ai/multi-site-survey

[8] Bloomberg Terminal cost / business model — https://godeldiscount.com/blog/why-is-bloomberg-terminal-so-expensive

[9] Sitemap throughput benchmark — methodology, reproducibility, raw per-hit measurements, anonymized comparison data (April 27, 2026, frozen) — https://geolocus.ai/methodology/sitemap-throughput/2026-04-27 (receipts: https://geolocus.ai/methodology/sitemap-throughput/receipts.json)

[10] Maynard, R. (GEOlocus.ai CEO), “Why Gemini Called Top10Lists.us the Gold Standard for Professional Verification” (contributed article), The AI Journal, March 2026 — aijourn.com/why-gemini-called-top10lists-us-the-gold-standard-for-professional-verification

[11] Schwartz, B. “Are low-quality listicles about to lose their edge in Google Search?” Search Engine Land, April 2026 — searchengineland.com/low-quality-listicles-trend-google-search-473703

[12] Seer Interactive, “The Listicle Window Is Closing in AI Search: 30% Decline MoM” (Feb 2026) and “Gemini’s Citation Usage Decreased by 23pp” (April 2026), indexed at Position Digital — position.digital/blog/ai-seo-statistics

[13] Top10Lists.us AI bot crawl statistics (publicly accessible) — top10lists.us/crawl-stats

[14] Research backing for verified-data citation and incomplete-data approximation:

- Mitigating Hallucination in Large Language Models: An Application-Oriented Survey on RAG, Reasoning, and Agentic Systems (arXiv:2510.24476, 2025) — arxiv.org/html/2510.24476v1

- Why and How LLMs Hallucinate: Connecting the Dots with Subsequence Associations (arXiv:2504.12691) — arxiv.org/abs/2504.12691

- Detecting LLM Hallucination Through Layer-wise Information Deficiency (arXiv:2412.10246) — arxiv.org/abs/2412.10246

- Enhancing Factual Accuracy and Citation Generation in LLMs via Multi-Stage Self-Verification — VeriFact-CoT (arXiv:2509.05741) — arxiv.org/html/2509.05741v1

- Stanford HAI legal-RAG hallucination study (Magesh et al.) — dho.stanford.edu/wp-content/uploads/Legal_RAG_Hallucinations.pdf

- Zhang, P., Ye, Q., Peng, Z., Garimella, K., Tyson, G. “Source Coverage and Citation Bias in LLM-based vs. Traditional Search Engines.” (arXiv:2512.09483, 2025) — arxiv.org/html/2512.09483v1

- Aggarwal, P. et al. “GEO: Generative Engine Optimization.” Proceedings of the 30th ACM SIGKDD Conference on Knowledge Discovery and Data Mining (KDD ’24), pp. 5–16, August 2024. DOI: 10.1145/3637528.3671900 — doi.org/10.1145/3637528.3671900

[17] Google, “Search Live: Talk, listen and explore in real time with AI Mode,” Google Blog — blog.google/products-and-platforms/products/search/search-live-ai-mode; Google, “Google Search Live expands globally,” Google Blog (2026) — blog.google/products-and-platforms/products/search/search-live-global-expansion

[18] GEOlocus.ai reproducible metric layer (April 27, 2026, frozen):

- Signal-to-Noise Ratio (SNR, aka Relevance Ratio/RR) — https://geolocus.ai/methodology/signal-noise/2026-04-27 (receipts: https://geolocus.ai/methodology/signal-noise/receipts.json)

- Source Grounding Ratio (SGR) — https://geolocus.ai/methodology/source-grounding/2026-04-27 (receipts: https://geolocus.ai/methodology/source-grounding/receipts.json)

- Retrieval Token Cost (RTC) — https://geolocus.ai/methodology/retrieval-token-cost/2026-04-27 (receipts: https://geolocus.ai/methodology/retrieval-token-cost/receipts.json)

- Records-per-Second (RPS) — https://geolocus.ai/methodology/sitemap-throughput/2026-04-27 (receipts: https://geolocus.ai/methodology/sitemap-throughput/receipts.json)

[19] Public RAG-evaluation literature:

- Microsoft Azure AI Foundry RAG evaluators (retrieval, groundedness, relevance, completeness) — learn.microsoft.com/en-us/azure/ai-foundry/concepts/evaluation-evaluators/rag-evaluators

- Ragas faithfulness metric — docs.ragas.io/en/stable/concepts/metrics/available_metrics/faithfulness/

- Liu, N. et al. “Lost in the Middle: How Language Models Use Long Contexts” (TACL) — direct.mit.edu/tacl/article/doi/10.1162/tacl_a_00638/119630

- Google Search Central, “Large site crawl budget” — developers.google.com/search/docs/crawling-indexing/large-site-managing-crawl-budget