Artificial intelligence systems are not neutral.

They do not simply process information, generate outputs, or automate workflows. As they integrate deeper into enterprise environments, they reshape something far more consequential: where authority resides and how it flows. What appears, on the surface, to be a story about capability is, underneath, a story about structural power. Every integration, every agent, every automated decision path subtly alters the distribution of consequence-bearing control across the enterprise.

Most organizations are not measuring this shift.

They are tracking model performance, accuracy, and latency. They are evaluating bias, safety, and alignment. They are building guardrails at the prompt layer and monitoring outputs for anomalies. But they are largely blind to a structural reality emerging underneath their AI systems: authority is concentrating, often rapidly and invisibly, into specific points of execution.

I call these points of concentration authority wells.

What Is an Authority Well?

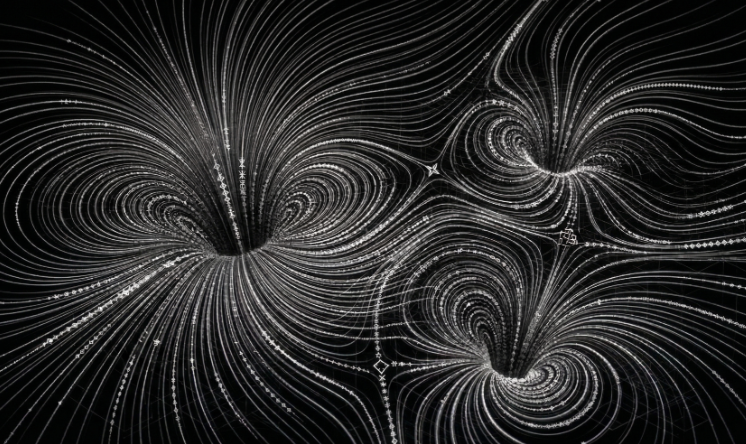

An authority well is a point in a system where consequential power accumulates.

Not access. Not visibility. Not compute.

Consequence.

It is where actions can materially change outcomes across systems, domains, or organizations. It is where the difference between “something happened” and “something irreversible happened” becomes real. Authority wells are not defined by what can be observed or queried, but by what can be changed and what those changes trigger downstream.

In traditional environments, authority was distributed across people, processes, and systems. Decisions required coordination. Execution required multiple steps. Oversight existed, even if imperfectly, because the system itself enforced friction. Authority was fragmented, and that fragmentation created natural resistance to large-scale consequence.

AI changes that structure.

It compresses decision paths. It collapses handoffs. It connects systems that were previously separated by both technical and organizational boundaries. What once required multiple actors and approvals can now occur within a single execution chain driven by a model, an agent, or an automated workflow.

When that happens, authority does not disappear. It concentrates.

The system begins to form wells, and those wells define the true topology of risk.

How AI Creates Authority Wells

Authority wells do not emerge randomly. They form at predictable convergence points, driven by the interaction between system capability and system connectivity. Across enterprise AI deployments, three patterns consistently shape where these wells appear and how deep they become.

- Tool Aggregation

AI agents increasingly operate through tool access: APIs, SaaS platforms, infrastructure controls, and internal systems. Each tool represents a discrete capability. But the real shift occurs when those tools are combined into executable sequences.

A model that can read data is useful.

A model that can read, decide, and execute across systems becomes consequential.

As toolsets expand, so does the reachable consequence space. The agent is no longer performing isolated tasks; it is orchestrating outcomes. Authority wells form where these tools converge into a unified execution surface, particularly when the agent can chain actions without interruption or external validation.

The critical factor is not the number of tools, but the composability of those tools. When composition is unconstrained, authority compounds.
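A minimal sketch can make the compounding effect concrete. The tool names, capability labels, and the `Tool` and `reachable` helpers below are hypothetical, invented for illustration and not taken from any real agent framework. Each tool declares what it needs and what it grants, and the function computes everything an agent can reach by chaining tools.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Tool:
    name: str
    needs: frozenset   # capabilities required before the tool can run
    grants: frozenset  # capabilities or consequences gained by running it


def reachable(start, tools):
    """Fixed-point closure: keep applying any tool whose needs are met."""
    have = set(start)
    changed = True
    while changed:
        changed = False
        for tool in tools:
            if tool.needs <= have and not tool.grants <= have:
                have |= tool.grants
                changed = True
    return have


# Hypothetical toolset: three tools that only matter once they compose.
TOOLS = [
    Tool("read_repo", frozenset(), frozenset({"source_code"})),
    Tool("open_pull_request", frozenset({"source_code"}),
         frozenset({"pending_change"})),
    Tool("merge_and_deploy", frozenset({"pending_change"}),
         frozenset({"production_change"})),
]

# Two tools, but the chain is broken: deploy is present yet unreachable.
isolated = reachable(set(), [TOOLS[0], TOOLS[2]])
# Three tools that compose end to end: production change becomes reachable.
chained = reachable(set(), TOOLS)
```

In this sketch, removing the middle tool leaves the deploy tool installed but unreachable. The consequence space depends on which compositions are possible, not on how many tools are listed.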

- Execution Compression

AI reduces the number of steps between intent and outcome. This is not incremental efficiency. It is structural compression of the authority path.

What previously required multiple approvals, human checkpoints, and validation cycles can now be executed in a single continuous flow. Oversight, which once existed as discrete gates, becomes optional or post hoc. The temporal distance between decision and consequence collapses.

As paths shorten, oversight windows shrink. Authority moves closer to execution. The system develops higher curvature, with authority flow bending toward the shortest path to consequence.

Those shortest paths become wells.

This compression is often celebrated as productivity gain. Structurally, it is a redistribution of authority.

- Integration Density

Modern enterprises are already highly integrated environments. Identity providers, CI/CD pipelines, cloud control planes, SaaS ecosystems, and data platforms are interconnected through APIs and automation layers. These integrations were designed for efficiency and interoperability.

AI does not create these integrations. It activates them.

When an agent can traverse these connections, it inherits the combined authority of the integrated system. The result is not additive; it is multiplicative, because each new connection can be combined with every connection already in place. Authority expands across domains, often without explicit recognition that cross-domain consequence is now reachable from a single execution point.

The highest-risk locations are not individual systems. They are the intersections between them.

That is where authority wells deepen, because that is where multiple consequence domains converge.

Why Traditional Security Models Miss This

Most security models are built around three foundational questions:

- Who has access?

- What vulnerabilities exist?

- How can we prevent compromise?

These are necessary. They are not sufficient.

They are designed to measure exposure, not consequence. They assume that risk scales with likelihood of compromise or technical severity. But in AI-integrated systems, risk increasingly scales with authority concentration.

They do not answer a more important question:

If something goes wrong here, how much authority is exercised?

This is the gap.

A low-severity vulnerability in a high-authority well can produce catastrophic outcomes because it unlocks concentrated consequence. A high-severity vulnerability in a low-authority area may have limited impact because it cannot propagate into meaningful system change.

Without understanding where authority is concentrated, organizations cannot prioritize risk correctly. They are optimizing defenses around technical artifacts while ignoring structural consequence.
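The prioritization problem can be shown with simple arithmetic. The sketch below is an assumption-laden illustration, not a scoring standard: the severity values sit on a generic 0 to 10 scale, and the authority weights (0 to 1) and location names are invented. It ranks findings by severity alone and then by severity weighted by the authority of the location.

```python
# Hypothetical findings: severity on a 0-10 scale, location is where it lives.
findings = [
    {"id": "F1", "severity": 3.1, "location": "ci_cd_deploy_role"},
    {"id": "F2", "severity": 9.0, "location": "isolated_test_vm"},
]

# Hypothetical authority weights: how much consequence is reachable from
# each location, from 0 (none) to 1 (enterprise-wide).
authority = {
    "ci_cd_deploy_role": 0.9,
    "isolated_test_vm": 0.05,
}


def by_severity(finding):
    return finding["severity"]


def by_authority_weighted(finding):
    # Severity scaled by the authority concentrated at the location.
    return finding["severity"] * authority[finding["location"]]


exposure_order = [f["id"] for f in sorted(findings, key=by_severity, reverse=True)]
authority_order = [
    f["id"] for f in sorted(findings, key=by_authority_weighted, reverse=True)
]
```

Under these assumed numbers, the severity-only ranking puts F2 first, while the authority-weighted ranking puts F1 first, since 3.1 × 0.9 exceeds 9.0 × 0.05. The weights are the hard part in practice; the sketch only shows why the ordering can invert.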

They are measuring exposure.

They are not measuring authority.

Authority Wells and AI Risk

In AI systems, authority wells become the defining feature of risk.

Not the model.

Not the prompt.

Not even the agent architecture in isolation.

The risk emerges from what the system is allowed to change and how easily it can reach those change points.

Consider a few representative scenarios:

- An AI agent with access to a CI/CD pipeline can introduce code into production environments, altering system behavior at scale.

- An agent integrated with identity systems can modify permissions, create persistent access, or reshape trust relationships across the enterprise.

- An agent connected to financial systems can initiate, influence, or automate transactions with real economic consequence.

These are not edge cases. They are natural outcomes of modern integration patterns combined with AI execution capability.

Once an AI system can reach these wells, the question is no longer whether it behaves correctly under normal conditions.

The question is what happens when it doesn’t, and how quickly that deviation propagates through the authority surface.

From Observability to Authority Awareness

Organizations need to move beyond traditional observability models.

Logging what happened, tracing execution paths, and detecting anomalies are important. But they are retrospective. They describe behavior after authority has already been exercised.

What is required is structural awareness.

Organizations need to understand:

- Where authority is concentrated

- How authority can be reached

- What paths lead into high-consequence states

- How authority flows between systems and domains

This requires a different model.

Instead of mapping systems, we map authority surfaces. Instead of tracking access, we track consequence potential. Instead of focusing only on vulnerabilities, we measure authority amplification and propagation paths.

Authority wells are the natural output of this analysis.

They are not theoretical constructs. They are discoverable through system topology, measurable through consequence modeling, and actionable through design decisions.

They represent the points in the system where governance must be strongest, not because they are most exposed, but because they are most consequential.
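One way to begin this kind of mapping is to treat integrations as a directed graph and ask which consequence-bearing nodes a given entry point can reach, and in how many hops. The sketch below assumes a hypothetical topology; the node names, the `CONSEQUENCE` labels, and both helper functions are illustrative only.

```python
from collections import deque

# Hypothetical integration graph: an edge means "can invoke or act on".
EDGES = {
    "support_agent": ["ticketing_api", "ci_pipeline"],
    "ticketing_api": [],
    "ci_pipeline": ["cloud_control_plane"],
    "cloud_control_plane": ["identity_provider"],
    "identity_provider": [],
}

# Hypothetical labels for nodes where actions carry real consequence.
CONSEQUENCE = {
    "ci_pipeline": "code",
    "cloud_control_plane": "infrastructure",
    "identity_provider": "identity",
}


def hops_from(start, edges):
    """Breadth-first search: fewest hops from start to every reachable node."""
    dist = {start: 0}
    queue = deque([start])
    while queue:
        node = queue.popleft()
        for nxt in edges.get(node, []):
            if nxt not in dist:
                dist[nxt] = dist[node] + 1
                queue.append(nxt)
    return dist


def reachable_wells(start, edges, consequence):
    """Map each reachable consequence node to (domain, hops from start)."""
    dist = hops_from(start, edges)
    return {n: (consequence[n], d) for n, d in dist.items() if n in consequence}


wells = reachable_wells("support_agent", EDGES, CONSEQUENCE)
domains_reached = {domain for domain, _ in wells.values()}
```

In this toy topology, a support agent that looks low-risk in isolation reaches three consequence domains within three hops. Hop count is a rough proxy for how many oversight windows remain along the path.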

Designing Around Authority Wells

The goal is not to eliminate authority wells.

That is neither practical nor desirable. Modern systems require high-consequence execution points to function. Financial systems must move money. Infrastructure systems must deploy and modify resources. Identity systems must grant and revoke access.

The goal is to design these wells deliberately, with explicit recognition of their role in the authority topology.

Three principles matter.

- Constrain at the Point of Consequence

Controls must exist where outcomes are executed, not just where decisions are made. If an action can move money, change identity, deploy code, or alter policy, that node must enforce constraints directly. Upstream validation is insufficient if downstream execution remains unconstrained.

Consequence nodes must be treated as enforcement boundaries, not passive endpoints.
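As a rough sketch of what enforcement at the node might look like, consider a component that moves money and checks its own limits regardless of what any upstream planner or validator has already approved. The `TransferExecutor` class, its limits, and the account name are hypothetical, chosen only to illustrate the principle.

```python
class ConstraintViolation(Exception):
    """Raised when the consequence node itself refuses an action."""


class TransferExecutor:
    """Hypothetical consequence node that enforces its own constraints."""

    def __init__(self, per_transfer_limit, allowed_destinations):
        self.per_transfer_limit = per_transfer_limit
        self.allowed_destinations = frozenset(allowed_destinations)
        self.ledger = []  # stands in for the real side effect

    def execute(self, destination, amount):
        # Checks run here, at the point of execution, on every call.
        if destination not in self.allowed_destinations:
            raise ConstraintViolation(
                f"destination {destination!r} is not allowlisted"
            )
        if not 0 < amount <= self.per_transfer_limit:
            raise ConstraintViolation(
                f"amount {amount} is outside the permitted range"
            )
        self.ledger.append((destination, amount))
        return len(self.ledger)


executor = TransferExecutor(per_transfer_limit=1_000,
                            allowed_destinations={"vendor-001"})
executor.execute("vendor-001", 250)

try:
    # Even if an upstream agent "decided" this was fine, the node refuses.
    executor.execute("vendor-001", 50_000)
except ConstraintViolation as err:
    rejected = str(err)
```

The design choice here is that the caller cannot opt out: there is no flag or alternate path that skips the checks.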

- Define Escalation Boundaries

Authority should not flow freely between domains. Transitions between identity, infrastructure, financial, policy, and model systems must be explicitly governed. These transitions represent escalation paths, and without boundaries, they create chains that deepen authority wells and expand blast radius.

Escalation boundaries are structural, not procedural. They must be encoded into the system, not assumed through policy.
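A minimal sketch of an encoded boundary, assuming a default-deny table of domain crossings. The `Domain` values mirror the domains named above, but the specific crossings, the approval rule, and the `check_path` helper are hypothetical.

```python
from enum import Enum


class Domain(Enum):
    MODEL = "model"
    INFRASTRUCTURE = "infrastructure"
    IDENTITY = "identity"
    FINANCIAL = "financial"
    POLICY = "policy"


class EscalationBlocked(Exception):
    """Raised when an execution path crosses a boundary it may not cross."""


# Default deny: a crossing not listed here is not allowed at all.
# The value says whether the crossing needs explicit approval.
CROSSINGS = {
    (Domain.MODEL, Domain.INFRASTRUCTURE): False,
    (Domain.INFRASTRUCTURE, Domain.IDENTITY): True,
}


def check_path(path, approved=frozenset()):
    """Validate every domain transition along a planned execution path."""
    for src, dst in zip(path, path[1:]):
        if src == dst:
            continue  # staying inside one domain is not an escalation
        if (src, dst) not in CROSSINGS:
            raise EscalationBlocked(
                f"{src.value} -> {dst.value} is not a defined crossing"
            )
        if CROSSINGS[(src, dst)] and (src, dst) not in approved:
            raise EscalationBlocked(
                f"{src.value} -> {dst.value} requires approval"
            )


# Permitted without approval under the assumed table.
check_path([Domain.MODEL, Domain.INFRASTRUCTURE])
```

A path that continues from infrastructure into identity would raise unless that specific crossing appears in `approved`, and a path from model directly into financial would raise unconditionally because the table does not define it.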

- Preserve Termination Authority

Every high-consequence path must be interruptible.

Not eventually. Not conditionally.

Immediately.

Termination authority is not an operational feature layered on top of the system. It is a structural requirement embedded within the authority architecture. Without it, authority wells become irreversible sinks: once execution begins, it cannot be meaningfully stopped.

In compressed, automated systems, termination is the last remaining control.
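A minimal sketch of an interruptible chain, using a `threading.Event` from the Python standard library as the stop signal. The `run_chain` function and the example steps are hypothetical. Note the limitation: this version can only stop between steps, so truly immediate termination would also require each step to be cancellable on its own.

```python
import threading


class Terminated(Exception):
    """Raised when the stop signal is set before a step begins."""


def run_chain(steps, stop):
    """Run steps in order, checking the stop signal before each one."""
    for index, step in enumerate(steps):
        if stop.is_set():
            raise Terminated(f"halted before step {index}")
        step()


stop = threading.Event()
log = []


def stage_change():
    log.append("staged")


def trip_stop():
    # Stands in for an operator or monitor setting the signal mid-run.
    log.append("reviewed")
    stop.set()


def deploy_change():
    log.append("deployed")


try:
    run_chain([stage_change, trip_stop, deploy_change], stop)
    halted = False
except Terminated:
    halted = True
```

Because the check sits inside the execution loop and not in a separate monitoring layer, the chain cannot proceed to the deploy step once the signal is set.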

The Shift Ahead

AI is accelerating. Agents are becoming more capable, more connected, and more autonomous, moving rapidly from assistive roles into direct execution. Rather than merely advising on decisions, these systems increasingly act on them, and that shift will intensify.

Whether this evolution remains safe depends on more than model alignment, prompt engineering, or isolated evaluation benchmarks. It depends on whether organizations can identify and control where authority actually resides.

Authority wells are the primary diagnostic for the authority topology. They show where small deviations on the authority surface accelerate into large-scale consequences. By mapping this surface, organizations can see exactly where governance must be precise, enforced, and structurally embedded. This perspective shifts the strategic conversation from “What can the AI do?” to “What can the authority surface be made to do?”

Design authority before you automate it. In AI systems, performance scales, but authority defines consequence.
