As enterprises accelerate their digital transformation journeys, data has become the backbone of decision-making. Yet, while organizations generate massive volumes of data across systems, many still face a fundamental challenge: how to ingest, process, and unify that data efficiently.

Traditional ETL pipelines, often built manually and tailored for specific use cases, are proving inadequate in today’s fast-paced, multi-source environments. They are time-consuming to build, difficult to scale, and prone to inconsistencies.

This growing gap between data generation and data usability has created a need for intelligent, scalable ingestion systems.

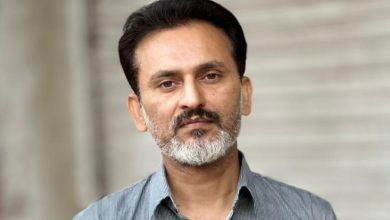

Addressing this challenge is Lokeshkumar Madabathula, whose work focuses on designing AI-driven, metadata-based data ingestion frameworks that modernize how enterprises operationalize data at scale.

Rethinking Data Ingestion for the AI Era

At the core of Madabathula’s approach is a simple yet powerful principle: data ingestion should not rely on manual effort; it should be automated, adaptive, and intelligent.

Instead of building individual pipelines for each data source, his framework introduces a metadata-driven architecture that dynamically configures ingestion workflows based on control tables.

This eliminates hardcoding and enables a plug-and-play model, allowing new data sources to be onboarded rapidly without redesigning pipelines.
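To make the idea concrete, here is a minimal plain-Python sketch of control-table-driven configuration. It is not the framework's actual code: the column names (`source_name`, `source_type`, `load_type`, `target_table`, `enabled`) and the reader names are assumptions made for illustration, and in practice the rows would live in a database or Delta table rather than an in-memory list.

```python
from dataclasses import dataclass
from typing import Dict, List

# Hypothetical control-table rows; every column name here is an assumption.
CONTROL_TABLE: List[dict] = [
    {"source_name": "orders_api", "source_type": "api", "load_type": "incremental",
     "target_table": "bronze.orders", "enabled": True},
    {"source_name": "customers_oracle", "source_type": "oracle", "load_type": "full",
     "target_table": "bronze.customers", "enabled": True},
    {"source_name": "legacy_sftp", "source_type": "sftp", "load_type": "full",
     "target_table": "bronze.legacy", "enabled": False},
]

# Hypothetical registry: one reusable reader component per source type.
READERS: Dict[str, str] = {
    "api": "read_rest_api",
    "oracle": "read_jdbc",
    "sftp": "read_files",
}


@dataclass(frozen=True)
class IngestionTask:
    source_name: str
    reader: str
    load_type: str
    target_table: str


def build_tasks(control_rows: List[dict], readers: Dict[str, str] = READERS) -> List[IngestionTask]:
    """Turn enabled control-table rows into ingestion tasks, with no per-source code."""
    tasks = []
    for row in control_rows:
        if not row["enabled"]:
            continue  # disabled sources are skipped, not deleted
        reader = readers.get(row["source_type"])
        if reader is None:
            raise ValueError(f"No reader registered for source type {row['source_type']!r}")
        tasks.append(IngestionTask(row["source_name"], reader, row["load_type"], row["target_table"]))
    return tasks


tasks = build_tasks(CONTROL_TABLE)
for task in tasks:
    print(task)
```

In this sketch, onboarding a new source of an already supported type means appending one row to the control table; the pipeline code itself does not change.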

Madabathula emphasizes that modern data systems must evolve beyond static pipelines and move toward intelligent ingestion layers that can scale with enterprise demands.

A Scalable Architecture Built on Cloud-Native Technologies

The framework is built using Azure Data Factory, Databricks, and Delta Lake, creating a powerful and scalable data ingestion ecosystem.

Azure Data Factory orchestrates workflows, while Databricks processes large-scale data transformations efficiently. Delta Lake ensures data reliability through ACID transactions, schema enforcement, and versioning capabilities.

A key innovation is the use of control tables, which act as the brain of the system, dynamically defining ingestion logic, source configurations, and transformation rules.

The framework supports multiple ingestion patterns, including:

- Full data loads

- Incremental processing

- Change Data Capture (CDC)

It also enables ingestion from diverse sources such as APIs, Oracle databases, SFTP systems, and SAP platforms, making it highly adaptable for enterprise environments.
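The three ingestion patterns listed above differ mainly in how much of the source is read and how it is applied to the target. The following is a simplified sketch in plain Python, assuming an in-memory target keyed by a hypothetical `id` column, an `updated_at` watermark column, and a CDC event shape with `op`, `id`, and `data` fields. None of these names come from the framework itself.

```python
from typing import Dict, List, Tuple

Row = Dict[str, object]


def full_load(source_rows: List[Row]) -> Dict[object, Row]:
    """Full load: the target is rebuilt from the complete source snapshot."""
    return {row["id"]: row for row in source_rows}


def incremental_load(target: Dict[object, Row], source_rows: List[Row],
                     watermark: str) -> Tuple[Dict[object, Row], str]:
    """Incremental load: upsert only rows changed after the last watermark."""
    new_target = dict(target)
    new_watermark = watermark
    for row in source_rows:
        if row["updated_at"] > watermark:  # ISO dates compare correctly as strings
            new_target[row["id"]] = row
            new_watermark = max(new_watermark, row["updated_at"])
    return new_target, new_watermark


def apply_cdc(target: Dict[object, Row], events: List[dict]) -> Dict[object, Row]:
    """CDC: replay insert/update/delete events in the order they occurred."""
    new_target = dict(target)
    for event in events:
        if event["op"] in ("insert", "update"):
            new_target[event["id"]] = event["data"]
        elif event["op"] == "delete":
            new_target.pop(event["id"], None)
        else:
            raise ValueError(f"Unknown CDC operation: {event['op']!r}")
    return new_target


snapshot = [
    {"id": 1, "name": "a", "updated_at": "2024-01-01"},
    {"id": 2, "name": "b", "updated_at": "2024-01-05"},
]
target = full_load(snapshot)

changes = [
    {"id": 1, "name": "stale", "updated_at": "2024-01-01"},  # not newer, ignored
    {"id": 2, "name": "b2", "updated_at": "2024-01-09"},
]
target, watermark = incremental_load(target, changes, "2024-01-05")

target = apply_cdc(target, [
    {"op": "delete", "id": 1},
    {"op": "insert", "id": 3, "data": {"id": 3, "name": "c", "updated_at": "2024-01-10"}},
])
print(sorted(target), watermark)
```

A control table would typically record which of these patterns applies to each source, along with the last watermark for incremental loads.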

Intelligent Automation at Scale

To handle large-scale data efficiently, Madabathula integrated advanced data engineering techniques into the framework.

Capabilities such as Databricks Auto Loader, schema evolution, and checkpointing allow the system to process continuous data streams while adapting to structural changes in the incoming data in real time.
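The interplay of checkpointing and schema evolution can be illustrated without Spark. The sketch below is a plain-Python imitation of the behavior, not the Auto Loader API: the file layout, the `_checkpoint.json` file, and the function names are all assumptions chosen for the example.

```python
import json
import tempfile
from pathlib import Path
from typing import List


def load_checkpoint(path: Path) -> set:
    """Return the set of file names that were already ingested."""
    return set(json.loads(path.read_text())) if path.exists() else set()


def ingest_new_files(landing_dir: Path, checkpoint_path: Path,
                     schema: List[str], table: List[dict]) -> None:
    """Ingest only unseen files, widening the schema when new columns appear."""
    processed = load_checkpoint(checkpoint_path)
    for file in sorted(landing_dir.glob("*.json")):
        if file.name in processed:
            continue  # checkpoint says this file was handled in an earlier run
        for record in json.loads(file.read_text()):
            for column in record:
                if column not in schema:
                    schema.append(column)  # schema evolution: add the new column
            table.append(record)
        processed.add(file.name)
        # Persist progress after each file so a restart resumes from here.
        checkpoint_path.write_text(json.dumps(sorted(processed)))


workdir = Path(tempfile.mkdtemp())
landing = workdir / "landing"
landing.mkdir()
checkpoint = workdir / "_checkpoint.json"
schema: List[str] = []
table: List[dict] = []

(landing / "batch_001.json").write_text(json.dumps([{"id": 1, "amount": 10}]))
ingest_new_files(landing, checkpoint, schema, table)

# A later file arrives with an extra column.
(landing / "batch_002.json").write_text(json.dumps([{"id": 2, "amount": 20, "currency": "USD"}]))
ingest_new_files(landing, checkpoint, schema, table)
ingest_new_files(landing, checkpoint, schema, table)  # rerun: nothing new to ingest

# Older rows read back with None for columns they never had.
rows = [{column: record.get(column) for column in schema} for record in table]
print(schema)
print(rows)
```

On Databricks, Auto Loader and Structured Streaming checkpoints handle this bookkeeping natively; the sketch only shows why reruns stay idempotent and why older rows simply read as null for newly added columns.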

Delta Lake’s MERGE operation handles slowly changing data accurately, preserving historical records while applying new and updated values.
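One common way to preserve history during a merge is the Slowly Changing Dimension Type 2 pattern: the old version of a row is closed and a new current version is appended. The article does not say which strategy the framework uses, so the following plain-Python sketch is only an assumption about the general technique. The `valid_from`, `valid_to`, and `is_current` columns are hypothetical, and on Delta Lake the same logic would be expressed with a MERGE statement rather than a Python loop.

```python
from typing import List


def scd2_merge(history: List[dict], updates: List[dict], key: str, as_of: str) -> List[dict]:
    """Return a new history: changed keys get their old row closed and a new current row."""
    result = [dict(row) for row in history]  # copy so the input is not mutated
    current = {row[key]: row for row in result if row["is_current"]}
    for update in updates:
        existing = current.get(update[key])
        if existing is not None:
            if all(existing.get(col) == val for col, val in update.items()):
                continue  # nothing changed, keep the current version as-is
            existing["is_current"] = False  # close the old version
            existing["valid_to"] = as_of
        new_row = {**update, "valid_from": as_of, "valid_to": None, "is_current": True}
        result.append(new_row)
        current[update[key]] = new_row
    return result


history = [
    {"customer_id": 1, "city": "Austin", "valid_from": "2023-01-01",
     "valid_to": None, "is_current": True},
]
updates = [
    {"customer_id": 1, "city": "Dallas"},  # changed attribute
    {"customer_id": 2, "city": "Boston"},  # brand-new key
]

merged = scd2_merge(history, updates, key="customer_id", as_of="2024-06-01")
for row in merged:
    print(row)
```

Applying the same updates a second time leaves the history unchanged, which is the property that makes this kind of merge safe to retry.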

The system can process over 500 million records efficiently, demonstrating its ability to scale across enterprise workloads.

Eliminating Complexity in Data Engineering

One of the biggest bottlenecks in enterprise data ecosystems is the manual effort required to build and maintain ingestion pipelines.

Madabathula’s framework addresses key challenges such as:

- Manual pipeline creation

- Data inconsistencies across systems

- Limited scalability of traditional ETL processes

By introducing reusable components and eliminating hardcoded logic, the framework significantly reduces development effort while improving consistency.

This allows data engineers to shift their focus from repetitive tasks to higher-value activities such as analytics, optimization, and AI model development.

Driving Measurable Business Impact

Beyond technical innovation, the framework delivers tangible business outcomes.

Organizations leveraging this architecture have seen:

- 60–70% reduction in ingestion development time

- Faster onboarding of new data sources

- Near real-time data availability for analytics

- Improved consistency and reliability across data systems

These improvements enable enterprises to generate insights faster and make more informed decisions in dynamic business environments.

From ETL Pipelines to Intelligent Data Platforms

Madabathula’s work reflects a broader transformation in data engineering.

Traditional ETL pipelines are being replaced by intelligent data platforms that:

- Adapt dynamically to new data sources

- Maintain consistency automatically

- Scale seamlessly with growing data volumes

- Support advanced analytics and AI workloads

This shift is essential for organizations aiming to become truly data-driven. Without a strong ingestion layer, downstream analytics and AI systems cannot perform effectively.

A Future-Ready Architecture for Modern Enterprises

As enterprises modernize legacy systems, the need for scalable and intelligent data architectures continues to grow.

Madabathula’s ingestion framework provides a future-ready solution aligned with AI-driven data platforms. Its metadata-driven design, cloud-native foundation, and automation capabilities make it applicable across industries, including finance, healthcare, retail, and manufacturing.

The framework serves as a blueprint for organizations looking to transition from fragmented data systems to unified, intelligent platforms.

Why This Innovation Stands Out

What sets this work apart is its practical impact and scalability.

While many AI and data initiatives remain conceptual, this framework delivers real-world results: reduced development time, improved data consistency, and faster decision-making.

It demonstrates how AI principles can be embedded into the foundational layers of data engineering, not just in analytics.

The Future of Data Engineering

As artificial intelligence continues to shape enterprise technology, data ingestion will play an increasingly critical role.

Madabathula’s work highlights a key insight: the future of data engineering lies in building systems that are not only automated but intelligent.

By moving from manual pipelines to self-driven data platforms, organizations can unlock the full value of their data, transforming it into a strategic asset that drives innovation and growth.

A New Era of Intelligent Data Systems

The evolution of enterprise data infrastructure is entering a new phase, one defined by automation, scalability, and intelligence.

Through his work, Lokeshkumar Madabathula is contributing to this transformation, enabling organizations to rethink how they ingest, manage, and utilize data.

As businesses continue to scale their digital operations, innovations like this will play a central role in shaping the future of AI-driven enterprises.