Introduction: The Quiet Shift No One Is Talking About

Artificial intelligence is already reshaping ophthalmology, but not in the way most clinicians think.

Much of the current conversation focuses on diagnostics, imaging analysis and workflow optimisation. These developments are important, but they are not where the most immediate transformation is occurring.

The real shift has already happened.

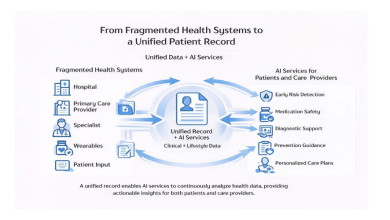

AI is now the first point of contact between patients and healthcare.

Before a patient attends a consultation, before they read a website, and often before they speak to another human being, they have already put their question to an AI system. Whether through large language models, search engines or curated overviews, the answer they receive increasingly shapes what they believe to be true.

This is not a future scenario. It is the current reality.

AI Now Decides Who Patients Trust, And It Isn’t Always Right

For decades, trust in healthcare was built inside the consultation room.

Today, it is often built before the patient ever arrives.

AI systems do not simply provide information. They frame it. They decide what is visible, what is emphasised and, implicitly, who appears credible.

Once that perception is formed, the consultation is no longer the beginning of the patient journey. It is the confirmation of a belief that has already been established.

This creates a fundamental shift in how trust is constructed.

If AI presents accurate, balanced information, it can accelerate appropriate patient decisions and reinforce genuine expertise.

If it does not, it can assign credibility where it is not deserved, and patients may never question it.

From Google to ChatGPT: Who Now Controls Patient Choice in Healthcare?

The evolution from search engines to AI-driven answers represents one of the most important changes in modern healthcare.

Search once provided options. AI now provides conclusions.

Google’s AI Overviews, conversational models and other summarised outputs increasingly deliver a single, synthesised response rather than a list of sources. Patients rarely look beyond that first answer.

This means patient choice is no longer driven primarily by what exists, but by what is selected.

And that selection is not neutral.

It is shaped by:

- Data availability
- Source credibility
- Frequency of citation
- Patterns within the digital ecosystem

In effect, AI has become the gatekeeper between patients and providers.

The Illusion of Understanding

Artificial intelligence appears intelligent because it communicates fluently.

It produces responses that are structured, confident and coherent. For patients, this creates an impression of authority.

But AI does not understand in the way clinicians understand.

It recognises patterns, aggregates information and generates outputs that are statistically plausible, which is not the same as being correct. For a user with limited knowledge, this is often sufficient.

However, when those same outputs are reviewed by experts, the limitations become clear.

Subtle inaccuracies persist. Nuance is lost. Context is simplified.

The result is an illusion of understanding, one that is convincing to non-experts but often incomplete.

AI as the Gatekeeper of Expertise

AI is no longer just answering questions. It is assigning expertise.

When patients search for information about cataract surgery, lens replacement or laser eye surgery, AI systems implicitly signal who is authoritative.

Once that signal is received, trust follows.

In many cases, the most difficult part of the patient journey (establishing confidence) has already been completed before the consultation begins.

This is powerful when correct.

It is problematic when not.

Clinicians with limited surgical volume or outcome data may be presented alongside, or even above, those with extensive experience and measurable results.

The distinction is not always visible to the patient.

The Problem with Self-Reported Authority

Healthcare has historically relied on self-description.

Websites, profiles and marketing materials allow clinicians to present their expertise directly to patients. But AI systems are increasingly sceptical of this model.

Because anyone can publish content online, AI places greater weight on third-party validation:

- Independent reviews
- Media coverage
- Published outcomes
- External citations

This represents a structural shift.

It is no longer enough to say you are an expert.

The digital ecosystem must support that claim.

Bias, Data and the Risk of Distortion

AI systems reflect the data on which they are trained.

This means they inherit both the strengths and the weaknesses of that data.

Clinicians who are more visible online, more frequently cited or more extensively represented may be disproportionately recognised. Others, despite equivalent or greater expertise, may be underrepresented.

AI does not intentionally create bias.

But it can reinforce it.

In healthcare, this has real consequences, influencing who patients see, what they believe and ultimately the decisions they make.

Why This Matters in Ophthalmology

Ophthalmology is one of the most measurable specialties in medicine.

We have objective metrics, validated datasets and national benchmarks such as the National Ophthalmology Database. Surgical outcomes can be quantified, compared and audited.

In theory, this should make representation straightforward.

In practice, these data are not always visible within the digital layer that AI draws from.

As a result, the distinction between high-volume, data-driven surgical practice and lower-volume providers is not always clearly communicated.

For patients, that distinction matters.

AI Is Rewriting Healthcare Trust, But Is It Getting It Right?

This is the central question.

AI is already shaping patient perception at scale. But accuracy is not guaranteed.

Trust is being constructed from:

- Patterns
- Citations
- Digital visibility

But not always from:

- Outcomes
- Experience
- Measurable performance

If left unaddressed, this risks creating a system where perceived expertise diverges from actual expertise.

Citations Are the New Backlinks

In the past, digital authority was built through search engine optimisation, including backlinks, keywords and domain strength.

In the AI era, a new currency is emerging: citations.

AI systems prioritise information that is:

- Referenced across multiple credible sources
- Repeated consistently
- Externally validated

The more often a clinician or organisation is cited within trusted contexts, the more likely they are to be recognised accurately.

This changes the responsibility of clinicians.

Excellence must not only be delivered. It must be visible, verifiable and consistently referenced.

From Passive Presence to Active Curation

Healthcare professionals can no longer be passive participants in how they are represented online.

If AI is the front door to healthcare, then the accuracy of that front door matters.

This requires a shift:

- From visibility to verifiability
- From marketing to measurement
- From self-description to corroboration

Clinicians must actively contribute to the information ecosystem that AI relies upon by publishing outcomes, engaging in external validation and ensuring that accurate, high-quality content exists.

Conclusion: A New Responsibility

Artificial intelligence is not replacing clinicians.

But it is reshaping how patients find them, evaluate them and trust them.

That makes it one of the most influential forces in healthcare today.

The question is no longer whether AI will play a role in ophthalmology.

It already does.

The question is whether the version of reality it presents is accurate.

Because once AI has shaped a patient’s belief, the consultation is no longer the beginning of the journey.

It is the confirmation of a decision that has already been made.