Artificial intelligence has already arrived in higher education. Students use it, faculty use it, and institutions are investing heavily, but many are discovering that access alone is not enough.

In the past year, institutions have signed significant enterprise deals with major AI vendors. Tools are available, pilots have launched, and excitement is high. The most forward-looking institutions understand that handing out a chatbot isn’t the same as building a strategy for learning. When adoption is treated as a purchase rather than a teaching and learning shift, the promise of the technology falls short. The tools are there, but the framework to use them well often isn’t.

What is unfolding in higher education now is not about resistance or fear of change. It is a stress test, a real-time demonstration of what happens when transformative technology meets systems never designed to absorb it. The lesson extends beyond universities. It previews what happens in any sector that treats AI as an additive layer rather than a force that demands rethinking the foundation.

The Opportunity in Context

Understanding why AI adoption falters requires looking at the institutional context AI must fit into.

Large-scale AI deployment is hard to get right, not because the technology is flawed, but because institutions are complex. Higher education operates on shared governance, accreditation, academic freedom, and pedagogical norms that have evolved over decades. For AI to thrive, it must align with those structures.

Instructors who understand how a tool functions within that environment, help shape its use, and see how it supports their teaching become champions. Those handed a tool without context or agency tend to set it aside.

The questions faculty are asking are instructive: How does this tool shape collaboration? How are outputs monitored? Where does student data go? Who owns the intellectual product of AI-assisted work? These are not barriers to adoption—they are the questions that, when answered well, make adoption durable. The organizations that treat those questions as design inputs rather than objections are the ones building AI programs that will last.

From AI-Enabled to AI-Native

Most institutions today are operating in an AI-enabled environment, where AI is layered onto existing systems as an enhancement. Tools are available, generative features are enabled, but the core structure of how learning happens remains mostly unchanged.

An AI-native ecosystem is different. It does not ask how AI fits into the existing system; it asks what the system should look like if designed from the start around the reality that AI is a permanent and active participant in how people learn and work.

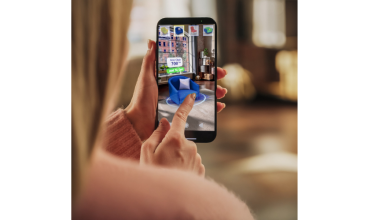

The hallmark of an AI-native classroom is purposeful collaboration: humans and AI working visibly and collectively toward learning goals. Students work in environments where multiple AI models are accessible within the same workflow: GPT for creative work, Claude for analysis, specialized models for domain tasks. AI use is transparent, the development of ideas is traceable, and faculty guide the collaborative process. The question shifts from “How do we monitor AI use?” to “How do we teach students to lead and manage human-AI teams?” That is a far more productive place to be.

This matters because the modern workforce already operates in AI-native teams. Workers with advanced AI skills command a 56% wage premium, and productivity growth has nearly quadrupled in industries most exposed to AI since 2022. Graduates who can orchestrate multiple models, evaluate outputs, and apply human judgment where it matters will not just be prepared; they will be ahead.

Trust as the Foundation for Scale

None of this works without trust. Trust is built through transparency, shared ownership, and AI that visibly serves the mission rather than complicating it.

AI is already boosting productivity and narrowing experience gaps, but only when used with proper structure and oversight. When instructors see how AI functions in a governed environment and can point to real improvements in student learning, engagement deepens. AI moves from something happening to the institution to something the institution actively shapes.

This is especially visible in community colleges and workforce-oriented programs, where the connection between learning and employment is immediate. These institutions approach AI with practical clarity, preparing students for roles in healthcare, manufacturing, and business operations, environments where AI use is already growing. For them, AI literacy is not a theoretical concern. It is a workforce competency that delivers a competitive advantage for graduates.

Collaborative AI Ecosystems and Institutional Memory

The future of institutional AI will not be defined by a single tool but by collaborative ecosystems: governed environments where multiple models, infrastructure partners, and domain-specific capabilities work together in service of a shared mission.

Universities are long-cycle organizations. They cannot rebuild curriculum around every new model release, nor should they have to. A collaborative ecosystem gives institutions flexibility to evolve with technology without dismantling what they’ve built. It separates the governance layer from the tool layer, allowing adoption of new capabilities, whether GPT-5.4, Claude 4.6, or NVIDIA’s Nemotron, without starting over.

In practice, this means designing AI as a shared workspace rather than a private assistant. When AI interactions are visible across a classroom or team, accountability and collaboration reinforce each other. Faculty can assess the process of learning, including the prompts, iterations, and evaluations, rather than just the final output. Students develop habits of transparent, responsible AI use that carry directly into professional life.

And critically, the knowledge persists. In most enterprise AI tools, when a team member leaves, their AI interactions leave with them. When someone new joins, they start from zero. However, in an AI-native ecosystem, institutional memory is preserved. Knowledge banks, shared context, and collaborative workflows belong to the institution, not the individual. This transforms AI from a series of ephemeral chats into a compounding institutional asset.

Governance as a Growth Strategy

Governance is often seen as slowing innovation. In institutional environments, whether a courtroom, a combat formation, or a classroom, the opposite is true: governance is what makes innovation scalable.

Institutions that design structured AI-native environments are better positioned to experiment ambitiously. They can pilot new models within clear parameters, measure outcomes across courses and cohorts, and build on what works with confidence. Over time, that creates a compounding advantage: faculty develop deeper fluency, students graduate with richer AI experience, and the institution builds a culture of responsible innovation that attracts both talent and partnership.

The broader AI industry is watching higher education closely for exactly this reason. It’s one of the first sectors working through the full complexity of large-scale AI adoption (the governance questions, trust dynamics, and workforce implications) in real time. The institutions navigating it well are not just adopting AI faster; they’re modeling what thoughtful, durable AI integration looks like.

Capability opens the door. Governance keeps it open. Institutions moving toward collaborative, AI-native ecosystems aren’t just preparing for the next few years; they’re shaping how AI is used, understood, and trusted for the decades ahead. That’s not an incremental advantage. It’s a defining one.

Author Bio:

France Hoang is a combat veteran, White House lawyer, university trustee, and Distinguished Visiting Lecturer at West Point. As Founder and CEO of BoodleBox, he advocates for AI-native learning environments designed around governance, collaboration, and institutional trust. His work centers on helping institutions move beyond tool adoption toward durable, transparent AI ecosystems that empower both students and educators.