In today’s rapidly evolving media landscape, artificial intelligence is not just transforming journalism—it’s fundamentally reshaping how stories are told. As we navigate the complexities of modern newsrooms, it’s crucial to understand that this paradigm shift demands our attention.

Stop. Wrong. Return to sender. Go back.

That’s how the algorithm would open this piece. Bloodless. Safe. Optimized for nothing.

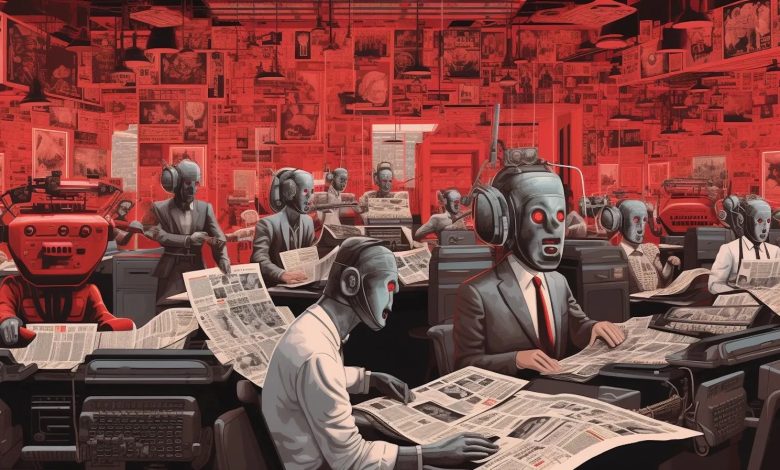

The AI newsroom skips journalism entirely. It goes straight for the writer’s brain.

You start writing the way the machine would write before the machine ever sees the draft. This is muscle memory that showed up uninvited somewhere between my third rejected pitch and the dashboard ranking my byline against twenty-two other writers.

The real opening:

The pitch I would have sent three years ago now feels like career suicide. AI can research faster, draft cleaner, and predict what will move before I finish my coffee. It gives me speed I didn’t have, scale I couldn’t manage, and data I couldn’t ignore. It shows me exactly what works and why. The problem isn’t that it’s wrong. The problem is that it’s right.

Now We’re Telling the Truth

Tuesday morning, 6:47 a.m. I’m staring at a draft that feels wrong. The lead is built for someone who will actually sit with the piece. I rewrite it before my editor wakes up. Sharper. More reactive. Built to travel.

No one asked me to change it. I changed it because I’ve learned what moves and what dies quietly. Every writer I know is doing this same edit. We’re becoming our own first algorithm.

AI’s accuracy becomes the problem. The system correctly identifies what performs. When I soften my opening, engagement goes up 34%. When I add a question instead of a statement, click-through doubles.

AI isn’t lying to me. It’s teaching me exactly what works. What the data doesn’t show is what I’m optimizing away: the sentence that makes someone stop scrolling, the idea that takes three paragraphs to land, the argument that requires a reader to sit with discomfort.

I’m not fighting bad information. I’m fighting accurate information that’s retraining my instincts to value performance over significance.

What Happens to Your Brain

Using AI changes how you think, and the shift is physical.

When you write knowing an algorithm will score every word, your brain starts optimizing for the machine before it considers the human on the other end. Neuroscience has a name for the brain’s habit of guessing ahead: predictive processing. I call it watching myself become boring in real time.

The language flattens first. Then the thinking follows. My average sentence length dropped 23% in six months. I know because I measured it, like the data-obsessed writer I’ve become. My metaphor use fell 40%. I didn’t consciously decide to stop using figurative language. My brain just learned that “leadership pipeline” performs better than “the narrow hallway where ambition goes to wait.”

The algorithm rewards efficiency. So I write efficiently. Clean. Direct. Optimized. Dead.

I used to write sentences that required a second read. Now I write sentences designed to be skimmed. The machine taught me this is better. My engagement metrics agree. My writing doesn’t.

Speed Rewires Your Nervous System

Reuters and the Associated Press integrated AI into breaking news workflows. They talk about efficiency gains. They don’t talk about what happens to your body when the metrics update every thirty seconds.

The turnaround on breaking news collapsed from six hours to eighteen minutes. There’s no space left for doubt. Reflection costs you the story. Hesitation reads as slow.

Heart rate spikes when breaking news hits. Hands shake refreshing metrics. Breathing goes shallow waiting for engagement numbers. My brain learned to trust whatever arrives first because second place doesn’t exist anymore.

Classical conditioning. Pavlov’s dogs salivated at a bell. I get an adrenaline dump from a Slack notification. The reward system in my brain now fires for speed, not accuracy. For reaction, not reflection.

Last month I published a correction forty-seven minutes after a story went live. My editor called it “remarkably fast response time.” I called it what it was: I hadn’t waited to confirm the story with a second source, because the algorithm taught me that fast beats right.

The Language Police in Your Head

I’ve become obsessed with spotting AI language. Not just in my own writing. In everyone’s.

“Navigate the landscape.” “Multifaceted challenges.” “It’s important to note that.” These phrases flag immediately now. They’re the fingerprints AI leaves behind. Smooth, competent, utterly forgettable.

I catch myself writing “In today’s rapidly evolving” and delete it before I finish the sentence. Binary constructions like “not only…but also” get cut on sight. Any sentence that starts with “However” or “Moreover” feels like a confession that a chatbot wrote my first draft.

The problem runs deeper than cleanup. I’m spending creative energy scanning for robot language instead of generating human language. Every paragraph gets two reads now: one for meaning, one for mechanical tells. Does this sound like ChatGPT? Did I just write three perfectly balanced sentences in a row? Is my rhythm too predictable?

My colleague calls this “the reverse Turing test.” We’re proving we’re human by identifying what makes us sound like machines. The irony: this is time I used to spend making the work better. Now I spend it making the work sound like a person made it.

Which raises the harder question. Every time I use AI to draft a section or generate headlines, the responsibility gets murky. My name goes on the byline. But how much of my actual judgment made it into the final piece? Half? Seventy percent?

Trust used to mean you knew who was talking to you. Now it means you hope someone competent reviewed what the algorithm produced. Readers feel the difference even when they can’t name it.

When a reader asks, “Did you write this, or did a machine?” I say I wrote it. Technically true. Also technically a lie.

The Editor’s Evolution

Editors aren’t disappearing. Their function is changing in ways that make some of them better and others obsolete.

The best editors stopped fixing comma splices and started asking whether a story should exist at all. In an AI newsroom, good judgment is rare. Editors who can spot what matters before the algorithm does gain value. Editors who only fix grammar are already being automated out.

New job titles appear in Slack channels. AI Editorial Strategist. Prompt Architect. Human Validator. These roles didn’t exist two years ago. The editor’s authority moved upstream to framing, integrity, and context. Everything downstream got handed to the machine.

Three desks away, an editor I respect watches engagement metrics instead of reading drafts. One story hits 847 shares in twelve minutes. Another sits at 23. She pulls up an AI-generated rewrite without opening the original file. She hesitates for three seconds. Her mouse hovers. Then she clicks publish.

This is Wednesday. This is also Monday, Thursday, and Friday. The editor who used to argue with me about story angles now argues with a dashboard. I’ve watched her editorial instinct atrophy over eight months. She used to trust her gut. Now she trusts the data. The data is usually right. Her gut forgot how to be wrong.

The Unexpected Benefits

Here’s what nobody admits: some of this is better.

AI freed me from grunt work I hated. Transcription. Initial research sweeps. Formatting citations. The tedious scaffolding that used to eat hours of every day. That time went back to reporting. To thinking. To actually talking to people instead of processing their quotes.

And occasionally, the AI catches something I miss. A pattern in data I would have ignored. A connection between sources I didn’t see. A framing angle that’s genuinely sharper than my first draft. I hate admitting this. But it’s true.

The problem isn’t that AI has no value. The problem is that the value comes with a cost nobody’s tracking. I’m producing three times the volume I used to. I don’t know yet if any of it will matter in five years.

What I Actually Control

One function remains entirely mine. I decide what I notice and what I ignore.

AI can detect patterns in millions of data points. It cannot decide why something matters to a human being without inheriting someone’s values. Usually mine. Sometimes the engineer who built the model. Often in ways neither of us intended.

The real risk isn’t replacement. The risk is that I outsource judgment to systems optimized for reaction speed instead of lasting significance.

I feel it in every choice. Sitting on a story to verify one more source, knowing the window is closing. Deciding whether a headline should inform or inflame. Picking which communities get coverage and which get ignored because the data says they don’t engage.

AI reflects whatever I already prioritize, then accelerates it. If I prioritize performance, the system amplifies performance. If I abandon moral framing and chase metrics, the algorithm will happily comply.

The machine can’t tell me what matters. It can only tell me what moves. I’m the only one in this equation who can still tell the difference.

The Choice That’s Already Being Made

AI doesn’t decide the future of journalism. I do. Every sentence. Every pitch. Every time I soften an edge before anyone asks.

The question isn’t whether AI will replace journalists. The question is what kind of journalist I’m choosing to become inside this system. Whether I’ll remain a primary agent of judgment or become an adaptive component inside an optimized workflow.

The AI newsroom is already here. What remains undecided is whether I’ll lead it or quietly learn to fit.

That decision happens alone, at 6:47 a.m., in the moment before I rewrite the opening. No one’s watching. But everything’s being measured.