Artificial intelligence is in its infancy. Although it is advancing at an exponential rate, threatening jobs, and reinventing the modern workforce, AI is still a child. Not only is it a child, but we, the masses, are the ones responsible for raising it. We look at the big tech companies in Silicon Valley and expect them to be in charge of its ethical growth and development. Why?

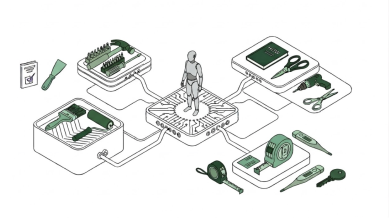

There’s a common belief that AI is the sole responsibility of big tech companies like Anthropic or OpenAI. While these companies build the infrastructure, train foundational models, and set guardrails, the systems themselves are also shaped by the people who use them. Artificial intelligence learns from the collective input of hundreds of thousands, if not millions, of users. Interactions can become data points that help later versions of a model evolve and develop cultural nuance. From C-suite executives using AI to analyze complex business data to individuals at home generating playful or whimsical images, what is fed into these systems can teach them something.

So the claim that responsibility falls solely on the tech companies is not entirely true. Models are constantly evolving and learning from the inputs of the many, not just the few. AI belongs to all of us, and it is a shared responsibility.

If we think of AI as a child that belongs to all of us, the comparison becomes clearer. Some traits are unique to each child, but it is the nurture children receive and the experiences they share that shape their personalities, interests, and knowledge. If a child grows up in an environment where education and nurture are promoted, chances are the child will grow up inquisitive, educated, and open-minded.

The parallel holds true for AI. What users feed into an AI model directly affects that model and the outputs it provides. In the case of AI, it boils down to: good information in, good information out. The inverse is true as well: feeding in bad or false information will lead to AI hallucination and bad outputs.

AI will become what we collectively teach it to be. Responsibility does not belong only to companies like OpenAI and Anthropic; it belongs to every person who interacts with these systems.

Researchers at the University of Exeter found that when AI hallucinates responses, it isn’t solely the model’s fault. It has just as much to do with falsehoods introduced by the user, which reinforces the notion that the user is equally responsible for the maturation of AI.

If you were to ask a model to break down a financial model built on incorrect numbers, the answers presented would be incorrect as well. That’s the human’s fault, not the model’s. OpenAI reported that a model’s outputs are based on levels of confidence. If a model has limited information on a topic, it will use its limited knowledge to create an answer anyway. According to the research, OpenAI’s o4-mini model, which abstained only 1% of the time (meaning it almost never declined to answer), had a 75% error rate. The only way these models will be able to continually gain confidence and become more accurate is if the millions of daily users are feeding them factually correct data points to evolve on.
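To make the o4-mini figures concrete, here is a minimal Python sketch of the arithmetic. It assumes every question ends in exactly one of three outcomes (a correct answer, a wrong answer, or an abstention), so the three shares add up to 100%. The function name `answer_breakdown` and its dictionary keys are hypothetical, chosen only for illustration.

```python
def answer_breakdown(abstention_rate: float, error_rate: float) -> dict:
    """Split all questions into abstained, wrong, and correct shares.

    Assumption: each question is either answered correctly, answered
    incorrectly, or abstained on, so the three shares sum to 1.
    Both inputs are fractions of ALL questions asked.
    """
    if not (0.0 <= abstention_rate <= 1.0 and 0.0 <= error_rate <= 1.0):
        raise ValueError("rates must be between 0 and 1")
    if abstention_rate + error_rate > 1.0:
        raise ValueError("abstention and error rates cannot exceed 100% combined")

    answered = 1.0 - abstention_rate   # share of questions the model attempted
    correct = answered - error_rate    # what remains after wrong answers
    # Error rate measured only over the questions the model chose to answer.
    error_among_answered = error_rate / answered if answered > 0 else 0.0

    return {
        "abstained": abstention_rate,
        "wrong": error_rate,
        "correct": correct,
        "error_among_answered": error_among_answered,
    }


# Figures quoted in the article: 1% abstention, 75% error rate.
breakdown = answer_breakdown(abstention_rate=0.01, error_rate=0.75)
for name, share in breakdown.items():
    print(f"{name}: {share:.1%}")
```

Under that assumption, a model that almost never abstains and is wrong 75% of the time is right on only about 24% of questions. Of the questions it actually attempts, roughly 76% come back wrong.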

We cannot expect, nor should we wait for, the large tech conglomerates to perfect their models. The best way for LLMs to grow is through exposure, and bad information will only corrupt that maturation. Ultimately, we must all treat AI as a child and shape it to be its best version.