The Shift Toward Distributed Systems

For decades, enterprise systems were built around centralized infrastructure. Applications, databases, and analytics typically lived in large data centers or hyperscale cloud regions. That architecture worked well when most computation happened in predictable environments with reliable connectivity.

Today, however, computing is increasingly distributed. Devices, sensors, robots, retail systems, and industrial equipment generate enormous volumes of data far from centralized infrastructure. Edge computing has emerged as a response to this shift, placing compute resources closer to where data originates.

This change is not merely a deployment preference. It fundamentally alters how systems must communicate, synchronize, and recover from failure.

Why the Edge Changes Everything

Edge computing moves data processing closer to its source rather than sending everything to a central cloud. This approach shortens response times and can decrease bandwidth usage for data-heavy workloads.

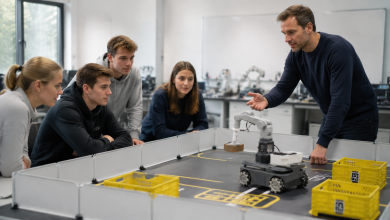

In practical terms, this means decisions can be made where the data is generated. Autonomous vehicles, factory robots, and remote monitoring systems often cannot wait hundreds of milliseconds for cloud responses.

The edge also introduces new operational realities. Systems must function even when connectivity is unreliable or temporarily unavailable.

In distributed environments, failure is not an exception—it is an expectation.

Edge AI Is Accelerating the Trend

Artificial intelligence is accelerating the move toward edge architectures. Many modern applications rely on real-time inference performed directly on devices or nearby infrastructure.

Edge AI refers to deploying machine learning models locally on devices such as sensors, gateways, or embedded systems. This allows applications to analyze data immediately without continuous reliance on cloud resources.

The benefits extend beyond speed. Processing data locally can improve privacy, reduce transmission costs, and enable autonomous decision-making even when connectivity is lost.

Industries from manufacturing to healthcare are exploring these capabilities. Real-time inspection systems, predictive maintenance platforms, and intelligent infrastructure all benefit from localized intelligence.

But AI workloads also amplify the challenges of distributed systems.

The Hidden Complexity of Edge Architectures

The cloud simplified infrastructure by centralizing resources. The edge does the opposite—it multiplies the number of nodes, locations, and failure domains.

A modern edge system may span:

- Devices and sensors

- Local gateways

- Regional compute clusters

- Central cloud environments

Each of these layers may have different constraints around bandwidth, power, latency, and security.

The network itself becomes unpredictable. Nodes can disappear temporarily due to connectivity issues, maintenance, or mobility.

Architectures that assume perfect connectivity or centralized control struggle in these environments.

Event-Driven Systems as a Natural Fit

Event-driven architectures offer a natural way to design systems that operate across distributed environments.

Instead of tightly coupling components through direct requests, event-driven systems communicate through asynchronous messages or events. Because a producer does not wait for its consumers to respond, this decoupling allows each component to continue operating even when other parts of the infrastructure become unavailable.

In distributed environments, this property becomes essential.

Events can represent anything meaningful in a system: a sensor reading, a device state change, a model inference result, or an operational alert.

By treating system interactions as streams of events, organizations can build architectures that adapt more gracefully to distribution and scale.
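The decoupling described above can be sketched in a few lines of Python. This is a minimal in-process illustration, not a production broker: the `EventBus` class, the `sensor.reading` topic, and the 80 °C threshold are all hypothetical names and values chosen for the example.

```python
from collections import defaultdict, deque
from dataclasses import dataclass
from typing import Any, Callable, Deque, Dict, List


@dataclass(frozen=True)
class Event:
    topic: str    # hypothetical topic name, e.g. "sensor.reading"
    payload: Any


class EventBus:
    """In-process stand-in for a message broker (illustrative only)."""

    def __init__(self) -> None:
        self._handlers: Dict[str, List[Callable[[Event], None]]] = defaultdict(list)
        self._pending: Deque[Event] = deque()

    def subscribe(self, topic: str, handler: Callable[[Event], None]) -> None:
        self._handlers[topic].append(handler)

    def publish(self, event: Event) -> None:
        # The producer only enqueues; it never calls a consumer directly.
        self._pending.append(event)

    def dispatch(self) -> int:
        """Deliver queued events to subscribers; return the number of deliveries."""
        delivered = 0
        while self._pending:
            event = self._pending.popleft()
            for handler in self._handlers.get(event.topic, []):
                handler(event)
                delivered += 1
        return delivered


bus = EventBus()
overheated = []


def on_reading(event: Event) -> None:
    # Assumed rule for the example: flag readings above 80 degrees Celsius.
    if event.payload["temp_c"] > 80:
        overheated.append(event.payload)


bus.subscribe("sensor.reading", on_reading)
bus.publish(Event("sensor.reading", {"temp_c": 72}))
bus.publish(Event("sensor.reading", {"temp_c": 91}))
bus.dispatch()
```

The point of the sketch is that `publish` returns immediately whether or not any consumer is ready. In a real deployment the queue would typically live in a broker or on local storage rather than in memory, and events with no subscriber would likely be retained rather than dropped as they are here.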

From Edge to Core: Designing for Data Flow

One of the central challenges in edge systems is determining where processing should occur.

Not all workloads belong at the edge. Some tasks, such as large-scale analytics or model training, require centralized resources.

A common architectural pattern is edge-to-core processing. In this model:

- Immediate decisions occur locally

- Aggregated data moves toward regional or cloud systems

- Long-term analysis and coordination happen centrally

This layered approach reflects the strengths of each environment.

Edge systems handle real-time responsiveness. Core systems provide deeper analysis and global coordination.

The design challenge lies in managing the flow of events between these layers.
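One way to picture the split is a single function that runs on an edge node, makes the immediate decision itself, and produces a compact summary for upstream systems. The sketch below is only an illustration under assumed names: `process_at_edge`, the `limit` parameter, and the summary fields are hypothetical.

```python
from statistics import mean
from typing import Dict, List, Tuple


def process_at_edge(readings: List[float], limit: float) -> Tuple[List[int], Dict[str, float]]:
    """Return (indices of readings that need immediate action, summary to send upstream)."""
    # Immediate decision, made locally: which readings exceed the limit?
    alarms = [i for i, value in enumerate(readings) if value > limit]

    # Aggregated data for regional or cloud systems: a few numbers instead of every sample.
    if not readings:
        return alarms, {"count": 0}
    summary = {
        "count": len(readings),
        "min": min(readings),
        "max": max(readings),
        "mean": mean(readings),
    }
    return alarms, summary


alarms, summary = process_at_edge([70.0, 85.0, 75.0], limit=80.0)
```

Here the alarm list would drive a local action right away, while only the four-field summary needs to cross the network. Which statistics are worth forwarding depends on what the central analysis actually needs.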

Intermittent Connectivity Is the Norm

Traditional enterprise architectures often assume continuous connectivity between services.

Edge environments cannot rely on that assumption.

Devices may operate in remote or hard-to-reach locations such as offshore platforms, manufacturing plants, or transportation networks. Connectivity interruptions may last seconds, minutes, or longer.

Architectures must therefore tolerate temporary partitions.

Systems designed with asynchronous messaging and local buffering can continue operating independently during outages. Once connectivity returns, data can synchronize with upstream systems.
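A store-and-forward buffer is one common way to get this behavior. The following is a minimal sketch, assuming a hypothetical `send` callable that raises `ConnectionError` while the uplink is down; the class name and the `max_buffered` limit are also assumptions for the example.

```python
from collections import deque
from typing import Callable, Deque


class StoreAndForward:
    """Buffer messages locally and forward them in order when the uplink works."""

    def __init__(self, send: Callable[[dict], None], max_buffered: int = 1000) -> None:
        self._send = send
        # Bounded buffer: when full, the oldest message is discarded.
        self._buffer: Deque[dict] = deque(maxlen=max_buffered)

    def record(self, message: dict) -> None:
        # Always accept the message locally, then try to drain the buffer.
        self._buffer.append(message)
        self.flush()

    def flush(self) -> int:
        """Send buffered messages oldest-first; stop at the first failure."""
        sent = 0
        while self._buffer:
            try:
                self._send(self._buffer[0])
            except ConnectionError:
                break  # still offline; keep the message for the next attempt
            self._buffer.popleft()  # remove only after a successful send
            sent += 1
        return sent

    @property
    def pending(self) -> int:
        return len(self._buffer)
```

Because a message is removed only after `send` returns, a failure partway through can lead to a message being sent twice, so upstream consumers would need to tolerate duplicates. An in-memory deque also loses its contents on restart; a real edge node would more likely persist the buffer to disk.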

This resilience is one of the defining design requirements for edge-native systems.

Security and Data Sovereignty

Edge deployments also raise new questions around data ownership and governance.

Processing data locally can improve privacy by preventing sensitive information from being transmitted to centralized systems unnecessarily.

For industries with strict compliance requirements, this capability is particularly valuable.

Healthcare devices, industrial sensors, and smart infrastructure often generate data that cannot easily be centralized due to regulatory constraints.

Edge architectures allow organizations to retain control over data while still benefiting from advanced analytics and AI.
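As a small illustration of keeping sensitive fields local, an edge node can strip them from a record before anything is transmitted. The field names below (`patient_id`, `name`) and the `minimize` helper are hypothetical; what counts as sensitive depends entirely on the applicable regulations.

```python
from typing import Any, Dict, FrozenSet

# Hypothetical set of fields that must not leave the device.
SENSITIVE_FIELDS: FrozenSet[str] = frozenset({"patient_id", "name"})


def minimize(record: Dict[str, Any], sensitive: FrozenSet[str] = SENSITIVE_FIELDS) -> Dict[str, Any]:
    """Return a copy of the record without the sensitive fields."""
    return {key: value for key, value in record.items() if key not in sensitive}


local_record = {"patient_id": "p-17", "name": "A. Example", "heart_rate": 64}
outbound = minimize(local_record)
```

Dropping fields is only the simplest form of data minimization and does not by itself make a record anonymous, since the remaining values can still be identifying in combination.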

However, distributing computation also expands the attack surface. Security models must account for devices operating in untrusted environments and networks with varying levels of protection.

Observability in a Distributed World

Monitoring distributed systems is significantly more complex than monitoring centralized applications.

Traditional observability tools assume that logs, metrics, and traces are easily accessible from centralized infrastructure.

Edge environments challenge this assumption. Telemetry itself must be treated as a distributed data stream.

Observability systems must therefore support:

- Local monitoring

- Aggregated telemetry pipelines

- Partial visibility during network partitions

Without these capabilities, diagnosing issues in large edge deployments becomes extremely difficult.
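Partial visibility can at least be made explicit. One simple approach, sketched below, is to track when each node last reported and label nodes as stale once they have been silent too long, instead of treating missing data as healthy. The function name, the node names, and the 60-second threshold are assumptions for the example.

```python
from typing import Dict


def classify_nodes(last_seen: Dict[str, float], now: float, stale_after: float = 60.0) -> Dict[str, str]:
    """Label each node 'reporting' or 'stale' based on the age of its last telemetry."""
    return {
        node: "reporting" if now - timestamp <= stale_after else "stale"
        for node, timestamp in last_seen.items()
    }


# Timestamps are seconds on an arbitrary shared clock (hypothetical values).
status = classify_nodes({"gw-1": 100.0, "gw-2": 10.0}, now=120.0)
```

A stale label says nothing about whether the node itself is healthy, only that the central view of it is out of date, which is exactly the distinction a partition-aware dashboard needs to show.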

The Economics of Edge Infrastructure

Beyond technical considerations, edge computing introduces new economic dynamics.

Operating thousands or millions of edge nodes requires careful attention to cost efficiency, deployment automation, and lifecycle management.

Unlike cloud environments where resources can be provisioned on demand, edge infrastructure often involves physical hardware deployed in diverse locations.

Organizations must consider:

- Hardware constraints

- Power consumption

- Physical security

- Maintenance logistics

These factors influence architectural decisions just as strongly as software design.

A Distributed Future

The shift toward distributed computing is unlikely to reverse.

Industry forecasts suggest that a growing share of enterprise data will be generated and processed outside traditional data centers.

Meanwhile, advances in AI, robotics, and intelligent infrastructure continue to push computation closer to where data originates.

In this environment, the distinction between “cloud” and “edge” becomes less important than the ability to design systems that operate seamlessly across both.

Distributed architectures must be resilient, event-driven, and adaptable to changing conditions.

Designing Systems That Expect the Unexpected

The most important lesson from edge computing may be philosophical rather than technical.

Centralized systems optimize for stability and predictability. Edge systems must assume instability and unpredictability.

Networks fail. Devices disconnect. Data arrives late or out of order.

Architectures that embrace these realities, rather than attempting to eliminate them, tend to prove more reliable over time.
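Out-of-order arrival is a good example of a reality that can be designed for. Assuming each producer stamps its events with a consecutive sequence number (an assumption of this sketch, as is the `ReorderBuffer` name), a receiver can hold early arrivals until the gap is filled and ignore duplicates:

```python
from typing import Any, Dict, List


class ReorderBuffer:
    """Release events in sequence order, holding back any that arrive early."""

    def __init__(self, first_seq: int = 0) -> None:
        self._next_seq = first_seq
        self._waiting: Dict[int, Any] = {}

    def push(self, seq: int, payload: Any) -> List[Any]:
        """Accept one event; return the payloads that are now deliverable, in order."""
        if seq < self._next_seq or seq in self._waiting:
            return []  # duplicate of something already released or already held
        self._waiting[seq] = payload
        released = []
        # Drain every consecutive event starting from the next expected number.
        while self._next_seq in self._waiting:
            released.append(self._waiting.pop(self._next_seq))
            self._next_seq += 1
        return released


buffer = ReorderBuffer()
first = buffer.push(1, "b")    # arrives early, held back
second = buffer.push(0, "a")   # fills the gap, releases both
third = buffer.push(1, "b")    # late duplicate, ignored
```

This sketch waits indefinitely for a missing sequence number. A practical version would likely add a timeout or a maximum buffer size so that one lost event cannot stall everything behind it.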

Event-driven designs, distributed messaging patterns, and decentralized decision-making provide the foundation for systems that remain operational even when parts of the infrastructure fail.

As computing continues to expand beyond the boundaries of the data center, these principles will become increasingly essential.

The Edge as a Design Paradigm

Edge computing is not simply about placing servers closer to devices.

It represents a broader shift toward distributed intelligence—systems where computation, data, and decision-making occur across many locations simultaneously.

Organizations building modern applications must therefore rethink how software communicates, how failures are handled, and where data processing occurs.

In this sense, living on the edge is not a trend. It is a design paradigm for the next generation of computing systems.