- Over 40% of users say AI hallucinations, mistakes and lack of detail persist

- Organizations adopt hybrid human and AI approach to validate AI apps

- 61% rely on humans, while 1/3 use LLM-as-judge methods

- 46% say human sentiment and usability determine when AI is production-ready

Applause, the global leader in managed software testing services and digital quality, today released its fourth annual State of Digital Quality in Testing AI report, which shows that although 55% of organizations have released AI-powered applications and features, 52% of respondents say fewer than half of their AI projects reach full production. This tension is reflected in user sentiment: 40% said that while AI tools boost productivity by more than 75%, quality issues are on the rise.

Based on a survey of more than 1,000 developers and QA professionals and over 4,000 consumers, the report found that AI quality is not keeping pace with AI’s accelerated adoption across markets. 40% of users experienced hallucinations, up from 32% in 2025. Additionally, 46% said AI misunderstood their prompts, now the most reported issue, while 41% said responses lacked sufficient detail.

84% of generative AI users say multimodal functionality — the ability to process and generate text, images, audio and video — is critical, putting additional pressure on QA teams. The report also found that scaling AI initiatives, including the two most common — chatbots and customer service tools — remains a challenge. 52% of respondents said fewer than half of their AI projects make it from proof of concept to full production.

Organizations embrace AI + human testing and evaluation

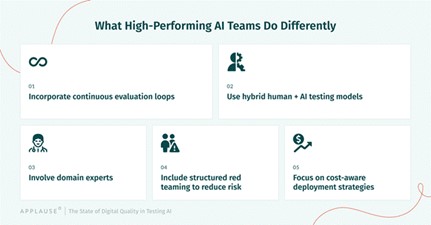

As organizations accelerate the adoption of AI testing techniques to validate new AI products, evaluation by humans remains the most widely used approach, with 61% of organizations relying on human input to validate AI performance. Meanwhile, 33% use LLM-as-judge methods, where multiple models assess AI outputs in parallel to uncover blind spots. Despite this mix of approaches, testing strategies are still struggling to keep pace with the speed and complexity of AI development — leaving critical gaps in how these systems are validated at scale.

To close the gap, teams are combining AI-driven and human-led testing approaches. These include fine-tuning with synthetic (29%) and human-generated (54%) data, human-led (39%) and automated (23%) red teaming, AI-first testing agents (30%) and human-in-the-loop monitoring (31%). Human insight remains central to the AI QA process. Nearly half of organizations (46%) reported that human sentiment and usability are the primary factors in determining whether an AI feature is ready for production, far outweighing purely technical benchmarks.

AI momentum builds, but quality testing gaps emerge

“Testing AI isn’t just about accuracy — it’s about evaluating complex, multimodal outputs at scale,” said Chris Munroe, VP of AI Programs, Applause. “LLM-as-judge systems are becoming an important part of that process, but they can’t operate in isolation. Without human oversight, you risk reinforcing the same blind spots you’re trying to detect. In addition to human-led evals and fine-tuning, structured red teaming by both domain experts and generalists is essential.”

The report highlights a fundamental shift: AI is forcing organizations to rethink how quality is defined and validated. Unlike traditional software, AI is probabilistic and non-deterministic, so conventional testing methods alone are no longer sufficient. AI testing tools used without human review will miss what only humans can catch.

“AI development isn’t slowing down, but quality is falling behind,” said Chris Sheehan, EVP of High Tech and AI at Applause. “Teams are pushing AI into production before they’ve figured out how to properly test it. That’s why we’re seeing more failures and more risk reaching users. AI adds speed and scale, but human evaluation is what earns trust — you need both. The companies getting it right combine AI with domain expertise to evaluate and fine-tune their systems, ensuring outputs are more relevant, accurate and inclusive.”