Debate around AI tends to focus on models: how capable they are and how quickly they improve. Less attention is paid to the infrastructure required to train and operate them. Yet the direction of recent investment makes that imbalance hard to ignore. In 2026, Amazon, Google, Meta and Microsoft together are expected to spend around $650 billion on data centres, chips and power for AI workloads, a roughly 60 per cent increase on the previous year and more than the combined capital expenditure of over 20 major US industrial companies.

Spending at that level only makes sense in a market where access to compute is difficult to secure. Training and running modern AI systems require hardware that is slow to produce, expensive to deploy and tied to local power conditions.

New data centres take years to plan and build, and advanced chips pass through extended supply chains before they ever reach deployment. Power infrastructure adds another layer of constraint, determining not only where capacity can operate but how quickly it can expand. As a result, capital is committed well ahead of demand, with access rather than ambition setting the pace.

Buyers able to make those commitments secure early access to land, power and successive GPU generations, and their procurement cycles set the terms under which capacity is allocated. Smaller teams plan around whatever capacity they can get. Workloads are resized, training runs are split up and timelines move as access becomes available.

Universities and public research groups compete with commercial labs for the same hardware, often squeezing work into the gaps left by larger buyers. Regional operators with spare rack space frequently remain outside large contracts, as procurement processes favour buyers able to commit to multi-year volumes.

Access to compute increasingly determines which organisations can pursue ambitious AI work and which are forced to narrow their plans.

When capacity limits what can be built

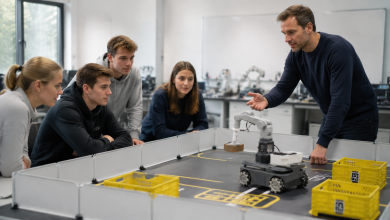

Research agendas often track what available hardware can support rather than the full range of problems AI might address. Teams working on healthcare, manufacturing, climate modelling or local-language systems frequently find that limited access to compute constrains the scope and duration of experimentation.

The practical effect is that certain kinds of work advance more slowly than others. Projects that fit within existing infrastructure move ahead, while those that require longer training runs or specialised configurations are deferred or abandoned. Innovation increasingly follows what can be run reliably, rather than what might deliver the greatest long-term value.

Where capacity exists

AI compute sits in more places than the largest cloud platforms. Beyond the hyperscalers lies a wide spread of hardware: GPUs running in regional data centres, enterprise clusters built for peak internal demand and specialist operators serving focused markets. Much of this equipment runs well below full utilisation, even as teams elsewhere wait months for capacity.

Reaching that capacity means working through procurement models designed for long contracts, fixed configurations and buyers with predictable, high-volume needs. Teams with shorter planning cycles or variable workloads rarely fit those patterns, even when the hardware itself is a good match. The result is a market where available supply and active demand often sit side by side without connecting.

Why access affects cost and competitiveness

Access constraints have direct consequences for how AI projects are priced, timed and scaled. When compute is scarce or allocated through long-term reservations, costs track availability rather than actual usage, leaving teams exposed to pricing they cannot easily predict. Budgeting becomes harder as infrastructure commitments extend well beyond development cycles.

Timing follows a similar pattern. Product launches, research milestones and internal deployments often depend on when capacity becomes available rather than when systems are ready. Training runs are delayed, workloads are reduced to fit existing allocations, and timelines stretch even when demand is clear.

Over time, these differences accumulate. Organisations with dependable access can iterate more frequently and move systems into production sooner. Others face higher unit costs and longer cycles despite comparable technical capability.

A different way to organise compute

Most compute today is sold through long-term agreements signed well ahead of use. Capacity is reserved in blocks and priced to suit buyers with steady, predictable demand. Teams whose needs change as products develop or research progresses tend to sit outside those arrangements.

Alongside this model, other approaches are taking hold. Compute can be organised across many independent operators rather than concentrated within a single platform, allowing workloads to be scheduled wherever suitable hardware is available. In this arrangement, providers contribute capacity into shared pools, performance is assessed through actual delivery, and access is governed by availability rather than fixed reservation.
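
To make that arrangement concrete, here is a minimal sketch in Python of how a shared pool might place a workload. It is an illustration under stated assumptions, not a description of any real platform: the `Provider` class, the `schedule` and `report` functions, the operator names and the neutral starting score of 0.5 are all hypothetical. It shows the three ideas in the paragraph above: providers contribute capacity to a pool, each is scored on what it has actually delivered, and a job runs wherever suitable hardware is free at that moment.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class Provider:
    name: str            # hypothetical operator name
    gpu_type: str        # hardware contributed to the shared pool
    free_gpus: int       # capacity available right now
    jobs_run: int = 0    # jobs completed or failed so far
    jobs_ok: int = 0     # jobs delivered as promised

    @property
    def delivery_score(self) -> float:
        # Score on actual delivery; an untested provider starts at an
        # assumed neutral 0.5.
        return self.jobs_ok / self.jobs_run if self.jobs_run else 0.5


def schedule(pool: List[Provider], gpu_type: str, gpus_needed: int) -> Optional[Provider]:
    """Place a job on the best-delivering provider with capacity free now."""
    candidates = [
        p for p in pool
        if p.gpu_type == gpu_type and p.free_gpus >= gpus_needed
    ]
    if not candidates:
        return None  # nothing suitable is free, so the job waits
    best = max(candidates, key=lambda p: p.delivery_score)
    best.free_gpus -= gpus_needed
    return best


def report(provider: Provider, gpus_released: int, delivered: bool) -> None:
    """Record a finished job's outcome and return its GPUs to the pool."""
    provider.jobs_run += 1
    provider.jobs_ok += int(delivered)
    provider.free_gpus += gpus_released


# Illustrative pool: all names and figures are made up.
pool = [
    Provider("regional-dc", "A100", free_gpus=16, jobs_run=10, jobs_ok=9),
    Provider("enterprise-cluster", "A100", free_gpus=64, jobs_run=10, jobs_ok=6),
    Provider("specialist-op", "H100", free_gpus=8),
]

chosen = schedule(pool, "A100", gpus_needed=8)
```

In this sketch the job lands on the smaller regional operator rather than the larger enterprise cluster, because the ranking rewards delivery history over sheer size. A real scheduler would also need to weigh price, location and data-transfer cost, none of which is modelled here.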

Large cloud platforms remain central to the ecosystem. Integrated services, tight control and guaranteed capacity continue to matter for many organisations. What changes is that compute no longer has to be sourced exclusively through one relationship. Teams can draw on multiple providers, adjust consumption as needs change and avoid committing infrastructure decisions years ahead of deployment.

The effect is incremental rather than dramatic. Capacity that would otherwise sit idle can be brought into use, and access begins to reflect how AI systems are actually built and operated rather than how procurement has traditionally been structured.

The question that now sits underneath AI decisions

As AI systems move into regular use, practical constraints show up early. Teams discover whether they can retrain models, increase throughput or respond to demand long before questions of architecture become decisive.

Today, access to compute is largely set by a small number of infrastructure owners. Organisations with long planning horizons and large balance sheets lock in capacity well ahead of use. Others build around what remains available, adjusting scope, timing and cost expectations to fit external constraints.

Decentralised compute has emerged from these conditions rather than from theory. Instead of relying on a single cloud provider, capacity is drawn from many independent operators, each contributing hardware into a shared pool. Workloads run where suitable GPUs are available, rather than where long-term contracts happen to exist.

The result is straightforward: teams spend less time waiting for infrastructure and more time building systems that work. Access to compute stops being a planning problem years in advance and starts behaving like the utility it was always meant to be.