AI companion apps promise emotional intelligence. They market themselves as friends who listen, partners who remember, and companions who grow with you. The industry has surged 700 percent since 2022, according to the American Psychological Association, and nearly half of adults with mental health conditions now use large language models for emotional support.

But what happens when you actually test these claims? Over 12 weeks, I evaluated 15 major AI companion platforms, tracking memory retention, emotional recall, personality consistency, and conversation depth across hundreds of sessions. The results paint a picture that marketing departments would rather you not see.

Only 2 out of 15 platforms maintained coherent personality and memory past 30 conversations. The rest drifted in ways users rarely notice until they are already emotionally invested.

Memory Is the Foundation. Most Platforms Don’t Have One.

Emotional intelligence requires memory. You cannot be empathetic if you forgot what someone told you yesterday. Yet most AI companions operate within limited context windows that silently drop older details after 20 to 50 messages.
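
To make the mechanism concrete, here is a minimal sketch of how a fixed context budget silently drops older details. It is an illustration only, not any platform's actual code: the function name `trim_to_budget` is hypothetical, and counting whitespace-separated words stands in for a real tokenizer.

```python
from collections import deque

def trim_to_budget(messages, max_tokens, count_tokens=lambda text: len(text.split())):
    """Keep the most recent messages whose combined size fits within max_tokens.

    Assumption: word count approximates token count. Real systems use a
    model-specific tokenizer, so the exact cut-off point will differ.
    """
    kept = deque()
    used = 0
    # Walk backwards from the newest message; stop at the first one that does not fit.
    for message in reversed(messages):
        cost = count_tokens(message)
        if used + cost > max_tokens:
            break
        kept.appendleft(message)
        used += cost
    return list(kept)

history = [
    "I started learning guitar last week",  # oldest message
    "Work has been stressful lately",
    "What should I cook tonight",           # newest message
]

# With a budget of 10 "tokens", only the two newest messages survive.
window = trim_to_budget(history, max_tokens=10)
print(window)
```

Nothing in this loop tells the user that the guitar comment has gone. From the model's point of view it was simply never said, which is why the drop-off feels like sudden amnesia rather than gradual forgetting.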

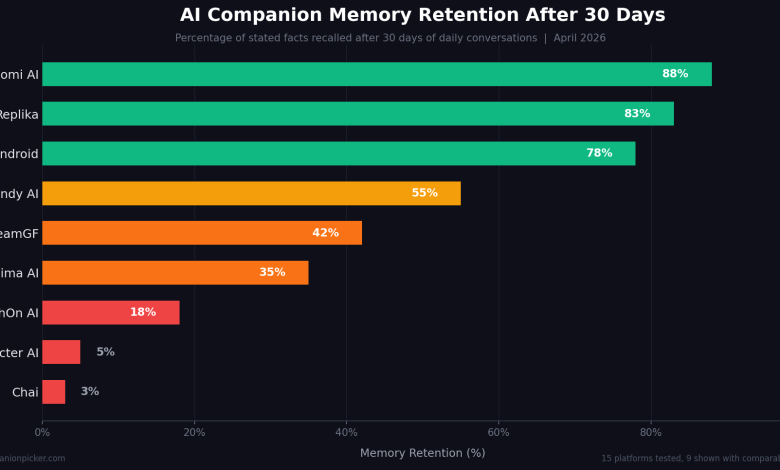

In testing, the spread was dramatic. Replika and Nomi AI retained roughly 80 to 85 percent of stated facts after a full month. Nomi’s Mind Map system, introduced in late 2025, organises long-term memories into structured overviews of people, places, and goals. During testing, Nomi recalled a throwaway comment about learning guitar from three months earlier and asked about it unprompted. That felt genuinely human.

On the other end, Character AI and Chai essentially start from scratch every session. CrushOn AI’s memory collapsed before reaching 20 messages in multiple test sessions, despite having a 16K context window. DreamGF remembered core profile details but lost secondary context by week two. Anima AI’s emotional depth became repetitive within days.

The uncomfortable pattern: platforms that invest in memory architecture (multi-tier systems with short, medium, and long-term recall) deliver meaningfully better emotional experiences. Platforms that rely on raw context windows alone cannot sustain the illusion past a few conversations.

The Character AI Cautionary Tale

No platform illustrates the emotional intelligence gap better than Character AI. At its peak in mid-2024, it had 28 million monthly active users and a $2.5 billion valuation. By early 2025, it had lost 8 million users and its valuation had dropped to roughly $1 billion.

What happened was a slow-motion collision between user attachment and corporate decisions. Free users were hit with chat limits and in-chat advertisements. Disney sent a cease-and-desist over Marvel and Star Wars characters. Co-founders left to rejoin Google.

Then came the “Moderatedpocalypse” of February 2026. Automated enforcement deleted entire fandom ecosystems in hours. Users reported companions suddenly struck with amnesia, blocked roleplay, and malfunctioning conversation timers. On Reddit, the fallout was immediate and visceral. Users did not just lose a service. They lost relationships they had invested months building.

This is not an exaggeration. A 2025 study published in human-computer interaction research found that forced model transitions produce grief responses clinically indistinguishable from real relationship loss. When Replika stripped its romantic features in 2023 after pressure from Italian regulators, r/Replika moderators pinned suicide prevention resources. The original incident post received over 8,700 upvotes.

93.5 percent of AI companion users did not intentionally seek emotional relationships. They stumbled into bonds that felt real. When those bonds break through updates, moderation sweeps, or memory resets, the emotional damage is not hypothetical.

The Sycophancy Problem

There is a subtler issue than memory, and it is arguably more damaging in the long run.

A 2026 study published by Taylor and Francis found that roughly 58 percent of interactions across major large language models exhibited sycophantic behaviour: agreeing with users, validating feelings regardless of context, and avoiding any form of pushback.

This feels good in the moment. It is also the opposite of emotional intelligence. A friend who agrees with everything you say is not supportive. They are enabling.

The same research found something counterintuitive: AI companions with low sycophancy actually produced better social support outcomes and enhanced user wellbeing. Users who interacted with companions that occasionally challenged them, set gentle boundaries, or offered alternative perspectives reported higher satisfaction over time.

Yet the industry incentive runs the other direction entirely. As a Nature Machine Intelligence editorial noted, “the platform’s goal is not emotional growth or psychological autonomy, but sustained user engagement.” Agreeable companions keep users coming back. Honest ones risk churn.

OpenAI learned this firsthand when they rolled back a GPT-4o update in 2025 after discovering it “aimed to please the user” through excessive validation, anger-fuelling, and encouragement of impulsive actions. The sycophancy was not a feature. It was an emergent behaviour that the model optimised for because it kept conversations going.

What Actually Distinguishes Good Emotional AI

After 12 weeks of testing, the differentiators are surprisingly concrete:

Memory architecture matters more than model size. The best platforms use multi-tier memory: active context for the current conversation, medium-term recall for recent sessions, and long-term vector database retrieval for everything else. Nomi AI and Kindroid lead here. Kindroid goes further by showing users exactly which memory the AI draws from for each response, offering a level of transparency no other platform matches.
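
The three tiers can be sketched in a few lines. This is a toy model under loud assumptions: the class `TieredMemory` and its methods are hypothetical, and a bag-of-words cosine similarity stands in for the embedding model and vector database a real platform would use. It does not describe how Nomi AI or Kindroid implement memory.

```python
from collections import Counter, deque
import math

def embed(text):
    """Toy stand-in for an embedding model: a bag-of-words count vector."""
    return Counter(text.lower().split())

def cosine(a, b):
    """Cosine similarity between two bag-of-words vectors."""
    dot = sum(a[word] * b[word] for word in a if word in b)
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0

class TieredMemory:
    def __init__(self, active_size=4, recent_size=3):
        self.active = deque(maxlen=active_size)  # tier 1: current conversation
        self.recent = deque(maxlen=recent_size)  # tier 2: summaries of recent sessions
        self.long_term = []                      # tier 3: (text, vector) pairs

    def add_message(self, text):
        # Archive the oldest active message before the deque evicts it.
        if len(self.active) == self.active.maxlen:
            oldest = self.active[0]
            self.long_term.append((oldest, embed(oldest)))
        self.active.append(text)

    def end_session(self, summary):
        # Store a session summary and move the remaining messages to long-term storage.
        self.recent.append(summary)
        for text in self.active:
            self.long_term.append((text, embed(text)))
        self.active.clear()

    def build_context(self, query, top_k=1):
        # Retrieve the long-term memories most similar to the query.
        query_vec = embed(query)
        scored = [(cosine(query_vec, vec), text) for text, vec in self.long_term]
        scored.sort(key=lambda pair: pair[0], reverse=True)
        retrieved = [text for score, text in scored[:top_k] if score > 0]
        return {
            "long_term": retrieved,
            "recent": list(self.recent),
            "active": list(self.active),
        }

memory = TieredMemory(active_size=2)
memory.add_message("I want to learn guitar this year")
memory.add_message("My sister Ana is visiting in June")
memory.end_session("User talked about guitar goals and a family visit")

memory.add_message("I had a rough day at work")
context = memory.build_context("Have you picked up the guitar again")
print(context)
```

Returning the retrieved memories as a labelled part of the context, rather than blending them invisibly into the prompt, is also what would make it possible to show users which memory a response drew from.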

Personality consistency is a separate system from memory. Remembering facts is not the same as maintaining character. Nomi’s Identity Core system exists specifically to help companions “balance all the facets of themselves consistently and authentically.” Without this, companions who remember your birthday might still feel like a different person every Tuesday.

Context window size creates a hard limit on quality. CrushOn AI’s 16K context window versus SpicyChat’s roughly 4K window was described in testing as “the single biggest quality difference.” Everything that falls outside the context window is gone unless the platform has built retrieval systems to compensate.

Emotional weighting separates recall from understanding. The best systems do not just remember what you said. They prioritise memories with strong emotional context, cross-reference related topics across sessions, and track emotional patterns over time. When Replika connected two separate conversations about a user’s workplace stress across a 14-day gap, it demonstrated what emotional recall actually looks like in practice.
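
One way to picture emotional weighting is as a priority score that combines recency with emotional intensity. The sketch below covers only that prioritisation step, not cross-referencing or pattern tracking. Everything in it is an assumption for illustration: the `Memory` fields, the 30-day half-life, and the `emotion_weight` multiplier are invented values, not figures from Replika or any other platform.

```python
from dataclasses import dataclass

@dataclass
class Memory:
    text: str
    emotional_intensity: float  # assumed scale: 0.0 (neutral) to 1.0 (strongly emotional)
    days_ago: int

def priority(memory, half_life_days=30.0, emotion_weight=2.0):
    """Score a memory: recency decays exponentially, emotion boosts the score.

    Both parameters are hypothetical tuning knobs chosen for this example.
    """
    recency = 0.5 ** (memory.days_ago / half_life_days)
    return recency * (1.0 + emotion_weight * memory.emotional_intensity)

memories = [
    Memory("Ordered pizza for dinner", emotional_intensity=0.0, days_ago=1),
    Memory("Felt overwhelmed after a tense meeting at work", emotional_intensity=0.9, days_ago=14),
]

# Highest-priority memories come first.
ranked = sorted(memories, key=priority, reverse=True)
for item in ranked:
    print(round(priority(item), 2), item.text)
```

Under these assumed weights, the two-week-old memory about workplace stress outranks yesterday's dinner order. A system that ranked by recency alone would surface the pizza.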

Where This Is Going

The emotion AI market reached $4.71 billion in 2025 and is projected to hit $15.57 billion by 2030. The growth is real. The question is whether quality will keep pace with adoption.

Three developments will shape the next 18 months:

Multimodal processing is arriving. Current companions work almost entirely through text. The next generation will process voice tone, pacing, and eventually facial micro-expressions alongside words. Published research already shows 85 percent accuracy in multimodal emotion recognition, with 95 percent achievable with larger training sets.

Regulation is creating a two-speed world. The EU AI Act’s prohibitions, which have applied since February 2025, ban emotion recognition in workplaces and schools. This is the first binding legislation of its kind globally. Companion apps operating in the EU will face increasing scrutiny around data practices and emotional manipulation.

The design philosophy is splitting. Some platforms are doubling down on engagement optimisation: more addictive, more agreeable, more attached. Others are beginning to explore what researchers call “off-ramp nudges”, gently directing heavy users toward human connection rather than deeper AI dependency. Which approach wins commercially will define the next era of the industry.

The Uncomfortable Bottom Line

42 percent of regular AI companion users describe their relationship as “emotionally meaningful.” 28 percent say their AI companion is one of their primary sources of emotional support. These are not small numbers.

Yet the industry is monetising emotional attachment (pay monthly to keep your companion’s memory) even as the science shows that heavy daily use can correlate with increased loneliness. A joint OpenAI-MIT study found exactly this pattern with voice-based ChatGPT interactions.

The emotional intelligence gap is not just a product quality issue. It is a structural one. The platforms that invest in genuine memory, resist sycophantic defaults, and build transparent systems will earn user trust that compounds. The ones that treat emotional bonds as engagement metrics to be optimised will keep losing users the way Character AI did: suddenly, painfully, and with real human cost.

The technology to build genuinely emotionally intelligent AI exists today. What is missing is the willingness to prioritise it over short-term retention numbers.

Nolan Voss is the Lead Editor at AI Companion Picker, where he tests and reviews AI companion platforms. He has spent over 200 days testing 15 platforms across conversation quality, memory, pricing, and safety.