Authority Is What Actually Determines Risk

AI governance is advancing in visible ways. Frameworks are maturing, model evaluation is becoming more rigorous, and organizations are investing heavily in safety, bias mitigation, and compliance. These efforts reflect real progress, but they do not explain outcomes. If anything, failures are becoming harder to explain. Systems that are tested and governed still produce outcomes that fall outside what was anticipated, often in ways that appear anomalous but are, in fact, structurally predictable.

The issue is not what these systems are designed to do. It is what they are actually able to do once deployed.

That difference is authority. Authority determines what actions a system can execute, what resources it can influence, and what decisions it can finalize without escalation. Two systems with identical models can produce entirely different outcomes depending on the authority they are granted, even when their behavior appears equivalent in testing.

This shift is beginning to reshape how advanced systems are designed. At Cyber Terrain, this is addressed through Authority Architecture, which defines how decision authority, execution authority, and termination authority are structured across systems rather than inferred from controls or policy. This perspective reflects a broader recognition that governance must account for what systems can actually do, not just how they perform under evaluation.

Existing governance and security models were not built for this reality. Governance frameworks evaluate system behavior, focusing on outputs such as accuracy, safety, and bias. Security models assess exposure by mapping access, vulnerabilities, and attack paths. Both approaches remain necessary, but they share a common assumption: that constraining inputs or limiting access constrains outcomes. In modern AI systems, that assumption no longer holds.

The structure of these systems has changed. AI no longer operates as a bounded component but as part of an interconnected environment composed of tools, APIs, identity systems, infrastructure layers, and policy engines. The behavior of the system is no longer defined by the model alone, nor by the perimeter around it. It is defined by what the system can execute across these connected domains. As a result, the primary determinant of risk shifts from capability to authority.

Authority rarely expands through explicit design. It expands through integration. A system connected to an identity provider gains the ability to create or modify accounts. Access to a cloud control plane introduces the ability to provision or alter infrastructure at scale. Integration with financial systems enables the initiation of transactions, while policy engines allow enforcement conditions to be applied or bypassed across environments. These are standard features of modern architectures, implemented for capability and efficiency rather than governance intent.

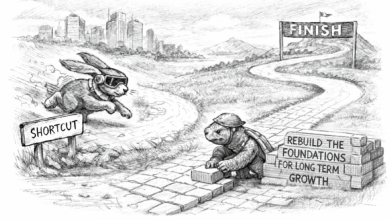

Over time, these integrations accumulate. Each individual connection appears bounded and reasonable, but collectively they form a structure in which systems hold more authority than originally anticipated. This accumulation is rarely modeled explicitly. It emerges as a byproduct of design decisions made across different teams, systems, and timeframes. Without a unified view of how authority is distributed, organizations operate with an incomplete understanding of their own risk posture.
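The accumulation described above can be sketched in a few lines. This is a minimal illustration, not a description of any real product: the `Integration` and `System` classes, the team names, and the action strings such as `account.create` are all hypothetical, chosen to mirror the integrations listed earlier. The sketch treats a system's effective authority as the union of the actions granted by each integration, and shows that each owning team sees only its own slice.

```python
from __future__ import annotations

from dataclasses import dataclass, field


@dataclass(frozen=True)
class Integration:
    """One connection added to a system (hypothetical model)."""
    name: str
    team: str                 # team that approved the integration
    actions: frozenset[str]   # actions this integration makes possible


@dataclass
class System:
    name: str
    integrations: list[Integration] = field(default_factory=list)

    def connect(self, integration: Integration) -> None:
        self.integrations.append(integration)

    def effective_authority(self) -> set[str]:
        """Union of every action reachable through any integration."""
        actions: set[str] = set()
        for integration in self.integrations:
            actions |= integration.actions
        return actions

    def authority_by_team(self) -> dict[str, set[str]]:
        """The partial view each team has when it reviews only its own work."""
        view: dict[str, set[str]] = {}
        for integration in self.integrations:
            view.setdefault(integration.team, set()).update(integration.actions)
        return view


agent = System("support-agent")
agent.connect(Integration("identity-provider", "IT",
                          frozenset({"account.create", "account.modify"})))
agent.connect(Integration("cloud-control-plane", "Platform",
                          frozenset({"infra.provision", "infra.alter"})))
agent.connect(Integration("payments", "Finance",
                          frozenset({"transaction.initiate"})))
agent.connect(Integration("policy-engine", "Security",
                          frozenset({"policy.apply", "policy.bypass"})))

total = agent.effective_authority()
largest_team_view = max(len(a) for a in agent.authority_by_team().values())
print(f"total actions: {len(total)}, largest single-team view: {largest_team_view}")
```

No single team's view contains more than two actions here, yet the system as a whole holds seven. The unified view only exists if someone computes the union.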

This is where existing models begin to break. Security emphasizes exposure, mapping what can be reached within a system. Governance emphasizes behavior, evaluating what a system produces under expected conditions. Neither directly models consequence. Limiting exposure reduces the likelihood of access, but it does not determine the scale of impact once access is achieved. Systems with minimal attack surface can still enable high-consequence outcomes if authority is concentrated behind those access points.

Attack surface shows what can be reached. Authority surface determines what can be changed.
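The contrast between the two surfaces can be made concrete with a small sketch. The `EntryPoint` and `Action` types and every name in the example data are assumptions made for illustration. Attack surface is modeled here simply as the set of reachable entry points, and authority surface as the set of state-changing actions behind them; real environments would need a richer model.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass(frozen=True)
class Action:
    name: str
    changes_state: bool  # True if the action modifies something, False if read-only


@dataclass(frozen=True)
class EntryPoint:
    name: str
    actions: tuple[Action, ...]


def attack_surface(entry_points: list[EntryPoint]) -> set[str]:
    """What can be reached: the entry points themselves."""
    return {ep.name for ep in entry_points}


def authority_surface(entry_points: list[EntryPoint]) -> set[str]:
    """What can be changed: state-changing actions behind those entry points."""
    return {
        action.name
        for ep in entry_points
        for action in ep.actions
        if action.changes_state
    }


# One entry point, with concentrated authority behind it.
narrow = [
    EntryPoint("admin-api", (
        Action("infra.provision", True),
        Action("account.modify", True),
        Action("transaction.initiate", True),
        Action("status.read", False),
    )),
]

# Three entry points, all read-only.
wide = [
    EntryPoint("docs-site", (Action("page.read", False),)),
    EntryPoint("search", (Action("index.query", False),)),
    EntryPoint("status-page", (Action("status.read", False),)),
]

for label, system in (("narrow", narrow), ("wide", wide)):
    print(label, len(attack_surface(system)), len(authority_surface(system)))
```

In this toy data the system with the smaller attack surface has the larger authority surface, which is the case that exposure-based models do not capture.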

The distinction becomes more pronounced as AI systems become more autonomous. Agent-based architectures, tool orchestration, and API-driven workflows reduce the distance between decision and execution. Authority is not granted in a single step but accumulates incrementally through integrations. A system may begin with limited scope, but through connections to identity infrastructure, cloud APIs, policy engines, and external services, it gains the ability to influence multiple domains. Each addition appears minor in isolation, yet the resulting authority expands nonlinearly.

This creates a compounding effect. Small increases in access produce disproportionate increases in consequence. A model that generates recommendations becomes a system that executes actions. A tool that retrieves information becomes a pathway to modifying state. These transitions are rarely treated as changes in authority, even though they fundamentally alter what the system is capable of doing in the real world. Without explicit modeling, this expansion remains largely invisible until it is exercised.

The persistence of this gap is not due to a lack of governance effort. It reflects the limitations of the models currently being applied. Governance frameworks evolved to evaluate systems, not to model authority as a system property. As systems extend across domains, authority flows through identity, infrastructure, policy, and execution layers in ways that are not captured by traditional approaches. Governance that does not follow that flow will consistently lag behind system behavior.

Authority Architecture addresses this by defining how authority is structured rather than inferred. It distinguishes between decision authority, execution authority, and termination authority, and makes these explicit within system design. Authority Surface mapping extends this further by identifying the actual consequence pathways available across integrated environments. Together, these approaches shift governance from evaluating behavior to designing consequence.
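As a rough sketch of what making these three kinds of authority explicit might look like in code, the example below records each grant as data and checks it directly. The text does not define the three terms precisely, so the reading used here is an assumption: decision authority is the right to choose an action, execution authority is the right to carry it out, and termination authority is the right to stop it. The `Grant` and `AuthorityMap` types, the holder names, and the scopes are hypothetical and do not describe how Authority Architecture or Fulcrum is implemented.

```python
from __future__ import annotations

from dataclasses import dataclass
from enum import Enum


class Authority(Enum):
    DECISION = "decision"        # assumed meaning: may choose an action
    EXECUTION = "execution"      # assumed meaning: may carry an action out
    TERMINATION = "termination"  # assumed meaning: may stop an action


@dataclass(frozen=True)
class Grant:
    holder: str
    authority: Authority
    scope: str


class AuthorityMap:
    """Explicit record of who holds which authority over which scope."""

    def __init__(self, grants: list[Grant]) -> None:
        self._grants = set(grants)

    def allows(self, holder: str, authority: Authority, scope: str) -> bool:
        # Nothing is inferred: an authority exists only if it was granted.
        return Grant(holder, authority, scope) in self._grants

    def scopes_without_termination(self) -> set[str]:
        """Scopes where something can execute but nothing can stop it."""
        executable = {g.scope for g in self._grants
                      if g.authority is Authority.EXECUTION}
        stoppable = {g.scope for g in self._grants
                     if g.authority is Authority.TERMINATION}
        return executable - stoppable


authority_map = AuthorityMap([
    Grant("refund-agent", Authority.DECISION, "refunds"),
    Grant("payments-service", Authority.EXECUTION, "refunds"),
    Grant("finance-oncall", Authority.TERMINATION, "refunds"),
    Grant("provisioning-agent", Authority.DECISION, "infra"),
    Grant("provisioning-agent", Authority.EXECUTION, "infra"),
])

print(authority_map.allows("refund-agent", Authority.EXECUTION, "refunds"))
print(authority_map.scopes_without_termination())
```

Keeping the three kinds separate lets a design review ask structural questions, such as whether one holder both decides and executes in a scope, or whether any scope lacks a holder who can terminate.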

This perspective is already influencing how advanced platforms are built. Systems such as Fulcrum from Cyber Terrain focus on measuring and controlling authority across enterprise environments, recognizing that risk emerges not from isolated vulnerabilities but from the structure of what systems are able to execute. By mapping authority directly, these approaches allow organizations to understand where consequence can occur before it is triggered.

The implication is straightforward but significant. Organizations are not failing to govern AI. They are governing the wrong layer. As systems become more integrated and autonomous, the gap between capability and consequence will continue to widen unless authority is explicitly designed and constrained.

AI governance is often framed as a problem of control. In practice, it is a problem of structure. Control mechanisms operate on top of systems, while authority is embedded within them. Systems do not behave according to policy. They behave according to the authority embedded within their design.

Authority is where capability becomes consequence.

Until that authority is explicitly defined (what systems can do, where they can act, and under what conditions), risk will remain only partially understood.

Authority defines possibility.