‘Corporate governance’ is not a term generally associated with rapid change. It is more often seen as a slow-moving machine, defined by tradition, caution and an emphasis on long-term stewardship rather than short-term disruption.

Artificial intelligence has changed that perception in a very short space of time. Slow-turning tankers have been forced to become speedboats, navigating the choppy waters of AI while approving often costly and loosely defined experiments in the name of better oversight, streamlined processes and enhanced strategic insight.

While this brave new world represents a decisive change of pace, the speed of deployment has consequences. The race to “do something with AI” means experimentation often runs ahead of governance, throwing caution to the wind and exposing organisations to a new suite of risks – particularly around regulation, accountability and oversight.

The expertise gap

This raises a more fundamental question: do boards have the right leadership to take organisations into the age of AI?

There is no doubt that the pressures of disruptive forces like AI have prompted boards to seek more directors with “T-shaped” expertise – leaders who have a breadth of experience across business disciplines and depth in at least one specialised area, such as AI or cyber. Yet despite this progress, there remains an endemic lack of AI – or really any technology – expertise at board level.

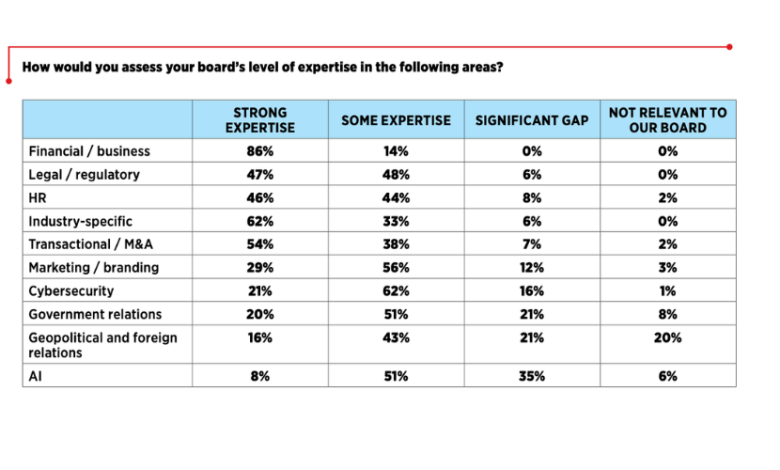

Our recent report, What Directors Think, which surveyed leaders at the world’s largest companies on their priorities for the year ahead, found that just eight per cent of directors say their boards have strong AI expertise – the lowest level of expertise across all areas surveyed.

Given that AI is forcing a fundamental change in how governance itself works, this gap is not merely uncomfortable. It is a material risk.

From retroactive to predictive oversight

For decades, corporate governance has been largely retroactive. Boards have reviewed performance after the fact, assessed incidents once damage has occurred, approved disclosures on historical data, and responded to risks only once they have already materialised. This model may have served well when systems were generally stable and change was more incremental. It is far less effective in highly dynamic environments defined by speed, complexity, uncertainty and compounding risk.

AI can help boards move toward a forward-looking posture, where decisions are informed by probabilistic forecasts, scenario modelling and early-warning indicators rather than historical reporting alone. In practice, this means boards must learn not just to receive information, but to interpret uncertainty – and to govern based on what might happen, not simply what already has.

This shift matters most in domains where risk is cumulative, systemic and slow-burning, yet too complex and dynamic to be managed solely within annual review cycles. By synthesising vast volumes of regulatory, operational, financial and environmental data, AI gives boards continuous, forward-looking oversight, whether of particular risks, leadership transitions or economic uncertainty. Decisions can be stress-tested in near real time, rather than through static, narrative-driven scenarios.

Viewed through a governance, risk and compliance lens, this is not about prediction for its own sake. It is about traceability. When AI is used to inform planning, boards are better able to demonstrate how decisions were made, which assumptions were tested, and how trade-offs were assessed. That matters to regulators, investors and, increasingly, courts.

In this sense, AI can do more than support strategy development. It can help boards determine whether they are adequately governing the future rather than simply explaining the past.

When inaction becomes a liability

The uncomfortable truth is that once AI-driven foresight becomes available, choosing not to use it becomes a governance decision in its own right – and one that external stakeholders are increasingly unwilling to overlook. As a recent guest on our podcast put it succinctly: “It’s probably three to five years before it would actually be a violation of our duties as General Counsel not to use AI.”

This reality is already reshaping boardroom dynamics. Directors consistently say they want to spend less time reviewing information and more time engaging in strategic discussion. AI, when properly governed, can automate large parts of reporting, monitoring and synthesis – freeing boards to focus on judgement, challenge and direction rather than data processing.

That is why “deploying AI technology across the business” now ranks among directors’ top strategic priorities, beaten only by M&A in our latest research. But deployment without understanding is not transformation; it is exposure.

The challenge for boards is no longer whether AI will shape the future of corporate governance. It already is. The real question is whether governance structures, leadership capability and oversight models are evolving quickly enough to keep pace – or whether boards risk being governed by systems they do not fully understand.