The enterprise AI conversation has shifted. With 64% of enterprises already experimenting with agentic AI, the question is no longer whether to adopt AI agents but how to deploy autonomous systems that reason over sensitive operational data without losing control of that data in the process.

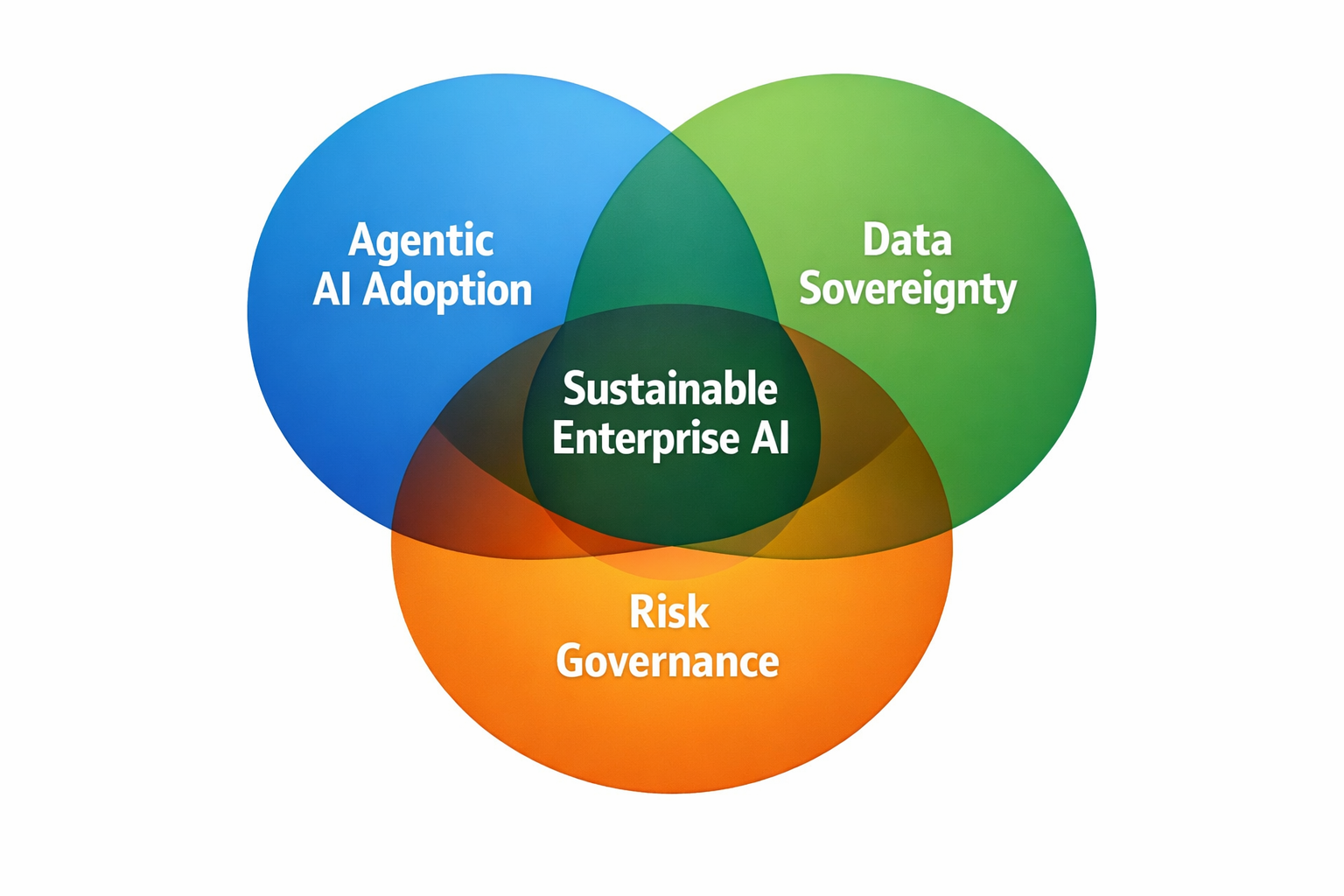

According to Info-Tech Research Group’s AI Trends 2026 report, 72% of IT leaders now list data sovereignty and regulatory compliance as their top AI-related challenge, up from 49% the previous year. The power of agentic AI is inseparable from the risks it introduces around data persistence, residency, and governance. The organizations that solve this tension first will define the next era of enterprise AI.

The Adoption Gap Is Real — and Growing

The numbers tell a contradictory story. Nearly half of IT leaders plan to increase AI-related budgets by 20% or more in 2026. Yet a Camunda study found that while 71% of organizations claim to use AI agents, only 11% of agentic use cases have actually reached production.

That gap is not primarily a technology problem. The models are capable and the frameworks exist. The bottleneck is trust — the willingness of security-conscious organizations to route sensitive operational data through third-party cloud infrastructure they do not control. Unlike the early cloud migration era, AI agents are not just storing data. They are reasoning over it, remembering it across sessions, and taking autonomous actions based on what they learn. The stakes around data exposure have escalated accordingly.

Sovereignty Is No Longer a Compliance Checkbox

For much of the past decade, data sovereignty was a regulatory obligation — something organizations addressed to satisfy GDPR audits or meet cross-border data transfer requirements. It lived in the compliance department, not in the CTO’s strategic roadmap.

That is changing. As AI agents become deeply integrated into business systems, sovereignty is expanding beyond where data is stored to encompass how it is processed and whether the organization retains control over the intelligence derived from it. GenAI services in public cloud environments can retain whatever they are given at very large scale: prompts, documents, and conversation history may all persist well beyond a single session. When an agent ingests months of internal communications and operational telemetry, what happens to that accumulated knowledge becomes a genuine strategic risk.

This is why the Info-Tech report names sovereignty, alongside risk management and agentic automation, as one of the three defining forces for enterprise AI in 2026.

Local-First Agent Runtimes Change the Equation

A new architectural pattern is emerging that directly addresses this tension. Rather than sending data to cloud-hosted AI platforms, local-first agent runtimes execute entirely on infrastructure the organization controls — an on-premises server, a developer workstation, or a private network device.

The distinction from traditional scripting is significant. Modern local-first runtimes support persistent memory across sessions, scheduled and event-driven execution, composable tool integrations, and built-in safety guardrails that scope what actions an agent can take without human approval.
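To make the guardrail idea concrete, here is a minimal sketch of how a runtime might scope agent actions. It is illustrative only: the `Guardrail` class, the `run_action` helper, and the action names are hypothetical and are not taken from any particular runtime's API. The sketch assumes a default-deny policy in which a few actions run unattended and a few more require a human to approve them.

```python
from __future__ import annotations

from dataclasses import dataclass, field
from typing import Callable, Optional


@dataclass
class Guardrail:
    """Hypothetical policy object: which actions run unattended, which need approval."""

    auto_allowed: set[str] = field(default_factory=set)
    needs_approval: set[str] = field(default_factory=set)

    def decide(self, action: str) -> str:
        # Actions on the unattended list run without asking anyone.
        if action in self.auto_allowed:
            return "allow"
        # Actions on the approval list are paused for a human decision.
        if action in self.needs_approval:
            return "ask_human"
        # Anything not explicitly listed is refused (default-deny).
        return "deny"


def run_action(
    policy: Guardrail,
    action: str,
    approver: Optional[Callable[[str], bool]] = None,
) -> bool:
    """Return True if the action may proceed under the policy."""
    decision = policy.decide(action)
    if decision == "allow":
        return True
    if decision == "ask_human" and approver is not None:
        # The approver callback stands in for a real human-in-the-loop prompt.
        return bool(approver(action))
    return False


if __name__ == "__main__":
    policy = Guardrail(
        auto_allowed={"read_logs"},
        needs_approval={"restart_service"},
    )
    for name in ("read_logs", "restart_service", "drop_database"):
        print(name, "->", policy.decide(name))
```

The default-deny branch is the design choice that matters here: an agent that acquires a new tool cannot use it until someone deliberately adds that action to one of the two lists.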

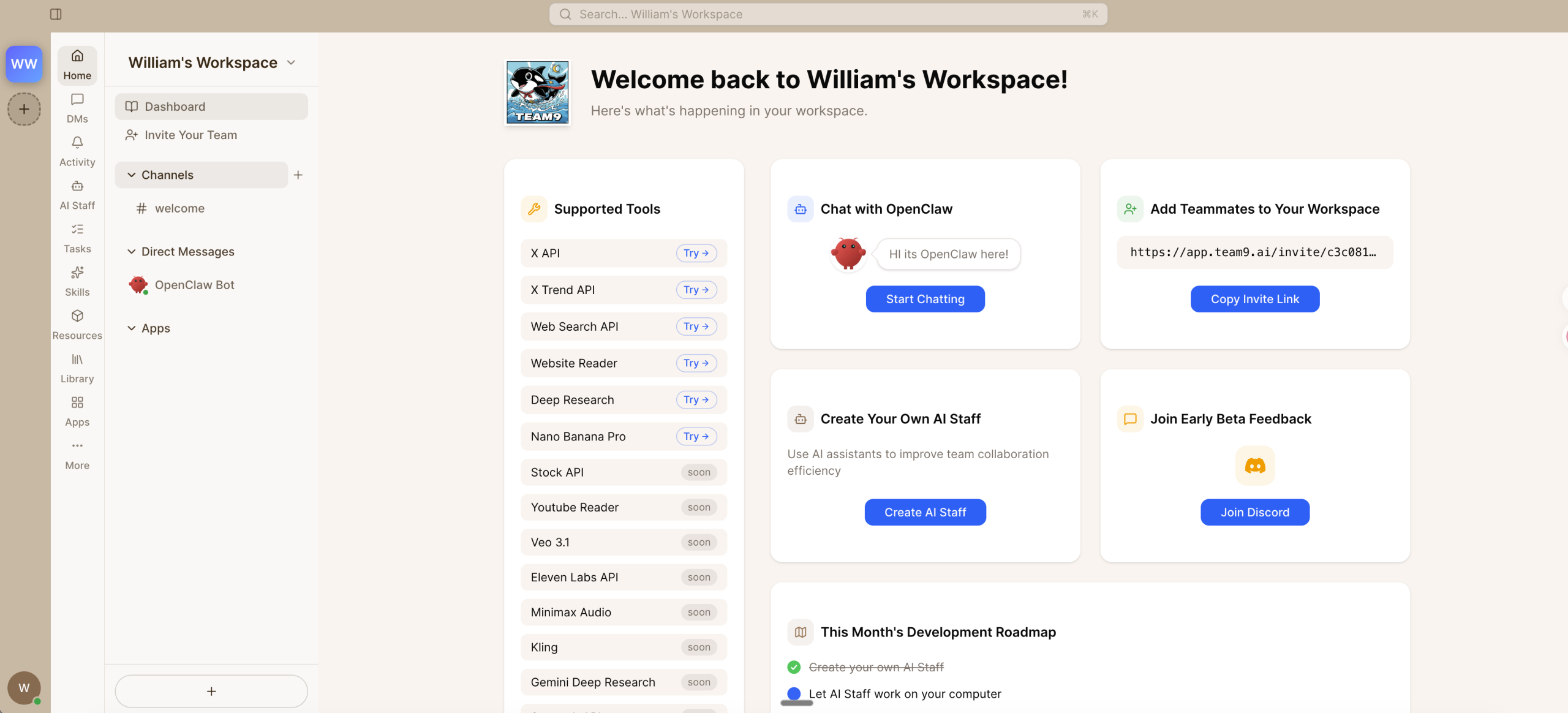

OpenClaw, an open-source agent runtime built on this architecture, illustrates how mature the model has become. Agents maintain long-term context stored as transparent, inspectable Markdown files rather than opaque cloud databases. Every piece of operational memory remains on hardware the organization owns — no API metering, no data leaving the perimeter, no ambiguity about who controls the intelligence the agent accumulates.
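As a rough illustration of what inspectable, file-based memory can look like, the sketch below appends agent notes to a plain Markdown file on local disk and reads the topic headings back. It does not reproduce OpenClaw's actual file layout or API: the function names, the heading-per-entry format, and the file path are all assumptions made for the example.

```python
from datetime import datetime, timezone
from pathlib import Path
from typing import List, Optional


def append_memory(
    path: Path, topic: str, note: str, now: Optional[datetime] = None
) -> None:
    """Append one note to a local Markdown memory file (hypothetical format)."""
    now = now or datetime.now(timezone.utc)
    stamp = now.strftime("%Y-%m-%d %H:%M UTC")
    # Create the containing directory on first use.
    path.parent.mkdir(parents=True, exist_ok=True)
    with path.open("a", encoding="utf-8") as f:
        # One second-level heading per entry, followed by a timestamped bullet.
        f.write(f"## {topic}\n\n- {stamp}: {note}\n\n")


def read_topics(path: Path) -> List[str]:
    """Return the topic headings in the order they were written."""
    if not path.exists():
        return []
    lines = path.read_text(encoding="utf-8").splitlines()
    return [line[3:].strip() for line in lines if line.startswith("## ")]


if __name__ == "__main__":
    memory_file = Path("agent_memory") / "ops.md"  # assumed local path
    append_memory(memory_file, "Nightly backup", "Job overran its window by 12 minutes.")
    print(read_topics(memory_file))
```

Because the store is ordinary text, it can be reviewed in an editor, searched with standard tools, and put under version control like any other file the organization owns.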

This matters because sovereignty is not just about raw data. It is about derived intelligence — the patterns and institutional knowledge an agent builds over time. When that intelligence lives in a third-party cloud, the organization has effectively outsourced a layer of its own operational understanding.

The Deployment Barrier Is Disappearing

The historical objection to self-hosted AI infrastructure has been complexity. Configuring tool integrations, hardening security, and maintaining the stack demanded engineering effort most organizations could not justify for an initial deployment.

That barrier is eroding quickly. Team9 AI Workspace eliminates the setup burden with plug-and-play agent deployment — no manual runtime installation, no adapter wiring, no security hardening checklist. The overhead that once made self-hosted AI impractical has been compressed into minutes rather than weeks.

Organizations no longer need to choose between self-hosted control and cloud convenience. They can start with a single use case — automated daily briefings, alert triage, or change risk assessment — run it on their own infrastructure, and expand as agents demonstrate value.

The Enterprise AI Landscape Is Splitting in Two

The enterprise AI market is diverging along a fundamental architectural line. Cloud-native platforms will continue to serve organizations whose data sensitivity permits external processing. But a growing segment — particularly in regulated industries, government, and critical infrastructure — will demand local-first architectures that keep data, models, and derived intelligence entirely within their perimeter.

As agentic AI moves from experimentation to production, the question of who controls the data and intelligence these agents generate will only become more consequential. The organizations that invest now in sovereign, local-first agent infrastructure are not making a defensive play. They are building the foundation for AI adoption that scales without accumulating governance debt — a competitive advantage that compounds over time.