The Gemini 3 Flash API sits at an interesting intersection: it brings pro-level reasoning into a model built for speed. For developers who have had to choose between raw intelligence and low latency, that tradeoff is finally worth revisiting.

What Makes the Gemini 3 Flash API a Breakthrough for Developers

Pro-Level Reasoning at Flash Speed — How Gemini 3 Flash Balances Intelligence and Low Latency

Most fast models sacrifice depth to hit their latency targets. Gemini 3 Flash takes a different approach — it pairs the reasoning quality you’d expect from a Pro-tier model with the response speed the Flash series is known for. That means you can handle complex analytical tasks, multi-step knowledge queries, and structured reasoning chains without waiting on slow inference times. For production applications where users expect near-instant responses, this balance matters more than raw benchmark scores.

Multimodal Understanding and Coding Capabilities That Expand What You Can Build

Gemini 3 Flash supports text, images, and video as native inputs — not as an afterthought. This makes it practical for applications that need to process mixed content types in a single request. On the coding side, the model performs well across code generation, debugging, and agent workflows, which makes it a solid fit for developer tooling and automated pipelines. The combination of multimodal input handling and strong code performance opens up a wider class of problems than most flash-tier models can realistically address.

Gemini 3 Flash API Pricing: Official Costs and What to Know Before You Integrate

Breaking Down the Official Gemini 3 Flash API Cost Per Million Tokens

Google’s official Gemini 3 Flash API pricing is structured around input type and output volume. Text, image, and video inputs run at $0.50 per million tokens. Audio input is priced higher at $1.00 per million tokens. Output tokens cost $3.00 per million. For moderate usage, these rates are reasonable. At scale — think millions of daily requests across an agent pipeline or document processing system — the output cost in particular can add up quickly. Anyone planning a high-volume integration should model their token usage carefully before committing to the official tier.

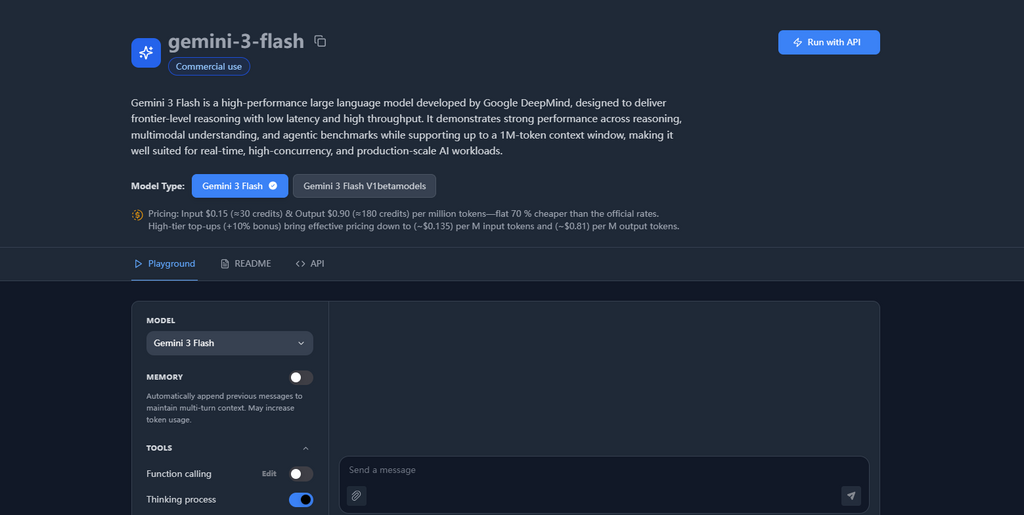

How Alternative API Providers Offer Gemini Flash 3 API Access at a Fraction of the Price

Third-party providers that resell access to the same underlying model can offer significantly lower rates. The Gemini Flash 3 API through Kie, for example, prices input tokens at $0.15 per million and output tokens at $0.90 per million — a reduction of roughly 70% compared to Google’s official pricing. For startups or teams running high-throughput workloads, that difference is meaningful. The API interface stays compatible, so switching or testing an alternative provider doesn’t require rewriting your integration. Just verify rate limits and SLA terms before you move production traffic.

Where the Gemini-3-Flash API Delivers the Most Real-World Value

Agent Workflows, Document Analysis, and Complex Reasoning Tasks That Benefit Most

The Gemini 3 Flash API handles multi-step agent tasks well because it can maintain context across long inputs while still responding quickly enough to keep workflows moving. Document analysis is another strong use case — feeding in dense reports, contracts, or research papers and getting structured, accurate summaries or extractions. The model’s reasoning depth means it doesn’t just pattern-match; it can interpret ambiguous language and make logical inferences, which matters when you’re processing real-world documents rather than clean, formatted data.

Visual QA, Video Analysis, and Gemini 3 Flash Thinking for Time-Sensitive Applications

For applications that need to process visual content on a tight timeline, Gemini 3 Flash handles visual question answering and video analysis without requiring separate model calls for different modalities. Gemini 3 Flash Thinking extends this further — it applies more deliberate reasoning to problems that require it, while still keeping latency within acceptable bounds for interactive applications. This makes it practical for use cases like real-time content moderation, visual data extraction, and any scenario where a user uploads media and expects a fast, intelligent response.

Conclusion

The Gemini 3 Flash API covers a lot of ground: fast inference, strong reasoning, multimodal inputs, and solid coding performance in a single model. Whether you’re building agent pipelines, document tools, or visual applications, it handles more complexity than its “flash” label might suggest. Pricing is manageable at small scale, and alternative providers bring the cost down substantially for high-volume use. If you’ve been waiting for a model that doesn’t force you to choose between speed and intelligence, the gemini-3-flash API is worth testing in your next project.