The clip was short. The result was… 53M+ views. What made it possible was not a magic prompt. It was an AI video workflow built for speed and repetition, the kind you can run on a random Tuesday without needing a full production crew. The person who engineered that system for us was Edwin Disla, the CMO at Domepeace. He treated the whole thing like an operator would: reduce friction, tighten decisions, ship more reps.

At a practical level, this is how we think about an AI video process when the goal is momentum, not perfection. A few AI tools help us move faster through the tedious parts, but the real lever is the workflow itself: the way we pick an idea, shape the first second, cut the dead air, and then learn from what the platform tells us.

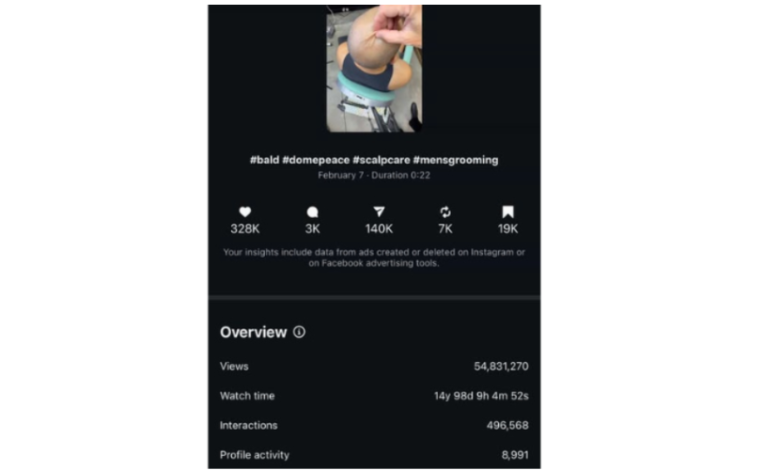

The 53M View Case Study, and What It Proves About AI Video

The story moment is simple. The video opens with a hook that feels slightly “wrong” in the best way. An unnatural pull on bald skin. Quick. Visual. A little hypnotic. It makes your brain tap the brakes mid-scroll because it looks like something you should not be seeing, but you can’t look away either. That one second did the heavy lifting. People stopped, then they stayed, because the clip didn’t waste their attention. It earned it. And once the viewer was locked in, the reel pivoted into something oddly satisfying: a straightforward routine that made scalp care feel less like “work” and more like a ritual you can actually keep up with.

Now the proof, because “vibes” do not pay rent. The reel cleared 53M+ views, stacked massive watch time, and pulled real intent signals: shares, saves, and profile actions. That mix matters. Views alone can be a fluke. Shares mean, “This is worth sending.” Saves mean, “I want this later.” Profile activity means, “I want more of whatever this is.” Those are the metrics we care about most because they show the audience didn’t just watch, they did something after. We also made the routine obvious. In the middle of the reel, we showcased all four products and explained how to use them in sequence, then ended with a clean CTA to visit the website for the full routine. Not a hard sell. A simple next step, delivered while the viewer was still paying attention.

Here’s the real takeaway: AI didn’t “make it viral.” The platform made the call based on retention and engagement. What AI did for us was remove drag. It helped us move faster from idea to first cut, and it let us produce more reps without burning the team out. That’s the advantage most creators miss. AI is not the spark. It’s the treadmill. It lets you run more experiments, iterate on hooks, tighten clips, and ship again before the moment passes. And when you hit something that works, you’re ready to follow up immediately instead of starting from scratch.

Development Stage, How We Share Ideas and Pick the One That Ships Fast

This stage is where Edwin runs the show like an operator. We share ideas fast, we keep the team moving, and we treat every hook like a small bet. The goal is simple: find a first second that earns attention, then build the rest of the story behind it.

We actually tested way more than 25 hooks. Dozens. Enough that we started seeing patterns instead of opinions. Most of those tests still pulled real reach, typically 1.5K+ views, which is a useful floor. It means the platform is giving us a fair shot, and the concept is “watchable.” But it also tells you something important: getting to 1.5K is not the hard part. The hard part is finding the weird, sticky moment that makes people stop scrolling and keep watching.

AI is what lets that volume happen without chaos. We use AI-powered tools to generate batches of hook concepts, rewrite them into tighter visuals, and build quick variations without wasting time on perfection. That’s how we ended up testing hooks like a goldfish swimming in a bowl, the “ice block” scalp effect with an ice pick cracking it, and even a disgusting-looking ingrown hair concept. The point of those extremes was range. Edwin wanted to see what kind of tension the audience reacts to: curiosity, satisfaction, discomfort, or surprise.

After the testing sprint, we still narrow down the field, but the selection is more informed. We pick the few ideas that show the right signals early: strong hold in the first seconds, clean comprehension with the sound off, and a concept that matches our vision enough to build a routine around it. Then we commit to those winners, shoot the clean version, and scale iterations. That’s the development stage in real life, lots of swings, fast learning, and then decisive execution.
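If you want to make that narrowing step less of a gut call, the selection logic can be sketched in a few lines. This is a minimal illustration, not the tooling we actually ran: the `HookTest` fields, the 3-second hold metric, and every number below are hypothetical placeholders. The only value taken from our process is the 1.5K-view floor.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class HookTest:
    name: str
    views: int
    held_3s: int         # viewers still watching at 3 seconds (assumed metric)
    shares: int
    saves: int
    profile_visits: int

    @property
    def hold_rate(self) -> float:
        # Share of viewers who stayed past the opening seconds.
        return self.held_3s / self.views if self.views else 0.0

    @property
    def intent_rate(self) -> float:
        # Shares, saves, and profile visits per view.
        actions = self.shares + self.saves + self.profile_visits
        return actions / self.views if self.views else 0.0


def pick_winners(tests: List[HookTest], min_views: int = 1500, top_n: int = 3) -> List[HookTest]:
    # Drop tests below the reach floor, then rank by early hold, then intent.
    eligible = [t for t in tests if t.views >= min_views]
    ranked = sorted(eligible, key=lambda t: (t.hold_rate, t.intent_rate), reverse=True)
    return ranked[:top_n]


# Placeholder numbers for illustration only, not real results.
tests = [
    HookTest("goldfish bowl", 2000, 900, 10, 20, 10),
    HookTest("ice block scalp", 4000, 2400, 40, 80, 40),
    HookTest("ingrown hair", 1000, 800, 5, 5, 5),
    HookTest("skin pull", 5000, 3500, 100, 150, 100),
]
winners = pick_winners(tests, top_n=2)
print([w.name for w in winners])
```

Sorting on hold rate first and intent second mirrors the order we look at signals: a hook that cannot hold the first seconds never gets the chance to earn a share or a save. Sound-off comprehension and brand fit still need a human eye.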

Preproduction, Script, Scene, and the Video Creation Process

Preproduction is where the whole video creation process stops being an idea and becomes a simple plan you can execute fast. The script stays tight and structured: hook → proof → payoff → CTA. The hook is the scroll-stopper; the proof is a quick “here’s what this solves”; the payoff is the easy routine shown step-by-step; and the CTA is one clear next move. Scene planning is just as strict: the viewer sees the unusual visual first, then the scene shifts into the routine and product steps, then it closes with a clean recap. Technical choices get locked early, so nothing gets messy later: vertical aspect ratio, close camera framing that keeps the action centered, and simple lighting that avoids harsh glare on bald skin while still looking sharp and natural.
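One way to keep that plan honest is to write it down as data and check it before anyone generates a clip. The sketch below is an assumption-heavy illustration: the `Beat` and `ShotPlan` names, the 1080x1920 frame, the one-second hook limit, and the beat durations are all hypothetical choices, not settings from our actual shoot.

```python
from dataclasses import dataclass
from typing import List

BEAT_ORDER = ["hook", "proof", "payoff", "cta"]


@dataclass
class Beat:
    kind: str       # one of BEAT_ORDER
    seconds: float  # planned duration (placeholder values below)
    visual: str     # what the viewer sees


@dataclass
class ShotPlan:
    width: int
    height: int
    beats: List[Beat]

    def problems(self, max_hook_seconds: float = 1.0) -> List[str]:
        issues = []
        # Vertical framing means the frame is taller than it is wide.
        if self.height <= self.width:
            issues.append("frame is not vertical")
        kinds = [b.kind for b in self.beats]
        if kinds != BEAT_ORDER:
            issues.append(f"beats out of order: {kinds}")
        # Assumed rule: the hook has to land within the first second.
        for b in self.beats:
            if b.kind == "hook" and b.seconds > max_hook_seconds:
                issues.append("hook runs longer than the limit")
        return issues


plan = ShotPlan(
    width=1080,
    height=1920,
    beats=[
        Beat("hook", 1.0, "unusual skin-pull visual"),
        Beat("proof", 3.0, "what the routine solves"),
        Beat("payoff", 12.0, "four products used in sequence"),
        Beat("cta", 2.0, "visit the website for the full routine"),
    ],
)
print(plan.problems())
```

An empty list means the plan follows the hook, proof, payoff, CTA order in a vertical frame. Anything else is a note to fix before production, when changes are still cheap.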

Production, Text to Video, Camera Movement, and Generating Clips in Higgsfield

Production is where Higgsfield AI earns its spot in our stack: it turns a concept into usable clips fast, without the usual back-and-forth of reshoots and reblocking.

We upload a few clean image inputs (key frames or product/scene stills), any helpful reference footage for the vibe, and a short prompt that spells out the action in plain language. Then we use text-to-video to generate variations that are close enough to cut and test immediately.

The big unlock is getting “new angles” on demand. Instead of resetting the camera and filming again, we generate camera movement and controlled motion within the clip itself, such as a subtle push-in that makes the opening moment feel more intense, or a smooth tracking shot that glides across the scalp and products as the routine shifts from hook to steps. One example we used: the opening skin-pull moment starts tight, then the camera “floats” forward and slightly off-center as the hand releases, creating a natural release of tension and keeping viewers watching because the frame literally moves them into the next beat.

Why AI Is Becoming the Default for Brand Video

AI is quickly becoming the new way brands make video because it changes the economics of attention. It lowers the cost of testing, it shortens the time from idea to publish, and it turns content into a repeatable system instead of a once-a-week production event. When you can generate more variations, you get more learning. The more you learn, the less you guess. That’s how you build a workflow that compounds.

For bootstrapped brands, this is a real money saver. You do not need to rent locations, book talent for multiple reshoots, or pay for heavy post-production every time you want to test a new hook. You can create more clips with fewer resources, then put your budget where it actually matters: the winners. Instead of spending thousands upfront trying to “get it perfect,” you spend small, learn fast, and scale what proves it can hold attention.

And the content is not trapped in one place. A clip that performs organically can become an ad. The same creative can be adapted for different platforms, whether it’s Instagram or TikTok, with small edits to pacing, captions, and the first second. That’s the new standard: build content that can live as a post, then scale it as paid once it proves it can keep people watching. AI is not replacing the brand. It’s giving the brand more shots, more speed, and more leverage, at a cost that makes sense when you are building lean.