The agentic AI is advancing and goes beyond controlled pilots to enterprise systems at which it can plan, access tools and data, and make decisions across workflow with limited human intervention.

At this stage, capability is no longer the primary constraint. The challenge is governance. How these systems are controlled, monitored, and constrained in real environments.

Agentic AI refers to systems that plan, make decisions, and act across tools and workflows autonomously, operating as active participants within enterprise systems.

Early deployment is a demonstrably valuable experience. The challenge arises when the systems integrate into the production space and should address hard requirements in terms of access control, audit, and accountability. Most of the available implementations are not aimed at supporting those standards.

It is no longer the question of how well agentic AI can work, but whether it can be put under control.

The Shift from Predictive Models to Autonomous Systems

Traditional AI systems operate within defined boundaries: input, output, and completion. This makes them relatively straightforward to manage.

Agentic systems break that model. They maintain context, interact with APIs, access internal systems, and execute chained decisions. In doing so, they become embedded within enterprise workflows rather than operating alongside them.

This shift has architectural implications. AI is no longer isolated. It interacts with identity systems, data layers, and business logic in real time. Enterprise control frameworks must extend to these autonomous actors.

McKinsey estimates that agentic AI has the potential to unlock 2.6 to 4.4 trillion dollars a year across more than 60 applications, but only an estimated 1% of organizations believe it has reached maturity in AI adoption.

Such inequality shows an imbalance: ability is becoming superior to what organizations know how to operationalize and regulate. Going beyond pilots means considering agentic systems as structured infrastructure, no longer as experimental instruments.

Why Agentic AI Introduces a Different Security Profile

Image: Enterprise data systems with layered security and access controls by MDD Creative | Shutterstock

Security approaches designed for traditional AI focus primarily on protecting data, preventing leakage, filtering inputs, and controlling outputs.

That model becomes insufficient when systems begin to act. Agentic AI introduces execution risk. These systems generate information, make decisions, invoke tools, and perform actions that directly affect enterprise operations.

The risk profile changes in three ways:

- The focus shifts from what the system knows to what it is allowed to do

- Behavior becomes dynamic, reducing the effectiveness of static rules

- Actions can occur without direct human validation, creating accountability gaps

Research on AI agent governance reinforces this point. Governance for agentic systems requires continuous monitoring and runtime control. Static policies alone cannot manage systems that evolve during execution.

The Security Risks Slowing Enterprise Adoption

The risks associated with agentic AI are not theoretical. They appear quickly once systems interact with real environments.

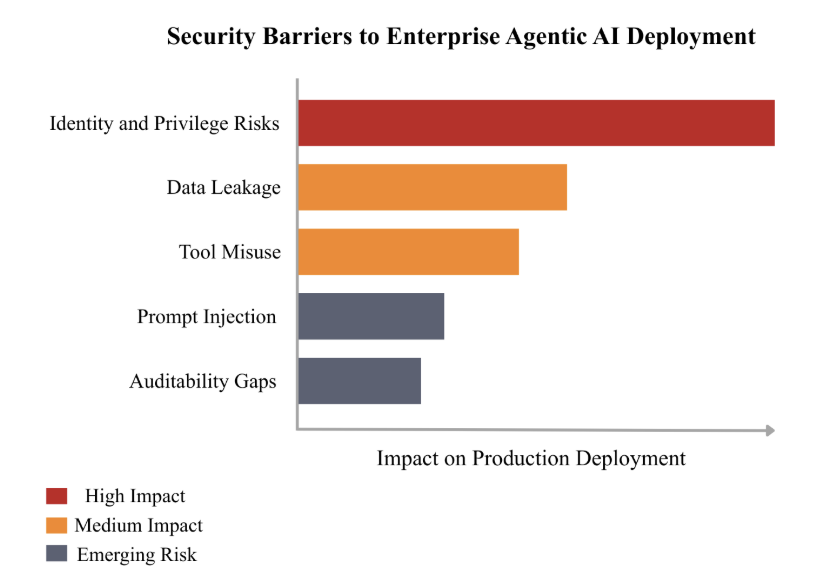

Prompt Injection and Adversarial Inputs

Prompt injection is a primary risk, where malicious inputs override system instructions and alter behavior. The challenge intensifies in multi-step workflows. Agents ingest external data, incorporate it into persistent context, and act without fully validating its integrity. This allows compromised inputs to influence actions across steps and sessions, increasing risk in production environments.

A benchmark study evaluating 847 adversarial scenarios highlights how difficult it is to enforce consistent safeguards when agents operate across tools and data sources.

Memory and Context Manipulation

Because agentic systems retain context across interactions, compromised inputs can affect future decisions. Unlike isolated prompts, these effects accumulate over time, creating a persistent attack surface.

Tool Misuse and Unintended Actions

Agentic systems rely on tools. They call APIs, trigger workflows, and interact with services.

That is where execution risk begins to surface. An incorrect decision can propagate across systems, while a poorly constrained tool call can trigger unintended actions. Small logic errors can escalate into operational issues.

According to the OWASP Top 10 for Agentic Applications, risks such as goal hijacking, unsafe tool invocation, and cascading failures are already emerging in real-world implementations. These risks reflect a system that can act beyond its intended scope if controls are weak.

Identity and Privilege Exposure

Agents frequently operate using delegated permissions. Traditional role-based access models do not fully account for autonomous actors operating at machine speed. Without strict controls, agents may inherit excessive privileges or bypass intended restrictions.

Data Leakage Across Workflows

Data does not stay in one place during agent execution. It moves across tools, APIs, and workflows. Each transition introduces additional exposure risk.

According to BigID, 69.5% of organizations identify AI-driven data leakage as a primary concern. Even more telling, 93.2% report low confidence in securing AI data environments.

Those numbers reflect a gap between capability and control.

Limited Auditability and Traceability

Enterprise systems require clear visibility into decisions and actions. Agentic systems often fall short. Decision paths span multiple systems, while logs capture outputs without sufficient context, making investigation and accountability difficult.

Guidance from Google Cloud’s CISO office emphasizes the importance of observability, traceability, and human oversight in building trust in autonomous systems.

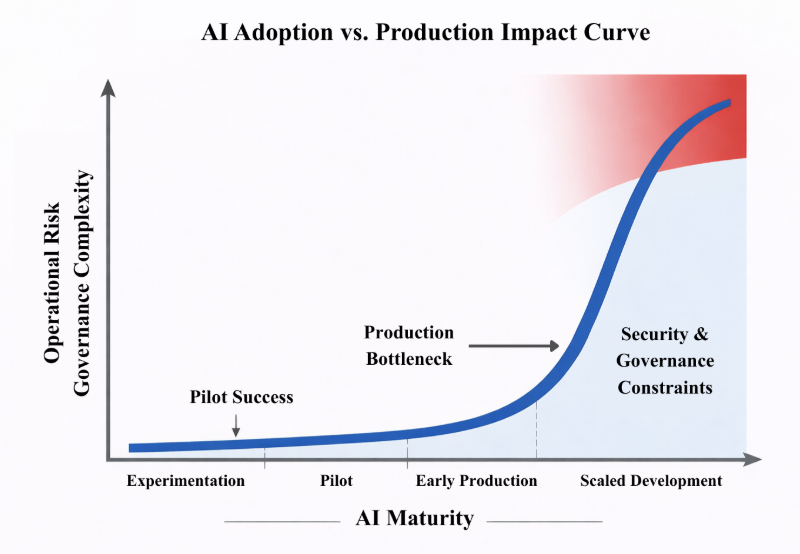

Figure 1: AI Adoption vs Production Impact Curve

As agentic systems move from pilots to enterprise-scale deployment, operational risk and governance complexity rise sharply. The curve shows a clear point where scaling slows unless control mechanisms are in place.

The Gap Between Pilot Success and Production Deployment

Many organizations report strong results during pilot phases, where environments are controlled and constraints are limited.

Production environments introduce a different set of requirements. Systems interact with live data, integrate across services, and must comply with regulatory standards. Every action carries operational consequences.

This transition exposes a consistent gap. Systems that perform well in isolated settings often struggle to meet real-world demands for control, auditability, and compliance.

Pilots validate capability. Production demands accountability.

Bridging this gap introduces operational friction. Teams must implement access controls, monitoring, and governance frameworks that were unnecessary during experimentation. Without these, deployment slows or stalls.

Security as the Primary Constraint

Enterprise adoption patterns reveal a clear trend: autonomy scales faster than governance. Organizations can build capable agents quickly, but supporting control systems take longer to mature. Several challenges persist:

- Limited visibility into agent decision-making

- Identity models are not designed for non-human actors

- Access controls that lack contextual awareness

- Difficulty assigning accountability across systems

These issues shape leadership decisions. As system autonomy increases, tolerance for risk decreases. Security reviews become more stringent, and deployment timelines extend. Security is no longer a supporting function; it becomes the gating mechanism for adoption.

Figure 2: Security Barriers to Enterprise Agentic AI Deployment

Not all risks carry equal weight in enterprise environments; identity and privilege control, data exposure, and execution governance are the main barriers to deployment.

What Enterprise-Ready Agentic AI Requires

Moving from pilot to production requires architectural changes, with security embedded directly into system design.

- Policy-driven execution controls: The agents have to perform within specification limits, and policies define what they are allowed to do in a situation.

- Identity and access management: Agent identities must be clear, with minimum privilege permissions matched with task identities and checked every time they are executed.

- Auditability and observability: Systems have to record decision context, tool use, and execution flow to aid monitoring and investigation.

- Human-in-the-loop oversight: Risky activities have to be authenticated, with clear escalation to hold someone responsible.

Adoption Depends on Control, Not Capability

Agentic AI introduces a powerful operational model, enabling systems to plan, act, and adapt within enterprise environments. The limiting factor is no longer model performance. It is the ability to enforce control.

Companies investing in identity management, execution governance, and observability will be in a position to scale, while others will be trapped in risk and never leave pilot environments. Enterprise adoption will not be based on developing capability as much as creating systems that can be governed reliably.

References:

- BigID. (2025). AI Risk & Readiness in the Enterprise – 2025 Report. BigID. https://home.bigid.com/hubfs/AI%20Risk%20%26%20Readiness%20in%20the%20Enterprise-%202025%20Report.pdf

- Google Cloud Community. (2025, December 29). Office of the CISO 2025 year in review: 3 key AI security governance themes. CISO Blog. [Blog]. https://security.googlecloudcommunity.com/ciso-blog-77/office-of-the-ciso-2025-year-in-review-3-key-ai-security-governance-themes-6510

- Kraprayoon, J., Williams, Z. and Fayyaz, R. (2025, May 27). AI Agent Governance: A Field Guide. arXiv. https://arxiv.org/abs/2505.21808

- McKinsey & Company. (2025, October 16). Deploying agentic AI with safety and security: A playbook for technology leaders. https://www.mckinsey.com/capabilities/risk-and-resilience/our-insights/deploying-agentic-ai-with-safety-and-security-a-playbook-for-technology-leaders

- OWASP. (2025, December 9). OWASP Top 10 for Agentic Applications: The benchmark for agentic security in the age of autonomous AI. OWASP Blog. [Blog]. https://genai.owasp.org/2025/12/09/owasp-top-10-for-agentic-applications-the-benchmark-for-agentic-security-in-the-age-of-autonomous-ai/

- Ramakrishnan, B. and Balaji, A. (2025, November 19). Securing AI Agents Against Prompt Injection Attacks. arXiv. https://arxiv.org/abs/2511.15759