Enterprise research teams are under pressure from every direction. Clients expect broader coverage, faster turnaround, and insights that reflect what is happening now, not what happened last quarter. At the same time, markets are getting harder to track. New players appear quickly. Pricing changes without warning. And signals are spread across dozens of public sources, often across multiple countries and industries.

Traditional research methods were not built for this environment. Interviews, surveys, and static reports still have value, but they move slowly and narrow the field of view. By the time the work is finished, the market has already shifted. For consulting firms and enterprise research teams, this gap is no longer a minor inconvenience. It directly affects the quality of advice they can offer.

This is why many large research organizations are rethinking how they collect data. Web scraper APIs have become a practical way to scale market analysis without scaling teams at the same pace. They change how research is gathered, how often it is updated, and how much ground a single team can realistically cover.

Why Traditional Market Research Stops Scaling

Enterprise research usually starts with a strong foundation. Analysts know which industries matter, which competitors to watch, and which questions clients are asking. The breakdown happens at execution.

Manual collection does not stretch very far. Tracking a handful of competitors across a few regions is manageable. Expanding that same approach across multiple markets, languages, and product categories quickly becomes unworkable. Each additional source adds time, cost, and risk of error.

Even well-funded consulting firms run into the same limits. More analysts do not automatically solve the problem. Coordination becomes harder, data arrives at different times, and findings lose consistency. Research becomes a snapshot instead of a live view of the market.

Clients notice the result:

- Reports feel backward-looking.

- Insights feel cautious.

What Changes When Data Collection Is Automated

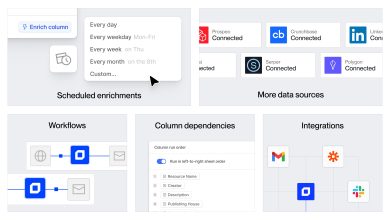

A web scraper API changes this. Instead of analysts spending days gathering inputs, data collection runs quietly in the background. Public websites, marketplaces, pricing pages, job boards, and review platforms become stable, regularly updated sources.

For enterprise teams, this changes the shape of the work. Analysts spend less time assembling raw material and more time understanding patterns. Coverage expands without a larger team, and updates arrive on a schedule or in near real time, depending on the use case.

This matters when research spans multiple industries or regions. Automation makes it possible to track dozens of markets at once, using the same underlying process. That consistency becomes important when outputs are compared, combined, or reused across different client engagements.

Scaling Research Without Inflating Headcount

One of the biggest challenges in enterprise research is growth. Serving more clients usually means hiring more people. That increases cost, slows onboarding, and creates pressure to standardize output.

Web scraper APIs reduce that dependency. A small team can monitor far more sources than before, and a single data pipeline can support several projects. Detailed research that used to be reserved for a few premium clients can be offered more broadly without pushing teams past their limits.

DECODO’s web scraper API is built for enterprise use. Research groups do not have to design or maintain their own scraping systems. They rely on a managed service that delivers dependable access and structured data, allowing analysts to focus on analysis instead of upkeep.

As a result, firms can scale their research offerings without turning data collection into a bottleneck or a constant maintenance task.

Supporting Cross-Industry and Cross-Market Analysis

Enterprise research often spans multiple sectors at once. A single client may want visibility across technology, retail, logistics, and finance, all within the same engagement. Manual methods struggle to keep pace with that breadth.

With web scraper APIs, this kind of coverage becomes realistic. Different industries can be monitored using similar workflows, and the data arrives in structured formats that can be compared, combined, and analyzed together.
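To make "structured formats that can be compared and combined" more concrete, here is a minimal Python sketch. It assumes the scraper output has already been mapped into one common record shape; the `PriceRecord` fields, the fixed currency rates, and the sample values are hypothetical and for illustration only, not DECODO's actual output schema.

```python
from dataclasses import dataclass
from statistics import median

# Hypothetical common record shape for scraped pricing data.
# Field names are illustrative assumptions, not a vendor schema.
@dataclass
class PriceRecord:
    industry: str
    market: str
    product: str
    price: float
    currency: str

# Assumed fixed conversion rates, used only for this example.
FX_TO_USD = {"USD": 1.0, "EUR": 1.1, "GBP": 1.25}

def to_usd(record: PriceRecord) -> float:
    """Convert a record's price to USD using the assumed rates."""
    return record.price * FX_TO_USD[record.currency]

def median_price_by_segment(records):
    """Group records by (industry, market) and return the median USD price per group."""
    groups = {}
    for r in records:
        groups.setdefault((r.industry, r.market), []).append(to_usd(r))
    return {key: round(median(values), 2) for key, values in groups.items()}

# Sample records from different industries and markets (made-up values).
records = [
    PriceRecord("retail", "DE", "sku-1", 10.0, "EUR"),
    PriceRecord("retail", "DE", "sku-2", 20.0, "EUR"),
    PriceRecord("retail", "UK", "sku-1", 8.0, "GBP"),
    PriceRecord("technology", "US", "plan-a", 49.0, "USD"),
]

summary = median_price_by_segment(records)
print(summary)
```

Because every source is mapped into the same record type before analysis, adding another market or industry in this sketch only requires a new mapping step, not a new analysis workflow.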

This enables deeper work. Analysts can look for connections between markets and spot early signals that would be easy to miss when working source by source. Over time, this builds institutional knowledge that competitors find hard to imitate.

Differentiation in Competitive Consulting Markets

Consulting and research firms operate in crowded markets. Many offer similar frameworks and strategic language. What often sets firms apart is the quality and freshness of their underlying intelligence.

Automated data collection becomes a quiet advantage here. Firms that can show how their insights are updated, verified, and grounded in what customers actually do in the market stand out. Their recommendations are sharper, and the confidence behind them earns client trust.

Using DECODO’s web scraper API, enterprise teams can build these capabilities without running their own engineering experiments. The focus stays on insight, not infrastructure.

| Area of Change | What Shifts in Practice |
| --- | --- |
| How research is judged | Clients care less about volume and more about whether the data actually supports decisions |
| Analyst focus | Less time spent collecting inputs, more time spent interpreting signals and testing scenarios |
| Client outcomes | Guidance becomes clearer and easier to act on, not buried in excess detail |
| Use of expertise | Research teams apply judgment and experience instead of repeating mechanical tasks |
| Commercial impact | Live data, wider market coverage, and continuous tracking justify higher-value research services |

Enterprise market research is no longer about producing static documents on fixed timelines. It is about maintaining a live understanding of complex markets and turning that understanding into clear direction.

Web scraper APIs play a central role in that shift. They remove the friction that slows research down and limits its scope. By automating data collection at scale, enterprise teams can serve more clients, cover more ground, and deliver insights that stay relevant.

In competitive consulting environments, that capability is not a nice extra. It becomes part of what defines serious, modern research.