Large Language Models (LLMs) have moved quickly from research labs into real products. Chatbots, copilots, document analyzers, and internal assistants are now common across many industries. Once a team decides to use an LLM, a practical question follows almost immediately: where should the model run? For many organizations, the answer seems obvious at first: put it in the cloud. The cloud promises flexibility, speed, and global reach. But hosting LLMs in the cloud also brings real costs, trade‑offs, and operational challenges that are often underestimated.

This article looks at the reality of hosting LLMs in the cloud, focusing on three core themes: cost, control, and what actually happens in production.

Why the Cloud Is the Default Choice

Cloud platforms make it easy to get started with LLMs. A team can rent powerful GPUs by the hour instead of buying expensive hardware upfront. Managed services remove much of the operational burden, such as setting up drivers, scaling infrastructure, and handling failures. For startups and fast‑moving teams, this speed is extremely attractive.

The cloud also supports experimentation. Engineers can spin up a large GPU cluster for training or fine‑tuning, then shut it down when the job is done. This elasticity fits well with how machine learning work actually happens: intense bursts of computation followed by long periods of evaluation and iteration.

However, what looks simple in early experiments becomes more complex once an LLM moves into daily use.

The Real Cost of Running LLMs

The most visible cost of hosting LLMs in the cloud is compute, especially GPUs. Modern LLMs require powerful accelerators with large amounts of memory, and these resources are expensive. Even when models are not training, inference alone can generate large bills if usage grows.

Costs also come from less obvious places. Data transfer fees add up when models interact with other systems or serve users across regions. Storage costs grow as teams keep multiple model versions, logs, embeddings, and training datasets. High‑availability setups, which production systems need, often mean running duplicate infrastructure that sits idle part of the time.

One common surprise is that cloud costs scale with success. A demo chatbot may cost very little, but a popular internal assistant used by thousands of employees can quickly become one of the largest items on an infrastructure bill. Without careful monitoring and limits, LLM usage can grow faster than budgets.
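
A rough back-of-the-envelope calculation makes this scaling effect concrete. The sketch below is illustrative only: the hourly price, the per-replica throughput, and the names `GpuDeployment` and `replicas_needed` are assumptions made for this example, not figures from any provider.

```python
import math
from dataclasses import dataclass


@dataclass
class GpuDeployment:
    replicas: int                   # GPU instances kept running
    hourly_rate_usd: float          # assumed price per instance-hour (hypothetical)
    hours_per_month: float = 730.0  # average number of hours in a month

    def monthly_cost(self) -> float:
        # Always-on replicas are billed for every hour, busy or idle.
        return self.replicas * self.hourly_rate_usd * self.hours_per_month


def replicas_needed(peak_rps: float, rps_per_replica: float, min_replicas: int = 1) -> int:
    """Replicas required to absorb peak traffic, never fewer than min_replicas."""
    return max(min_replicas, math.ceil(peak_rps / rps_per_replica))


if __name__ == "__main__":
    # Hypothetical numbers: $4 per GPU-hour, 2 requests/second per replica.
    for label, peak_rps in [("demo chatbot", 0.2), ("company-wide assistant", 15.0)]:
        n = replicas_needed(peak_rps, rps_per_replica=2.0)
        cost = GpuDeployment(replicas=n, hourly_rate_usd=4.0).monthly_cost()
        print(f"{label}: {n} replica(s), about ${cost:,.0f} per month")
```

Under these assumed numbers the demo needs one replica and the company-wide assistant needs eight, so the bill grows with peak traffic even though the model and the code are unchanged.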

Cost Control Is an Engineering Problem

Controlling LLM costs is not just a finance issue; it is an engineering one. Teams must make deliberate choices about model size, response latency, and scaling behavior. Smaller or optimized models are often good enough for many tasks and are much cheaper to run. Caching responses, batching requests, and setting usage limits can dramatically reduce cost without hurting user experience.
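
As one illustration of the caching idea, here is a minimal sketch of an exact-match response cache placed in front of a model. `CachedModel` and the `generate` callable are hypothetical names for this example; `generate` stands in for whatever function actually calls the model. Exact-match caching only helps when users repeat the same prompt and when returning an identical answer is acceptable.

```python
import hashlib
from collections import OrderedDict
from typing import Callable


class CachedModel:
    """Wraps a model call with a small least-recently-used response cache."""

    def __init__(self, generate: Callable[[str], str], max_entries: int = 1024):
        self._generate = generate      # hypothetical function that calls the model
        self._cache = OrderedDict()    # prompt hash -> cached response
        self._max_entries = max_entries
        self.hits = 0
        self.misses = 0

    @staticmethod
    def _key(prompt: str) -> str:
        # Normalize case and whitespace so trivially different prompts share a key.
        normalized = " ".join(prompt.lower().split())
        return hashlib.sha256(normalized.encode("utf-8")).hexdigest()

    def complete(self, prompt: str) -> str:
        key = self._key(prompt)
        if key in self._cache:
            self._cache.move_to_end(key)  # mark as most recently used
            self.hits += 1
            return self._cache[key]
        self.misses += 1
        response = self._generate(prompt)  # the expensive GPU-backed call
        self._cache[key] = response
        if len(self._cache) > self._max_entries:
            self._cache.popitem(last=False)  # evict the least recently used entry
        return response
```

The `hits` and `misses` counters are there so a team can check whether the cache is earning its keep before relying on it to reduce spend.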

Autoscaling is another key factor. Cloud platforms can automatically add or remove GPU instances based on demand, but poorly tuned scaling rules can cause sudden spikes in cost or degraded performance. In practice, teams need to test and adjust these systems over time, based on real usage patterns.
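
To show what a scaling rule with tunable knobs can look like, the sketch below computes a desired replica count from observed utilization. It is loosely modeled on target-tracking autoscalers but is not any platform's actual algorithm; `ScalingPolicy`, `desired_replicas`, and every default value are assumptions chosen for illustration.

```python
import math
from dataclasses import dataclass


@dataclass
class ScalingPolicy:
    target_utilization: float = 0.6       # utilization the fleet should hover around
    tolerance: float = 0.1                # ignore deviations within +/-10% of target
    min_replicas: int = 1
    max_replicas: int = 8                 # hard cap that bounds the worst-case bill
    scale_down_cooldown_s: float = 600.0  # wait before removing slow-to-start replicas


def desired_replicas(
    policy: ScalingPolicy,
    current_replicas: int,
    observed_utilization: float,
    seconds_since_last_scale: float,
) -> int:
    ratio = observed_utilization / policy.target_utilization
    # Inside the tolerance band: do nothing, which avoids flapping.
    if abs(ratio - 1.0) <= policy.tolerance:
        return current_replicas
    proposed = math.ceil(current_replicas * ratio)
    proposed = max(policy.min_replicas, min(policy.max_replicas, proposed))
    # Scale up immediately, but delay scale-down until the cooldown has passed.
    if proposed < current_replicas and seconds_since_last_scale < policy.scale_down_cooldown_s:
        return current_replicas
    return proposed
```

The tolerance band, the cooldown, and the replica cap are the values that typically need adjusting against real traffic: too tight and the fleet oscillates, too loose and users wait while capacity catches up.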

The cloud gives flexibility, but cost efficiency only comes with active management.

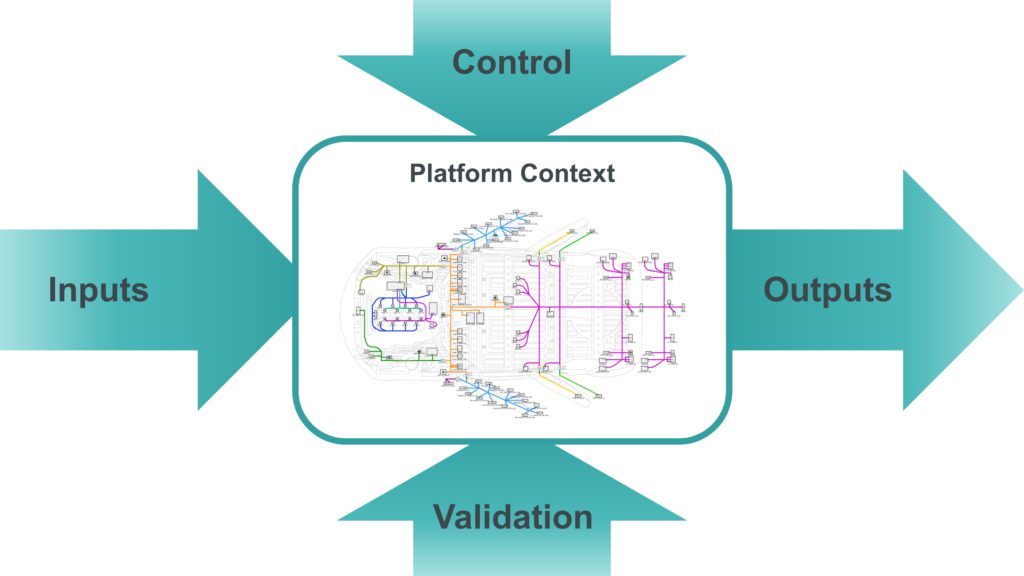

Control Over Models and Data

Beyond cost, control is a major reason teams reconsider how they host LLMs. When using fully managed LLM APIs, organizations often give up visibility into how models are run and optimized. For some use cases, this is acceptable. For others, the loss of visibility is a real concern.

Data is a central concern. Many organizations process sensitive information and must understand exactly where data flows and how it is stored. Hosting an LLM in your own cloud environment, rather than calling an external API, provides clearer boundaries: prompts and responses stay inside infrastructure the team manages. Teams can control logging, encryption, network access, and retention policies.

Control also matters for customization. Fine‑tuning models, adding custom inference logic, or integrating tightly with internal systems is often easier when the model is hosted as part of your own infrastructure. This flexibility becomes more important as LLMs move from experiments to core business systems.

The Operational Reality of Self‑Hosting

Hosting your own LLM in the cloud is not just “deploy and forget.” It introduces real operational complexity. GPU drivers, container images, model loading times, and memory limits all become part of the system that must be maintained. A single misconfiguration can cause crashes or poor performance.

Reliability is another challenge. LLM services are often expected to behave like standard web services, but they are much heavier and slower to start. A rolling restart or scaling event can take minutes instead of seconds, because each new replica must load the model weights into GPU memory before it can serve traffic. Designing for graceful degradation, retries, and fallbacks is essential.
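
The retry-and-fallback pattern can be sketched in a few lines. This is a minimal example under stated assumptions: `ModelUnavailable`, `call_with_fallback`, and the `primary` and `fallback` callables are hypothetical names, and a real service would also need timeouts and a limit on total time spent retrying.

```python
import random
import time
from typing import Callable, Optional

DEGRADED_MESSAGE = "The assistant is temporarily unavailable. Please try again shortly."


class ModelUnavailable(Exception):
    """Raised by a backend that is overloaded, restarting, or timing out."""


def call_with_fallback(
    prompt: str,
    primary: Callable[[str], str],
    fallback: Optional[Callable[[str], str]] = None,
    max_attempts: int = 3,
    base_delay_s: float = 0.5,
    sleep: Callable[[float], None] = time.sleep,  # injectable so tests need not wait
) -> str:
    for attempt in range(max_attempts):
        try:
            return primary(prompt)
        except ModelUnavailable:
            if attempt == max_attempts - 1:
                break  # retries exhausted, move on to the fallback
            # Exponential backoff with jitter so clients do not retry in lockstep.
            delay = base_delay_s * (2 ** attempt)
            sleep(delay + random.uniform(0, delay))
    if fallback is not None:
        # For example, a smaller model that is already warm. Errors raised
        # by the fallback itself are left to propagate to the caller.
        return fallback(prompt)
    # Graceful degradation: a clear message instead of an unhandled error.
    return DEGRADED_MESSAGE
```

Only `ModelUnavailable` triggers a retry here; other exceptions pass straight through, on the assumption that retrying a malformed request would just waste GPU time.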

This is where many teams encounter the gap between theory and reality. The cloud provides the building blocks, but running LLMs well still requires strong platform engineering skills.

Hybrid and Pragmatic Approaches

In practice, many organizations settle on a hybrid approach. They may use managed LLM APIs for early prototypes or low‑risk features, while hosting critical or sensitive workloads themselves. Some teams fine‑tune open‑source models in the cloud, then optimize them heavily to reduce inference costs.

Others split workloads by task. High‑volume, simple requests might go to smaller self‑hosted models, while complex or rare queries are routed to larger, more expensive models. This kind of routing adds complexity but can significantly reduce overall cost.
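
A router of this kind can start out very simple. The sketch below uses a crude heuristic based on prompt length and a few marker phrases; the names `Route` and `choose_route`, the word threshold, and the marker list are all assumptions for illustration, and production routers often use a trained classifier instead.

```python
from dataclasses import dataclass
from typing import Callable, Sequence


@dataclass
class Route:
    name: str
    handler: Callable[[str], str]  # hypothetical function that calls a model


def choose_route(
    prompt: str,
    small: Route,
    large: Route,
    max_small_words: int = 60,
    hard_markers: Sequence[str] = ("analyze", "compare", "step by step"),
) -> Route:
    """Send short, simple prompts to the cheap model and everything else to the large one."""
    text = prompt.lower()
    too_long = len(text.split()) > max_small_words
    looks_hard = any(marker in text for marker in hard_markers)
    return large if (too_long or looks_hard) else small


if __name__ == "__main__":
    small = Route("small-self-hosted", lambda p: f"[small] {p}")
    large = Route("large-model", lambda p: f"[large] {p}")
    for prompt in ["What is our vacation policy?", "Compare these two contracts clause by clause."]:
        route = choose_route(prompt, small, large)
        print(route.name, "<-", prompt)
```

Logging which route each request takes makes it possible to check later whether the heuristic is sending too many hard questions to the small model.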

The key is realism. There is no single best hosting model for all LLM use cases.

The Human Side of the Equation

Finally, it is important to remember that hosting LLMs is not only about technology. Teams need time to learn how these systems behave under load, how users interact with them, and how failures show up in real workflows. Observability, logging, and feedback loops are just as important as GPUs and models.
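
On the observability point, even a thin wrapper around the model call gives a team something to look at. The following is a minimal sketch: `observed`, `generate`, and `emit` are hypothetical names, and `emit` stands in for whatever logging or metrics pipeline is already in place. It records sizes and timings rather than prompt text, on the assumption that prompts may contain sensitive data.

```python
import time
from typing import Any, Callable, Dict


def observed(
    generate: Callable[[str], str],
    emit: Callable[[Dict[str, Any]], None],
) -> Callable[[str], str]:
    """Wrap a model call so every request emits one structured record."""

    def wrapper(prompt: str) -> str:
        start = time.perf_counter()
        record: Dict[str, Any] = {"prompt_chars": len(prompt), "ok": False}
        try:
            response = generate(prompt)
            record["ok"] = True
            record["response_chars"] = len(response)
            return response
        except Exception as exc:
            record["error"] = type(exc).__name__  # record the failure type, then re-raise
            raise
        finally:
            # Runs on success and on failure, so failed calls are measured too.
            record["latency_ms"] = round((time.perf_counter() - start) * 1000, 2)
            emit(record)

    return wrapper
```

For example, `observed(model_call, records.append)` collects the records in a list during testing, and the same wrapper can later hand them to a real logging backend.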

Cloud hosting makes it easier to start, but it does not remove the need for thoughtful design and ongoing ownership.

Conclusion

Hosting LLMs in the cloud offers powerful advantages: speed, flexibility, and access to cutting‑edge hardware. At the same time, it introduces real costs, operational complexity, and trade‑offs around control. The reality sits somewhere between the marketing promises and the day‑to‑day challenges faced by engineering teams.

Organizations that succeed with LLMs approach cloud hosting with open eyes. They measure costs carefully, design for control where it matters, and accept that running LLMs in production is an ongoing engineering effort, not a one‑time setup. In the end, the cloud is not a shortcut. It is a tool, and like any tool, its value depends on how well it is used.