Healthcare payer automation has traditionally centered on control. Enrollment and billing workflows operate through policy checks, audit gates, and compliance rules that protect both regulators and organizations. However, when real-world ambiguity enters the process, that same structure begins to strain.

Deterministic engines execute predefined rules efficiently. Enrollment intake, however, rarely arrives in a clean format. Applications include scanned forms, employer amendments, and state-specific overlays. When those signals conflict, rigid branching logic cannot resolve the inconsistency, and the case moves to manual review.

Regulatory expectations are also rising. Interoperability and prior authorization mandates now require transparent, auditable decision flows. Adding more scripts on top of existing rule engines will not absorb that level of complexity. Autonomous healthcare enrollment and billing require a different architectural posture. The system must reason within policy constraints, enforce deterministic guardrails, and apply autonomy in a controlled and measurable way.

The shift is not about replacing rules with AI. It is about augmenting rule execution with contextual reasoning while keeping every decision accountable to policy engines and compliance thresholds.

Where Deterministic Automation Starts to Break in Payer Enrollment and Billing

Deterministic rule engines are strong at repetitive logic. They evaluate predefined conditions and route cases along scripted paths. In stable environments, they perform reliably.

Healthcare enrollment is rarely stable for long.

A single enrollment request may include:

- Multi-plan eligibility rules

- State-specific regulatory overlays

- Employer group variations

- Policy amendments mid-cycle

Rigid decision trees start to fragment under this kind of variability. It only takes a small deviation to push a case into a manual queue. One exception turns into ten. Ten turns into a backlog. Before long, specialists spend their day fixing issues that the system should have handled without human help.

Billing workflows face the same limitation. Claims adjudication depends on hierarchical rule evaluation. When documentation conflicts or arrives incomplete, processing stops, and reconciliation becomes reactive.

Deterministic systems process inputs quickly and consistently, but they cannot interpret intent or resolve conflicting signals. As intake variability increases, systems that rely only on fixed logic push more work downstream and increase manual handling.

From Rule Execution to Intent Interpretation in AI-Augmented Automation

AI-augmented Robotic Process Automation (RPA) adds an interpretive layer on top of predictable processes. Rather than simply executing programmed tasks, automation systems begin to interpret contextual cues in enrollment documents, structured fields, and previous case data.

LLM-driven orchestration models illustrate how language systems can coordinate decisions across a multi-step enrollment procedure, especially when inputs are missing or irregular. When paired with policy validation engines, generative AI combined with RPA acts as a reasoning layer that interprets the situation and proposes possible resolution paths. Ultimate authority, however, remains with rule enforcement.

Intent-aware orchestration can differentiate between an empty data field and a policy exception. That distinction matters. It determines whether a case goes through automated correction or is escalated for review.
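The distinction can be sketched in a few lines of Python. This is a minimal illustration, not a production classifier: the `Application` fields, the `Finding` categories, and the `MAX_DEPENDENT_AGE` rule are all hypothetical names and values chosen for the example.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Optional


class Finding(Enum):
    CLEAN = "clean"
    MISSING_DATA = "missing_data"          # candidate for automated correction
    POLICY_EXCEPTION = "policy_exception"  # must be escalated for review


# Hypothetical policy rule used only for this illustration.
MAX_DEPENDENT_AGE = 26


@dataclass
class Application:
    member_id: str
    plan_code: Optional[str]      # None means the field arrived empty
    dependent_age: Optional[int]  # None means no dependent was listed


def classify(app: Application) -> Finding:
    """Separate 'the value is absent' from 'the value violates policy'."""
    # A present value that breaks a rule is a policy exception, not a data gap.
    if app.dependent_age is not None and app.dependent_age > MAX_DEPENDENT_AGE:
        return Finding.POLICY_EXCEPTION
    # An absent required field can be requested or back-filled automatically.
    if app.plan_code is None:
        return Finding.MISSING_DATA
    return Finding.CLEAN
```

In a real system the interpretive layer would propose the finding, and a policy engine would confirm it before any routing takes place.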

Controlled Autonomy and Deterministic Guardrails

Agentic automation must operate within defined policy boundaries to prevent intent interpretation from exceeding compliance limits.

At enterprise scale, agentic AI architectures rely on layered control. The AI layer proposes decisions, policy engines validate them, and escalation thresholds activate when confidence or compliance criteria fall below defined limits.

That balance prevents uncontrolled state propagation across the workflow. Without it, agentic decisions can compound across nodes before compliance checks ever trigger.
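One way to picture the propose, validate, escalate sequence is the sketch below. It assumes a hypothetical allow-list of actions and an illustrative confidence floor of 0.85. Neither value comes from a specific payer platform.

```python
from dataclasses import dataclass

# Hypothetical values for illustration only.
CONFIDENCE_FLOOR = 0.85
ALLOWED_ACTIONS = {"approve_enrollment", "request_document"}


@dataclass(frozen=True)
class Proposal:
    case_id: str
    action: str
    confidence: float  # the AI layer's self-reported confidence, 0..1


def policy_allows(proposal: Proposal) -> bool:
    """Deterministic guardrail: only allow-listed actions may execute."""
    return proposal.action in ALLOWED_ACTIONS


def decide(proposal: Proposal) -> str:
    """The AI layer proposes; policy validates; thresholds trigger escalation."""
    if not policy_allows(proposal):
        return "escalate:policy"
    if proposal.confidence < CONFIDENCE_FLOOR:
        return "escalate:low_confidence"
    return f"execute:{proposal.action}"
```

The policy check runs before the confidence check on purpose: a highly confident proposal that violates policy should never execute.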

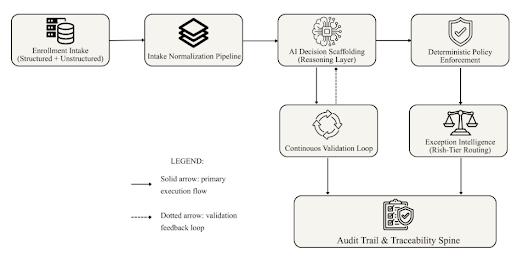

The Self-Correcting Enrollment Protocol: A Governed Architecture for Autonomous Enrollment

True autonomy requires more than intelligent routing. It requires ongoing validation. The Self-Correcting Enrollment Protocol introduces a layered architecture composed of:

- Intake Normalization Pipeline

- AI Decision Scaffolding

- Deterministic Policy Enforcement

- Continuous Validation Loop

- Structured Exception Intelligence

- Audit Trail and Traceability Spine

Bounded multi-agent decision propagation models illustrate how agentic nodes can collaborate while remaining subject to hierarchical validation. In payer systems, this translates to AI agents reviewing the enrollment evidence while a deterministic layer consolidates and validates the results before they advance.
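A deterministic consolidation step might look like the following sketch. It assumes a simple unanimity rule and a hypothetical set of permitted outcomes. Real deployments could use weighted or quorum-based consolidation instead.

```python
from typing import Dict, Optional, Set


def consolidate(findings: Dict[str, str], permitted: Set[str]) -> Optional[str]:
    """Advance a result only if all agents agree and policy permits it.

    `findings` maps a (hypothetical) agent name to the outcome it proposes.
    Returning None signals that the case must be escalated.
    """
    if not findings:
        return None
    outcomes = set(findings.values())
    if len(outcomes) != 1:
        return None  # agents disagree, so nothing propagates downstream
    (outcome,) = outcomes
    return outcome if outcome in permitted else None
```

For example, `consolidate({"eligibility_agent": "enroll", "document_agent": "enroll"}, {"enroll", "pend"})` advances `"enroll"`, while any disagreement between agents yields `None`.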

Continuous Validation as Control Discipline

Validation must occur before downstream propagation.

Every decision is re-verified. The system checks AI-inferred intent against policy matrices, regulatory limits, and enrollment trends. Drift-detection mechanisms monitor for deviation. When misalignment occurs, feedback loops trigger recalibration.

Self-correction is continuous state verification, not reactive reprocessing.

The architecture functions as a feedback loop that absorbs signals, checks alignment, and adjusts before small issues escalate.
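As a rough illustration of such a loop, the sketch below tracks how often the AI-inferred decision disagrees with the policy outcome over a rolling window. The `DriftMonitor` class, the window size, and the tolerance are assumptions made for the example, not a prescribed design.

```python
from collections import deque


class DriftMonitor:
    """Rolling check of AI-inferred decisions against policy outcomes."""

    def __init__(self, window: int = 100, tolerance: float = 0.05):
        # Only the most recent `window` comparisons are retained.
        self.agreements = deque(maxlen=window)
        self.tolerance = tolerance

    def record(self, ai_decision: str, policy_decision: str) -> None:
        self.agreements.append(ai_decision == policy_decision)

    def disagreement_rate(self) -> float:
        if not self.agreements:
            return 0.0
        return 1 - sum(self.agreements) / len(self.agreements)

    def needs_recalibration(self) -> bool:
        # Recalibration triggers only when drift exceeds the tolerance.
        return self.disagreement_rate() > self.tolerance
```

Because the check runs on every recorded decision, misalignment surfaces as it accumulates instead of after a batch of cases has already been processed incorrectly.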

Structured Exception Intelligence and Risk Tiering

Not all exceptions require human review.

Risk classification models assess the severity of each variance. Lower-risk discrepancies are processed through automated adjudication, while higher-risk ones are routed to human supervision. Every decision produces an audit trail entry.
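A two-tier version of this routing can be sketched as follows. The severity score, the `HIGH_RISK_FLOOR` cut-off, and the destination names are hypothetical. A production model would likely use more tiers and a richer audit record.

```python
from enum import Enum
from typing import List, Tuple


class Tier(Enum):
    LOW = "low"
    HIGH = "high"


# Hypothetical cut-off on a 0..1 severity score.
HIGH_RISK_FLOOR = 0.6


def tier_for(severity: float) -> Tier:
    return Tier.HIGH if severity >= HIGH_RISK_FLOOR else Tier.LOW


def route(case_id: str, severity: float,
          audit_log: List[Tuple[str, str, str]]) -> str:
    """Route by risk tier and record the decision, whatever the outcome."""
    tier = tier_for(severity)
    destination = "human_review" if tier is Tier.HIGH else "auto_adjudication"
    audit_log.append((case_id, tier.value, destination))
    return destination
```

Note that the audit entry is written on both branches, so automated resolutions are as traceable as escalated ones.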

This tiered control model preserves accountability while reducing unnecessary intervention. The following reference architecture illustrates how these components operate as a unified, governed system.

Figure 1: Self-Correcting Enrollment Protocol – Reference Architecture

Autonomy at enrollment cannot remain isolated. The same governed logic must extend into the billing lifecycle.

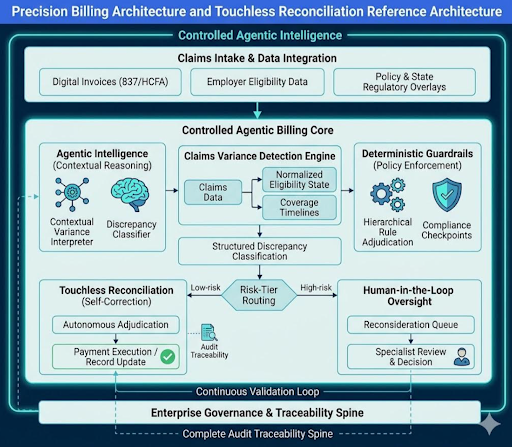

Precision Billing Architecture and Touchless Reconciliation Models

Image: Digital invoice review illustrating automated claims validation in healthcare billing by Garun.Prdt | Shutterstock

Enrollment architecture cannot stop at intake. It has to carry through the billing lifecycle.

Claims variance detection models compare submitted claims against eligibility states, coverage timelines, and adjudication rules. When structured discrepancy classification operates within policy-aware engines, reconciliation becomes predictable.

A precision billing reconciliation engine combines adaptive reasoning with deterministic enforcement. The system determines whether a variance stems from missing documentation, an eligibility gap, or a contractual override. Low-risk cases are resolved automatically.
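The classification step could be approximated as shown below. The `Claim` and `Coverage` structures, the category labels, and the order in which the checks run are illustrative assumptions. An actual engine would draw these from adjudication rules and contract data.

```python
from dataclasses import dataclass
from datetime import date
from typing import Optional


@dataclass
class Coverage:
    start: date
    end: date


@dataclass
class Claim:
    claim_id: str
    service_date: date
    has_documentation: bool
    contract_override: bool = False  # hypothetical flag set from contract terms


def classify_variance(claim: Claim, coverage: Coverage) -> Optional[str]:
    """Return a variance category, or None when the claim reconciles cleanly."""
    # Assumption: a contractual override takes precedence over other checks.
    if claim.contract_override:
        return "contractual_override"
    # Compare the service date against the coverage timeline.
    if not (coverage.start <= claim.service_date <= coverage.end):
        return "eligibility_gap"
    if not claim.has_documentation:
        return "missing_documentation"
    return None
```

Naming the variance type up front is what allows low-risk categories, such as a missing document, to be resolved without a specialist.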

Touchless reconciliation reduces routine intervention while preserving accountability. Audit logs capture reasoning traces. Escalation records document the human decisions that follow. Governance remains intact.

In practice, the architecture performs well under predictable load. It becomes harder to manage when state dependencies multiply across multi-line claims and layered eligibility conditions. Risk-tier routing mitigates that complexity by isolating variance types before escalation.

Embedding Governance into Regulated Healthcare Automation

Governance cannot be treated as an afterthought. It has to shape the architecture from the beginning.

The NIST AI Risk Management Framework treats risk characterization as a measurable, continuous process and emphasizes ongoing monitoring and clear accountability. Applied to payer automation, these principles translate into role-based access controls, escalation controls, and traceable decision states in workflow engines.

Agentic systems impose additional oversight demands. Governance models for agentic autonomy recommend clear authority boundaries, human-in-the-loop checkpoints, and runtime monitoring of model drift. Such controls match the regulatory sensitivity of healthcare.

Effective governance architecture includes:

- Role-based automation controls

- Deterministic enforcement checkpoints

- Model drift monitoring pipelines

- Risk-tiered orchestration thresholds

- Complete audit traceability
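Audit traceability, the last item above, can be made tamper-evident with a hash chain. The sketch below is one possible approach built on Python's standard `hashlib` and `json` modules. The entry layout and function names are hypothetical and are not drawn from any particular compliance product.

```python
import hashlib
import json
from typing import Dict, List

GENESIS_HASH = "0" * 64  # placeholder hash for the first entry


def _digest(prev_hash: str, event: Dict) -> str:
    # sort_keys gives a stable serialization so the hash is reproducible.
    payload = json.dumps(event, sort_keys=True)
    return hashlib.sha256((prev_hash + payload).encode("utf-8")).hexdigest()


def append_entry(log: List[Dict], event: Dict) -> Dict:
    """Append an event whose hash covers the previous entry's hash."""
    prev_hash = log[-1]["hash"] if log else GENESIS_HASH
    entry = {"event": event, "prev_hash": prev_hash,
             "hash": _digest(prev_hash, event)}
    log.append(entry)
    return entry


def verify(log: List[Dict]) -> bool:
    """Recompute the chain; any edited or reordered entry breaks it."""
    prev_hash = GENESIS_HASH
    for entry in log:
        if entry["prev_hash"] != prev_hash:
            return False
        if entry["hash"] != _digest(prev_hash, entry["event"]):
            return False
        prev_hash = entry["hash"]
    return True
```

Chaining each entry to its predecessor means an auditor can detect after-the-fact edits without trusting the system that wrote the log.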

Reliability comes from structured autonomy, not unregulated intelligence. This discipline is supported by operational leadership experience in large-scale platform engineering. Effective governance structures enable sustainable transformation by allowing automation to scale without compromising compliance integrity.

The Shift from Deterministic to Governed Autonomy

Healthcare payer systems stand at a structural crossroads.

Deterministic automation delivers efficiency but struggles with contextual variability. Agentic orchestration introduces interpretive reasoning within defined compliance structures, creating a workable model for regulated autonomy.

Autonomous healthcare enrollment and billing do not imply uncontrolled AI decision-making. They represent bounded autonomy supported by policy enforcement, validation checkpoints, precision reconciliation engines, and full audit traceability.

Scalable autonomy depends on control. Situational understanding must operate within compliance boundaries. Exception intelligence must remain transparent. Governance must shape system design rather than follow deployment.

Enterprise leaders who build with governed autonomy in mind can reduce operational friction and strengthen regulatory resilience at the same time. The path forward requires deliberate architectural intent, not another round of incremental scripting added to aging rule engines.