Traditionally, birdwatching has been a slow-paced, experience-based pastime that demands patience, field knowledge, and the ability to pick out subtle differences between species. For generations it has been practised by amateurs and professionals alike who learned to identify birds by sight, sound, and behaviour.

Over the last few years, however, technology has begun to change how people interact with the natural world. Smart devices that combine cameras with AI let ordinary users observe and recognise birds without deep prior knowledge, making birdwatching more accessible and interactive than ever before.

Birdfy is one device in this new generation of smart birdwatching products. It uses imaging hardware and machine learning algorithms to identify bird species in real time. This raises an interesting technical question: how can such a system reliably recognise birds in complex, unpredictable outdoor conditions?

Birdfy and the Smart Birdwatching Market Segment

Birdfy belongs to an emerging sector commonly referred to as the smart birdwatching or smart bird feeder market. This category sits at the intersection of nature observation, consumer electronics, and artificial intelligence. Rather than being standalone cameras, these products are designed as complete systems that combine hardware, software, and AI-based recognition.

In its simplest form, a smart birdwatching system consists of three key components: a camera that captures real-world images, an AI model that processes the captured data, and a user interface that presents the results in a user-friendly way. In Birdfy's case, this means detecting a bird, identifying its species, and sending the result to the user in real time.

This market has grown in popularity for several reasons. Many people now enjoy observing nature at home, whether to relax, to learn, or out of simple curiosity. At the same time, AI has matured to the point where complex recognition tasks can run on everyday consumer devices. As a result, smart birdwatching no longer depends on expert knowledge; it offers easy, everyday access to nature.

What “Real-Time Bird Recognition” Means Technically

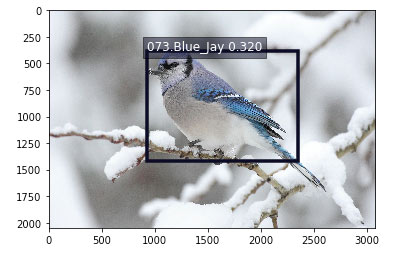

Object Detection: Finding the Bird

The system first scans each frame to determine whether a bird is present. This step filters out background elements such as leaves, shadows, and other moving objects that are not birds.
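As a rough illustration, the detection stage can be thought of as filtering the raw output of an object detector. The sketch below assumes a hypothetical detector that returns a label, a confidence score and a bounding box for each object it sees; the `Detection` type, the `find_birds` function and the 0.5 threshold are illustrative assumptions, not Birdfy's actual implementation.

```python
from dataclasses import dataclass
from typing import List, Tuple


@dataclass
class Detection:
    label: str                      # class name from a hypothetical detector, e.g. "bird" or "leaf"
    score: float                    # detector confidence in [0, 1]
    box: Tuple[int, int, int, int]  # (x1, y1, x2, y2) in pixels


def find_birds(detections: List[Detection], min_score: float = 0.5) -> List[Detection]:
    """Keep only confident 'bird' detections; everything else is treated as background."""
    return [d for d in detections if d.label == "bird" and d.score >= min_score]


frame_detections = [
    Detection("bird", 0.91, (120, 40, 220, 150)),
    Detection("leaf", 0.88, (10, 10, 60, 60)),
    Detection("bird", 0.32, (300, 200, 330, 230)),  # low confidence, e.g. a bird-shaped shadow
]
birds = find_birds(frame_detections)
print([d.box for d in birds])  # [(120, 40, 220, 150)]
```

Raising `min_score` trades missed birds for fewer false alarms from leaves and shadows; the right value would depend on the detector and the camera position.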

Object Segmentation: Isolating the Subject

Next, the bird is separated from its background. This reduces the visual clutter found in busy outdoor scenes, such as branches, feeders, or overlapping objects.
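A minimal sketch of what "isolating the subject" can mean in practice, assuming a segmentation model has already produced a binary mask (1 for bird pixels, 0 for background). The functions `mask_bounding_box` and `isolate_bird` are hypothetical names for illustration; images are plain nested lists of grayscale values to keep the example dependency-free.

```python
from typing import List, Tuple

Grid = List[List[int]]  # row-major grayscale image or binary mask


def mask_bounding_box(mask: Grid) -> Tuple[int, int, int, int]:
    """Return (top, left, bottom, right) of the region where the mask is set."""
    rows = [r for r, row in enumerate(mask) if any(row)]
    if not rows:
        raise ValueError("mask contains no bird pixels")
    cols = [c for c in range(len(mask[0])) if any(row[c] for row in mask)]
    return min(rows), min(cols), max(rows), max(cols)


def isolate_bird(image: Grid, mask: Grid, fill: int = 0) -> Grid:
    """Blank out background pixels, then crop to the mask's bounding box."""
    top, left, bottom, right = mask_bounding_box(mask)
    return [
        [image[r][c] if mask[r][c] else fill for c in range(left, right + 1)]
        for r in range(top, bottom + 1)
    ]


image = [[5, 5, 5, 5], [5, 9, 8, 5], [5, 7, 5, 5], [5, 5, 5, 5]]
mask = [[0, 0, 0, 0], [0, 1, 1, 0], [0, 1, 0, 0], [0, 0, 0, 0]]
print(isolate_bird(image, mask))  # [[9, 8], [7, 0]]
```

The classifier then only sees the cropped, masked region, so branches or feeder parts outside the mask no longer influence its decision.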

Species Classification: Identifying the Bird

The model then identifies the species. This is not a simple step, because many birds closely resemble one another and differ only slightly in colour, shape, or markings.
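Classifiers of this kind commonly output one raw score (logit) per species, which a softmax turns into probabilities. The sketch below assumes such a classifier exists; the species list and logit values are made up for illustration, and `top_k_species` is a hypothetical helper. It shows how two similar-looking species can end up with close probabilities.

```python
import math
from typing import List, Tuple


def softmax(logits: List[float]) -> List[float]:
    m = max(logits)  # subtract the max before exponentiating for numerical stability
    exps = [math.exp(x - m) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]


def top_k_species(logits: List[float], species: List[str], k: int = 3) -> List[Tuple[str, float]]:
    """Rank species by softmax probability and return the k most likely."""
    probs = softmax(logits)
    ranked = sorted(zip(species, probs), key=lambda pair: pair[1], reverse=True)
    return ranked[:k]


species = ["House Sparrow", "Eurasian Tree Sparrow", "Dunnock"]
logits = [2.0, 1.5, 0.1]  # made-up raw scores from a hypothetical classifier
for name, p in top_k_species(logits, species, k=2):
    print(f"{name}: {p:.2f}")
# House Sparrow: 0.57
# Eurasian Tree Sparrow: 0.35
```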

Real-Time Output: Instant Result Delivery

Finally, the system converts the prediction into a form the user can easily understand and displays it almost immediately.
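One plausible way to turn a raw prediction into a user-facing message is sketched below. The `format_result` function, the wording of the messages and the 0.6 confidence cutoff are all hypothetical; the point is only that a low-confidence result can be reported honestly instead of as a definite species.

```python
def format_result(species: str, confidence: float, min_confidence: float = 0.6) -> str:
    """Turn a (species, confidence) prediction into a short, user-friendly message."""
    if confidence < min_confidence:
        return "A bird visited, but the species could not be identified with confidence."
    return f"{species} spotted ({confidence:.0%} confidence)"


print(format_result("Blue Tit", 0.87))  # Blue Tit spotted (87% confidence)
print(format_result("Blue Tit", 0.42))  # falls back to the uncertain message
```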

Core Idea

Real-time bird recognition is not a single operation but a rapid chain of detection, segmentation, and classification steps that work together within seconds.
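The chain can be sketched as a list of stages applied in order, with per-stage timing so the overall latency can be checked against a real-time budget. This is an illustrative structure only: `run_pipeline` is a hypothetical helper, and the stages below are string-based stubs standing in for real detection, segmentation and classification models.

```python
import time
from typing import Any, Callable, Dict, List, Tuple

Stage = Tuple[str, Callable[[Any], Any]]


def run_pipeline(frame: Any, stages: List[Stage]) -> Tuple[Any, Dict[str, float]]:
    """Pass a frame through each stage in order, recording how long each one takes."""
    timings: Dict[str, float] = {}
    data = frame
    for name, stage in stages:
        start = time.perf_counter()
        data = stage(data)
        timings[name] = time.perf_counter() - start
        if data is None:  # e.g. detection found no bird: skip the remaining stages
            break
    return data, timings


# Stub stages: strings stand in for images, crops and predictions.
stages: List[Stage] = [
    ("detect", lambda frame: frame if "bird" in frame else None),
    ("segment", lambda frame: frame.replace("background+", "")),
    ("classify", lambda crop: "Robin"),
]
result, timings = run_pipeline("background+bird", stages)
print(result, list(timings))  # Robin ['detect', 'segment', 'classify']
```

Stopping early when nothing is detected is one simple way such a pipeline could save time on the many frames that contain no bird at all.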

The Real Challenges Lie in the Real World

Lighting Variations and Unstable Conditions

The outdoor environment is constantly changing. Image quality can be affected by early-morning light, dusk shadows, backlighting, or overcast skies. These variations make it harder for AI models to maintain consistent recognition performance.
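A simple way to reduce the effect of dim or flat lighting is to normalise brightness before recognition. The sketch below applies a min-max contrast stretch to a flattened list of grayscale values; `stretch_contrast` is a hypothetical helper, more robust methods such as histogram equalisation also exist, and whether Birdfy performs any such step is not stated in the source.

```python
from typing import List


def stretch_contrast(pixels: List[float], out_min: int = 0, out_max: int = 255) -> List[int]:
    """Linearly rescale intensities so the darkest pixel maps to out_min
    and the brightest to out_max (a min-max contrast stretch)."""
    lo, hi = min(pixels), max(pixels)
    if hi == lo:  # flat image: nothing to stretch
        return [round(p) for p in pixels]
    scale = (out_max - out_min) / (hi - lo)
    return [round(out_min + (p - lo) * scale) for p in pixels]


dusk_pixels = [40, 50, 60, 80]  # a dim, low-contrast patch
print(stretch_contrast(dusk_pixels))  # [0, 64, 128, 255]
```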

Changing Bird Positions and Fast Movement

Birds rarely stay still. They may hop sideways, turn away, lean down while feeding, or take flight at any moment. These erratic movements produce shifting viewing angles and motion blur, making accurate identification difficult.
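If a device can capture several frames in quick succession (an assumption; the source does not say Birdfy does), one common heuristic is to keep the sharpest frame, measured by the variance of the image's Laplacian: blurred frames have weak edges and therefore a low variance. The helpers `laplacian_variance` and `sharpest_frame` below are hypothetical and operate on small nested-list grayscale images.

```python
from statistics import pvariance
from typing import List

Image = List[List[float]]  # row-major grayscale intensities, at least 3x3


def laplacian_variance(img: Image) -> float:
    """Variance of the 4-neighbour Laplacian over interior pixels; blurrier images score lower."""
    responses = [
        img[r - 1][c] + img[r + 1][c] + img[r][c - 1] + img[r][c + 1] - 4 * img[r][c]
        for r in range(1, len(img) - 1)
        for c in range(1, len(img[0]) - 1)
    ]
    return pvariance(responses)


def sharpest_frame(frames: List[Image]) -> int:
    """Index of the frame with the highest Laplacian variance (least motion blur)."""
    return max(range(len(frames)), key=lambda i: laplacian_variance(frames[i]))


blurred = [[0, 85, 170, 255] for _ in range(4)]  # a smeared, gradual edge
sharp = [[0, 0, 255, 255] for _ in range(4)]     # the same edge, crisp
print(sharpest_frame([blurred, sharp]))  # 1
```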

Occlusion and Partial Visibility

In the real world, birds can be partially hidden by branches, feeder structures, or even other birds. Under such occlusion the system sees only some of the bird's features, which reduces recognition accuracy.
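Because a bird usually stays in view for several frames, one way a system might compensate for partial visibility is to combine predictions over time rather than trusting a single frame. The sketch below uses confidence-weighted voting; `vote_over_frames` and its 0.5 cutoff are hypothetical, and it assumes that heavily occluded frames tend to yield low-confidence predictions.

```python
from collections import Counter
from typing import List, Optional, Tuple


def vote_over_frames(predictions: List[Tuple[str, float]], min_confidence: float = 0.5) -> Optional[str]:
    """Combine per-frame (species, confidence) predictions by confidence-weighted voting.

    Low-confidence frames (assumed here to be the heavily occluded ones) are ignored;
    the remaining votes are weighted by their confidence.
    """
    scores = Counter()
    for species, confidence in predictions:
        if confidence >= min_confidence:
            scores[species] += confidence
    if not scores:
        return None  # no frame was clear enough to decide
    return scores.most_common(1)[0][0]


frames = [("Great Tit", 0.82), ("Blue Tit", 0.41), ("Great Tit", 0.77), ("Coal Tit", 0.55)]
print(vote_over_frames(frames))  # Great Tit
```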

Complex and Noisy Backgrounds

Compared with controlled laboratory images, outdoor scenes are cluttered. Visual noise from leaves, shadows, and mixed textures can confuse detection and segmentation models.
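With a fixed camera, a classic technique for separating a moving subject from a mostly static scene is background subtraction against a running-average background model. The sketch below works on flattened grayscale pixel lists; `update_background`, `foreground_mask` and the `alpha` and `threshold` values are hypothetical, and Birdfy's actual approach is not documented in the source.

```python
from typing import List


def update_background(background: List[float], frame: List[float], alpha: float = 0.05) -> List[float]:
    """Exponential running average: the background slowly absorbs persistent changes."""
    return [(1 - alpha) * b + alpha * f for b, f in zip(background, frame)]


def foreground_mask(background: List[float], frame: List[float], threshold: float = 30.0) -> List[bool]:
    """Mark pixels that differ strongly from the background model as foreground."""
    return [abs(f - b) > threshold for b, f in zip(background, frame)]


background = [100.0, 100.0, 100.0, 100.0]
frame = [102.0, 98.0, 20.0, 101.0]  # small flicker everywhere, a dark bird at index 2
print(foreground_mask(background, frame))  # [False, False, True, False]
background = update_background(background, frame)
```

Small fluctuations, such as a gently shifting shadow, stay below the threshold, while slow, lasting changes in the scene are gradually absorbed into the background model.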

Fine Differences Between Similar Species

Many bird species are distinguished only by minor visual features such as feather shade, beak size, or body size. AI models find it difficult to tell these subtle variations apart consistently.
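When two lookalike species receive similar probabilities, a system can decline to commit to one of them. The sketch below reports the top species only if it beats the runner-up by a minimum margin; `confident_species`, the 0.2 margin and the probability values are illustrative assumptions.

```python
from typing import Dict, Optional


def confident_species(probs: Dict[str, float], min_margin: float = 0.2) -> Optional[str]:
    """Return the top species only if it beats the runner-up by at least min_margin."""
    ranked = sorted(probs.items(), key=lambda kv: kv[1], reverse=True)
    if not ranked:
        return None
    if len(ranked) == 1:
        return ranked[0][0]
    (best, p1), (_, p2) = ranked[0], ranked[1]
    return best if p1 - p2 >= min_margin else None


# Made-up probabilities for a notoriously similar pair: too close to call.
print(confident_species({"Willow Tit": 0.48, "Marsh Tit": 0.44, "Coal Tit": 0.08}))  # None
print(confident_species({"Robin": 0.90, "Dunnock": 0.06, "Wren": 0.04}))  # Robin
```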

Motion Blur and Multiple Targets

Rapid motion often produces blurred frames, which lowers image quality. Several birds may also appear in the frame at the same time, which further complicates detection and classification.
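With several birds in view, a detector often proposes multiple overlapping boxes for the same bird. Greedy non-maximum suppression (NMS) is a standard way to keep one box per bird while still allowing separate birds to be reported. The `iou` and `non_max_suppression` helpers below are a minimal sketch; the 0.5 overlap threshold and example boxes are assumptions, not Birdfy settings.

```python
from typing import List, Tuple

Box = Tuple[float, float, float, float]  # (x1, y1, x2, y2)


def iou(a: Box, b: Box) -> float:
    """Intersection-over-union of two axis-aligned boxes."""
    ix1, iy1 = max(a[0], b[0]), max(a[1], b[1])
    ix2, iy2 = min(a[2], b[2]), min(a[3], b[3])
    inter = max(0.0, ix2 - ix1) * max(0.0, iy2 - iy1)
    area_a = (a[2] - a[0]) * (a[3] - a[1])
    area_b = (b[2] - b[0]) * (b[3] - b[1])
    union = area_a + area_b - inter
    return inter / union if union > 0 else 0.0


def non_max_suppression(boxes: List[Box], scores: List[float], iou_threshold: float = 0.5) -> List[int]:
    """Visit boxes from highest to lowest score; keep a box only if it does not
    overlap any already-kept box by iou_threshold or more."""
    order = sorted(range(len(boxes)), key=lambda i: scores[i], reverse=True)
    keep: List[int] = []
    for i in order:
        if all(iou(boxes[i], boxes[j]) < iou_threshold for j in keep):
            keep.append(i)
    return keep


# Boxes 0 and 1 are duplicates of one bird; box 2 is a second bird.
boxes = [(10, 10, 50, 50), (12, 12, 52, 52), (100, 100, 140, 140)]
scores = [0.90, 0.80, 0.85]
print(non_max_suppression(boxes, scores))  # [0, 2]
```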

Changing Regions and Seasons

The species present vary by location and time of year. This shifting species distribution adds another layer of real-world uncertainty for the model.
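One way to account for this shift is to reweight the classifier's output with a prior describing which species are likely at the current place and season, in the spirit of Bayes' rule. The sketch assumes the classifier was trained on a roughly uniform species mix, so its probabilities can simply be multiplied by the local prior and renormalised. `apply_local_prior`, the floor value and the prior numbers are hypothetical.

```python
from typing import Dict


def apply_local_prior(probs: Dict[str, float], local_prior: Dict[str, float],
                      floor: float = 1e-3) -> Dict[str, float]:
    """Multiply image-based probabilities by a location/season prior and renormalise.

    Species missing from the prior get a small floor weight rather than zero,
    so a genuine rarity can still be reported.
    """
    weighted = {s: p * local_prior.get(s, floor) for s, p in probs.items()}
    total = sum(weighted.values())
    return {s: w / total for s, w in weighted.items()}


image_probs = {"Willow Warbler": 0.55, "Common Chiffchaff": 0.45}
# Illustrative only: a winter prior for a hypothetical region where Willow Warblers are absent.
winter_prior = {"Common Chiffchaff": 0.05}
adjusted = apply_local_prior(image_probs, winter_prior)
print(max(adjusted, key=adjusted.get))  # Common Chiffchaff
```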

Key Insight

The real challenge is not merely identifying birds, but performing fine-grained, real-time visual reasoning in an open and unpredictable natural world.

Value of Machine Learning in Birdfy

Machine learning makes bird identification simpler, faster, and more accessible to ordinary people. Instead of relying on a field guide or prior experience, users can simply look and learn what they are seeing. This removes a significant barrier and lets more people take part in birdwatching with little effort.

Beyond identification, AI turns birdwatching into an interactive educational experience. Each sighting and result helps users become familiar with different species over time, so learning feels natural and entertaining rather than technical. It shifts observation from a passive activity to a step-by-step process of building understanding.

In this respect, Birdfy's smart birding products serve as a case study of machine learning applied in practice rather than on controlled datasets. Birdfy's AI-assisted bird recognition shows how AI can connect technology with everyday experiences of nature, making learning and observation more natural and seamless.

Conclusion: What the Birdfy Case Study Really Shows

Birdfy is not simply a case of adding AI to a consumer gadget. It illustrates how machine learning systems can be designed to perform in natural, uncontrolled settings, where conditions are far more complicated than in lab datasets. From lighting changes to fast movement and visual noise, the challenge is fundamentally one of handling real-world uncertainty.

What makes this case interesting is that real-time bird recognition combines several areas of computer vision, including detection, segmentation, and fine-grained classification, into one continuous pipeline. It demonstrates how these techniques must work together under tight time constraints to deliver usable results in real-life scenarios.