A surprising amount of video work fails before production even begins. Teams hesitate, not because they lack ideas, but because they cannot test those ideas quickly enough to know which direction deserves time, budget, and attention. That is where Seedance 2.0 becomes interesting inside SeeVideo. It turns early-stage thinking into visible motion fast enough that creative decisions can happen sooner, with less friction and less dependence on a traditional edit-first workflow.

What makes this worth examining is not only speed. In my observation, the more important shift is psychological. When a platform allows text, images, and even audio-guided generation inside one workspace, the task changes from “Can we produce this?” to “Which version is actually worth producing further?” That is a much more practical question for modern creators, especially when content volume keeps rising and attention windows keep shrinking.

Why Video Bottlenecks Now Start Before Editing

Most people assume video creation becomes difficult in post-production. In reality, the slowdown often begins earlier. The team has an idea, but no fast way to test tone, rhythm, transitions, or visual direction. That uncertainty creates delay. By the time a direction is approved, the content often feels less timely than it did when the idea first appeared.

The structure of Seedance 2.0 AI Video suggests a different logic. Instead of treating generation as a novelty layer on top of production, it treats generation as the first serious filter for decision-making. The platform’s core video engine is built around multi-scene generation, audio input support, and flexible starting points such as text prompts and images. That makes it less about isolated effects and more about testing motion as part of the concept stage.

The Platform Accepts Different Starting Materials

One useful detail on the official pages is that users are not forced into a single workflow. They can begin from text, from an uploaded image, or from audio-supported generation depending on the project. For people who work in different ways, this matters more than it may seem.

Some marketers think in campaign copy. Some creators think in key visuals. Some editors think in sound and pacing. A platform becomes more practical when it accepts the material people already know how to create. That lowers the barrier between idea and first output.

Multi-Scene Output Changes The Nature Of Testing

The platform gives special emphasis to multi-scene generation, and that is one of the most meaningful details on the page. Many AI video tools can make a single striking shot, but a single shot does not always help people evaluate whether the broader concept works.

When scenes can connect and transition, users are testing something closer to narrative structure rather than just isolated motion. In my view, this makes the system more useful for real planning. You are not just asking whether a frame looks impressive. You are asking whether the concept can hold together as a sequence.

Why SeeVideo Feels More Like A Creative Workspace

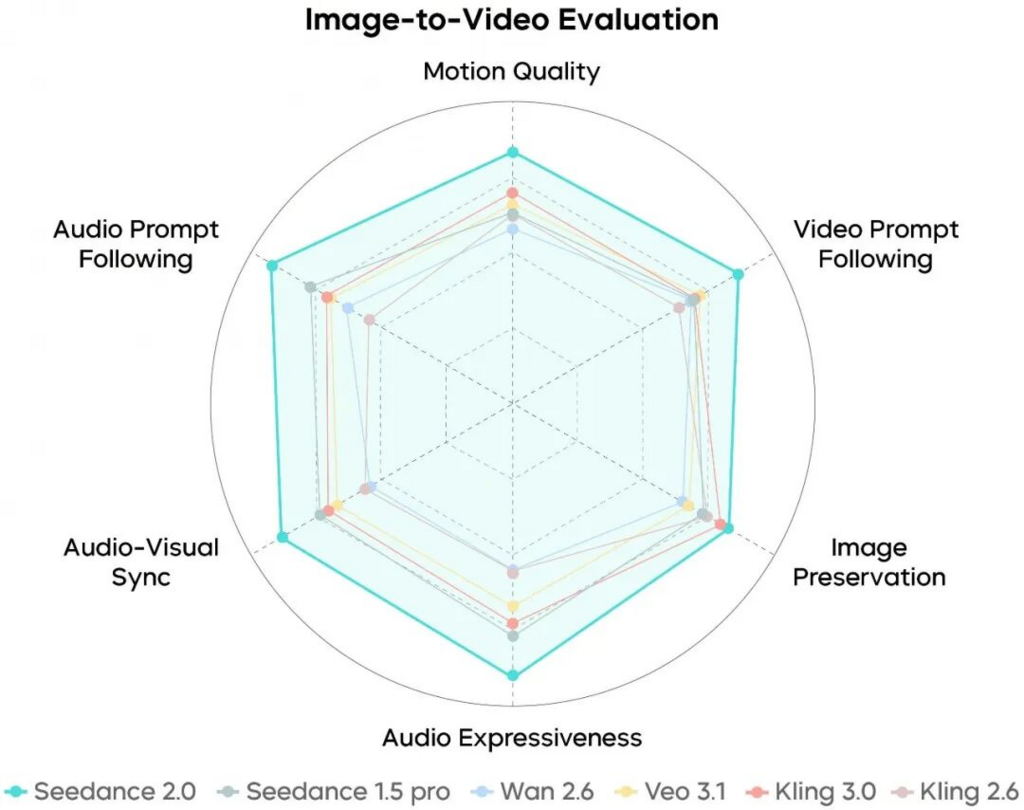

A lot of AI tools feel narrow because they are organized around a single model. SeeVideo appears to be organized around a broader workflow. Its official material places the core video engine alongside other models that serve realism, cinematic storytelling, artistic styling, or faster drafting.

That model range has an important consequence: the user is not locked into one visual answer. Instead, they can choose based on the real purpose of the project.

Different Creative Goals Need Different Engines

The platform’s own descriptions draw clear distinctions. One model is better for multi-scene work. Another is presented as stronger for photorealistic footage with native audio. Another leans more cinematic. Another is framed as the faster and more cost-effective option for simpler, higher-volume tasks.

This is a healthier way to present AI generation. It acknowledges that creative work is full of tradeoffs. The best result depends on whether you care most about realism, speed, story structure, or experimentation. In my testing of tools in this category, platforms become more valuable when they help users choose the right compromise rather than promise perfection in every direction.

Comparing Outputs Reduces Creative Guesswork

The official pages also describe the platform as a place where outputs can be compared across models. That feature matters because creative judgment usually improves when it becomes visual. Teams often disagree in abstract terms, but once they see two or three plausible outputs side by side, decisions become faster and clearer.

Seeing Variations Changes Approval Dynamics

In many content environments, approval slows down because stakeholders are reacting to imagination rather than evidence. A side-by-side comparison narrows that gap. It does not remove taste differences, but it gives people something concrete to discuss. That alone can make a platform more useful than one that simply generates a single result and asks the user to accept or reject it.

What A Real Workflow Looks Like On The Platform

The official material keeps the user journey fairly direct. That simplicity is part of the appeal. You do not need a large production structure just to explore an idea.

Step One Begins With A Prompt Or Image

The process starts with either a text prompt or an uploaded image. This is where the user defines the scene, the visual style, or the motion they want to explore.

Step Two Selects The Most Relevant Model

The next step is choosing a model based on the project. The platform recommends starting with the main engine for most projects, then moving to other options if photorealism, cinematic storytelling, artistic styling, or faster draft generation becomes the priority.

Step Three Generates The First Usable Sequence

Once the direction and model are selected, the system generates the video. The official pages describe generation as fast, with turnaround time depending on scene count and complexity.

Step Four Refines Through Repetition

If the first result does not feel right, the platform encourages iteration. The user can revise prompts, change models, add reference guidance, or rerun the concept until the direction becomes more useful.

Where This Helps The Most In Daily Work

The platform’s examples point to social media, marketing, YouTube, film-related tasks, and e-commerce. Those are sensible categories because they all depend on visual speed, versioning, and output flexibility.

Social And Marketing Teams Benefit From Faster Testing

For teams that publish frequently, the hardest problem is often not making one good video. It is producing enough good options without slowing the calendar down. A system that combines fast generation with multi-model choice is especially useful in that environment.

Instead of debating a concept for days, a team can generate options, compare them, and move forward with a stronger sense of what deserves polishing. That shortens the path between trend response and actual publishing.

Product And Commerce Work Gains Visual Range

The e-commerce use case also makes sense. A product does not always need a full traditional shoot to test presentation ideas. Sometimes a business simply needs to see how a visual direction feels in motion before investing more heavily. In that context, generation becomes a way to reduce uncertainty rather than replace all existing production.

How The Platform Balances Tradeoffs

The most useful way to understand the system is not to ask whether it does everything. It is to ask what each part seems optimized for.

| Creative Priority | What The Platform Emphasizes | Why It Matters |
| --- | --- | --- |
| Connected storytelling | Multi-scene generation | Better for structured sequences than isolated clips |
| Faster ideation | Quick processing times | Useful when timing matters more than perfection |
| Flexible input | Text, image, and audio-supported workflows | Fits different creative habits |
| Output comparison | Multiple models in one workspace | Helps teams decide visually instead of abstractly |
| Repeatable consistency | Reference guidance and selected frame controls | Useful for brand or character continuity |
| Commercial deployment | Commercial rights and no watermark on outputs | Makes generated work easier to use in real projects |

What Users Should Keep In Mind

It is useful to be honest about the limits. A platform like this can speed up decision-making, but it does not eliminate judgment. Better prompts still produce better results. Stronger creative direction still matters. And the first generation is not always the final one.

Prompt Quality Still Shapes The Ceiling

The official pages include example prompts for a reason. Detailed prompting improves scene clarity, tone, and coherence. Users who write vague prompts should expect vaguer outcomes. That is not a flaw unique to this platform. It is simply the current reality of AI generation.

Iteration Is Part Of Serious Work

The site openly acknowledges that regeneration and multiple attempts are part of the process. That is a positive sign. In practice, serious creators do not need a magic button. They need a faster path toward an output worth keeping. That is a more believable promise.

Model Choice Requires Some Learning

Because the platform offers several engines, there is still a small learning curve. The faster option may not be the richest one. The cinematic option may not be the best for product content. The realistic option may not be ideal for stylized campaigns. The platform gives users range, but range still requires judgment.

Why This Creative Model Matters Right Now

What stands out most is that SeeVideo treats video generation as part of decision-making rather than just production. That is a meaningful shift. It suggests that AI video tools may become most valuable not when they replace every traditional process, but when they reduce uncertainty at the exact moment creative teams are deciding what to make next.

For that reason, the platform feels timely. It is less about spectacle and more about practical momentum. In a content environment where speed often determines relevance, the ability to move from rough concept to visible sequence can be more valuable than having endless features that do not actually help anyone decide faster.